This article covers supervised fine-tuning (SFT) of a pretrained Llama 3 language model with the Llama model family, Part 1: an introduction to LLaMA-Factory.

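Llama 3 blog series

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 1)

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 2)

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 3)

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 4)

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 5)

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 6)

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 7)

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 8)

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 9)

Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 10)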

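Core Techniques for Building Secure GenAI/LLMs: Adversarial Attacks on Large Models (Part 1)

Core Techniques for Building Secure GenAI/LLMs: Adversarial Attacks on Large Models (Part 2)

Core Techniques for Building Secure GenAI/LLMs: Adversarial Attacks on Large Models (Part 3)

Core Techniques for Building Secure GenAI/LLMs: Adversarial Attacks on Large Models (Part 4)

Core Techniques for Building Secure GenAI/LLMs: Adversarial Attacks on Large Models (Part 5)

Hello GPT-4o!

Large Model Tokenizers: Tokenizer Visualization (GPT-4o)

Large Model Tokenizers: The Byte Pair Encoding (BPE) Algorithm Explained with Examples

Large Model Tokenizers: Byte Pair Encoding (BPE) Source Code Analysis

Large Models: The Self-Attention Mechanism (Part 1)

Large Models: The Self-Attention Mechanism (Part 2)

Large Models: The Self-Attention Mechanism (Part 3)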

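Deploying a Large Model Locally on Windows with Llama 3 + LangGraph (Part 11)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Code Llama (Part 1)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Code Llama (Part 2)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Code Llama (Part 3)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Code Llama (Part 4)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Code Llama (Part 5)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Protecting Large Model Conversations with Llama Guard (Part 1)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Protecting Large Model Conversations with Llama Guard (Part 2)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Protecting Large Model Conversations with Llama Guard (Part 3)

Large Models: Understanding Transformer Positional Encoding (Positional Embedding) in Depth

Large Models: Understanding Transformer Layer Normalization in Depth (Part 1)

Large Models: Understanding Transformer Layer Normalization in Depth (Part 2)

Large Models: Understanding Transformer Layer Normalization in Depth (Part 3)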

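Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 1): A Starting Point for Beginners

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 2): A Walkthrough of Matrix Operations

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 3): Initializing an Embedding Layer

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 4): Precomputing RoPE Frequencies

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 5): Precomputing the Causal Mask

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 6): The First Normalization, Root Mean Square Normalization (RMSNorm)

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 7): Initializing Multi-Query Attention

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 8): Rotary Position Embeddings

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 9): Computing Self-Attention

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 10): Residual Connections and the SwiGLU FFN

Large Models: Writing Meta's Llama 3 Code Step by Step in PyTorch (Part 11): Output Probability Distribution and Loss Computation

Large Models: Writing Working Code for Meta's Llama 3 in PyTorch (Part 1): Loading a Simplified Tokenizer and Setting Parameters

Large Models: Writing Working Code for Meta's Llama 3 in PyTorch (Part 2): RoPE and the Attention Mechanism

Large Models: Writing Working Code for Meta's Llama 3 in PyTorch (Part 3): FeedForward and Residual Layers

Large Models: Writing Working Code for Meta's Llama 3 in PyTorch (Part 4): Building the Llama3 Model Class Itself

Large Models: Writing Working Code for Meta's Llama 3 in PyTorch (Part 5): Training and Testing Your Own minLlama3

Large Models: Writing Working Code for Meta's Llama 3 in PyTorch (Part 6): Loading an Already Trained miniLlama3 Model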

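Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Protecting Large Model Conversations with Llama Guard (Part 4)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Protecting Large Model Conversations with Llama Guard (Part 5)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Protecting Large Model Conversations with Llama Guard (Part 6)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Protecting Large Model Conversations with Llama Guard (Part 7)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Protecting Large Model Conversations with Llama Guard (Part 8)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: CyberSecEval 2, a Benchmark for Quantifying LLM Security and Capabilities (Part 1)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: CyberSecEval 2, a Benchmark for Quantifying LLM Security and Capabilities (Part 2)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: CyberSecEval 2, a Benchmark for Quantifying LLM Security and Capabilities (Part 3)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: CyberSecEval 2, a Benchmark for Quantifying LLM Security and Capabilities (Part 4)

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Code Shield (Part 1), Introduction to Code Shield

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Code Shield (Part 2), Preventing LLMs from Generating Insecure Code

Building Safe and Trustworthy Enterprise AI Applications with the Llama 3 Model Family: Code Shield (Part 3), Code Shield Code Examples

The Llama Model Family: Fine-Tuning a Pretrained Llama 3 Language Model with Supervised Fine-Tuning (SFT) (Part 1), Introduction to LLaMA-Factory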

Fine-tuning a large model can be as easy as this…

LLaMA-Factory

LLaMA-Factory Features

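- Various models: LLaMA, LLaVA, Mistral, Mixtral-MoE, Qwen, Yi, Gemma, Baichuan, ChatGLM, Phi, and more.

- Integrated methods: (continuous) pre-training, (multimodal) supervised instruction fine-tuning, reward model training, PPO training, DPO training, KTO training, and ORPO training.

- Multiple precisions: 32-bit full-parameter fine-tuning, 16-bit freeze fine-tuning, 16-bit LoRA fine-tuning, and 2/4/8-bit QLoRA fine-tuning based on AQLM/AWQ/GPTQ/LLM.int8.

- Advanced algorithms: GaLore, BAdam, DoRA, LongLoRA, LLaMA Pro, Mixture-of-Depths, LoRA+, LoftQ, and agent tuning.

- Practical tricks: FlashAttention-2, Unsloth, RoPE scaling, NEFTune, and rsLoRA.

- Experiment monitoring: LlamaBoard, TensorBoard, Wandb, MLflow, and more.

- Fast inference: an OpenAI-style API, a browser UI, and a command-line interface, all backed by vLLM.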
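To get a feel for why the precision options in the list above matter, here is a back-of-the-envelope sketch of weight storage at each bit width. The 8-billion parameter count is an assumption chosen for illustration, and the helper `weight_memory_gib` is hypothetical. The estimate covers the model weights only: gradients, optimizer states, activations, and quantization overhead are ignored, so real training memory will be higher.

```python
def weight_memory_gib(num_params: float, bits: int) -> float:
    """Memory needed to store the weights alone, in GiB (bits / 8 bytes per parameter)."""
    return num_params * bits / 8 / 1024**3


if __name__ == "__main__":
    params = 8e9  # assumed parameter count, roughly an 8B model
    for bits in (32, 16, 8, 4, 2):
        print(f"{bits:>2}-bit weights: {weight_memory_gib(params, bits):6.1f} GiB")
```

Halving the bit width halves the weight footprint, which is the main reason quantized QLoRA runs fit on smaller GPUs than 16-bit LoRA runs.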

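Benchmark

Compared with ChatGLM's official P-Tuning, LLaMA Factory's LoRA fine-tuning delivers a 3.7x training speedup while achieving a higher Rouge score on an advertising text generation task. Combined with 4-bit quantization, LLaMA Factory's QLoRA fine-tuning further reduces GPU memory consumption.

- Training Speed: the number of samples processed per second during training. (batch size = 4, cutoff length = 1024)

- Rouge Score: the Rouge-2 score on the validation set of the advertising text generation task. (batch size = 4, cutoff length = 1024)

- GPU Memory: peak GPU memory usage in 4-bit quantized training. (batch size = 1, cutoff length = 1024)

- ChatGLM's P-Tuning uses pre_seq_len=128, and LLaMA Factory's LoRA fine-tuning uses lora_rank=32.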
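For readers unfamiliar with the Rouge-2 metric used above, the following is a minimal sketch of the idea: count the bigrams shared by a reference text and a generated text, then combine precision and recall into an F1 score. It assumes whitespace tokenization and the F1 variant, both of which are simplifications. The benchmark itself does not say how it tokenizes or which variant it reports, so treat `rouge2_f1` as an illustration rather than the project's evaluation code.

```python
from collections import Counter
from typing import List


def bigrams(tokens: List[str]) -> Counter:
    """Count adjacent token pairs."""
    return Counter(zip(tokens, tokens[1:]))


def rouge2_f1(reference: str, candidate: str) -> float:
    """Bigram-overlap F1 between a reference and a candidate (whitespace tokens)."""
    ref = bigrams(reference.split())
    cand = bigrams(candidate.split())
    overlap = sum((ref & cand).values())  # clipped count of shared bigrams
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    print(rouge2_f1("the cat sat on the mat", "the cat sat on a mat"))
```

In the example call, three of the five bigrams on each side match, so precision and recall are both 3/5.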

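Changelog

[24/05/20] The project added support for fine-tuning the PaliGemma series of models. Note that PaliGemma models are pretrained models, so you need to fine-tune them with the gemma template to give them chat capability.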

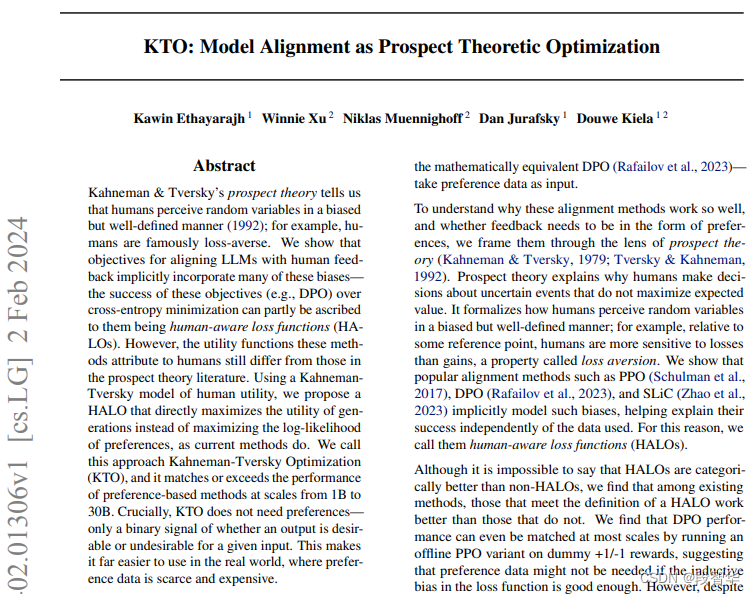

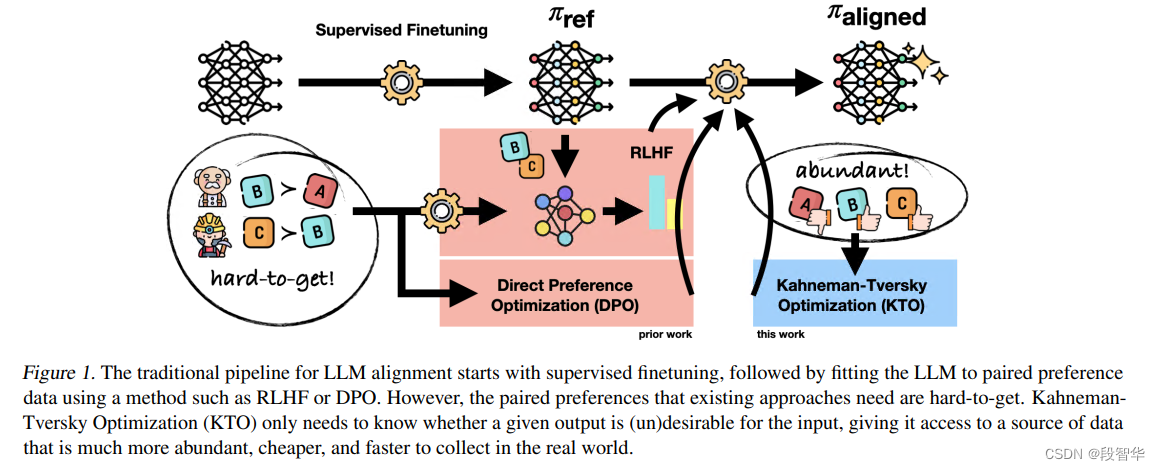

[24/05/18] The project added support for the KTO preference alignment algorithm. See the examples for detailed usage.

https://arxiv.org/pdf/2402.01306

[24/05/14] The project added support for training and inference on Ascend NPU devices.

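Models

The default module should be used as the default value of the --lora_target argument. You can pass --lora_target all to target all modules for better results.

For all base models, the --template argument can be any value such as default, alpaca, or vicuna. For instruct/chat models, be sure to use the corresponding template.

Full list of models supported by the project: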
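The two rules above can be sketched as a small lookup. This is a hypothetical helper, not part of LLaMA-Factory: `suggest_args` and its parameters are made up for illustration, and the mini-registry holds only a few prefix entries copied from the model list in this article. It mirrors the registry's convention of keying defaults by the part of the model name before the first hyphen. What the project does when no default module is registered is not stated here, so the sketch simply omits the flag in that case.

```python
from collections import defaultdict
from typing import List

# A tiny stand-in registry. The prefixes and values are copied from the model
# list in this article; the real project registers many more.
DEFAULT_MODULE = defaultdict(str, {"Baichuan": "W_pack", "Qwen": "c_attn"})
DEFAULT_TEMPLATE = defaultdict(
    str, {"Baichuan": "baichuan", "LLaMA3": "llama3", "Qwen": "qwen"}
)


def suggest_args(model_name: str, is_chat: bool, all_modules: bool = False) -> List[str]:
    """Hypothetical helper: build --template / --lora_target arguments."""
    prefix = model_name.split("-")[0]  # e.g. "LLaMA3-8B-Chat" -> "LLaMA3"

    if is_chat:
        # Chat/instruct models must use their own registered template.
        template = DEFAULT_TEMPLATE[prefix]
        if not template:
            raise ValueError(f"no template registered for chat model {model_name!r}")
    else:
        template = "default"  # base models accept any template

    args = ["--template", template]
    if all_modules:
        args += ["--lora_target", "all"]
    elif DEFAULT_MODULE[prefix]:
        args += ["--lora_target", DEFAULT_MODULE[prefix]]
    return args


if __name__ == "__main__":
    print(suggest_args("LLaMA3-8B-Chat", is_chat=True, all_modules=True))
    print(suggest_args("Baichuan-7B-Base", is_chat=False))
```

Raising an error for a chat model without a registered template is a deliberate choice in this sketch: silently falling back to a generic template is exactly the mistake the guidance above warns against.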

from collections import OrderedDict, defaultdict
from enum import Enum
from typing import Dict, Optional


CHOICES = ["A", "B", "C", "D"]

DATA_CONFIG = "dataset_info.json"

DEFAULT_MODULE = defaultdict(str)

DEFAULT_TEMPLATE = defaultdict(str)

FILEEXT2TYPE = {
    "arrow": "arrow",
    "csv": "csv",
    "json": "json",
    "jsonl": "json",
    "parquet": "parquet",
    "txt": "text",
}

IGNORE_INDEX = -100

IMAGE_TOKEN = "<image>"

LAYERNORM_NAMES = {"norm", "ln"}

METHODS = ["full", "freeze", "lora"]

MOD_SUPPORTED_MODELS = ["bloom", "falcon", "gemma", "llama", "mistral", "mixtral", "phi", "starcoder2"]

PEFT_METHODS = ["lora"]

RUNNING_LOG = "running_log.txt"

SUBJECTS = ["Average", "STEM", "Social Sciences", "Humanities", "Other"]

SUPPORTED_MODELS = OrderedDict()

TRAINER_CONFIG = "trainer_config.yaml"

TRAINER_LOG = "trainer_log.jsonl"

TRAINING_STAGES = {
    "Supervised Fine-Tuning": "sft",
    "Reward Modeling": "rm",
    "PPO": "ppo",
    "DPO": "dpo",
    "KTO": "kto",
    "ORPO": "orpo",
    "Pre-Training": "pt",
}

STAGES_USE_PAIR_DATA = ["rm", "dpo", "orpo"]

SUPPORTED_CLASS_FOR_S2ATTN = ["llama"]

V_HEAD_WEIGHTS_NAME = "value_head.bin"

V_HEAD_SAFE_WEIGHTS_NAME = "value_head.safetensors"

VISION_MODELS = set()


class DownloadSource(str, Enum):
    DEFAULT = "hf"
    MODELSCOPE = "ms"


def register_model_group(
    models: Dict[str, Dict[DownloadSource, str]],
    module: Optional[str] = None,
    template: Optional[str] = None,
    vision: bool = False,
) -> None:
    prefix = None
    for name, path in models.items():
        if prefix is None:
            prefix = name.split("-")[0]
        else:
            assert prefix == name.split("-")[0], "prefix should be identical."
        SUPPORTED_MODELS[name] = path
    if module is not None:
        DEFAULT_MODULE[prefix] = module
    if template is not None:
        DEFAULT_TEMPLATE[prefix] = template
    if vision:
        VISION_MODELS.add(prefix)


register_model_group(
    models={
        "Baichuan-7B-Base": {
            DownloadSource.DEFAULT: "baichuan-inc/Baichuan-7B",
            DownloadSource.MODELSCOPE: "baichuan-inc/baichuan-7B",
        },
        "Baichuan-13B-Base": {
            DownloadSource.DEFAULT: "baichuan-inc/Baichuan-13B-Base",
            DownloadSource.MODELSCOPE: "baichuan-inc/Baichuan-13B-Base",
        },
        "Baichuan-13B-Chat": {
            DownloadSource.DEFAULT: "baichuan-inc/Baichuan-13B-Chat",
            DownloadSource.MODELSCOPE: "baichuan-inc/Baichuan-13B-Chat",
        },
    },
    module="W_pack",
    template="baichuan",

)register_model_group(models={"Baichuan2-7B-Base": {DownloadSource.DEFAULT: "baichuan-inc/Baichuan2-7B-Base",DownloadSource.MODELSCOPE: "baichuan-inc/Baichuan2-7B-Base",},"Baichuan2-13B-Base": {DownloadSource.DEFAULT: "baichuan-inc/Baichuan2-13B-Base",DownloadSource.MODELSCOPE: "baichuan-inc/Baichuan2-13B-Base",},"Baichuan2-7B-Chat": {DownloadSource.DEFAULT: "baichuan-inc/Baichuan2-7B-Chat",DownloadSource.MODELSCOPE: "baichuan-inc/Baichuan2-7B-Chat",},"Baichuan2-13B-Chat": {DownloadSource.DEFAULT: "baichuan-inc/Baichuan2-13B-Chat",DownloadSource.MODELSCOPE: "baichuan-inc/Baichuan2-13B-Chat",},},module="W_pack",template="baichuan2",

)register_model_group(models={"BLOOM-560M": {DownloadSource.DEFAULT: "bigscience/bloom-560m",DownloadSource.MODELSCOPE: "AI-ModelScope/bloom-560m",},"BLOOM-3B": {DownloadSource.DEFAULT: "bigscience/bloom-3b",DownloadSource.MODELSCOPE: "AI-ModelScope/bloom-3b",},"BLOOM-7B1": {DownloadSource.DEFAULT: "bigscience/bloom-7b1",DownloadSource.MODELSCOPE: "AI-ModelScope/bloom-7b1",},},module="query_key_value",

)register_model_group(models={"BLOOMZ-560M": {DownloadSource.DEFAULT: "bigscience/bloomz-560m",DownloadSource.MODELSCOPE: "AI-ModelScope/bloomz-560m",},"BLOOMZ-3B": {DownloadSource.DEFAULT: "bigscience/bloomz-3b",DownloadSource.MODELSCOPE: "AI-ModelScope/bloomz-3b",},"BLOOMZ-7B1-mt": {DownloadSource.DEFAULT: "bigscience/bloomz-7b1-mt",DownloadSource.MODELSCOPE: "AI-ModelScope/bloomz-7b1-mt",},},module="query_key_value",

)register_model_group(models={"BlueLM-7B-Base": {DownloadSource.DEFAULT: "vivo-ai/BlueLM-7B-Base",DownloadSource.MODELSCOPE: "vivo-ai/BlueLM-7B-Base",},"BlueLM-7B-Chat": {DownloadSource.DEFAULT: "vivo-ai/BlueLM-7B-Chat",DownloadSource.MODELSCOPE: "vivo-ai/BlueLM-7B-Chat",},},template="bluelm",

)register_model_group(models={"Breeze-7B": {DownloadSource.DEFAULT: "MediaTek-Research/Breeze-7B-Base-v1_0",},"Breeze-7B-Chat": {DownloadSource.DEFAULT: "MediaTek-Research/Breeze-7B-Instruct-v1_0",},},template="breeze",

)register_model_group(models={"ChatGLM2-6B-Chat": {DownloadSource.DEFAULT: "THUDM/chatglm2-6b",DownloadSource.MODELSCOPE: "ZhipuAI/chatglm2-6b",}},module="query_key_value",template="chatglm2",

)register_model_group(models={"ChatGLM3-6B-Base": {DownloadSource.DEFAULT: "THUDM/chatglm3-6b-base",DownloadSource.MODELSCOPE: "ZhipuAI/chatglm3-6b-base",},"ChatGLM3-6B-Chat": {DownloadSource.DEFAULT: "THUDM/chatglm3-6b",DownloadSource.MODELSCOPE: "ZhipuAI/chatglm3-6b",},},module="query_key_value",template="chatglm3",

)register_model_group(models={"ChineseLLaMA2-1.3B": {DownloadSource.DEFAULT: "hfl/chinese-llama-2-1.3b",DownloadSource.MODELSCOPE: "AI-ModelScope/chinese-llama-2-1.3b",},"ChineseLLaMA2-7B": {DownloadSource.DEFAULT: "hfl/chinese-llama-2-7b",DownloadSource.MODELSCOPE: "AI-ModelScope/chinese-llama-2-7b",},"ChineseLLaMA2-13B": {DownloadSource.DEFAULT: "hfl/chinese-llama-2-13b",DownloadSource.MODELSCOPE: "AI-ModelScope/chinese-llama-2-13b",},"ChineseLLaMA2-1.3B-Chat": {DownloadSource.DEFAULT: "hfl/chinese-alpaca-2-1.3b",DownloadSource.MODELSCOPE: "AI-ModelScope/chinese-alpaca-2-1.3b",},"ChineseLLaMA2-7B-Chat": {DownloadSource.DEFAULT: "hfl/chinese-alpaca-2-7b",DownloadSource.MODELSCOPE: "AI-ModelScope/chinese-alpaca-2-7b",},"ChineseLLaMA2-13B-Chat": {DownloadSource.DEFAULT: "hfl/chinese-alpaca-2-13b",DownloadSource.MODELSCOPE: "AI-ModelScope/chinese-alpaca-2-13b",},},template="llama2_zh",

)register_model_group(models={"CommandR-35B-Chat": {DownloadSource.DEFAULT: "CohereForAI/c4ai-command-r-v01",DownloadSource.MODELSCOPE: "AI-ModelScope/c4ai-command-r-v01",},"CommandR-Plus-104B-Chat": {DownloadSource.DEFAULT: "CohereForAI/c4ai-command-r-plus",DownloadSource.MODELSCOPE: "AI-ModelScope/c4ai-command-r-plus",},"CommandR-35B-4bit-Chat": {DownloadSource.DEFAULT: "CohereForAI/c4ai-command-r-v01-4bit",DownloadSource.MODELSCOPE: "mirror013/c4ai-command-r-v01-4bit",},"CommandR-Plus-104B-4bit-Chat": {DownloadSource.DEFAULT: "CohereForAI/c4ai-command-r-plus-4bit",},},template="cohere",

)register_model_group(models={"DBRX-132B-Base": {DownloadSource.DEFAULT: "databricks/dbrx-base",DownloadSource.MODELSCOPE: "AI-ModelScope/dbrx-base",},"DBRX-132B-Chat": {DownloadSource.DEFAULT: "databricks/dbrx-instruct",DownloadSource.MODELSCOPE: "AI-ModelScope/dbrx-instruct",},},module="Wqkv",template="dbrx",

)register_model_group(models={"DeepSeek-LLM-7B-Base": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-llm-7b-base",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-llm-7b-base",},"DeepSeek-LLM-67B-Base": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-llm-67b-base",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-llm-67b-base",},"DeepSeek-LLM-7B-Chat": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-llm-7b-chat",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-llm-7b-chat",},"DeepSeek-LLM-67B-Chat": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-llm-67b-chat",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-llm-67b-chat",},"DeepSeek-Math-7B-Base": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-math-7b-base",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-math-7b-base",},"DeepSeek-Math-7B-Chat": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-math-7b-instruct",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-math-7b-instruct",},"DeepSeek-MoE-16B-Base": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-moe-16b-base",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-moe-16b-base",},"DeepSeek-MoE-16B-v2-Base": {DownloadSource.DEFAULT: "deepseek-ai/DeepSeek-V2-Lite",},"DeepSeek-MoE-236B-Base": {DownloadSource.DEFAULT: "deepseek-ai/DeepSeek-V2",DownloadSource.MODELSCOPE: "deepseek-ai/DeepSeek-V2",},"DeepSeek-MoE-16B-Chat": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-moe-16b-chat",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-moe-16b-chat",},"DeepSeek-MoE-16B-v2-Chat": {DownloadSource.DEFAULT: "deepseek-ai/DeepSeek-V2-Lite-Chat",},"DeepSeek-MoE-236B-Chat": {DownloadSource.DEFAULT: "deepseek-ai/DeepSeek-V2-Chat",DownloadSource.MODELSCOPE: "deepseek-ai/DeepSeek-V2-Chat",},},template="deepseek",

)register_model_group(models={"DeepSeekCoder-6.7B-Base": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-coder-6.7b-base",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-coder-6.7b-base",},"DeepSeekCoder-7B-Base": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-coder-7b-base-v1.5",},"DeepSeekCoder-33B-Base": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-coder-33b-base",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-coder-33b-base",},"DeepSeekCoder-6.7B-Chat": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-coder-6.7b-instruct",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-coder-6.7b-instruct",},"DeepSeekCoder-7B-Chat": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-coder-7b-instruct-v1.5",},"DeepSeekCoder-33B-Chat": {DownloadSource.DEFAULT: "deepseek-ai/deepseek-coder-33b-instruct",DownloadSource.MODELSCOPE: "deepseek-ai/deepseek-coder-33b-instruct",},},template="deepseekcoder",

)register_model_group(models={"Falcon-7B": {DownloadSource.DEFAULT: "tiiuae/falcon-7b",DownloadSource.MODELSCOPE: "AI-ModelScope/falcon-7b",},"Falcon-11B": {DownloadSource.DEFAULT: "tiiuae/falcon-11B",},"Falcon-40B": {DownloadSource.DEFAULT: "tiiuae/falcon-40b",DownloadSource.MODELSCOPE: "AI-ModelScope/falcon-40b",},"Falcon-180B": {DownloadSource.DEFAULT: "tiiuae/falcon-180b",DownloadSource.MODELSCOPE: "modelscope/falcon-180B",},"Falcon-7B-Chat": {DownloadSource.DEFAULT: "tiiuae/falcon-7b-instruct",DownloadSource.MODELSCOPE: "AI-ModelScope/falcon-7b-instruct",},"Falcon-40B-Chat": {DownloadSource.DEFAULT: "tiiuae/falcon-40b-instruct",DownloadSource.MODELSCOPE: "AI-ModelScope/falcon-40b-instruct",},"Falcon-180B-Chat": {DownloadSource.DEFAULT: "tiiuae/falcon-180b-chat",DownloadSource.MODELSCOPE: "modelscope/falcon-180B-chat",},},module="query_key_value",template="falcon",

)register_model_group(models={"Gemma-2B": {DownloadSource.DEFAULT: "google/gemma-2b",DownloadSource.MODELSCOPE: "AI-ModelScope/gemma-2b",},"Gemma-7B": {DownloadSource.DEFAULT: "google/gemma-7b",DownloadSource.MODELSCOPE: "AI-ModelScope/gemma-7b",},"Gemma-2B-Chat": {DownloadSource.DEFAULT: "google/gemma-2b-it",DownloadSource.MODELSCOPE: "AI-ModelScope/gemma-2b-it",},"Gemma-7B-Chat": {DownloadSource.DEFAULT: "google/gemma-7b-it",DownloadSource.MODELSCOPE: "AI-ModelScope/gemma-7b-it",},},template="gemma",

)register_model_group(models={"CodeGemma-2B": {DownloadSource.DEFAULT: "google/codegemma-1.1-2b",},"CodeGemma-7B": {DownloadSource.DEFAULT: "google/codegemma-7b",},"CodeGemma-7B-Chat": {DownloadSource.DEFAULT: "google/codegemma-1.1-7b-it",DownloadSource.MODELSCOPE: "AI-ModelScope/codegemma-7b-it",},},template="gemma",

)register_model_group(models={"InternLM-7B": {DownloadSource.DEFAULT: "internlm/internlm-7b",DownloadSource.MODELSCOPE: "Shanghai_AI_Laboratory/internlm-7b",},"InternLM-20B": {DownloadSource.DEFAULT: "internlm/internlm-20b",DownloadSource.MODELSCOPE: "Shanghai_AI_Laboratory/internlm-20b",},"InternLM-7B-Chat": {DownloadSource.DEFAULT: "internlm/internlm-chat-7b",DownloadSource.MODELSCOPE: "Shanghai_AI_Laboratory/internlm-chat-7b",},"InternLM-20B-Chat": {DownloadSource.DEFAULT: "internlm/internlm-chat-20b",DownloadSource.MODELSCOPE: "Shanghai_AI_Laboratory/internlm-chat-20b",},},template="intern",

)register_model_group(models={"InternLM2-7B": {DownloadSource.DEFAULT: "internlm/internlm2-7b",DownloadSource.MODELSCOPE: "Shanghai_AI_Laboratory/internlm2-7b",},"InternLM2-20B": {DownloadSource.DEFAULT: "internlm/internlm2-20b",DownloadSource.MODELSCOPE: "Shanghai_AI_Laboratory/internlm2-20b",},"InternLM2-7B-Chat": {DownloadSource.DEFAULT: "internlm/internlm2-chat-7b",DownloadSource.MODELSCOPE: "Shanghai_AI_Laboratory/internlm2-chat-7b",},"InternLM2-20B-Chat": {DownloadSource.DEFAULT: "internlm/internlm2-chat-20b",DownloadSource.MODELSCOPE: "Shanghai_AI_Laboratory/internlm2-chat-20b",},},module="wqkv",template="intern2",

)register_model_group(models={"Jambda-v0.1": {DownloadSource.DEFAULT: "ai21labs/Jamba-v0.1",DownloadSource.MODELSCOPE: "AI-ModelScope/Jamba-v0.1",}},

)register_model_group(models={"LingoWhale-8B": {DownloadSource.DEFAULT: "deeplang-ai/LingoWhale-8B",DownloadSource.MODELSCOPE: "DeepLang/LingoWhale-8B",}},module="qkv_proj",

)register_model_group(models={"LLaMA-7B": {DownloadSource.DEFAULT: "huggyllama/llama-7b",DownloadSource.MODELSCOPE: "skyline2006/llama-7b",},"LLaMA-13B": {DownloadSource.DEFAULT: "huggyllama/llama-13b",DownloadSource.MODELSCOPE: "skyline2006/llama-13b",},"LLaMA-30B": {DownloadSource.DEFAULT: "huggyllama/llama-30b",DownloadSource.MODELSCOPE: "skyline2006/llama-30b",},"LLaMA-65B": {DownloadSource.DEFAULT: "huggyllama/llama-65b",DownloadSource.MODELSCOPE: "skyline2006/llama-65b",},}

)register_model_group(models={"LLaMA2-7B": {DownloadSource.DEFAULT: "meta-llama/Llama-2-7b-hf",DownloadSource.MODELSCOPE: "modelscope/Llama-2-7b-ms",},"LLaMA2-13B": {DownloadSource.DEFAULT: "meta-llama/Llama-2-13b-hf",DownloadSource.MODELSCOPE: "modelscope/Llama-2-13b-ms",},"LLaMA2-70B": {DownloadSource.DEFAULT: "meta-llama/Llama-2-70b-hf",DownloadSource.MODELSCOPE: "modelscope/Llama-2-70b-ms",},"LLaMA2-7B-Chat": {DownloadSource.DEFAULT: "meta-llama/Llama-2-7b-chat-hf",DownloadSource.MODELSCOPE: "modelscope/Llama-2-7b-chat-ms",},"LLaMA2-13B-Chat": {DownloadSource.DEFAULT: "meta-llama/Llama-2-13b-chat-hf",DownloadSource.MODELSCOPE: "modelscope/Llama-2-13b-chat-ms",},"LLaMA2-70B-Chat": {DownloadSource.DEFAULT: "meta-llama/Llama-2-70b-chat-hf",DownloadSource.MODELSCOPE: "modelscope/Llama-2-70b-chat-ms",},},template="llama2",

)register_model_group(models={"LLaMA3-8B": {DownloadSource.DEFAULT: "meta-llama/Meta-Llama-3-8B",DownloadSource.MODELSCOPE: "LLM-Research/Meta-Llama-3-8B",},"LLaMA3-70B": {DownloadSource.DEFAULT: "meta-llama/Meta-Llama-3-70B",DownloadSource.MODELSCOPE: "LLM-Research/Meta-Llama-3-70B",},"LLaMA3-8B-Chat": {DownloadSource.DEFAULT: "meta-llama/Meta-Llama-3-8B-Instruct",DownloadSource.MODELSCOPE: "LLM-Research/Meta-Llama-3-8B-Instruct",},"LLaMA3-70B-Chat": {DownloadSource.DEFAULT: "meta-llama/Meta-Llama-3-70B-Instruct",DownloadSource.MODELSCOPE: "LLM-Research/Meta-Llama-3-70B-Instruct",},"LLaMA3-8B-Chinese-Chat": {DownloadSource.DEFAULT: "shenzhi-wang/Llama3-8B-Chinese-Chat",DownloadSource.MODELSCOPE: "LLM-Research/Llama3-8B-Chinese-Chat",},"LLaMA3-70B-Chinese-Chat": {DownloadSource.DEFAULT: "shenzhi-wang/Llama3-70B-Chinese-Chat",},},template="llama3",

)register_model_group(models={"LLaVA1.5-7B-Chat": {DownloadSource.DEFAULT: "llava-hf/llava-1.5-7b-hf",},"LLaVA1.5-13B-Chat": {DownloadSource.DEFAULT: "llava-hf/llava-1.5-13b-hf",},},template="vicuna",vision=True,

)register_model_group(models={"Mistral-7B-v0.1": {DownloadSource.DEFAULT: "mistralai/Mistral-7B-v0.1",DownloadSource.MODELSCOPE: "AI-ModelScope/Mistral-7B-v0.1",},"Mistral-7B-v0.1-Chat": {DownloadSource.DEFAULT: "mistralai/Mistral-7B-Instruct-v0.1",DownloadSource.MODELSCOPE: "AI-ModelScope/Mistral-7B-Instruct-v0.1",},"Mistral-7B-v0.2": {DownloadSource.DEFAULT: "alpindale/Mistral-7B-v0.2-hf",DownloadSource.MODELSCOPE: "AI-ModelScope/Mistral-7B-v0.2-hf",},"Mistral-7B-v0.2-Chat": {DownloadSource.DEFAULT: "mistralai/Mistral-7B-Instruct-v0.2",DownloadSource.MODELSCOPE: "AI-ModelScope/Mistral-7B-Instruct-v0.2",},},template="mistral",

)register_model_group(models={"Mixtral-8x7B-v0.1": {DownloadSource.DEFAULT: "mistralai/Mixtral-8x7B-v0.1",DownloadSource.MODELSCOPE: "AI-ModelScope/Mixtral-8x7B-v0.1",},"Mixtral-8x7B-v0.1-Chat": {DownloadSource.DEFAULT: "mistralai/Mixtral-8x7B-Instruct-v0.1",DownloadSource.MODELSCOPE: "AI-ModelScope/Mixtral-8x7B-Instruct-v0.1",},"Mixtral-8x22B-v0.1": {DownloadSource.DEFAULT: "mistralai/Mixtral-8x22B-v0.1",DownloadSource.MODELSCOPE: "AI-ModelScope/Mixtral-8x22B-v0.1",},"Mixtral-8x22B-v0.1-Chat": {DownloadSource.DEFAULT: "mistralai/Mixtral-8x22B-Instruct-v0.1",},},template="mistral",

)register_model_group(models={"OLMo-1B": {DownloadSource.DEFAULT: "allenai/OLMo-1B-hf",},"OLMo-7B": {DownloadSource.DEFAULT: "allenai/OLMo-7B-hf",},"OLMo-1.7-7B": {DownloadSource.DEFAULT: "allenai/OLMo-1.7-7B-hf",},},

)register_model_group(models={"OpenChat3.5-7B-Chat": {DownloadSource.DEFAULT: "openchat/openchat-3.5-0106",DownloadSource.MODELSCOPE: "xcwzxcwz/openchat-3.5-0106",}},template="openchat",

)register_model_group(models={"Orion-14B-Base": {DownloadSource.DEFAULT: "OrionStarAI/Orion-14B-Base",DownloadSource.MODELSCOPE: "OrionStarAI/Orion-14B-Base",},"Orion-14B-Chat": {DownloadSource.DEFAULT: "OrionStarAI/Orion-14B-Chat",DownloadSource.MODELSCOPE: "OrionStarAI/Orion-14B-Chat",},"Orion-14B-Long-Chat": {DownloadSource.DEFAULT: "OrionStarAI/Orion-14B-LongChat",DownloadSource.MODELSCOPE: "OrionStarAI/Orion-14B-LongChat",},"Orion-14B-RAG-Chat": {DownloadSource.DEFAULT: "OrionStarAI/Orion-14B-Chat-RAG",DownloadSource.MODELSCOPE: "OrionStarAI/Orion-14B-Chat-RAG",},"Orion-14B-Plugin-Chat": {DownloadSource.DEFAULT: "OrionStarAI/Orion-14B-Chat-Plugin",DownloadSource.MODELSCOPE: "OrionStarAI/Orion-14B-Chat-Plugin",},},template="orion",

)register_model_group(models={"PaliGemma-3B-pt-224": {DownloadSource.DEFAULT: "google/paligemma-3b-pt-224",},"PaliGemma-3B-pt-448": {DownloadSource.DEFAULT: "google/paligemma-3b-pt-448",},"PaliGemma-3B-pt-896": {DownloadSource.DEFAULT: "google/paligemma-3b-pt-896",},"PaliGemma-3B-mix-224": {DownloadSource.DEFAULT: "google/paligemma-3b-mix-224",},"PaliGemma-3B-mix-448": {DownloadSource.DEFAULT: "google/paligemma-3b-mix-448",},},vision=True,

)register_model_group(models={"Phi-1.5-1.3B": {DownloadSource.DEFAULT: "microsoft/phi-1_5",DownloadSource.MODELSCOPE: "allspace/PHI_1-5",},"Phi-2-2.7B": {DownloadSource.DEFAULT: "microsoft/phi-2",DownloadSource.MODELSCOPE: "AI-ModelScope/phi-2",},}

)register_model_group(models={"Phi3-3.8B-4k-Chat": {DownloadSource.DEFAULT: "microsoft/Phi-3-mini-4k-instruct",DownloadSource.MODELSCOPE: "LLM-Research/Phi-3-mini-4k-instruct",},"Phi3-3.8B-128k-Chat": {DownloadSource.DEFAULT: "microsoft/Phi-3-mini-128k-instruct",DownloadSource.MODELSCOPE: "LLM-Research/Phi-3-mini-128k-instruct",},},module="qkv_proj",template="phi",

)register_model_group(models={"Qwen-1.8B": {DownloadSource.DEFAULT: "Qwen/Qwen-1_8B",DownloadSource.MODELSCOPE: "qwen/Qwen-1_8B",},"Qwen-7B": {DownloadSource.DEFAULT: "Qwen/Qwen-7B",DownloadSource.MODELSCOPE: "qwen/Qwen-7B",},"Qwen-14B": {DownloadSource.DEFAULT: "Qwen/Qwen-14B",DownloadSource.MODELSCOPE: "qwen/Qwen-14B",},"Qwen-72B": {DownloadSource.DEFAULT: "Qwen/Qwen-72B",DownloadSource.MODELSCOPE: "qwen/Qwen-72B",},"Qwen-1.8B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-1_8B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen-1_8B-Chat",},"Qwen-7B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-7B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen-7B-Chat",},"Qwen-14B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-14B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen-14B-Chat",},"Qwen-72B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-72B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen-72B-Chat",},"Qwen-1.8B-int8-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-1_8B-Chat-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen-1_8B-Chat-Int8",},"Qwen-1.8B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-1_8B-Chat-Int4",DownloadSource.MODELSCOPE: "qwen/Qwen-1_8B-Chat-Int4",},"Qwen-7B-int8-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-7B-Chat-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen-7B-Chat-Int8",},"Qwen-7B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-7B-Chat-Int4",DownloadSource.MODELSCOPE: "qwen/Qwen-7B-Chat-Int4",},"Qwen-14B-int8-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-14B-Chat-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen-14B-Chat-Int8",},"Qwen-14B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-14B-Chat-Int4",DownloadSource.MODELSCOPE: "qwen/Qwen-14B-Chat-Int4",},"Qwen-72B-int8-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-72B-Chat-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen-72B-Chat-Int8",},"Qwen-72B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen-72B-Chat-Int4",DownloadSource.MODELSCOPE: "qwen/Qwen-72B-Chat-Int4",},},module="c_attn",template="qwen",

)register_model_group(models={"Qwen1.5-0.5B": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-0.5B",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-0.5B",},"Qwen1.5-1.8B": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-1.8B",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-1.8B",},"Qwen1.5-4B": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-4B",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-4B",},"Qwen1.5-7B": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-7B",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-7B",},"Qwen1.5-14B": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-14B",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-14B",},"Qwen1.5-32B": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-32B",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-32B",},"Qwen1.5-72B": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-72B",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-72B",},"Qwen1.5-110B": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-110B",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-110B",},"Qwen1.5-MoE-A2.7B": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-MoE-A2.7B",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-MoE-A2.7B",},"Qwen1.5-Code-7B": {DownloadSource.DEFAULT: "Qwen/CodeQwen1.5-7B",DownloadSource.MODELSCOPE: "qwen/CodeQwen1.5-7B",},"Qwen1.5-0.5B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-0.5B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-0.5B-Chat",},"Qwen1.5-1.8B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-1.8B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-1.8B-Chat",},"Qwen1.5-4B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-4B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-4B-Chat",},"Qwen1.5-7B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-7B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-7B-Chat",},"Qwen1.5-14B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-14B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-14B-Chat",},"Qwen1.5-32B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-32B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-32B-Chat",},"Qwen1.5-72B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-72B-Chat",DownloadSource.MODELSCOPE: 
"qwen/Qwen1.5-72B-Chat",},"Qwen1.5-110B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-110B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-110B-Chat",},"Qwen1.5-MoE-A2.7B-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-MoE-A2.7B-Chat",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-MoE-A2.7B-Chat",},"Qwen1.5-Code-7B-Chat": {DownloadSource.DEFAULT: "Qwen/CodeQwen1.5-7B-Chat",DownloadSource.MODELSCOPE: "qwen/CodeQwen1.5-7B-Chat",},"Qwen1.5-0.5B-int8-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-0.5B-Chat-GPTQ-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-0.5B-Chat-GPTQ-Int8",},"Qwen1.5-0.5B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-0.5B-Chat-AWQ",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-0.5B-Chat-AWQ",},"Qwen1.5-1.8B-int8-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-1.8B-Chat-GPTQ-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-1.8B-Chat-GPTQ-Int8",},"Qwen1.5-1.8B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-1.8B-Chat-AWQ",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-1.8B-Chat-AWQ",},"Qwen1.5-4B-int8-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-4B-Chat-GPTQ-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-4B-Chat-GPTQ-Int8",},"Qwen1.5-4B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-4B-Chat-AWQ",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-4B-Chat-AWQ",},"Qwen1.5-7B-int8-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-7B-Chat-GPTQ-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-7B-Chat-GPTQ-Int8",},"Qwen1.5-7B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-7B-Chat-AWQ",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-7B-Chat-AWQ",},"Qwen1.5-14B-int8-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-14B-Chat-GPTQ-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-14B-Chat-GPTQ-Int8",},"Qwen1.5-14B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-14B-Chat-AWQ",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-14B-Chat-AWQ",},"Qwen1.5-32B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-32B-Chat-AWQ",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-32B-Chat-AWQ",},"Qwen1.5-72B-int8-Chat": 
{DownloadSource.DEFAULT: "Qwen/Qwen1.5-72B-Chat-GPTQ-Int8",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-72B-Chat-GPTQ-Int8",},"Qwen1.5-72B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-72B-Chat-AWQ",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-72B-Chat-AWQ",},"Qwen1.5-110B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-110B-Chat-AWQ",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-110B-Chat-AWQ",},"Qwen1.5-MoE-A2.7B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/Qwen1.5-MoE-A2.7B-Chat-GPTQ-Int4",DownloadSource.MODELSCOPE: "qwen/Qwen1.5-MoE-A2.7B-Chat-GPTQ-Int4",},"Qwen1.5-Code-7B-int4-Chat": {DownloadSource.DEFAULT: "Qwen/CodeQwen1.5-7B-Chat-AWQ",DownloadSource.MODELSCOPE: "qwen/CodeQwen1.5-7B-Chat-AWQ",},},template="qwen",

)register_model_group(models={"SOLAR-10.7B": {DownloadSource.DEFAULT: "upstage/SOLAR-10.7B-v1.0",},"SOLAR-10.7B-Chat": {DownloadSource.DEFAULT: "upstage/SOLAR-10.7B-Instruct-v1.0",DownloadSource.MODELSCOPE: "AI-ModelScope/SOLAR-10.7B-Instruct-v1.0",},},template="solar",

)register_model_group(models={"Skywork-13B-Base": {DownloadSource.DEFAULT: "Skywork/Skywork-13B-base",DownloadSource.MODELSCOPE: "skywork/Skywork-13B-base",}}

)register_model_group(models={"StarCoder2-3B": {DownloadSource.DEFAULT: "bigcode/starcoder2-3b",DownloadSource.MODELSCOPE: "AI-ModelScope/starcoder2-3b",},"StarCoder2-7B": {DownloadSource.DEFAULT: "bigcode/starcoder2-7b",DownloadSource.MODELSCOPE: "AI-ModelScope/starcoder2-7b",},"StarCoder2-15B": {DownloadSource.DEFAULT: "bigcode/starcoder2-15b",DownloadSource.MODELSCOPE: "AI-ModelScope/starcoder2-15b",},}

)register_model_group(models={"Vicuna1.5-7B-Chat": {DownloadSource.DEFAULT: "lmsys/vicuna-7b-v1.5",DownloadSource.MODELSCOPE: "Xorbits/vicuna-7b-v1.5",},"Vicuna1.5-13B-Chat": {DownloadSource.DEFAULT: "lmsys/vicuna-13b-v1.5",DownloadSource.MODELSCOPE: "Xorbits/vicuna-13b-v1.5",},},template="vicuna",

)


register_model_group(
    models={
        "XuanYuan-6B": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan-6B",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan-6B",
        },
        "XuanYuan-70B": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan-70B",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan-70B",
        },
        "XuanYuan-2-70B": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan2-70B",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan2-70B",
        },
        "XuanYuan-6B-Chat": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan-6B-Chat",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan-6B-Chat",
        },
        "XuanYuan-70B-Chat": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan-70B-Chat",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan-70B-Chat",
        },
        "XuanYuan-2-70B-Chat": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan2-70B-Chat",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan2-70B-Chat",
        },
        "XuanYuan-6B-int8-Chat": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan-6B-Chat-8bit",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan-6B-Chat-8bit",
        },
        "XuanYuan-6B-int4-Chat": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan-6B-Chat-4bit",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan-6B-Chat-4bit",
        },
        "XuanYuan-70B-int8-Chat": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan-70B-Chat-8bit",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan-70B-Chat-8bit",
        },
        "XuanYuan-70B-int4-Chat": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan-70B-Chat-4bit",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan-70B-Chat-4bit",
        },
        "XuanYuan-2-70B-int8-Chat": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan2-70B-Chat-8bit",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan2-70B-Chat-8bit",
        },
        "XuanYuan-2-70B-int4-Chat": {
            DownloadSource.DEFAULT: "Duxiaoman-DI/XuanYuan2-70B-Chat-4bit",
            DownloadSource.MODELSCOPE: "Duxiaoman-DI/XuanYuan2-70B-Chat-4bit",
        },
    },
    template="xuanyuan",
)


register_model_group(
    models={
        "XVERSE-7B": {
            DownloadSource.DEFAULT: "xverse/XVERSE-7B",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-7B",
        },
        "XVERSE-13B": {
            DownloadSource.DEFAULT: "xverse/XVERSE-13B",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-13B",
        },
        "XVERSE-65B": {
            DownloadSource.DEFAULT: "xverse/XVERSE-65B",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-65B",
        },
        "XVERSE-65B-2": {
            DownloadSource.DEFAULT: "xverse/XVERSE-65B-2",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-65B-2",
        },
        "XVERSE-7B-Chat": {
            DownloadSource.DEFAULT: "xverse/XVERSE-7B-Chat",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-7B-Chat",
        },
        "XVERSE-13B-Chat": {
            DownloadSource.DEFAULT: "xverse/XVERSE-13B-Chat",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-13B-Chat",
        },
        "XVERSE-65B-Chat": {
            DownloadSource.DEFAULT: "xverse/XVERSE-65B-Chat",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-65B-Chat",
        },
        "XVERSE-MoE-A4.2B": {
            DownloadSource.DEFAULT: "xverse/XVERSE-MoE-A4.2B",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-MoE-A4.2B",
        },
        "XVERSE-7B-int8-Chat": {
            DownloadSource.DEFAULT: "xverse/XVERSE-7B-Chat-GPTQ-Int8",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-7B-Chat-GPTQ-Int8",
        },
        "XVERSE-7B-int4-Chat": {
            DownloadSource.DEFAULT: "xverse/XVERSE-7B-Chat-GPTQ-Int4",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-7B-Chat-GPTQ-Int4",
        },
        "XVERSE-13B-int8-Chat": {
            DownloadSource.DEFAULT: "xverse/XVERSE-13B-Chat-GPTQ-Int8",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-13B-Chat-GPTQ-Int8",
        },
        "XVERSE-13B-int4-Chat": {
            DownloadSource.DEFAULT: "xverse/XVERSE-13B-Chat-GPTQ-Int4",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-13B-Chat-GPTQ-Int4",
        },
        "XVERSE-65B-int4-Chat": {
            DownloadSource.DEFAULT: "xverse/XVERSE-65B-Chat-GPTQ-Int4",
            DownloadSource.MODELSCOPE: "xverse/XVERSE-65B-Chat-GPTQ-Int4",
        },
    },
    template="xverse",
)


register_model_group(
    models={
        "Yayi-7B": {
            DownloadSource.DEFAULT: "wenge-research/yayi-7b-llama2",
            DownloadSource.MODELSCOPE: "AI-ModelScope/yayi-7b-llama2",
        },
        "Yayi-13B": {
            DownloadSource.DEFAULT: "wenge-research/yayi-13b-llama2",
            DownloadSource.MODELSCOPE: "AI-ModelScope/yayi-13b-llama2",
        },
    },
    template="yayi",
)


register_model_group(
    models={
        "Yi-6B": {
            DownloadSource.DEFAULT: "01-ai/Yi-6B",
            DownloadSource.MODELSCOPE: "01ai/Yi-6B",
        },
        "Yi-9B": {
            DownloadSource.DEFAULT: "01-ai/Yi-9B",
            DownloadSource.MODELSCOPE: "01ai/Yi-9B",
        },
        "Yi-34B": {
            DownloadSource.DEFAULT: "01-ai/Yi-34B",
            DownloadSource.MODELSCOPE: "01ai/Yi-34B",
        },
        "Yi-6B-Chat": {
            DownloadSource.DEFAULT: "01-ai/Yi-6B-Chat",
            DownloadSource.MODELSCOPE: "01ai/Yi-6B-Chat",
        },
        "Yi-34B-Chat": {
            DownloadSource.DEFAULT: "01-ai/Yi-34B-Chat",
            DownloadSource.MODELSCOPE: "01ai/Yi-34B-Chat",
        },
        "Yi-6B-int8-Chat": {
            DownloadSource.DEFAULT: "01-ai/Yi-6B-Chat-8bits",
            DownloadSource.MODELSCOPE: "01ai/Yi-6B-Chat-8bits",
        },
        "Yi-6B-int4-Chat": {
            DownloadSource.DEFAULT: "01-ai/Yi-6B-Chat-4bits",
            DownloadSource.MODELSCOPE: "01ai/Yi-6B-Chat-4bits",
        },
        "Yi-34B-int8-Chat": {
            DownloadSource.DEFAULT: "01-ai/Yi-34B-Chat-8bits",
            DownloadSource.MODELSCOPE: "01ai/Yi-34B-Chat-8bits",
        },
        "Yi-34B-int4-Chat": {
            DownloadSource.DEFAULT: "01-ai/Yi-34B-Chat-4bits",
            DownloadSource.MODELSCOPE: "01ai/Yi-34B-Chat-4bits",
        },
        "Yi-1.5-6B": {
            DownloadSource.DEFAULT: "01-ai/Yi-1.5-6B",
            DownloadSource.MODELSCOPE: "01ai/Yi-1.5-6B",
        },
        "Yi-1.5-9B": {
            DownloadSource.DEFAULT: "01-ai/Yi-1.5-9B",
            DownloadSource.MODELSCOPE: "01ai/Yi-1.5-9B",
        },
        "Yi-1.5-34B": {
            DownloadSource.DEFAULT: "01-ai/Yi-1.5-34B",
            DownloadSource.MODELSCOPE: "01ai/Yi-1.5-34B",
        },
        "Yi-1.5-6B-Chat": {
            DownloadSource.DEFAULT: "01-ai/Yi-1.5-6B-Chat",
            DownloadSource.MODELSCOPE: "01ai/Yi-1.5-6B-Chat",
        },
        "Yi-1.5-9B-Chat": {
            DownloadSource.DEFAULT: "01-ai/Yi-1.5-9B-Chat",
            DownloadSource.MODELSCOPE: "01ai/Yi-1.5-9B-Chat",
        },
        "Yi-1.5-34B-Chat": {
            DownloadSource.DEFAULT: "01-ai/Yi-1.5-34B-Chat",
            DownloadSource.MODELSCOPE: "01ai/Yi-1.5-34B-Chat",
        },
    },
    template="yi",
)


register_model_group(
    models={
        "YiVL-6B-Chat": {
            DownloadSource.DEFAULT: "BUAADreamer/Yi-VL-6B-hf",
        },
        "YiVL-34B-Chat": {
            DownloadSource.DEFAULT: "BUAADreamer/Yi-VL-34B-hf",
        },
    },
    template="yi_vl",
    vision=True,
)


register_model_group(
    models={
        "Yuan2-2B-Chat": {
            DownloadSource.DEFAULT: "IEITYuan/Yuan2-2B-hf",
            DownloadSource.MODELSCOPE: "YuanLLM/Yuan2.0-2B-hf",
        },
        "Yuan2-51B-Chat": {
            DownloadSource.DEFAULT: "IEITYuan/Yuan2-51B-hf",
            DownloadSource.MODELSCOPE: "YuanLLM/Yuan2.0-51B-hf",
        },
        "Yuan2-102B-Chat": {
            DownloadSource.DEFAULT: "IEITYuan/Yuan2-102B-hf",
            DownloadSource.MODELSCOPE: "YuanLLM/Yuan2.0-102B-hf",
        },
    },
    template="yuan",
)


register_model_group(
    models={
        "Zephyr-7B-Alpha-Chat": {
            DownloadSource.DEFAULT: "HuggingFaceH4/zephyr-7b-alpha",
            DownloadSource.MODELSCOPE: "AI-ModelScope/zephyr-7b-alpha",
        },
        "Zephyr-7B-Beta-Chat": {
            DownloadSource.DEFAULT: "HuggingFaceH4/zephyr-7b-beta",
            DownloadSource.MODELSCOPE: "modelscope/zephyr-7b-beta",
        },
        "Zephyr-141B-ORPO-Chat": {
            DownloadSource.DEFAULT: "HuggingFaceH4/zephyr-orpo-141b-A35b-v0.1",
        },
    },
    template="zephyr",
)

The large models named in the code include:

- Baichuan-7B-Base

- Baichuan-13B-Base

- Baichuan-13B-Chat

- Baichuan2-7B-Base

- Baichuan2-13B-Base

- Baichuan2-7B-Chat

- Baichuan2-13B-Chat

- BLOOM-560M

- BLOOM-3B

- BLOOM-7B1

- BLOOMZ-560M

- BLOOMZ-3B

- BLOOMZ-7B1-mt

- BlueLM-7B-Base

- BlueLM-7B-Chat

- Breeze-7B

- Breeze-7B-Chat

- ChatGLM2-6B-Chat

- ChatGLM3-6B-Base

- ChatGLM3-6B-Chat

- ChineseLLaMA2-1.3B

- ChineseLLaMA2-7B

- ChineseLLaMA2-13B

- ChineseLLaMA2-1.3B-Chat

- ChineseLLaMA2-7B-Chat

- ChineseLLaMA2-13B-Chat

- CommandR-35B-Chat

- CommandR-Plus-104B-Chat

- CommandR-35B-4bit-Chat

- CommandR-Plus-104B-4bit-Chat

- DBRX-132B-Base

- DBRX-132B-Chat

- DeepSeek-LLM-7B-Base

- DeepSeek-LLM-67B-Base

- DeepSeek-LLM-7B-Chat

- DeepSeek-LLM-67B-Chat

- DeepSeek-Math-7B-Base

- DeepSeek-Math-7B-Chat

- DeepSeek-MoE-16B-Base

- DeepSeek-MoE-16B-v2-Base

- DeepSeek-MoE-236B-Base

- DeepSeek-MoE-16B-Chat

- DeepSeek-MoE-16B-v2-Chat

- DeepSeek-MoE-236B-Chat

- DeepSeekCoder-6.7B-Base

- DeepSeekCoder-7B-Base

- DeepSeekCoder-33B-Base

- DeepSeekCoder-6.7B-Chat

- DeepSeekCoder-7B-Chat

- DeepSeekCoder-33B-Chat

- Falcon-7B

- Falcon-11B

- Falcon-40B

- Falcon-180B

- Falcon-7B-Chat

- Falcon-40B-Chat

- Falcon-180B-Chat

- Gemma-2B

- Gemma-7B

- Gemma-2B-Chat

- Gemma-7B-Chat

- CodeGemma-2B

- CodeGemma-7B

- CodeGemma-7B-Chat

- InternLM-7B

- InternLM-20B

- InternLM-7B-Chat

- InternLM-20B-Chat

- InternLM2-7B

- InternLM2-20B

- InternLM2-7B-Chat

- InternLM2-20B-Chat

- Jambda-v0.1

- LingoWhale-8B

- LLaMA-7B

- LLaMA-13B

- LLaMA-30B

- LLaMA-65B

- LLaMA2-7B

- LLaMA2-13B

- LLaMA2-70B

- LLaMA2-7B-Chat

- LLaMA2-13B-Chat

- LLaMA2-70B-Chat

- LLaMA3-8B

- LLaMA3-70B

- LLaMA3-8B-Chat

- LLaMA3-70B-Chat

- LLaMA3-8B-Chinese-Chat

- LLaMA3-70B-Chinese-Chat

- LLaVA1.5-7B-Chat

- LLaVA1.5-13B-Chat

- Mistral-7B-v0.1

- Mistral-7B-v0.1-Chat

- Mistral-7B-v0.2

- Mistral-7B-v0.2-Chat

- Mixtral-8x7B-v0.1

- Mixtral-8x7B-v0.1-Chat

- Mixtral-8x22B-v0.1

- Mixtral-8x22B-v0.1-Chat

- OLMo-1B

- OLMo-7B

- OLMo-1.7-7B

- OpenChat3.5-7B-Chat

- Orion-14B-Base

- Orion-14B-Chat

- Orion-14B-Long-Chat

- Orion-14B-RAG-Chat

- Orion-14B-Plugin-Chat

- PaliGemma-3B-pt-224

- PaliGemma-3B-pt-448

- PaliGemma-3B-pt-896

- PaliGemma-3B-mix-224

- PaliGemma-3B-mix-448

- Phi-1.5-1.3B

- Phi-2-2.7B

- Phi3-3.8B-4k-Chat

- Phi3-3.8B-128k-Chat

- Qwen-1.8B

- Qwen-7B

- Qwen-14B

- Qwen-72B

- Qwen-1.8B-Chat

- Qwen-7B-Chat

- Qwen-14B-Chat

- Qwen-72B-Chat

- Qwen-1.8B-int8-Chat

- Qwen-1.8B-int4-Chat

- Qwen-7B-int8-Chat

- Qwen-7B-int4-Chat

- Qwen-14B-int8-Chat

- Qwen-14B-int4-Chat

- Qwen-72B-int8-Chat

- Qwen-72B-int4-Chat

- Qwen1.5-0.5B

- Qwen1.5-1.8B

- Qwen1.5-4B

- Qwen1.5-7B

- Qwen1.5-14B

- Qwen1.5-32B

- Qwen1.5-72B

- Qwen1.5-110B

- Qwen1.5-MoE-A2.7B

- Qwen1.5-Code-7B

- Qwen1.5-0.5B-Chat

- Qwen1.5-1.8B-Chat

- Qwen1.5-4B-Chat

- Qwen1.5-7B-Chat

- Qwen1.5-14B-Chat

- Qwen1.5-32B-Chat

- Qwen1.5-72B-Chat

- Qwen1.5-110B-Chat

- Qwen1.5-MoE-A2.7B-Chat

- Qwen1.5-Code-7B-Chat

- Qwen1.5-0.5B-int8-Chat

- Qwen1.5-0.5B-int4-Chat

- Qwen1.5-1.8B-int8-Chat

- Qwen1.5-1.8B-int4-Chat

- Qwen1.5-4B-int8-Chat

- Qwen1.5-4B-int4-Chat

- Qwen1.5-7B-int8-Chat

- Qwen1.5-7B-int4-Chat

- Qwen1.5-14B-int8-Chat

- Qwen1.5-14B-int4-Chat

- Qwen1.5-32B-int4-Chat

- Qwen1.5-72B-int8-Chat

- Qwen1.5-72B-int4-Chat

- Qwen1.5-110B-int4-Chat

- Qwen1.5-MoE-A2.7B-int4-Chat

- Qwen1.5-Code-7B-int4-Chat

- SOLAR-10.7B

- SOLAR-10.7B-Chat

- Skywork-13B-Base

- StarCoder2-3B

- StarCoder2-7B

- StarCoder2-15B

- Vicuna1.5-7B-Chat

- Vicuna1.5-13B-Chat

- XuanYuan-6B

- XuanYuan-70B

- XuanYuan-2-70B

- XuanYuan-6B-Chat

- XuanYuan-70B-Chat

- XuanYuan-2-70B-Chat

- XuanYuan-6B-int8-Chat

- XuanYuan-6B-int4-Chat

- XuanYuan-70B-int8-Chat

- XuanYuan-70B-int4-Chat

- XuanYuan-2-70B-int8-Chat

- XuanYuan-2-70B-int4-Chat

- XVERSE-7B

- XVERSE-13B

- XVERSE-65B

- XVERSE-65B-2

- XVERSE-7B-Chat

- XVERSE-13B-Chat

- XVERSE-65B-Chat

- XVERSE-MoE-A4.2B

- XVERSE-7B-int8-Chat

- XVERSE-7B-int4-Chat

- XVERSE-13B-int8-Chat

- XVERSE-13B-int4-Chat

- XVERSE-65B-int4-Chat

- Yayi-7B

- Yayi-13B

- Yi-6B

- Yi-9B

- Yi-34B

- Yi-6B-Chat

- Yi-34B-Chat

- Yi-6B-int8-Chat

- Yi-6B-int4-Chat

- Yi-34B-int8-Chat

- Yi-34B-int4-Chat

- Yi-1.5-6B

- Yi-1.5-9B

- Yi-1.5-34B

- Yi-1.5-6B-Chat

- Yi-1.5-9B-Chat

- Yi-1.5-34B-Chat

- YiVL-6B-Chat

- YiVL-34B-Chat

- Yuan2-2B-Chat

- Yuan2-51B-Chat

- Yuan2-102B-Chat

- Zephyr-7B-Alpha-Chat

- Zephyr-7B-Beta-Chat

- Zephyr-141B-ORPO-Chat
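
Each name in the list above is a key passed to a `register_model_group` call in the code listing. As a rough illustration of how such a registry could work, here is a minimal sketch. It is not LLaMA-Factory's actual implementation: the enum values, the `SUPPORTED_MODELS`, `DEFAULT_TEMPLATE`, and `VISION_MODELS` containers, and the `resolve_repo` helper are all assumptions made for this example. Only the call shape (`models`, `template`, `vision`) and the repository IDs are taken from the listing.

```python
from enum import Enum
from typing import Dict, Optional, Set


class DownloadSource(str, Enum):
    """Hub a checkpoint is hosted on (member values are assumptions of this sketch)."""

    DEFAULT = "hf"
    MODELSCOPE = "ms"


# Hypothetical module-level registries, filled by register_model_group().
SUPPORTED_MODELS: Dict[str, Dict[DownloadSource, str]] = {}
DEFAULT_TEMPLATE: Dict[str, str] = {}
VISION_MODELS: Set[str] = set()


def register_model_group(
    models: Dict[str, Dict[DownloadSource, str]],
    template: Optional[str] = None,
    vision: bool = False,
) -> None:
    """Record each display name together with its per-hub repository IDs."""
    for name, sources in models.items():
        SUPPORTED_MODELS[name] = sources
        if template is not None:
            DEFAULT_TEMPLATE[name] = template
        if vision:
            VISION_MODELS.add(name)


def resolve_repo(name: str, source: DownloadSource = DownloadSource.DEFAULT) -> str:
    """Return the repository ID for a model, falling back to the default hub."""
    sources = SUPPORTED_MODELS[name]
    return sources.get(source, sources[DownloadSource.DEFAULT])


# Two registrations copied from the listing, trimmed to one model each.
register_model_group(
    models={
        "Yayi-7B": {
            DownloadSource.DEFAULT: "wenge-research/yayi-7b-llama2",
            DownloadSource.MODELSCOPE: "AI-ModelScope/yayi-7b-llama2",
        },
    },
    template="yayi",
)
register_model_group(
    models={
        "YiVL-6B-Chat": {
            DownloadSource.DEFAULT: "BUAADreamer/Yi-VL-6B-hf",
        },
    },
    template="yi_vl",
    vision=True,
)

# Enumerate the registered display names, as in the list above.
for model_name in SUPPORTED_MODELS:
    print(model_name, "->", resolve_repo(model_name, DownloadSource.MODELSCOPE))
```

In this sketch a model without a ModelScope entry, such as `YiVL-6B-Chat`, falls back to its default repository ID. That fallback is a design choice of the example, not a documented behavior of the library.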

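Large Model Technology Sharing

Online advanced seminar: "Enterprise-Grade Generative AI and LLM Technology, Algorithms, and Hands-On Case Studies"

Module 1: Generative AI principles, core technology, and the engineering practice lifecycle in detail

Module 2: Inside industrial-grade prompting techniques, with an end-to-end LLM-based meeting assistant project

Module 3: The three Llama 2 models in detail, with hands-on construction of a safe and reliable conversational system

Module 4: The five core problems of GenAI/LLMs in production, and building robust applications in practice

Module 5: LLM application development: agentic-based application techniques and hands-on cases

Module 6: LLM fine-tuning and model quantization techniques, with hands-on cases

Module 7: Parameter-efficient fine-tuning (PEFT): algorithms, techniques, workflow, and advanced code practice

Module 8: LLM alignment techniques and workflow, with hands-on text toxicity analysis

Module 9: Red teaming, a core technique for building secure GenAI/LLMs, explained through practice

Module 10: Responsible AI in practice: building a trustworthy, private, secure enterprise LLM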

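Llama 3 key technologies in depth, and building Responsible AI: algorithms and hands-on development

1. The Llama open-source model family: technology, tools, and multimodality in detail. Participants explore what is new in Meta Llama 3, such as its advances in language modeling, and learn how to build trust and safety AI with Llama 3. The module covers Llama 3's five technical branches and tools, plus a hands-on case of Llama instruction fine-tuning on AWS.

2. Llama 3 foundation model architecture, distinctive techniques and their code: a close look at techniques used in Llama 3, such as Tiktokenizer, KV Cache, and Grouped Multi-Query Attention. Project 2 walks through the Llama 3 source code line by line to deepen understanding.

3. Llama 3 foundation model architecture, core techniques and their code: SwiGLU Activation Function, FeedForward Block, Encoder Block, and more. Project 3 studies the Llama 3 inference code to ground the techniques in practice.

4. Hands-on Responsible AI with LangGraph on Llama 3: Project 4 builds a LangGraph-based Responsible AI project on Llama 3. Participants learn LangGraph's three core components, its execution mechanism, and its workflow steps.

5. Inside building safe, trustworthy enterprise AI applications with the Llama model family: key technologies such as Code Llama and Llama Guard. Project 5 builds an upgraded version of the safe and reliable conversational AI project.

6. Fine-tuning techniques and algorithms for the Llama model family: Supervised Fine-Tuning (SFT), reward model techniques, the PPO algorithm, the DPO algorithm, and more. Project 6 implements PPO and DPO by hand.

7. Reinforcement learning from AI feedback in the Llama model family: an in-depth study of techniques such as RLAIF and RLHF. Project 7 implements Constitutional AI based on RLAIF.

8. DPO in Llama 3: principles, algorithm, components, implementation, and advanced variants. The module covers the combined use of PPO and DPO in Llama 3, analyzes how DPO works, and details its key algorithmic components. Capstone Project 8 implements and tests DPO from scratch, and the course also covers the advanced techniques Iterative DPO and IPO.

9. Safety design and implementation in the Llama model family: topics such as Safety in Pretraining and Safety Fine-Tuning, applied to developing safe and reliable GenAI/LLM projects.

10. Building a trustworthy, private, secure enterprise Responsible AI system with Llama 3, covering Constitutional AI and Red Teaming for Llama 3.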

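Decoding Sora: Architecture, Technology, and Applications

I. Why is Sora a milestone on the road to AGI?

1. The key shift from large language models (LLMs) to large vision models (LVMs), and its role in reaching artificial general intelligence (AGI).

2. Successful cases of combining visual data and text data, and the key role Sora plays in that process.

3. How Sora generates video with 3D consistency from text instructions.

4. The technical path by which Sora generates high-fidelity content from images or videos.

5. Sora's practical value across application scenarios, and the challenges and limitations it faces.

II. Decoding Sora's architecture

1. The DiT (Diffusion Transformer) architecture in detail.

2. How does DiT help Sora produce consistent, realistic, and imaginative video?

3. Why a Transformer, rather than an alternative such as U-Net, was chosen as the core network for diffusion.

4. The principle and workflow of DiT patchification, and why it matters for processing video and image data.

5. The conditional diffusion process in detail, and its role in content generation.

III. Decoding Sora's key technologies

1. How Sora uses Transformer and diffusion techniques to understand interactions between objects, and why this matters for simulating complex interactive scenes.

2. Why space-time patches are considered the core of Sora, and how they improve its video generation.

3. Spacetime latent patches in detail, and their key role in video compression and generation.

4. How the Sora simulator uses space-time patches to model the digital and physical worlds, and what that means for simulating real-world change.

5. How Sora generates content that faithfully follows the user's input text, and the techniques behind it.

6. Why Sora generates content from abstract concepts rather than specific pixels, and how this affects the quality and diversity of its output.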
