layer专题

[Linux Kernel Block Layer第一篇] block layer架构设计

目录 1. single queue架构 2. multi-queue架构(blk-mq) 3. 问题 随着SSD快速存储设备的发展,内核社区越发发现,存储的性能瓶颈从硬件存储设备转移到了内核block layer,主要因为当时的内核block layer是single hw queue的架构,导致cpu锁竞争问题严重,本文先提纲挈领的介绍内核block layer的架构演进,然

android xml之Drawable 篇 --------shape和selector和layer-list的

转自 : http://blog.csdn.net/brokge/article/details/9713041 <shape>和<selector>在Android UI设计中经常用到。比如我们要自定义一个圆角Button,点击Button有些效果的变化,就要用到<shape>和<selector>。 可以这样说,<shape>和<selector>在美化控件中的作用是至关重要。 在

Layer Normalization论文解读

基本信息 作者JL Badoi发表时间2016期刊NIPS网址https://arxiv.org/abs/1607.06450v1 研究背景 1. What’s known 既往研究已证实 batch Normalization对属于同一个Batch中的数据长度要求是相同的,不适合处理序列型的数据。因此它在NLP领域的RNN上效果并不显著,但在CV领域的CNN上效果显著。 2. What’s

区块链 链上扩容 链下扩容 Layer-2扩容

链上扩容,也常被称为layer-1扩容。 直接修改区块链的基础规则,包括区块大小、共识机制等。 链下扩容,也常被称为Layer-2扩容方案。 不直接改动区块链本身的规则(区块大小、共识机制等),而是在其之上再架设一层来做具体的活,只将必要信息、或需要共识参与(如数据出错、发生纠纷时)时才与区块链进行信息交互和传播。因为扩容本质上没有发生在区块链上,因此这类方案被直观地称为链下扩容

深度学习-TensorFlow2 :构建DNN神经网络模型【构建方式:自定义函数、keras.Sequential、CompileFit、自定义Layer、自定义Model】

1、手工创建参数、函数–>利用tf.GradientTape()进行梯度优化(手写数字识别) import osos.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'import tensorflow as tffrom tensorflow.keras import datasets# 一、获取手写数字辨识训练数据集(X_train, Y_train), (X_

layer设置弹出层的位置

layer的弹出层我不想再正中显示,我们想在距离顶部10px,然后水平居中,设置offset,如下 更offset更多设置如下 看看效果,如下 滚动的时候我想固定弹出层,不随滚动条滚动而滚动,如下 设置fix是true就行了

caffe源码解析-inner_product_layer

打开inner_product_layer.hpp文件,发现全连接层是非常清晰简单的,我们主要关注如下四个函数就行。 LayerSetUp(SetUp的作用一般用于初始化,比如网络结构参数的获取)ReshapeForward_cpuBackward_cpu ** inner_product_layer.hpp ** namespace caffe {template <typename

Convolutional layers/Pooling layers/Dense Layer 卷积层/池化层/稠密层

Convolutional layers/Pooling layers/Dense Layer 卷积层/池化层/稠密层 Convolutional layers 卷积层 Convolutional layers, which apply a specified number of convolution filters to the image. For each subregion, the

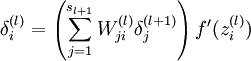

ROS naviagtion analysis: costmap_2d--Layer

这个类中有一个LayeredCostmap* layered_costmap_数据成员,这个数据成员很重要,因为这个类就是通过这个指针获取到的对master map的操作。没有这个指针,所有基于Layer继承下去的地图的类,都无法操作master map。 这个类基本上没有什么实质性的操作,主要是提供了统一的接口,要求子类必须实现这些方法。这样plugin使用的时候,就可以不用管具体是什么类

Layer Normalization(层归一化)里的可学习的参数

参考pyttorch官方文档: LayerNorm — PyTorch 2.4 documentation 在深度学习模型中,层归一化(Layer Normalization, 简称LN)是一种常用的技术,用于稳定和加速神经网络的训练。层归一化通过对单个样本内的所有激活进行归一化,使得训练过程更加稳定。 关于层归一化是否可训练,其实层归一化中确实包含可训练的参数。具体来说,层归一化会对激活值

C++卷积神经网络实例:tiny_cnn代码详解(10)——layer_base和layer类结构分析

在之前的博文中,我们已经队大部分层结构类都进行了分析,在这篇博文中我们准备针对最后两个,也是处于层结构类继承体系中最底层的两个基类layer_base和layer做一下简要分析。由于layer类只是对layer_base的一个简单实例化,因此这里着重分析layer_base类。 首先,给出layer_base类的基本结构框图: 一、成员变量 由于layer_base是这个

C++卷积神经网络实例:tiny_cnn代码详解(9)——partial_connected_layer层结构类分析(下)

在上一篇博文中我们着重分析了partial_connected_layer类的成员变量的结构,在这篇博文中我们将继续对partial_connected_layer类中的其他成员函数做一下简要介绍。 一、构造函数 由于partial_connected_layer类是继承自基类layer,因此在构造函数中同样分为两部分,即调用基类构造函数以及初始化自身成员变量: partial

C++卷积神经网络实例:tiny_cnn代码详解(8)——partial_connected_layer层结构类分析(上)

在之前的博文中我们已经将顶层的网络结构都介绍完毕,包括卷积层、下采样层、全连接层,在这篇博文中主要有两个任务,一是整体贯通一下卷积神经网络在对图像进行卷积处理的整个流程,二是继续我们的类分析,这次需要进行分析的是卷积层和下采样层的公共基类:partial_connected_layer。 一、卷积神经网络的工作流程 首先给出经典的5层模式的卷积神经网络LeNet-5结构模型:

C++卷积神经网络实例:tiny_cnn代码详解(7)——fully_connected_layer层结构类分析

之前的博文中已经将卷积层、下采样层进行了分析,在这篇博文中我们对最后一个顶层层结构fully_connected_layer类(全连接层)进行分析: 一、卷积神经网路中的全连接层 在卷积神经网络中全连接层位于网络模型的最后部分,负责对网络最终输出的特征进行分类预测,得出分类结果: LeNet-5模型中的全连接层分为全连接和高斯连接,该层的最终输出结果即为预测标签,例如

C++卷积神经网络实例:tiny_cnn代码详解(6)——average_pooling_layer层结构类分析

在之前的博文中我们着重分析了convolutional_layer类的代码结构,在这篇博文中分析对应的下采样层average_pooling_layer类: 一、下采样层的作用 下采样层的作用理论上来说由两个,主要是降维,其次是提高一点特征的鲁棒性。在LeNet-5模型中,每一个卷积层后面都跟着一个下采样层: 原因就是当图像在经过卷积层之后,由于每个卷积层都有多个卷积

C++卷积神经网络实例:tiny_cnn代码详解(5)——convolutional_layer类结构信息之其他成员函数

在上一篇博客中我们介绍了convolutional_layer类的基本结构及其成员变量、构造函数的相关信息,在这篇博文中我们对其中剩余的其他成员函数进行分析。首先把convolutional_layer类的结构图给出来: 可见,convolutional_layer类除了构造函数之外,还有另外两部分成员函数,一部分负责定义当前卷积层与前一层之间的连接关系,另一部分则完成convolu

C++卷积神经网络实例:tiny_cnn代码详解(4)——convolutional_layer类结构信息之成员变量与构造函数

在之前的博文中我们已经对tiny_cnn框架的整体类结构做了大致分析,阐明了各个类之间的继承依赖关系,在接下来的几篇博文中我们将分别对各个类进行更为详细的分析,明确其内部具体功能实现。在这篇博文中着重分析convolutional_layer类。convolutional_layer封装的是卷积神经网络中的卷积层网路结构,其在主程序中对应的初始化部分代码如下: 可见在测试程序中我们构

[Keras] 使用Keras编写自定义网络层(layer)

Keras提供众多常见的已编写好的层对象,例如常见的卷积层、池化层等,我们可以直接通过以下代码调用: # 调用一个Conv2D层from keras import layersconv2D = keras.layers.convolutional.Conv2D(filters,\kernel_size, \strides=(1, 1), \padding='valid', \...)

keras slice layer 层 实现

注意的地方: keras中每层的输入输出的tensor是张量, 比如Tensor shape是(N, H, W, C), 对于tf后台, channels_last Define a slice layer using Lamda layer def slice(x, h1, h2, w1, w2):""" Define a tensor slice function"""return x[:

每日Attention学习16——Multi-layer Multi-scale Dilated Convolution

模块出处 [CBM 22] [link] [code] Do You Need Sharpened Details? Asking MMDC-Net: Multi-layer Multi-scale Dilated Convolution Network For Retinal Vessel Segmentation 模块名称 Multi-layer Multi-scale Dilate

Layer-refined Graph Convolutional Networks for Recommendation【ICDE2023】

Layer-refined Graph Convolutional Networks for Recommendation 论文:https://arxiv.org/abs/2207.11088 源码:https://github.com/enoche/MMRec/blob/master/README.md 摘要 基于图卷积网络(GCN)的抽象推荐模型综合了用户-项目交互图的节点信息和拓

对于layer中type的理解

在layer弹出层中分为5个类型,这种类型的返回值是Number类型,默认是0; layer中5种类型传入的值有:0(信息框,默认是0),1(页面层),2(iframe层),3(加载层),4(tips层)

Stable Diffusion 使用详解(8)--- layer diffsuion

背景 layer diffusion 重点在 layer,顾名思义,就是分图层的概念,用过ps 的朋友再熟悉不过了。没使用过的,也没关系,其实很简单,本质就是各图层自身的编辑不会影响其他图层,这好比OS中运行了很多process,一个process 宕机或者修改,不会影响其他process 是一个道理。他的好处很多,可以帮我们生成一个背景透明的任何图片,你可以借助 ps 等工具进行融合。当然高阶

自定义 Layer 属性的动画

转自@nixzhu的GitHub主页(译者:@nixzhu),原文《Animating Custom Layer Properties》 默认情况下,CALayer 及其子类的绝大部分标准属性都可以执行动画,无论是添加一个 CAAnimation 到 Layer(显式动画),亦或是为属性指定一个动作然后修改它(隐式动画)。 但有时候我们希望能同时为好几个属性添加动画,使它们看起来

layer弹出框覆盖在触发mouseenter 和 mouseleave事件元素上的一种解决方法

问题描述: 需求是在table中有告警数据,当鼠标移动到告警数据上,弹出该告警数据关联的信息(关联数据也是表格形式),移开鼠标时,弹出框关闭。 但是在关闭时有这种情况,当弹出框在告警数据的上层时,移动鼠标,先触发mouseleave事件,关闭弹出框,由于弹出框关闭,鼠标回到告警数据上,又弹出,一直循环。 目前能想到的解决方案是:在触发mouseleave事件时,判断鼠标位置,

五十一、openlayers官网示例Layer Min/Max Resolution解析——设置图层最大分辨率,超过最大值换另一个图层显示

使用minResolution、maxResolution分辨率来设置图层显示最大分辨率。 <template><div class="box"><h1>Layer Min/Max Resolution</h1><div id="map" class="map"></div></div></template><script>import Map from "ol/Map.js";im

![[Linux Kernel Block Layer第一篇] block layer架构设计](https://i-blog.csdnimg.cn/direct/6f402f42143b4aac927657769404055e.png)

![[Keras] 使用Keras编写自定义网络层(layer)](/front/images/it_default.jpg)