Daily Attention Study 16: Multi-layer Multi-scale Dilated Convolution

Module source

[CBM 22] Do You Need Sharpened Details? Asking MMDC-Net: Multi-layer Multi-scale Dilated Convolution Network For Retinal Vessel Segmentation

Module name

Multi-layer Multi-scale Dilated Convolution (MMDC)

Module function

Multi-scale feature extraction and fusion

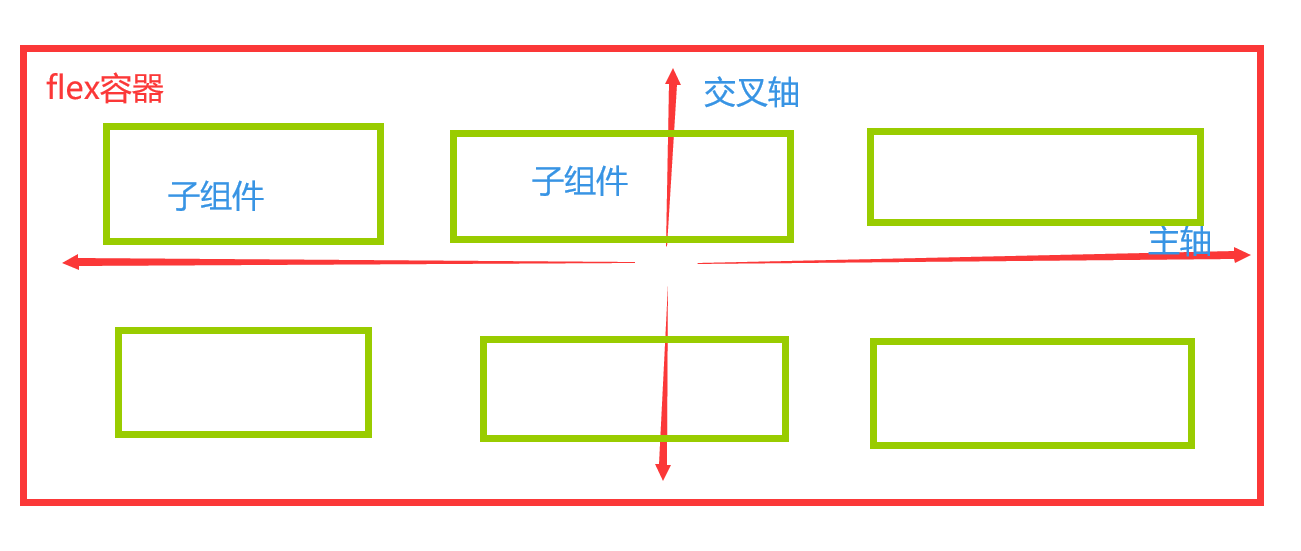

模块结构

Module idea

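Compared with the traditional encoder-decoder structure, a better segmentation model should let its encoder capture as much global information as possible. In practice this is limited by small receptive fields, so the image features learned by a conventional encoder carry too little global information. Multi-scale dilated convolution (MSDC) addresses this to some extent, but it still has two drawbacks: 1) it usually operates on a single image size, so global information at other scales can be missed; 2) multi-layer information is combined inefficiently, so more vessel detail can be lost, which particularly hurts the segmentation of small vessels in fundus images. To address both problems, the authors propose the MMDC module and insert it into the skip connections of a U-Net. Unlike MSDC and similar methods, MMDC uses a novel cascaded mode that combines different scales and fuses multi-layer features. The cascade gives it a relatively larger receptive field, which helps extract more global information and improves retinal vessel segmentation. The module is also fully plug-and-play.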
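To make the "larger receptive field" claim concrete, here is a minimal sketch that computes the receptive field of each cascaded branch. It assumes stride-1 3x3 convolutions with dilations 1, 3 and 5, as in the code in this post; the helper name `cascaded_receptive_field` is hypothetical and not part of the paper's code.

```python
def cascaded_receptive_field(dilations, kernel_size=3):
    """Side length of the receptive field of stride-1 convolutions applied in sequence.

    Each layer with dilation d widens the field by (kernel_size - 1) * d.
    """
    rf = 1
    for d in dilations:
        rf += (kernel_size - 1) * d
    return rf


# The four branches of one MMDC level (1x1 convolutions do not widen the field).
branches = {
    "1x1 only": [],
    "d=1": [1],
    "d=1 -> d=3": [1, 3],
    "d=1 -> d=3 -> d=5": [1, 3, 5],
}
for name, dilations in branches.items():
    rf = cascaded_receptive_field(dilations)
    print(f"{name}: {rf}x{rf}")

# For comparison: applying the same three dilations in parallel instead of in
# cascade is bounded by the largest single layer.
parallel_rf = max(cascaded_receptive_field([d]) for d in (1, 3, 5))
print(f"parallel d in (1, 3, 5): {parallel_rf}x{parallel_rf}")
```

Under these assumptions the deepest cascaded branch sees a 19x19 window, while a parallel arrangement of the same three layers tops out at 11x11.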

Module code

    import torch
    import torch.nn as nn


    class MMDC(nn.Module):
        def __init__(self, out_channels):
            super().__init__()
            # Lower-level branch (input x3: 2C channels, H/2 x W/2).
            # conv3_3_d denotes a 3x3 convolution with dilation d at the lower level.
            self.conv3_1 = nn.Conv2d(out_channels * 2, out_channels * 2, kernel_size=1)
            self.conv3_3_1 = nn.Sequential(
                nn.Conv2d(out_channels * 2, out_channels * 2, kernel_size=3, padding=1, dilation=1),
                nn.ReLU(inplace=True),
            )
            self.conv3_3_3 = nn.Sequential(
                nn.Conv2d(out_channels * 2, out_channels * 2, kernel_size=3, padding=3, dilation=3),
                nn.ReLU(inplace=True),
            )
            self.conv3_3_5 = nn.Sequential(
                nn.Conv2d(out_channels * 2, out_channels * 2, kernel_size=3, padding=5, dilation=5),
                nn.ReLU(inplace=True),
            )
            # Reduce 2C -> C channels after upsampling.
            self.conv33 = nn.Sequential(
                nn.Conv2d(out_channels * 2, out_channels, kernel_size=1),
                nn.ReLU(inplace=True),
            )
            self.up = nn.Upsample(scale_factor=2, mode='bilinear', align_corners=True)

            # Upper-level branch (input x1: C/2 channels, 2H x 2W).
            # conv1_3_d denotes a 3x3 convolution with dilation d at the upper level.
            self.conv1_1 = nn.Conv2d(out_channels // 2, out_channels // 2, kernel_size=1)
            self.conv1_3_1 = nn.Sequential(
                nn.Conv2d(out_channels // 2, out_channels // 2, kernel_size=3, padding=1, dilation=1),
                nn.ReLU(inplace=True),
            )
            self.conv1_3_3 = nn.Sequential(
                nn.Conv2d(out_channels // 2, out_channels // 2, kernel_size=3, padding=3, dilation=3),
                nn.ReLU(inplace=True),
            )
            self.conv1_3_5 = nn.Sequential(
                nn.Conv2d(out_channels // 2, out_channels // 2, kernel_size=3, padding=5, dilation=5),
                nn.ReLU(inplace=True),
            )
            # Downsample by 2 and expand C/2 -> C channels.
            self.max = nn.Sequential(
                nn.MaxPool2d(2),
                nn.Conv2d(out_channels // 2, out_channels, kernel_size=1),
                nn.BatchNorm2d(out_channels),
            )

        def forward(self, x1, x2, x3):
            """x1 --> 2H * 2W * C/2, x2 --> H * W * C, x3 --> H/2 * W/2 * 2C"""
            # Cascaded dilated convolutions on the upper level; the same 1x1 conv follows each branch.
            x1_1 = self.conv1_1(x1)
            x1_2 = self.conv1_1(self.conv1_3_1(x1))
            x1_3 = self.conv1_1(self.conv1_3_3(self.conv1_3_1(x1)))
            x1_4 = self.conv1_1(self.conv1_3_5(self.conv1_3_3(self.conv1_3_1(x1))))
            x11 = self.max(x1_1 + x1_2 + x1_3 + x1_4)

            # Cascaded dilated convolutions on the lower level.
            x3_1 = self.conv3_1(x3)
            x3_2 = self.conv3_1(self.conv3_3_1(x3))
            x3_3 = self.conv3_1(self.conv3_3_3(self.conv3_3_1(x3)))
            x3_4 = self.conv3_1(self.conv3_3_5(self.conv3_3_3(self.conv3_3_1(x3))))
            x33 = self.conv33(self.up(x3_1 + x3_2 + x3_3 + x3_4))

            # Fuse the three levels at the resolution and channel count of x2.
            return x11 + x2 + x33


    if __name__ == '__main__':
        x1 = torch.randn([1, 64, 44, 44])
        x2 = torch.randn([1, 128, 22, 22])
        x3 = torch.randn([1, 256, 11, 11])
        mmdc = MMDC(out_channels=128)
        out = mmdc(x1, x2, x3)
        print(out.shape)  # 1, 128, 22, 22
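The three inputs must satisfy the shape relationship stated in the `forward` docstring, otherwise the final element-wise sum fails. The following is a small sketch of a pre-flight check in plain Python. The helper `check_mmdc_shapes` is hypothetical (it is not part of the original code), and it assumes shapes are given as `(N, C, H, W)` tuples and that `MaxPool2d(2)` and `Upsample(scale_factor=2)` behave as configured in the module above.

```python
def check_mmdc_shapes(x1_shape, x2_shape, x3_shape):
    """Return the out_channels value to pass to MMDC, or raise ValueError.

    Shapes are (N, C, H, W) tuples for the upper (x1), current (x2) and
    lower (x3) feature maps.
    """
    n1, c1, h1, w1 = x1_shape
    n2, c2, h2, w2 = x2_shape
    n3, c3, h3, w3 = x3_shape

    if not (n1 == n2 == n3):
        raise ValueError("batch sizes differ")
    # Channel contract: x1 has C // 2 channels, x2 has C, x3 has 2 * C.
    if c1 != c2 // 2 or c3 != c2 * 2:
        raise ValueError(f"channels {c1}, {c2}, {c3} do not follow C/2, C, 2C")
    # MaxPool2d(2) floors the spatial size of x1.
    if (h1 // 2, w1 // 2) != (h2, w2):
        raise ValueError("x1 does not pool down to the size of x2")
    # Upsample(scale_factor=2) doubles the spatial size of x3.
    if (h3 * 2, w3 * 2) != (h2, w2):
        raise ValueError("x3 does not upsample to the size of x2")
    return c2


# Shapes from the example in this post.
out_channels = check_mmdc_shapes((1, 64, 44, 44), (1, 128, 22, 22), (1, 256, 11, 11))
print(out_channels)
```

One consequence of the upsampling check is that the height and width of `x2` need to be even, since `x3` is doubled exactly to reach them.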
