本文主要是介绍Can agents learn inside of their own dreams?,希望对大家解决编程问题提供一定的参考价值,需要的开发者们随着小编来一起学习吧!

这次阅读一篇NIPS2018的文章,关于World Models in Reinforcement Learning. 原文链接

按照惯例,直接上粗暴的摘要和笔记吧

- Large RNNs are highly expressive models that can learn rich spatial and temporal representations of data. However, many model-free RL methods in the literature often only use small neural networks with few parameters. The RL algorithm is often bottlenecked by the credit assignment problem1, which makes it hard for traditional RL algorithms to learn millions of weights of a large model, hence in practice, smaller networks are used as they iterate faster to a good policy during training.

-

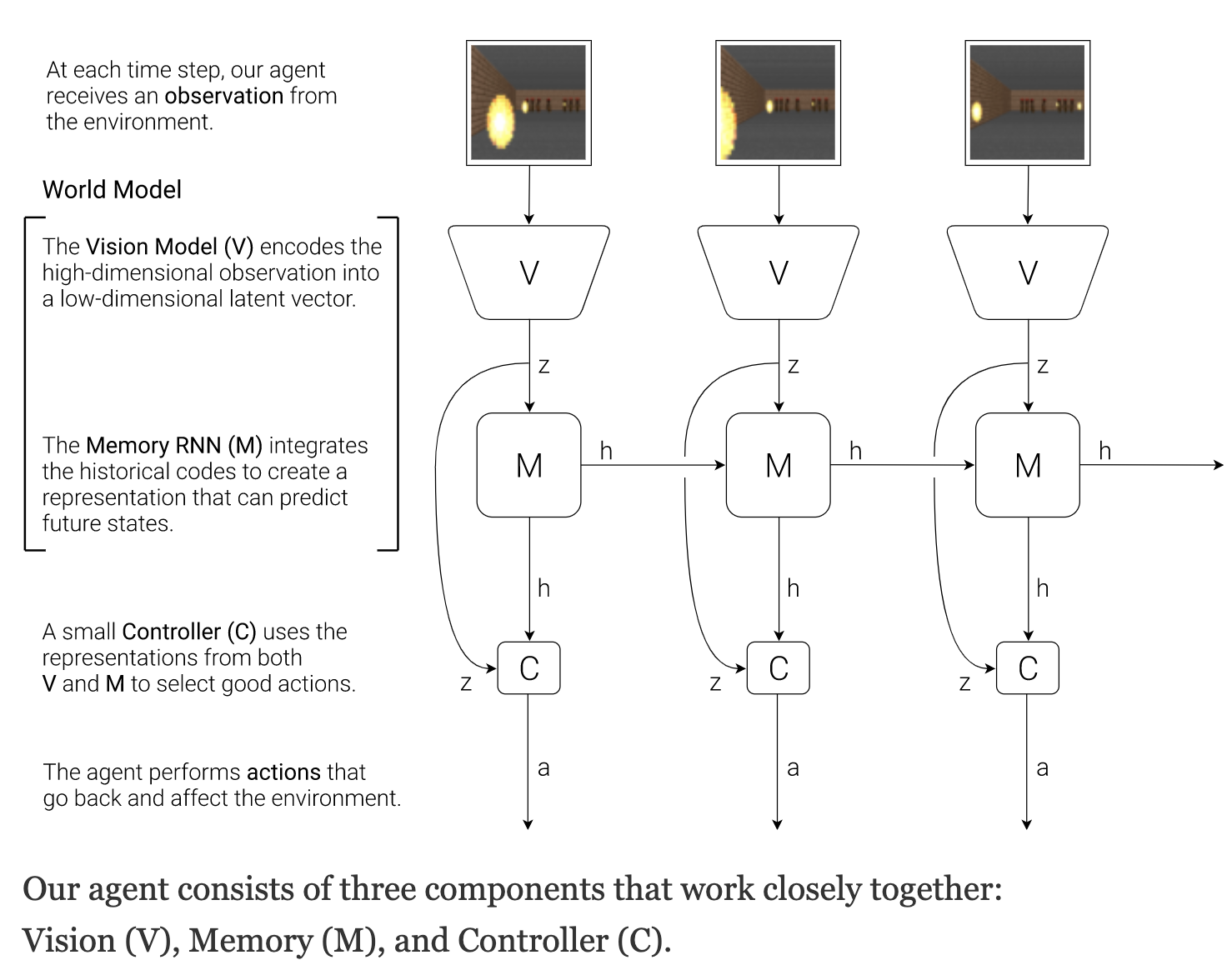

精髓在这张图里了,引入了RNN来对environment中的state transition进行一定程度的预测,基于预测来选择action。

-

Our agent consists of three components that work closely together: Vision (V), Memory (M), and Controller (C).

这篇关于Can agents learn inside of their own dreams?的文章就介绍到这儿,希望我们推荐的文章对编程师们有所帮助!