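1.5. Flink HA (High Availability)

1.5.1. JobManager High Availability (HA)

1.5.2. JobManager HA Configuration Steps

1.5.3. Flink Standalone Cluster HA Configuration

1.5.3.1. HA Cluster Environment Planning

1.5.3.2. Configuration

1.5.3.3. Configuring Environment Variables

1.5.3.4. Startup

1.5.4. Flink on YARN Cluster HA Configuration

1.5.4.1. HA Cluster Environment Planning

1.5.4.2. Configuration and Startup

1.5.4.3. Starting Flink on YARN and Testing HA

1.5.4.4. Starting the Flink Cluster on hadoop4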

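1.5. Flink HA (High Availability)

1.5.1. JobManager High Availability (HA)

The JobManager coordinates the deployment of every Flink job. It is responsible for task scheduling and resource management.

By default, each Flink cluster has a single JobManager, which makes it a single point of failure (SPOF): if the JobManager goes down, no new jobs can be submitted and running jobs fail.

With JobManager HA, the cluster can recover from a JobManager failure, which removes the SPOF. High availability can be configured in both standalone and YARN cluster modes.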

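1.5.2. JobManager HA Configuration Steps

Standalone cluster high availability

In standalone mode, the basic idea of JobManager high availability is that at any time there is one Master JobManager and several Standby JobManagers. If the Master JobManager fails, a Standby JobManager takes over the cluster and becomes the new Master. This eliminates the single point of failure: once a Standby JobManager has taken over, programs continue to run. There is no inherent difference between Standby and Master JobManager instances; any JobManager can act as either Master or Standby.

YARN cluster high availability

HA for Flink on YARN relies mainly on YARN's own job recovery mechanism.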

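1.5.3. Flink Standalone Cluster HA Configuration

1.5.3.1. HA Cluster Environment Planning

Three nodes are used to build a cluster with two masters and two workers:

JobManager: hadoop4, hadoop5

TaskManager: hadoop5, hadoop6

ZooKeeper: hadoop4 (an external ZooKeeper ensemble is recommended; a single-node ZooKeeper stands in for it here)

Note:

To enable JobManager high availability, you must set the high-availability mode to zookeeper, configure a ZooKeeper quorum, and list every JobManager hostname with its web UI port in the masters file.

Flink uses ZooKeeper for distributed coordination among the running JobManager instances. ZooKeeper is a service separate from Flink; it provides highly reliable distributed coordination through leader election and lightweight consistent state storage.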
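
The three prerequisites in the note above can be checked mechanically before starting the cluster. The following is a minimal sketch, assuming the conf directory contains flink-conf.yaml and masters; check_ha_conf is a hypothetical helper name and is not part of Flink.

```shell
#!/bin/sh
# Sketch: sanity-check a Flink conf directory for the three HA prerequisites.
# check_ha_conf is a hypothetical helper, not a Flink command.
check_ha_conf() {
    conf_dir=$1
    yaml="$conf_dir/flink-conf.yaml"
    masters="$conf_dir/masters"
    status=0

    # 1. The high-availability mode must be set to zookeeper.
    if ! grep -Eq '^high-availability:[[:space:]]*zookeeper[[:space:]]*$' "$yaml" 2>/dev/null; then
        echo "missing: high-availability: zookeeper"
        status=1
    fi

    # 2. A non-empty ZooKeeper quorum must be configured.
    if ! grep -Eq '^high-availability\.zookeeper\.quorum:[[:space:]]*[^[:space:]]+' "$yaml" 2>/dev/null; then
        echo "missing: high-availability.zookeeper.quorum"
        status=1
    fi

    # 3. The masters file must list at least two host:port entries.
    count=$(grep -Ec '^[^#[:space:]]+:[0-9]+[[:space:]]*$' "$masters" 2>/dev/null)
    if [ "${count:-0}" -lt 2 ]; then
        echo "masters lists ${count:-0} JobManager(s); HA needs at least 2"
        status=1
    fi

    return $status
}
```

Calling check_ha_conf "$FLINK_HOME/conf" returns 0 when all three checks pass and prints one line per missing item otherwise. It only inspects the files; it does not contact ZooKeeper.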

1.5.3.2. Configuration

Every node in the cluster uses the same configuration, so start with the first machine, hadoop4.

ssh hadoop4

# First apply the same settings used for the plain standalone setup

[root@hadoop4 flink-1.11.1]# vim conf/flink-conf.yaml

jobmanager.rpc.address: hadoop4

[root@hadoop4 flink-1.11.1]# vim conf/workers

hadoop5

hadoop6

# Then set the parameters that HA requires

[root@hadoop4 flink-1.11.1]# vim conf/masters

hadoop4:8081

hadoop5:8081

[root@hadoop4 flink-1.11.1]# vim conf/flink-conf.yaml

jobmanager.rpc.address: hadoop4

# Set here to the minimum size of a Hadoop container

jobmanager.memory.process.size: 1024m

# Set here to the minimum size of a Hadoop container; must not exceed the container maximum

taskmanager.memory.process.size: 1024m

# Set to the number of CPU cores. This is the available parallel capacity, not the parallelism actually used; 3 machines would give 3*4 task slots

taskmanager.numberOfTaskSlots: 4

# Global default parallelism; a job that does not set its own parallelism runs with parallelism 1

parallelism.default: 1

high-availability: zookeeper

high-availability.zookeeper.quorum: hadoop4:2181,hadoop5:2181,hadoop6:2181

# The root ZooKeeper node, under which all cluster nodes are placed

high-availability.zookeeper.path.root: /flink

# The cluster-id ZooKeeper node, under which all coordination data for this cluster is placed

high-availability.cluster-id: /cluster_one

# Directory where JobManager metadata is persisted (any concrete path; it must be reachable from every JobManager node)

high-availability.storageDir: /flink/standaloneha/

classloader.resolve-order: parent-first

# May also be a concrete IP address

rest.address: hadoop4

rest.bind-port: 48080-48090

# Best bound to a concrete IP address; otherwise a "port already in use" problem can occur

rest.bind-address: xxx.xxx.xxx.xxx
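
The remark on taskmanager.numberOfTaskSlots says that cluster capacity is the number of TaskManagers multiplied by the slots per TaskManager. As a rough sketch, that product can be computed from the two files edited above; total_slots is a hypothetical helper name, and the fallback of 1 slot applies when the key is absent.

```shell
#!/bin/sh
# total_slots CONF_DIR: print (number of workers) x (taskmanager.numberOfTaskSlots).
# Hypothetical helper for illustration; not part of Flink.
total_slots() {
    conf_dir=$1
    # Count non-empty, non-comment lines in conf/workers (one TaskManager host per line).
    workers=$(grep -Ec '^[^#[:space:]]' "$conf_dir/workers")
    # Read the slots-per-TaskManager value from flink-conf.yaml.
    slots=$(sed -n 's/^taskmanager\.numberOfTaskSlots:[[:space:]]*\([0-9][0-9]*\).*/\1/p' \
        "$conf_dir/flink-conf.yaml" | head -n 1)
    # Fall back to 1 slot per TaskManager when the key is not set.
    echo $(( $workers * ${slots:-1} ))
}
```

With the workers file from this section (hadoop5 and hadoop6) and 4 slots per TaskManager, total_slots prints 8. That number is only an upper bound on parallelism; parallelism.default still decides what a job uses when it sets nothing itself.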

Copy these configuration files from hadoop4 to the other nodes:

[root@hadoop4 conf]# pwd

/home/admin/installed/flink-1.11.1/conf

[root@hadoop4 conf]# scp * root@hadoop5:$PWD

flink-conf.yaml 100% 10KB 6.8MB/s 00:00

log4j-cli.properties 100% 2643 8.0MB/s 00:00

log4j-console.properties 100% 2755 9.9MB/s 00:00

log4j.properties 100% 2130 8.4MB/s 00:00

log4j-session.properties 100% 1850 7.6MB/s 00:00

logback-console.xml 100% 2740 11.3MB/s 00:00

logback-session.xml 100% 1550 7.0MB/s 00:00

logback.xml 100% 2331 10.1MB/s 00:00

masters 100% 50 62.3KB/s 00:00

sql-client-defaults.yaml 100% 5435 15.1MB/s 00:00

workers 100% 40 180.7KB/s 00:00

zoo.cfg 100% 1434 5.9MB/s 00:00

[root@hadoop4 conf]# scp * root@hadoop6:$PWD

flink-conf.yaml 100% 10KB 7.1MB/s 00:00

log4j-cli.properties 100% 2643 8.5MB/s 00:00

log4j-console.properties 100% 2755 10.1MB/s 00:00

log4j.properties 100% 2130 8.3MB/s 00:00

log4j-session.properties 100% 1850 8.1MB/s 00:00

logback-console.xml 100% 2740 11.7MB/s 00:00

logback-session.xml 100% 1550 6.9MB/s 00:00

logback.xml 100% 2331 10.2MB/s 00:00

masters 100% 50 66.8KB/s 00:00

sql-client-defaults.yaml 100% 5435 16.4MB/s 00:00

workers 100% 40 189.5KB/s 00:00

zoo.cfg 100% 1434 6.2MB/s 00:00

[root@hadoop4 conf]#
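
Repeating the scp command by hand for each host gets tedious as the cluster grows. One possible approach is to generate the commands in a loop and review them before running anything. The sketch below only prints the commands; print_sync_commands is a hypothetical helper name, and the root user and paths simply mirror the transcript above.

```shell
#!/bin/sh
# print_sync_commands CONF_DIR HOST...: print one scp command per target host.
# Dry run only: nothing is copied unless the output is piped to sh.
print_sync_commands() {
    conf_dir=$1
    shift
    for host in "$@"; do
        # The remote path mirrors the local one, as in the manual scp above.
        echo "scp $conf_dir/* root@$host:$conf_dir/"
    done
}

print_sync_commands /home/admin/installed/flink-1.11.1/conf hadoop5 hadoop6
```

Once the printed commands look right, piping the output to sh performs the copy. Keeping the default as a dry run avoids overwriting a node's configuration by accident.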

1.5.3.3. Configuring Environment Variables

vim /etc/profile

Add the following:

# Hadoop settings

export HADOOP_HOME=/usr/hdp/current/hadoop-client

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

export HADOOP_CLASSPATH=`hadoop classpath`
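
The last export runs the hadoop command, so it only works when hadoop is already on PATH; otherwise HADOOP_CLASSPATH ends up empty. A more defensive variant for /etc/profile could look like the sketch below, where set_hadoop_classpath is a hypothetical function name chosen for illustration.

```shell
#!/bin/sh
# set_hadoop_classpath: export HADOOP_CLASSPATH from `hadoop classpath`,
# but only when the hadoop command is actually available on PATH.
set_hadoop_classpath() {
    if command -v hadoop >/dev/null 2>&1; then
        HADOOP_CLASSPATH=$(hadoop classpath)
        export HADOOP_CLASSPATH
    else
        # Leave the variable untouched and report the problem on stderr.
        echo "hadoop not found on PATH; HADOOP_CLASSPATH left unchanged" >&2
        return 1
    fi
}
```

If the function reports that hadoop is missing, adding $HADOOP_HOME/bin to PATH before calling it is the usual remedy.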

1.5.3.4. Startup

Start the Hadoop services first: sbin/start-all.sh

Start the ZooKeeper service: bin/zkServer.sh start

Then start the Flink standalone HA cluster by running the following on hadoop4:

[root@hadoop4 flink-1.11.1]# bin/start-cluster.sh

Starting HA cluster with 2 masters.

Starting standalonesession daemon on host hadoop4.

Starting standalonesession daemon on host hadoop5.

Starting taskexecutor daemon on host hadoop5.

Starting taskexecutor daemon on host hadoop6.

To stop the cluster:

[root@hadoop4 flink-1.11.1]# bin/stop-cluster.sh

Stopping taskexecutor daemon (pid: 23807) on host hadoop5.

Stopping taskexecutor daemon (pid: 9631) on host hadoop6.

Stopping standalonesession daemon (pid: 4664) on host hadoop4.

Stopping standalonesession daemon (pid: 23265) on host hadoop5.

[root@hadoop4 flink-1.11.1]#

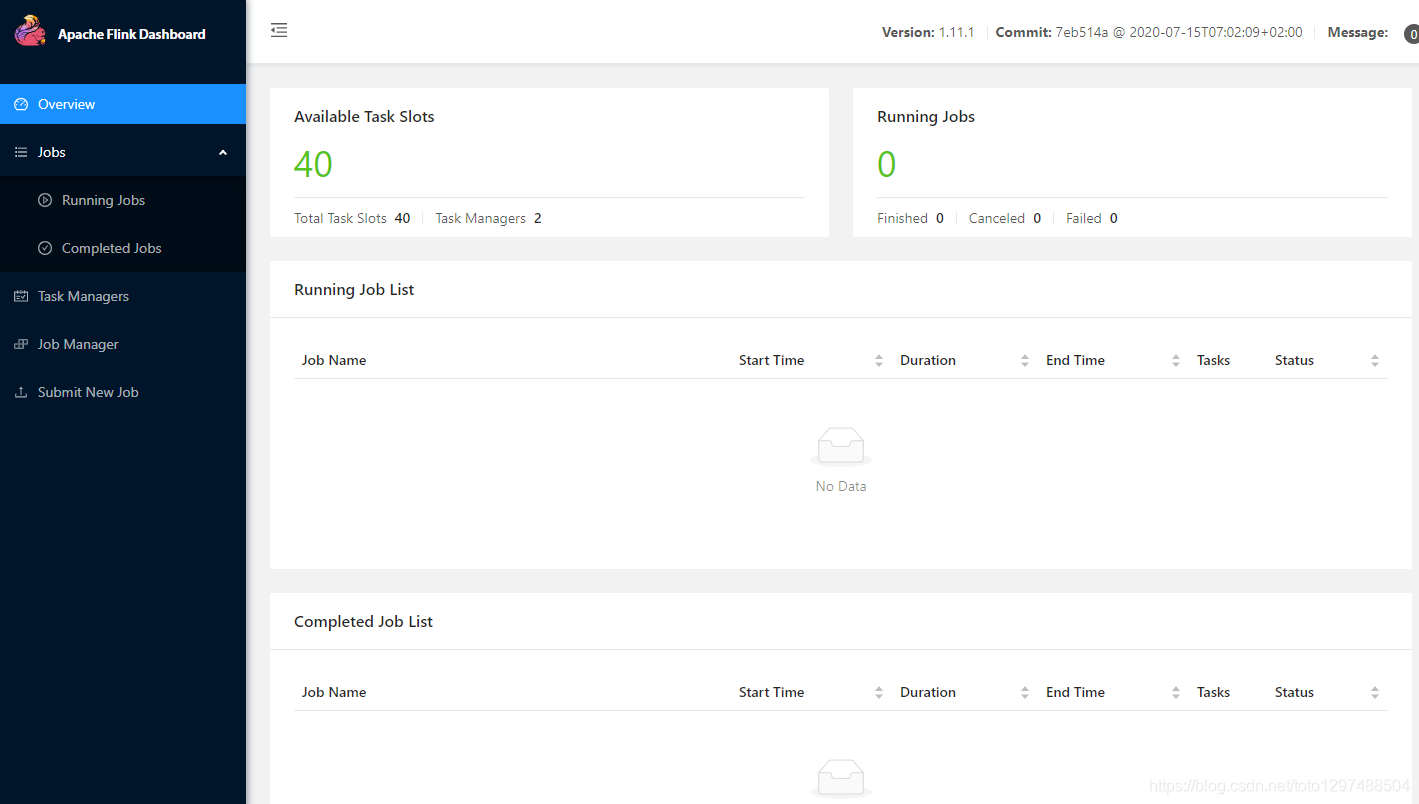

访问web界面:

在hadoop4-3节点上可以看到:

[root@hadoop4 flink-1.11.1]# jps

9916 StandaloneSessionClusterEntrypoint

[root@hadoop5 ~]# jps

29072 TaskManagerRunner

28696 StandaloneSessionClusterEntrypoint

[root@hadoop6 ~]# jps

13182 TaskManagerRunner
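
Checking the jps output by eye works for three nodes. As a small sketch, the same check can be scripted by counting the two main-class names that appear above; count_flink_daemons is a hypothetical helper name.

```shell
#!/bin/sh
# count_flink_daemons: read jps output on stdin and print
# "<jobmanagers> <taskmanagers>" based on the main-class names.
count_flink_daemons() {
    awk '
        $2 == "StandaloneSessionClusterEntrypoint" { jm++ }
        $2 == "TaskManagerRunner"                  { tm++ }
        END { printf "%d %d\n", jm, tm }
    '
}
```

Running jps | count_flink_daemons on hadoop5 in this setup should print "1 1", since that node hosts both a JobManager and a TaskManager.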

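1.5.4. Flink on YARN Cluster HA Configuration

1.5.4.1. HA Cluster Environment Planning

HA for Flink on YARN simply builds on YARN's own recovery mechanism.

ZooKeeper is still required. Although Flink-on-YARN cluster HA depends on YARN's own mechanism, a Flink job recovers from the snapshots produced by checkpoints. Those snapshots are stored on HDFS, but their metadata is kept in ZooKeeper, so the ZooKeeper settings must be configured as well.

The Hadoop cluster runs on hadoop4, hadoop5, and hadoop6. (For Flink on YARN, a pseudo-distributed Hadoop cluster and a fully distributed one are operated in the same way.)

The ZooKeeper service also runs on hadoop4.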

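1.5.4.2. Configuration and Startup

First, edit yarn-site.xml in Hadoop to set the maximum number of application attempts.

<property>

<name>yarn.resourcemanager.am.max-attempts</name>

<value>4</value>

<description>The maximum number of application master execution attempts.</description>

</property>

Sync the modified configuration file to the other nodes of the Hadoop cluster.

Optionally, unpack a fresh copy of the flink-1.11.1 package: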

[root@hadoop4 software]# pwd

/home/admin/software

[root@hadoop4 software]# tar -zxvf flink-1.11.1-bin-scala_2.12.tgz -C ../installed/

Edit the configuration file. It is recommended to use directory names that differ from those in the standalone HA configuration.

vim conf/flink-conf.yaml

################################################################################

# Licensed to the Apache Software Foundation (ASF) under one

# or more contributor license agreements. See the NOTICE file

# distributed with this work for additional information

# regarding copyright ownership. The ASF licenses this file

# to you under the Apache License, Version 2.0 (the

# "License"); you may not use this file except in compliance

# with the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

################################################################################

#==============================================================================

# Common

#==============================================================================

# The external address of the host on which the JobManager runs and can be

# reached by the TaskManagers and any clients which want to connect. This setting

# is only used in Standalone mode and may be overwritten on the JobManager side

# by specifying the --host <hostname> parameter of the bin/jobmanager.sh executable.

# In high availability mode, if you use the bin/start-cluster.sh script and setup

# the conf/masters file, this will be taken care of automatically. Yarn/Mesos

# automatically configure the host name based on the hostname of the node where the

# JobManager runs.

# Node on which the JobManager runs

jobmanager.rpc.address: hadoop6

# The RPC port where the JobManager is reachable.

# JobManager port; the default is 6123

jobmanager.rpc.port: 6123

# The total process memory size for the JobManager.

#

# Note this accounts for all memory usage within the JobManager process, including JVM metaspace and other overhead.

# jobmanager.memory.process.size: 1600m

jobmanager.memory.process.size: 1024m

# The total process memory size for the TaskManager.

#

# Note this accounts for all memory usage within the TaskManager process, including JVM metaspace and other overhead.

# taskmanager.memory.process.size: 1728m

taskmanager.memory.process.size: 1024m

# To exclude JVM metaspace and overhead, please, use total Flink memory size instead of 'taskmanager.memory.process.size'.

# It is not recommended to set both 'taskmanager.memory.process.size' and Flink memory.

#

# taskmanager.memory.flink.size: 1280m

# The number of task slots that each TaskManager offers. Each slot runs one parallel pipeline.

# Slots per TaskManager (set here to the number of CPU cores on the machine)

taskmanager.numberOfTaskSlots: 3

# The parallelism used for programs that did not specify any other parallelism.

# Default parallelism for submitted applications (the total number of CPU cores the application uses)

parallelism.default: 1

# The default file system scheme and authority.

#

# By default file paths without scheme are interpreted relative to the local

# root file system 'file:///'. Use this to override the default and interpret

# relative paths relative to a different file system,

# for example 'hdfs://mynamenode:12345'

#

# fs.default-scheme

#==============================================================================

# High Availability

#==============================================================================

# The high-availability mode. Possible options are 'NONE' or 'zookeeper'.

#

high-availability: zookeeper

# The path where metadata for master recovery is persisted. While ZooKeeper stores

# the small ground truth for checkpoint and leader election, this location stores

# the larger objects, like persisted dataflow graphs.

#

# Must be a durable file system that is accessible from all nodes

# (like HDFS, S3, Ceph, nfs, ...)

#

high-availability.storageDir: hdfs://tqHadoopCluster/flink/ha-yarn

# The list of ZooKeeper quorum peers that coordinate the high-availability

# setup. This must be a list of the form:

# "host1:clientPort,host2:clientPort,..." (default clientPort: 2181)

#

high-availability.zookeeper.quorum: hadoop4:2181,hadoop5:2181,hadoop6:2181

high-availability.zookeeper.path.root: /flink-yarn

yarn.application-attempts: 10

# ACL options are based on https://zookeeper.apache.org/doc/r3.1.2/zookeeperProgrammers.html#sc_BuiltinACLSchemes

# It can be either "creator" (ZOO_CREATE_ALL_ACL) or "open" (ZOO_OPEN_ACL_UNSAFE)

# The default value is "open" and it can be changed to "creator" if ZK security is enabled

#

# high-availability.zookeeper.client.acl: open

#==============================================================================

# Fault tolerance and checkpointing

#==============================================================================

# The backend that will be used to store operator state checkpoints if

# checkpointing is enabled.

#

# Supported backends are 'jobmanager', 'filesystem', 'rocksdb', or the

# <class-name-of-factory>.

#

state.backend: filesystem

# Directory for checkpoints filesystem, when using any of the default bundled

# state backends.

#

state.checkpoints.dir: hdfs://tqHadoopCluster/flink-checkpoints

# Default target directory for savepoints, optional.

#

state.savepoints.dir: hdfs://tqHadoopCluster/flink-checkpoints

# Flag to enable/disable incremental checkpoints for backends that

# support incremental checkpoints (like the RocksDB state backend).

#

# state.backend.incremental: false

# The failover strategy, i.e., how the job computation recovers from task failures.

# Only restart tasks that may have been affected by the task failure, which typically includes

# downstream tasks and potentially upstream tasks if their produced data is no longer available for consumption.

jobmanager.execution.failover-strategy: region

#==============================================================================

# Rest & web frontend

#==============================================================================

# The port to which the REST client connects to. If rest.bind-port has

# not been specified, then the server will bind to this port as well.

# Flink web UI port (the default is 8081)

rest.port: 38081

# The address to which the REST client will connect to

#

#rest.address: 0.0.0.0

# Port range for the REST and web server to bind to.

#

rest.bind-port: 38090-38200

# The address that the REST & web server binds to

#

rest.bind-address: hadoop6

# Flag to specify whether job submission is enabled from the web-based

# runtime monitor. Uncomment to disable.

#web.submit.enable: false

#==============================================================================

# Advanced

#==============================================================================

# Override the directories for temporary files. If not specified, the

# system-specific Java temporary directory (java.io.tmpdir property) is taken.

#

# For framework setups on Yarn or Mesos, Flink will automatically pick up the

# containers' temp directories without any need for configuration.

#

# Add a delimited list for multiple directories, using the system directory

# delimiter (colon ':' on unix) or a comma, e.g.:

# /data1/tmp:/data2/tmp:/data3/tmp

#

# Note: Each directory entry is read from and written to by a different I/O

# thread. You can include the same directory multiple times in order to create

# multiple I/O threads against that directory. This is for example relevant for

# high-throughput RAIDs.

#

# io.tmp.dirs: /tmp

# The classloading resolve order. Possible values are 'child-first' (Flink's default)

# and 'parent-first' (Java's default).

#

# Child first classloading allows users to use different dependency/library

# versions in their application than those in the classpath. Switching back

# to 'parent-first' may help with debugging dependency issues.

#

# classloader.resolve-order: child-first

# The amount of memory going to the network stack. These numbers usually need

# no tuning. Adjusting them may be necessary in case of an "Insufficient number

# of network buffers" error. The default min is 64MB, the default max is 1GB.

#

# taskmanager.memory.network.fraction: 0.1

# taskmanager.memory.network.min: 64mb

# taskmanager.memory.network.max: 1gb

#==============================================================================

# Flink Cluster Security Configuration

#==============================================================================

# Kerberos authentication for various components - Hadoop, ZooKeeper, and connectors -

# may be enabled in four steps:

# 1. configure the local krb5.conf file

# 2. provide Kerberos credentials (either a keytab or a ticket cache w/ kinit)

# 3. make the credentials available to various JAAS login contexts

# 4. configure the connector to use JAAS/SASL

# The below configure how Kerberos credentials are provided. A keytab will be used instead of

# a ticket cache if the keytab path and principal are set.

# security.kerberos.login.use-ticket-cache: true

# security.kerberos.login.keytab: /path/to/kerberos/keytab

# security.kerberos.login.principal: flink-user

# The configuration below defines which JAAS login contexts

# security.kerberos.login.contexts: Client,KafkaClient

#==============================================================================

# ZK Security Configuration

#==============================================================================

# Below configurations are applicable if ZK ensemble is configured for security

# Override below configuration to provide custom ZK service name if configured

# zookeeper.sasl.service-name: zookeeper

# The configuration below must match one of the values set in "security.kerberos.login.contexts"

# zookeeper.sasl.login-context-name: Client

#==============================================================================

# HistoryServer

#==============================================================================

# The HistoryServer is started and stopped via bin/historyserver.sh (start|stop)

# Directory to upload completed jobs to. Add this directory to the list of

# monitored directories of the HistoryServer as well (see below).

# HDFS runs in HA mode, so the nameservice tqHadoopCluster is used as the authority

jobmanager.archive.fs.dir: hdfs://tqHadoopCluster/flink-logs

# The address under which the web-based HistoryServer listens.

# HistoryServer web UI address (this hostname must be mapped in the local hosts file)

historyserver.web.address: hadoop6

# The port under which the web-based HistoryServer listens.

historyserver.web.port: 28082

# Comma separated list of directories to monitor for completed jobs.

# Keep this value identical to "jobmanager.archive.fs.dir"

historyserver.archive.fs.dir: hdfs://tqHadoopCluster/flink-logs

# Interval in milliseconds for refreshing the monitored directories.

# Refresh interval of the HistoryServer

historyserver.archive.fs.refresh-interval: 10000
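
The two attempt limits used in this section interact. YARN's yarn.resourcemanager.am.max-attempts is an upper bound on application master restarts, so Flink's yarn.application-attempts cannot take effect beyond it. With the values shown here (10 in flink-conf.yaml, 4 in yarn-site.xml), the smaller value wins. A minimal sketch of that relationship follows; effective_attempts is a hypothetical helper, not a Flink or YARN command.

```shell
#!/bin/sh
# effective_attempts FLINK_ATTEMPTS YARN_MAX: print the smaller of the two values.
# Illustrates that the YARN-side limit caps the Flink-side setting.
effective_attempts() {
    if [ "$1" -le "$2" ]; then
        echo "$1"
    else
        echo "$2"
    fi
}

# Values from this walkthrough: yarn.application-attempts=10, am.max-attempts=4.
effective_attempts 10 4   # prints 4
```

To actually allow 10 attempts, the YARN-side limit would have to be raised to at least 10 as well.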

Edit conf/masters:

hadoop5:38081

hadoop6:38081

Edit conf/workers:

hadoop4

hadoop5

hadoop6

Set the environment variables:

# Java, Maven, and Ant settings

export JAVA_HOME=/usr/java/jdk1.8.0_221-amd64

export MAVEN_HOME=/usr/local/apache-maven-3.6.1

export ANT_HOME=/usr/local/apache-ant-1.10.5

export PATH=$JAVA_HOME/bin:$MAVEN_HOME/bin:$ANT_HOME/bin:$PATH

# Scala environment variables

export SCALA_HOME=/home/admin/installed/scala-2.12.12

export PATH=$PATH:$SCALA_HOME/bin

# Flink environment variables

export FLINK_HOME=/home/admin/installed/flink-1.11.1

export PATH=$PATH:$FLINK_HOME/bin

# Hadoop settings

export HADOOP_HOME=/usr/hdp/current/hadoop-client

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

export HADOOP_CLASSPATH=`hadoop classpath`

1.5.4.3. Starting Flink on YARN and Testing HA

Start ZooKeeper and the Hadoop cluster first.

bin/zkServer.sh start

sbin/start-all.sh

1.5.4.4. Starting the Flink Cluster on hadoop4

[root@hadoop4 flink-1.11.1]# cd $FLINK_HOME/

[root@hadoop4 flink-1.11.1]# $FLINK_HOME/bin/yarn-session.sh -d -nm yarnsession02 -n 2 -s 3 -jm 1024m -tm 2048m

A Flink job can also be run directly on YARN, as follows:

[root@hadoop4 flink-1.11.1]# ./bin/flink run -m yarn-cluster ./examples/batch/WordCount.jar

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/home/admin/installed/flink-1.11.1/lib/log4j-slf4j-impl-2.12.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx

Job Runtime: 11670 ms

Accumulator Results:

- 8ee108b283c043ae51e7287842b98267 (java.util.ArrayList) [170 elements]

(a,5)

(action,1)

(after,1)

(against,1)

(all,2)

(and,12)

(arms,1)

(arrows,1)

(awry,1)

(ay,1)

(bare,1)

(be,4)

(bear,3)

(you,1)
