本文主要是介绍【零基础强化学习】100行代码教你实现基于DQN的gym登山车,希望对大家解决编程问题提供一定的参考价值,需要的开发者们随着小编来一起学习吧!

基于DQN的gym登山车🤔

- 写在前面

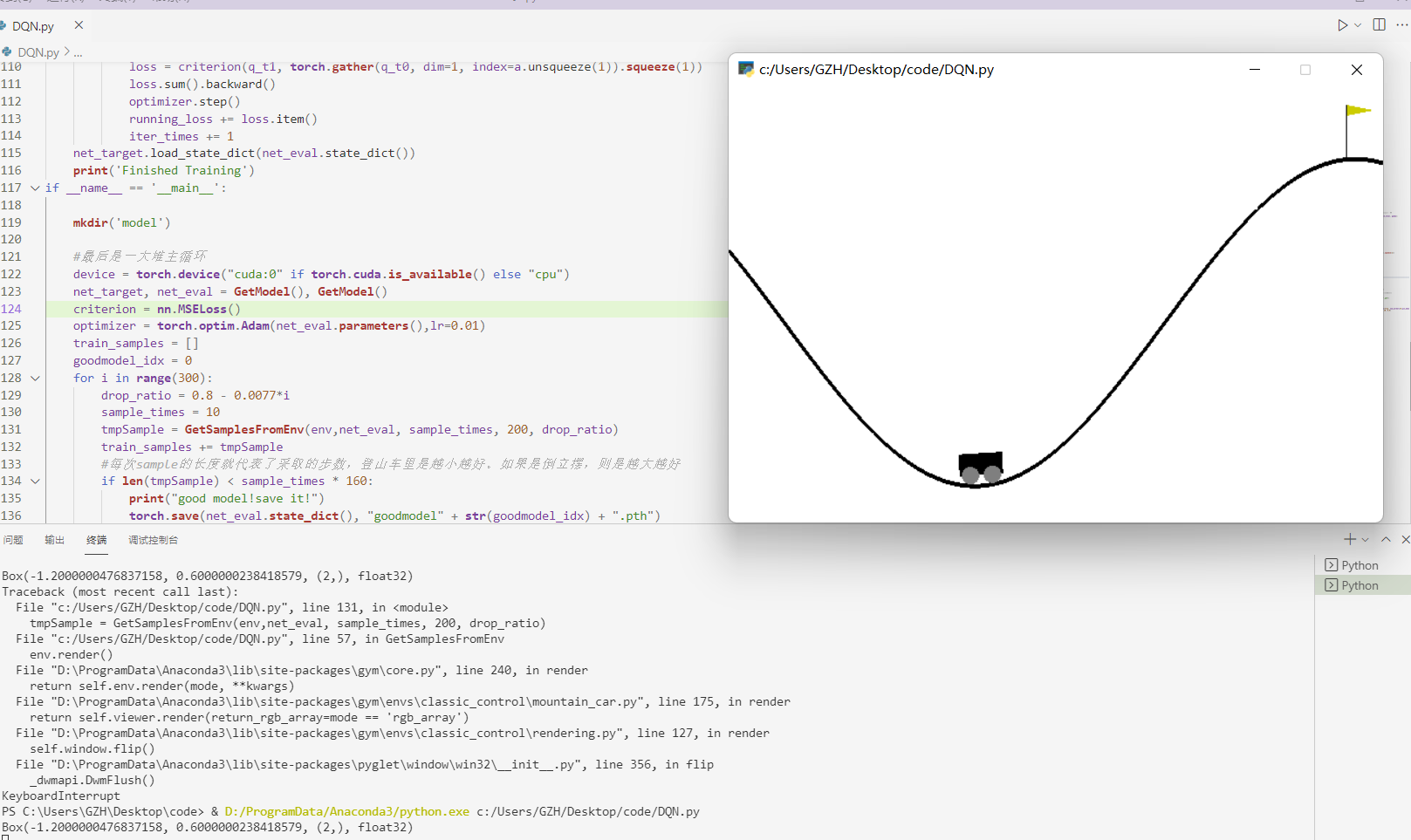

- show me code, no bb

- 界面展示

- 写在最后

- 谢谢点赞交流!(❁´◡`❁)

更多代码: gitee主页:https://gitee.com/GZHzzz

博客主页: CSDN:https://blog.csdn.net/gzhzzaa

写在前面

作为一个新手,写这个强化学习-基础知识专栏是想和大家分享一下自己强化学习的学习历程,希望大家互相交流一起进步。希望自己在2022年能保证把强化学习基础概念都过一遍,主要是成体系介绍强化学习的基础知识,而且在gitee收集了强化学习经典论文和基于pytorch的经典模型 ,大家一起互相学习啊!可能会有很多错漏,希望大家批评指正!不要高估一年的努力,也不要低估十年的积累,与君共勉!

show me code, no bb

#这是一堆初始化

import gym

import random

import torch

import torch.nn as nn

from torch.utils.data import Dataset

import os

#env = gym.make('CartPole-v0')

env = gym.make('MountainCar-v0') #action = (0,1,2) = (left, no_act, right)

#env = gym.make('Hopper-v3')

print(env.observation_space)

#print(env.action_space)

#简单的线性模型

def mkdir(path):folder = os.path.exists(path)if not folder: os.makedirs(path)

def GetModel():#In features:2(state) ,out:3 action qreturn nn.Sequential(nn.Linear(2, 16), nn.LeakyReLU(inplace=True), nn.Linear(16,24),nn.LeakyReLU(inplace=True), nn.Linear(24,3))

#创建数据集

class RLDataset(Dataset):def __init__(self, samples, transform = None, target_transform = None):#samples = [(s,a,r,s_), ...]self.samples = self.transform(samples)def __getitem__(self, index):#if self.transform is not None:# img = self.transform(img) return self.samples[index]def __len__(self):return len(self.samples)def transform(self, samples):transSamples = []for (s,a,r,s_) in samples:sT = torch.tensor(s,).to(torch.float32)sT_ = torch.tensor(s_).to(torch.float32)transSamples.append((sT, a, r, sT_))return transSamples#采样环境函数,可以设置随机操作的概率。重点在于reward的设计

def GetSamplesFromEnv(env, model, epoch, max_steps, drop_ratio = 0.8):train_samples = []each_sample = Noneenv.reset()observation_new = Noneobservation_old = Nonemodel.eval()for i_episode in range(epoch):observation_new = env.reset()observation_old = env.reset()for t in range(max_steps):env.render()#print(observation)if random.random() > 1-drop_ratio:action = env.action_space.sample()else:inputT = torch.tensor(observation_new).to(torch.float32)action = torch.argmax(model(inputT)).item()#print(action)observation_new, reward, done, info = env.step(action)#print(reward)#We record samples.if t > 0 :#reward += observation_new[0]#if observation_new[0] > -0.35:# reward += (observation_new[0] + 0.36)*5if observation_new[0] > -0.2:reward += 0.2elif observation_new[0] > -0.15:reward += 0.5elif observation_new[0] > -0.1:reward += 0.7each_sample = (observation_old, action, reward, observation_new)train_samples.append(each_sample)observation_old = observation_newif done:#失败的采样不打印出来if t != 199:print("Episode finished after {} timesteps".format(t+1))breakreturn train_samples

#训练网络。这里可能gather函数比较绕,还有双网络更新比较费解。忽略掉这些,和正常训练循环一样

#gamma是贝尔曼方程里的衰减因子

def TrainNet(net_target, net_eval, trainloader, criterion, optimizer, device, epoch_total, gamma):running_loss = 0.0iter_times = 0net_target.eval()net_eval.train()for epoch in range(epoch_total + 1):if epoch > 0: print('epoch %d, loss %.5f' % (epoch, running_loss))running_loss = 0.0if epoch == epoch_total: break for i, data in enumerate(trainloader, 0):if iter_times % 100 == 0:net_target.load_state_dict(net_eval.state_dict())s,a,r,s_ = dataoptimizer.zero_grad()#output = Q_predicted.q_t0 = net_eval(s)q_t1 = net_target(s_).detach()q_t1 = gamma * (r + torch.max(q_t1,dim=1)[0]).to(torch.float32)loss = criterion(q_t1, torch.gather(q_t0, dim=1, index=a.unsqueeze(1)).squeeze(1))loss.sum().backward()optimizer.step()running_loss += loss.item()iter_times += 1net_target.load_state_dict(net_eval.state_dict()) print('Finished Training')

if __name__ == '__main__':mkdir('model')#最后是一大堆主循环device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")net_target, net_eval = GetModel(), GetModel()criterion = nn.MSELoss()optimizer = torch.optim.Adam(net_eval.parameters(),lr=0.01)train_samples = []goodmodel_idx = 0for i in range(300):drop_ratio = 0.8 - 0.0077*isample_times = 10tmpSample = GetSamplesFromEnv(env,net_eval, sample_times, 200, drop_ratio)train_samples += tmpSample#每次sample的长度就代表了采取的步数,登山车里是越小越好。如果是倒立摆,则是越大越好if len(tmpSample) < sample_times * 160:print("good model!save it!")torch.save(net_eval.state_dict(), "goodmodel" + str(goodmodel_idx) + ".pth")goodmodel_idx += 1#dataset里存着最新的不超过4000的样本if len(train_samples) > 4000:train_samples = train_samples[len(tmpSample):len(train_samples)]trainset = RLDataset(train_samples)trainloader = torch.utils.data.DataLoader(trainset, batch_size=64, shuffle=True, num_workers=0,pin_memory=True)TrainNet(net_target, net_eval, trainloader, criterion, optimizer, device, 10, 0.9)if i%50 == 0:PATH = "model/model"+str(i)+".pth"torch.save(net_eval.state_dict(), PATH)env.close()#这一堆是测试看效果用的#PATH = 'model/model42.pth'#net_eval.load_state_dict(torch.load(PATH))#net_target.load_state_dict(torch.load(PATH))#GetSamplesFromEnv(env,net_eval, 20, 200, 0)- 自己过了一遍,代码可直接跑通😎,包括模型保存,模型测试,你懂的!

界面展示

写在最后

十年磨剑,与君共勉!

更多代码:gitee主页:https://gitee.com/GZHzzz

博客主页:CSDN:https://blog.csdn.net/gzhzzaa

- Fighting!😎

while True:Go life

谢谢点赞交流!(❁´◡`❁)

这篇关于【零基础强化学习】100行代码教你实现基于DQN的gym登山车的文章就介绍到这儿,希望我们推荐的文章对编程师们有所帮助!