[每日一氵] Nsight Systems (nsys) usage notes and CUDA program optimization. Hopefully this serves as a useful reference when you run into similar problems.

Most of the content comes from this course page:

https://www.nvidia.cn/training/instructor-led-workshops/

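Nsight Systems (nsys) turns out to be really useful. I used to skip it every time I installed CUDA, although to be fair it is not needed just to set up a deep learning environment [doge].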

Compile and run the file like this:

nvcc -o xx xx.cu -run

Use nsys like this:

# Profile the freshly compiled executable with nsys profile

nsys profile --stats=true ./xx

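nsys profile generates a qdrep report file, which can be used in several ways. Here the --stats=true flag asks nsys to print summary statistics. The output is extensive and includes:

- Profile configuration details

- Report file generation details

- CUDA API statistics

- CUDA kernel statistics

- CUDA memory operation statistics (by time and by size)

- Operating system runtime API statistics

For example: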

Warning: LBR backtrace method is not supported on this platform. DWARF backtrace method will be used.

Collecting data...

Success! All values calculated correctly.

Processing events...

Capturing symbol files...

Saving temporary "/tmp/nsys-report-a10b-4a77-7d5c-f462.qdstrm" file to disk...

Creating final output files...Processing [==============================================================100%]

Saved report file to "/tmp/nsys-report-a10b-4a77-7d5c-f462.qdrep"

Exporting 22723 events: [=================================================100%]

Exported successfully to /tmp/nsys-report-a10b-4a77-7d5c-f462.sqlite

CUDA API Statistics:

Time(%)  Total Time (ns)  Num Calls      Average    Minimum    Maximum  Name
-------  ---------------  ---------  -----------  ---------  ---------  ---------------------
   55.9        220024635          3   73341545.0      35564  219942207  cudaMallocManaged
   39.1        154081013          1  154081013.0  154081013  154081013  cudaDeviceSynchronize
    5.0         19599393          3    6533131.0    5868170    7536695  cudaFree
    0.0            54357          1      54357.0      54357      54357  cudaLaunchKernel

CUDA Kernel Statistics:

Time(%)  Total Time (ns)  Instances      Average    Minimum    Maximum  Name
-------  ---------------  ---------  -----------  ---------  ---------  -------------------------------------------
  100.0        154061080          1  154061080.0  154061080  154061080  addVectorsInto(float*, float*, float*, int)

CUDA Memory Operation Statistics (by time):

Time(%)  Total Time (ns)  Operations  Average  Minimum  Maximum  Operation
-------  ---------------  ----------  -------  -------  -------  ---------------------------------
   82.6         99842969       20879   4782.0     1823   169216  [CUDA Unified Memory memcpy HtoD]
   17.4         21020960         768  27371.0     1375   159872  [CUDA Unified Memory memcpy DtoH]

CUDA Memory Operation Statistics (by size in KiB):

     Total  Operations  Average  Minimum   Maximum  Operation
----------  ----------  -------  -------  --------  ---------------------------------
393216.000       20879   18.833    4.000  1012.000  [CUDA Unified Memory memcpy HtoD]
131072.000         768  170.667    4.000  1020.000  [CUDA Unified Memory memcpy DtoH]

Operating System Runtime API Statistics:

Time(%)  Total Time (ns)  Num Calls     Average  Minimum    Maximum  Name
-------  ---------------  ---------  ----------  -------  ---------  --------------
   53.9       1349784189         74  18240326.9    24368  100131135  poll
   41.7       1042453633         74  14087211.3    15428  100074482  sem_timedwait
    3.5         87328279        587    148770.5     1023   16811695  ioctl
    0.9         21850661         90    242785.1     1235    7474212  mmap
    0.0           624849         77      8114.9     2460      18975  open64
    0.0           113233          4     28308.3    23925      32553  pthread_create
    0.0           107072         23      4655.3     1296      13371  fopen
    0.0            86168          3     28722.7    20436      43529  fgets
    0.0            85314         11      7755.8     4313      13945  write
    0.0            40344         14      2881.7     1294       4315  munmap
    0.0            29311         16      1831.9     1057       3519  fclose
    0.0            27759          5      5551.8     2789       8032  open
    0.0            26388         13      2029.8     1114       3558  read
    0.0            16141          3      5380.3     3831       6160  pipe2
    0.0             8240          2      4120.0     3544       4696  socket
    0.0             7423          2      3711.5     1435       5988  fgetc
    0.0             6363          4      1590.8     1442       1841  mprotect
    0.0             6290          2      3145.0     2664       3626  fread
    0.0             5900          1      5900.0     5900       5900  connect
    0.0             4790          2      2395.0     1221       3569  fcntl
    0.0             1913          1      1913.0     1913       1913  bind
    0.0             1418          1      1418.0     1418       1418  listen

Report file moved to "/xxx/task/report3.qdrep"

Report file moved to "/xxx/task/report3.sqlite"
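The source of xx.cu is not shown in this post. As a rough sketch only, a program along the following lines would produce a profile of the same shape: three cudaMallocManaged calls, one launch of addVectorsInto, one cudaDeviceSynchronize, and three cudaFree calls. The array length N = 2<<24, the launch configuration, and the helper names initWith and countMismatches are assumptions, not taken from the original. With 2<<24 floats, each array is 128 MiB, which would be consistent with the 393216 KiB of HtoD traffic (three host-initialized arrays migrating to the GPU) and the 131072 KiB of DtoH traffic (the result array being read back on the host).

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Fill an array on the host. With managed memory this places the pages on the CPU.
void initWith(float value, float *a, int N) {
  for (int i = 0; i < N; ++i) a[i] = value;
}

// Element-wise add; the loop lets any grid size cover all N elements.
__global__ void addVectorsInto(float *result, float *a, float *b, int N) {
  int index = threadIdx.x + blockIdx.x * blockDim.x;
  int stride = blockDim.x * gridDim.x;
  for (int i = index; i < N; i += stride) result[i] = a[i] + b[i];
}

// Count the elements that differ from the expected value (0 means success).
int countMismatches(float expected, const float *v, int N) {
  int bad = 0;
  for (int i = 0; i < N; ++i) {
    if (v[i] != expected) ++bad;
  }
  return bad;
}

int main() {
  const int N = 2 << 24;                  // assumed size: 128 MiB per float array
  const size_t size = N * sizeof(float);

  float *a, *b, *c;
  cudaMallocManaged(&a, size);
  cudaMallocManaged(&b, size);
  cudaMallocManaged(&c, size);

  // Host-side initialization: all three arrays start out resident on the CPU.
  initWith(3.0f, a, N);
  initWith(4.0f, b, N);
  initWith(0.0f, c, N);

  const int threadsPerBlock = 256;        // assumed launch configuration
  const int numberOfBlocks = (N + threadsPerBlock - 1) / threadsPerBlock;

  // The kernel's first touch of each page triggers on-demand HtoD migration.
  addVectorsInto<<<numberOfBlocks, threadsPerBlock>>>(c, a, b, N);
  cudaDeviceSynchronize();

  // Reading c on the host migrates it back (DtoH).
  if (countMismatches(7.0f, c, N) == 0) {
    printf("Success! All values calculated correctly.\n");
  } else {
    printf("Some values were calculated incorrectly.\n");
  }

  cudaFree(a);
  cudaFree(b);
  cudaFree(c);
  return 0;
}
```

Read this way, the kernel time in the profile is likely dominated by page migration rather than arithmetic, which is what the optimizations discussed later in the post aim to remove.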

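The number of SMs differs from one GPU model to another, so for portability the SM count should not be hard-coded. Query it programmatically instead.

The following shows how to obtain, in CUDA C/C++, a C struct holding many properties of the currently active GPU device, including its SM count: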

int deviceId;
cudaGetDevice(&deviceId);                  // `deviceId` now points to the id of the currently active GPU.

cudaDeviceProp props;
cudaGetDeviceProperties(&props, deviceId); // `props` now has many useful properties about
                                           // the active GPU device.

The full list of property names is documented here:

https://docs.nvidia.com/cuda/cuda-runtime-api/structcudaDeviceProp.html

Querying the properties looks like this:

#include <stdio.h>

int main()
{
  /*
   * Assign values to these variables so that the output string below prints the
   * requested properties of the currently active GPU.
   */
  int deviceId;
  int computeCapabilityMajor;
  int computeCapabilityMinor;
  int multiProcessorCount;
  int warpSize;

  cudaGetDevice(&deviceId);

  cudaDeviceProp prop;
  cudaGetDeviceProperties(&prop, deviceId);

  warpSize = prop.warpSize;
  multiProcessorCount = prop.multiProcessorCount;
  computeCapabilityMajor = prop.major;
  computeCapabilityMinor = prop.minor;

  /*
   * There should be no need to modify the output string below.
   */
  printf("Device ID: %d\nNumber of SMs: %d\nCompute Capability Major: %d\nCompute Capability Minor: %d\nWarp Size: %d\n", deviceId, multiProcessorCount, computeCapabilityMajor, computeCapabilityMinor, warpSize);
}
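One common use of the queried values is sizing a kernel launch. The sketch below is only an illustration: the helper name gridSizeForSMs, the factor of 32 blocks per SM, and the choice of 8 warps per block are assumptions to be tuned with the profiler, not values prescribed by the post or by CUDA.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Hypothetical helper: a grid size that is a whole multiple of the SM count,
// so that blocks can be spread evenly across the SMs.
int gridSizeForSMs(int smCount, int blocksPerSM) {
  return smCount * blocksPerSM;
}

int main() {
  int deviceId = 0;
  cudaGetDevice(&deviceId);

  cudaDeviceProp props;
  if (cudaGetDeviceProperties(&props, deviceId) != cudaSuccess) {
    fprintf(stderr, "Could not query device properties.\n");
    return 1;
  }

  // Assumed tuning values: 8 warps per block, 32 blocks per SM.
  const int threadsPerBlock = 8 * props.warpSize;
  const int numberOfBlocks = gridSizeForSMs(props.multiProcessorCount, 32);

  printf("SMs: %d, blocks: %d, threads per block: %d\n",
         props.multiProcessorCount, numberOfBlocks, threadsPerBlock);
  return 0;
}
```

Because the grid size no longer depends on the problem size, a kernel launched this way needs a loop inside it that walks over all elements, otherwise some elements may never be processed.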

Also, make sure you really understand the following:

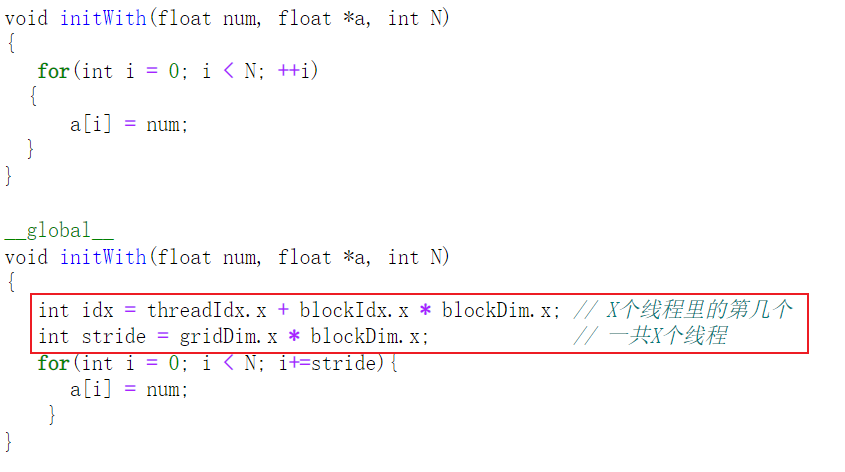

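Optimizing a CUDA program generally comes down to the following:

- Initialize the data on the GPU, or use cudaMemPrefetchAsync to move it to the GPU asynchronously before the kernel runs and back to the CPU afterward.

- Modify the kernel to use a stride, as in the screenshot above.

- Read the number of SMs on the GPU with cudaDeviceGetAttribute(&numberOfSMs, cudaDevAttrMultiProcessorCount, deviceId); and use it to choose the blocks-per-grid and threads-per-block values passed to the kernel.

- Besides saving GPU time, save developer time too. Adding the following error checks costs almost nothing at run time:

cudaError_t kernelFuncErrs;
cudaError_t asyncErr;

kernelFunc<<<numberOfBlocks, threadsPerBlock>>>(c, a, b, N);

// Errors from the launch itself, such as an invalid configuration.
kernelFuncErrs = cudaGetLastError();
if(kernelFuncErrs != cudaSuccess) printf("Error: %s\n", cudaGetErrorString(kernelFuncErrs));

// Errors that occur while the kernel is executing.
asyncErr = cudaDeviceSynchronize();
if(asyncErr != cudaSuccess) printf("Error: %s\n", cudaGetErrorString(asyncErr));

- Then profile again with nsys profile --stats=true ./xx and keep iterating on the program. For details, see: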

https://docs.nvidia.com/cuda/cuda-c-best-practices-guide/index.html#memory-optimizations
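The directions listed above can be combined into one program. The following is a minimal sketch under several assumptions: the helper checkCuda, the kernel initWith, the array length, and the launch configuration of 256 threads and 32 blocks per SM are all illustrative and not from the original post. It also assumes the classic form of cudaMemPrefetchAsync that takes a destination device id (newer CUDA toolkits may expect a different argument list) and a platform where managed-memory prefetching is supported.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Hypothetical helper: print a labeled message on error, return true on success.
bool checkCuda(cudaError_t err, const char *what) {
  if (err != cudaSuccess) {
    fprintf(stderr, "%s failed: %s\n", what, cudaGetErrorString(err));
    return false;
  }
  return true;
}

// Initialize on the GPU so the inputs never have to migrate from the CPU.
__global__ void initWith(float value, float *a, int N) {
  int index = threadIdx.x + blockIdx.x * blockDim.x;
  int stride = blockDim.x * gridDim.x;
  for (int i = index; i < N; i += stride) a[i] = value;
}

// Each thread handles elements index, index + stride, index + 2 * stride, ...
__global__ void addVectorsInto(float *result, float *a, float *b, int N) {
  int index = threadIdx.x + blockIdx.x * blockDim.x;
  int stride = blockDim.x * gridDim.x;
  for (int i = index; i < N; i += stride) result[i] = a[i] + b[i];
}

int main() {
  int deviceId = 0;
  int numberOfSMs = 0;
  if (!checkCuda(cudaGetDevice(&deviceId), "cudaGetDevice")) return 1;
  if (!checkCuda(cudaDeviceGetAttribute(&numberOfSMs, cudaDevAttrMultiProcessorCount,
                                        deviceId), "cudaDeviceGetAttribute")) return 1;

  const int N = 2 << 24;                       // assumed problem size
  const size_t size = N * sizeof(float);
  float *a = nullptr, *b = nullptr, *c = nullptr;
  if (!checkCuda(cudaMallocManaged(&a, size), "cudaMallocManaged(a)")) return 1;
  if (!checkCuda(cudaMallocManaged(&b, size), "cudaMallocManaged(b)")) return 1;
  if (!checkCuda(cudaMallocManaged(&c, size), "cudaMallocManaged(c)")) return 1;

  // Place the pages on the GPU up front instead of faulting them in one by one.
  checkCuda(cudaMemPrefetchAsync(a, size, deviceId), "prefetch a");
  checkCuda(cudaMemPrefetchAsync(b, size, deviceId), "prefetch b");
  checkCuda(cudaMemPrefetchAsync(c, size, deviceId), "prefetch c");

  const int threadsPerBlock = 256;             // assumed tuning values
  const int numberOfBlocks = 32 * numberOfSMs;

  initWith<<<numberOfBlocks, threadsPerBlock>>>(3.0f, a, N);
  initWith<<<numberOfBlocks, threadsPerBlock>>>(4.0f, b, N);
  initWith<<<numberOfBlocks, threadsPerBlock>>>(0.0f, c, N);
  addVectorsInto<<<numberOfBlocks, threadsPerBlock>>>(c, a, b, N);

  bool ok = checkCuda(cudaGetLastError(), "kernel launch");
  ok = checkCuda(cudaDeviceSynchronize(), "kernel execution") && ok;

  // Move the result back in bulk before the host reads it.
  checkCuda(cudaMemPrefetchAsync(c, size, cudaCpuDeviceId), "prefetch c to CPU");
  checkCuda(cudaDeviceSynchronize(), "prefetch synchronize");

  int mismatches = 0;
  for (int i = 0; i < N; ++i) {
    if (c[i] != 7.0f) ++mismatches;
  }
  printf("%d mismatches\n", mismatches);

  cudaFree(a);
  cudaFree(b);
  cudaFree(c);
  return (ok && mismatches == 0) ? 0 : 1;
}
```

Profiling a version like this with nsys profile --stats=true should show whether the many small Unified Memory transfers have been replaced by fewer, larger ones. That comparison is the point of the exercise, so treat any expected speedup as something to measure rather than assume.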
