
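1. Description

Cross-entropy loss is one of the most commonly used loss functions for classification in neural networks. It measures the discrepancy between the model output y (raw, unnormalized scores for each class) and the ground-truth labels.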

torch.nn.CrossEntropyLoss(weight=None, size_average=None, ignore_index=-100, reduce=None, reduction='mean', label_smoothing=0.0)
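As a minimal sketch of how this signature is typically used, the module can be constructed once and then called on a batch of logits and targets. The tensor values below are illustrative assumptions, not taken from the article; the sketch also shows that a target equal to `ignore_index` is left out of the mean.

```python
import torch
from torch import nn
from torch.nn import functional as F

# Illustrative inputs (assumed values): 3 samples, 4 classes.
logits = torch.tensor([[2.0, 0.5, 0.1, -1.0],
                       [0.3, 1.7, 0.2, 0.0],
                       [1.0, 1.0, 1.0, 1.0]])
# The last target equals the default ignore_index (-100), so that sample is skipped.
targets = torch.tensor([0, 1, -100])

# Module form: configure once, then call like a function.
criterion = nn.CrossEntropyLoss(ignore_index=-100, reduction='mean')
loss_module = criterion(logits, targets)

# Functional form with the same arguments.
loss_functional = F.cross_entropy(logits, targets, ignore_index=-100, reduction='mean')

# Because the third sample is ignored, the mean runs over the first two samples only.
loss_first_two = F.cross_entropy(logits[:2], targets[:2])

print(loss_module.item(), loss_functional.item(), loss_first_two.item())
```

All three printed values should agree up to floating-point rounding.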

- Formula:

$$l(x,y)=L=\{l_1,\dots,l_N\}^T\tag{1}$$

$$l_n=-w_{y_n}\log\frac{\exp(x_{n,y_n})}{\sum_{c=1}^{C}\exp(x_{n,c})}\cdot 1\{y_n\neq \text{ignore\_index}\}\tag{2}$$

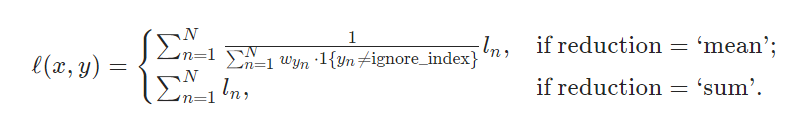

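- mean: returns the mean of the per-sample losses l_n (the default value of reduction)

- sum: returns the sum of the per-sample losses l_n

- none: returns the per-sample losses l_n without any reduction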
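The formula and the three reduction modes can be reproduced by hand, which may help when checking what `weight` and `ignore_index` do. The following is a sketch under stated assumptions: the tensors `x`, `y`, and the per-class weights `w` are made-up values, and the variable names are hypothetical. Note that when weights are given, the 'mean' reduction divides by the total weight of the non-ignored samples rather than by the sample count.

```python
import torch
from torch.nn import functional as F

# Assumed example values: 3 samples, 3 classes.
x = torch.tensor([[1.0, 2.0, 3.0],
                  [3.0, 4.0, 1.0],
                  [0.5, 0.5, 2.0]])
y = torch.tensor([0, 1, -100])        # the third sample is ignored
w = torch.tensor([1.0, 2.0, 0.5])     # hypothetical per-class weights
ignore_index = -100

# Equation (2): l_n = -w_{y_n} * log_softmax(x_n)[y_n] * 1{y_n != ignore_index}
keep = y != ignore_index
safe_y = torch.where(keep, y, torch.zeros_like(y))  # placeholder index for ignored rows
log_prob = torch.log_softmax(x, dim=1)
picked = log_prob.gather(1, safe_y.unsqueeze(1)).squeeze(1)
w_n = w[safe_y] * keep.to(x.dtype)    # weight is zero for ignored samples
l = -w_n * picked

manual_none = l                       # reduction='none'
manual_sum = l.sum()                  # reduction='sum'
manual_mean = l.sum() / w_n.sum()     # reduction='mean' (weighted mean)

# Library results for comparison.
ref_none = F.cross_entropy(x, y, weight=w, ignore_index=ignore_index, reduction='none')
ref_sum = F.cross_entropy(x, y, weight=w, ignore_index=ignore_index, reduction='sum')
ref_mean = F.cross_entropy(x, y, weight=w, ignore_index=ignore_index, reduction='mean')

print(manual_none, ref_none)
print(manual_sum.item(), ref_sum.item())
print(manual_mean.item(), ref_mean.item())
```

Each manual value should match its library counterpart up to floating-point rounding; the ignored sample contributes 0 to the 'none' output.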

2. Code

# -*- coding: utf-8 -*-

# @Project: zc

# @Author: zc

# @File name: CrossEntropyLoss_Test

# @Create time: 2022/1/3 10:07

# 1. Import the required libraries

import torch

from torch.nn import functional as F

# 2. Define the model output y_out (raw scores for 2 samples and 3 classes)

y_out = torch.tensor([[1.0, 2.0, 3.0], [3.0, 4.0, 1.0]])

# 3. Define the target tensor (one class index per sample)

target = torch.tensor([0, 1])

# 4. Cross-entropy loss with the default reduction (reduction='mean'):

# returns the mean of the per-sample losses as a scalar tensor, (L1+L2)/2

loss_default = F.cross_entropy(y_out, target)

# 5. Cross-entropy loss with reduction='mean' given explicitly:

# same result as the default, (L1+L2)/2

loss_mean = F.cross_entropy(y_out, target, reduction='mean')

# 6. Cross-entropy loss with reduction='sum':

# returns the sum of the per-sample losses as a scalar tensor, L1+L2

loss_sum = F.cross_entropy(y_out, target, reduction='sum')

# 7. Cross-entropy loss with reduction='none':

# returns the per-sample losses as a tensor [L1, L2]

loss_none = F.cross_entropy(y_out, target, reduction='none')

print(f'y_out={y_out}')

print(f'target={target}')

# .item() converts a scalar tensor to a Python float

print(f'loss_default={loss_default.item()}')

print(f'loss_mean={loss_mean.item()}')

print(f'loss_sum={loss_sum.item()}')

print(f'loss_none={loss_none}')

3. Results

y_out=tensor([[1., 2., 3.],[3., 4., 1.]])

target=tensor([0, 1])

loss_default=1.3783090114593506

loss_mean=1.3783090114593506

loss_sum=2.756618022918701

loss_none=tensor([2.4076, 0.3490])
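These values can be checked by hand from equation (2), assuming all class weights equal 1 and no target is ignored (which is the case in the code above). With rows (1, 2, 3) and (3, 4, 1) and targets 0 and 1:

$$
\begin{aligned}
L_1 &= -\log\frac{e^{1}}{e^{1}+e^{2}+e^{3}} = \log\left(1+e^{1}+e^{2}\right) \approx 2.4076 \\
L_2 &= -\log\frac{e^{4}}{e^{3}+e^{4}+e^{1}} = \log\left(1+e^{-1}+e^{-3}\right) \approx 0.3490 \\
L_1+L_2 &\approx 2.7566 \\
\frac{L_1+L_2}{2} &\approx 1.3783
\end{aligned}
$$

The sum and the mean agree with the 'sum' and 'mean' results, and the two individual terms agree with the 'none' result.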
