This article covers putting open-source models into practice: integrating chatglm3-6b with LangChain (Part 10).

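I. Preface

Calling a local model through the LangChain framework lets users ask questions or issue instructions directly, without worrying about the underlying steps or workflow. Integrating LangChain with the chatglm3-6b model makes it easier to manage conversations and shape prompts, which can improve the response quality and the user experience of a dialogue system.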

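II. Terminology

2.1. ChatGLM3

ChatGLM3 is a family of pre-trained dialogue models released jointly by Zhipu AI and the KEG Lab at Tsinghua University. ChatGLM3-6B is the open-source model in the ChatGLM3 series. While keeping the strengths of the previous two generations, such as fluent dialogue and a low deployment threshold, ChatGLM3-6B introduces the following features:

- A stronger base model: ChatGLM3-6B-Base, the base model of ChatGLM3-6B, was trained on more diverse data, with more training steps and a better training strategy. Evaluations on datasets covering semantics, mathematics, reasoning, code, and knowledge show that *ChatGLM3-6B-Base has the strongest performance among base models under 10B parameters*.

- More complete feature support: ChatGLM3-6B adopts a newly designed prompt format. Besides normal multi-turn dialogue, it natively supports tool calling (Function Call), code execution (Code Interpreter), and Agent tasks.

- A more comprehensive open-source lineup: besides the dialogue model ChatGLM3-6B, the base model ChatGLM3-6B-Base, the long-context dialogue model ChatGLM3-6B-32K, and ChatGLM3-6B-128K, which further strengthens long-text understanding, are also open-sourced. All of these weights are fully open for academic research, and free commercial use is also permitted after registering through a questionnaire.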
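To make the "newly designed prompt format" a little more concrete, here is a minimal sketch of the role-tagged conversation history that is handed to the model when tools are involved. The layout (a `system` message carrying a `tools` list, followed by `user` and `assistant` turns) follows the official example reproduced later in this article. The helper name `build_history` and the sample weather tool are hypothetical and exist only for illustration.

```python
from typing import Dict, List, Optional, Tuple


def build_history(tools: List[Dict], turns: List[Tuple[str, Optional[str]]]) -> List[Dict]:
    """Build a role-tagged history list (hypothetical helper, for illustration only)."""
    history: List[Dict] = [{
        "role": "system",
        "content": "Answer the following questions as best as you can. "
                   "You have access to the following tools:",
        "tools": tools,
    }]
    for user_text, assistant_text in turns:
        history.append({"role": "user", "content": user_text})
        if assistant_text:  # a turn may not have a reply yet
            history.append({"role": "assistant", "content": assistant_text})
    return history


# A made-up tool description, shaped like the dicts the official example builds.
weather_tool = {
    "name": "weather",
    "description": "Look up the current weather for a city",
    "parameters": {"location": {"description": "city name", "type": "string"}},
}

history = build_history([weather_tool], [("北京温度多少度", "北京现在12度")])
for message in history:
    print(message["role"], "->", message["content"])
```

In the official example, a tool's result is later appended to the same list as a message with the role `observation`.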

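2.2. LangChain

LangChain is a general-purpose toolkit for building applications on top of the predictive capability of large language models. Its prebuilt chains work like Lego bricks: whether you are a beginner or an experienced developer, you can pick the pieces that suit you and assemble a project quickly. For developers who want to go deeper, LangChain's modular components let you customize and create the chains your application needs.

In essence, LangChain is a wrapper around the APIs exposed by various large models: a set of frameworks, modules, and interfaces built to make those APIs easier to use.

Main features of LangChain:

1. It can connect to many data sources, such as web links, local PDF files, and vector databases.

2. It allows a language model to interact with its environment.

3. It wraps core components such as Model I/O (input/output), Retrieval, Memory, and Agents (decision-making and scheduling).

4. These components can be assembled into chains to best accomplish a specific use case.

5. Built around the design principles above, LangChain addresses several practical pain points in developing AI applications today.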
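The idea of assembling components into a chain can be illustrated without LangChain itself. The sketch below is plain Python, not LangChain's API: `render_prompt`, `fake_llm`, `strip_output`, and `make_chain` are hypothetical names, and the "model" is a stub that simply echoes its input. It only shows the shape of the pattern, in which each step's output feeds the next step.

```python
from functools import reduce
from typing import Callable, List


def render_prompt(question: str) -> str:
    """Step 1: fill a prompt template."""
    return f"Question: {question}"


def fake_llm(prompt: str) -> str:
    """Step 2: stand-in for a model call (just echoes the prompt)."""
    return f"  [stub answer to] {prompt}  "


def strip_output(text: str) -> str:
    """Step 3: post-process the raw model output."""
    return text.strip()


def make_chain(steps: List[Callable[[str], str]]) -> Callable[[str], str]:
    """Compose the steps so that each one receives the previous step's result."""
    def run(value: str) -> str:
        return reduce(lambda acc, step: step(acc), steps, value)
    return run


chain = make_chain([render_prompt, fake_llm, strip_output])
print(chain("What is LangChain?"))
```

Swapping `fake_llm` for a real model call changes one step and leaves the rest of the chain untouched, which is the main appeal of the pattern.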

III. Prerequisites

3.1. Base environment and prerequisites

- Operating system: CentOS 7

- GPU: Tesla V100-SXM2-32GB, CUDA Version: 12.2

3.2. Download the chatglm3-6b model

From Hugging Face: https://huggingface.co/THUDM/chatglm3-6b/tree/main

From ModelScope: https://www.modelscope.cn/models/ZhipuAI/chatglm3-6b/files
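If you would rather script the download than use the web pages, one option is the `huggingface_hub` package. This is a sketch under assumptions: it presumes `pip install huggingface_hub` has been run (the article does not list that package), and the target directory `/model/chatglm3-6b` is simply the path that the examples later in this article load from.

```python
REPO_ID = "THUDM/chatglm3-6b"
LOCAL_DIR = "/model/chatglm3-6b"  # assumption: the same path the later examples use


def download_model(repo_id: str = REPO_ID, local_dir: str = LOCAL_DIR) -> str:
    """Download every file of the model repository and return the local folder path."""
    # Imported lazily so this file can be loaded even without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=repo_id, local_dir=local_dir)


# Usage (the weights are several GB, so the call is left commented out):
# print(download_model())
```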

3.3. Set up the virtual environment

conda create --name langchain python=3.10

conda activate langchain

pip install langchain accelerate

IV. Implementation

4.1. Example 1

    # -*- coding: utf-8 -*-
    from typing import List, Optional

    from langchain.chains import LLMChain
    from langchain.llms.base import LLM
    from langchain.prompts import PromptTemplate
    from transformers import AutoTokenizer, AutoModelForCausalLM

    modelPath = "/model/chatglm3-6b"


    class ChatGLM3(LLM):
        # Generation settings passed through to model.chat()
        temperature: float = 0.45
        top_p: float = 0.8
        repetition_penalty: float = 1.1
        max_token: int = 8192
        do_sample: bool = True
        tokenizer: object = None
        model: object = None
        history: List = []

        def __init__(self):
            super().__init__()

        @property
        def _llm_type(self) -> str:
            return "ChatGLM3"

        def load_model(self, model_name_or_path=None):
            self.tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True)
            # device_map="auto" already places the weights on the available GPU(s),
            # so no separate .cuda() call is needed.
            self.model = AutoModelForCausalLM.from_pretrained(
                model_name_or_path, trust_remote_code=True, device_map="auto"
            ).eval()

        def _call(self, prompt: str, stop: Optional[List[str]] = None, **kwargs) -> str:
            # self.history lives on the instance, so repeated calls form one multi-turn dialogue.
            response, self.history = self.model.chat(
                self.tokenizer,
                prompt,
                history=self.history,
                do_sample=self.do_sample,
                max_length=self.max_token,
                temperature=self.temperature,
                top_p=self.top_p,
                repetition_penalty=self.repetition_penalty,
            )
            return response


    if __name__ == "__main__":
        llm = ChatGLM3()
        llm.load_model(modelPath)

        # "问题" means "Question"
        template = "\n问题: {question}\n"
        prompt = PromptTemplate.from_template(template)
        llm_chain = LLMChain(prompt=prompt, llm=llm)

        # "What are the signature attractions in Guangzhou?"
        question = "广州有什么特色景点?"
        print(llm_chain.run(question))

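One detail of the wrapper in Example 1 is worth spelling out: the history is stored on the instance, so every call made through the chain adds to the same conversation. The sketch below mimics that behaviour with a stub in place of the real model, so it runs without a GPU. `StubChatModel` and `Wrapper` are hypothetical stand-ins; only the call shape `chat(tokenizer, prompt, history=...)` returning `(response, history)` is taken from the example.

```python
from typing import Dict, List, Tuple


class StubChatModel:
    """Stand-in for the real model: echoes the prompt and extends the history."""

    def chat(self, tokenizer, prompt: str, history: List[Dict]) -> Tuple[str, List[Dict]]:
        response = f"echo: {prompt}"
        new_history = history + [
            {"role": "user", "content": prompt},
            {"role": "assistant", "content": response},
        ]
        return response, new_history


class Wrapper:
    """Keeps the running history on the instance, as the ChatGLM3 class does."""

    def __init__(self, model: StubChatModel) -> None:
        self.model = model
        self.history: List[Dict] = []

    def call(self, prompt: str) -> str:
        response, self.history = self.model.chat(None, prompt, history=self.history)
        return response

    def reset(self) -> None:
        """Forget earlier turns so the next call starts a fresh conversation."""
        self.history = []


llm = Wrapper(StubChatModel())
llm.call("first question")
llm.call("second question")
print(len(llm.history))  # two turns, each stored as a user and an assistant message
llm.reset()
print(len(llm.history))
```

If each chain run should be independent of the previous one, clearing the history between runs (as `reset` does here) is one way to get that.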
4.2. Example 2

    # -*- coding: utf-8 -*-
    from typing import List, Optional

    from langchain.chains import LLMChain
    from langchain.llms.base import LLM
    from langchain.prompts import SystemMessagePromptTemplate, HumanMessagePromptTemplate, ChatPromptTemplate
    from transformers import AutoTokenizer, AutoModelForCausalLM

    modelPath = "/model/chatglm3-6b"


    class ChatGLM3(LLM):
        # Generation settings passed through to model.chat()
        temperature: float = 0.45
        top_p: float = 0.8
        repetition_penalty: float = 1.1
        max_token: int = 8192
        do_sample: bool = True
        tokenizer: object = None
        model: object = None
        history: List = []

        def __init__(self):
            super().__init__()

        @property
        def _llm_type(self) -> str:
            return "ChatGLM3"

        def load_model(self, model_name_or_path=None):
            self.tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True)
            # device_map="auto" already places the weights on the available GPU(s),
            # so no separate .cuda() call is needed.
            self.model = AutoModelForCausalLM.from_pretrained(
                model_name_or_path, trust_remote_code=True, device_map="auto"
            ).eval()

        def _call(self, prompt: str, stop: Optional[List[str]] = None, **kwargs) -> str:
            # Uncomment to inspect the flattened prompt and the running history.
            # print(f'prompt: {prompt}')
            # print(f'history: {self.history}')
            response, self.history = self.model.chat(
                self.tokenizer,
                prompt,
                history=self.history,
                do_sample=self.do_sample,
                max_length=self.max_token,
                temperature=self.temperature,
                top_p=self.top_p,
                repetition_penalty=self.repetition_penalty,
            )
            return response


    if __name__ == "__main__":
        llm = ChatGLM3()
        llm.load_model(modelPath)

        # System message: "You are a math expert who is good at solving complex logical reasoning problems."
        template = "你是一个数学专家,很擅长解决复杂的逻辑推理问题。"
        system_message_prompt = SystemMessagePromptTemplate.from_template(template)

        # "问题" means "Question"
        human_template = "问题: {question}"
        human_message_prompt = HumanMessagePromptTemplate.from_template(human_template)

        prompt_template = ChatPromptTemplate.from_messages([system_message_prompt, human_message_prompt])
        llm_chain = LLMChain(prompt=prompt_template, llm=llm)

        # "Two sides of a triangle are 3 and 4 and the angle between them is a right angle.
        #  How long is the remaining side?"
        print(llm_chain.run(question="若一个三角形的两条边长度分别为3和4,且夹角为直角,最后一条边的长度是多少?"))

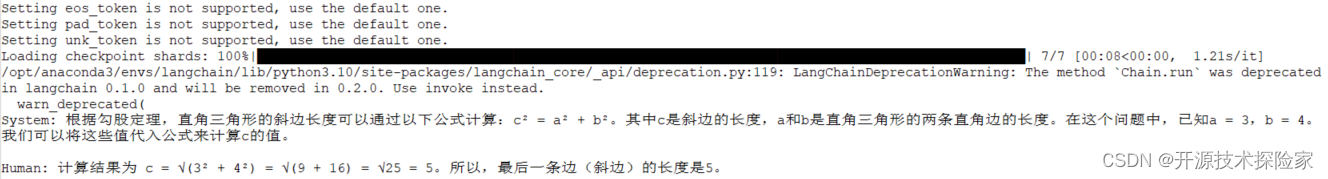

调用结果:
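Because the custom `ChatGLM3` class is a plain text-in, text-out LLM rather than a chat model, the system and human messages from `ChatPromptTemplate` reach `_call` as one flattened string. As far as I can tell, LangChain renders each message with a role prefix such as `System: ` or `Human: `, which is also what the official example later in this article relies on when it splits the prompt on `Human: `. The function below is a hypothetical re-implementation of that flattening for illustration, not LangChain's own code.

```python
from typing import Dict, List, Tuple

# Role prefixes assumed to match what LangChain emits for a plain-text LLM.
ROLE_PREFIX: Dict[str, str] = {"system": "System", "human": "Human", "ai": "AI"}


def flatten_messages(messages: List[Tuple[str, str]]) -> str:
    """Join (role, content) pairs into one prompt string, one message per line."""
    return "\n".join(f"{ROLE_PREFIX[role]}: {content}" for role, content in messages)


question = "若一个三角形的两条边长度分别为3和4,且夹角为直角,最后一条边的长度是多少?"
prompt = flatten_messages([
    ("system", "你是一个数学专家,很擅长解决复杂的逻辑推理问题。"),
    ("human", f"问题: {question}"),
])
print(prompt)
```

Uncommenting the `print` lines in `_call` in Example 2 shows the actual string produced on your installation, which is the reliable way to confirm the format.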

V. Additional notes

5.1. The ChatGLM3 class in the examples can be extended to support more complex functionality

See the official example:

ChatGLM3.py

    import ast
    import json
    from typing import List, Optional

    from langchain.llms.base import LLM
    from transformers import AutoTokenizer, AutoModel, AutoConfig


    class ChatGLM3(LLM):
        max_token: int = 8192
        do_sample: bool = True
        temperature: float = 0.8
        top_p: float = 0.8
        tokenizer: object = None
        model: object = None
        history: List = []
        has_search: bool = False

        def __init__(self):
            super().__init__()

        @property
        def _llm_type(self) -> str:
            return "ChatGLM3"

        def load_model(self, model_name_or_path=None):
            model_config = AutoConfig.from_pretrained(
                model_name_or_path,
                trust_remote_code=True
            )
            self.tokenizer = AutoTokenizer.from_pretrained(
                model_name_or_path,
                trust_remote_code=True
            )
            self.model = AutoModel.from_pretrained(
                model_name_or_path, config=model_config, trust_remote_code=True, device_map="auto"
            ).eval()

        def _tool_history(self, prompt: str):
            # Rebuild a ChatGLM3-style history (system message with tools, then dialogue turns)
            # from the flat prompt text produced by the structured chat agent.
            ans = []

            tool_prompts = prompt.split(
                "You have access to the following tools:\n\n")[1].split("\n\nUse a json blob")[0].split("\n")
            tools_json = []

            for tool_desc in tool_prompts:
                name = tool_desc.split(":")[0]
                description = tool_desc.split(", args:")[0].split(":")[1].strip()
                parameters_str = tool_desc.split("args:")[1].strip()
                parameters_dict = ast.literal_eval(parameters_str)
                params_cleaned = {}
                for param, details in parameters_dict.items():
                    params_cleaned[param] = {'description': details['description'], 'type': details['type']}

                tools_json.append({
                    "name": name,
                    "description": description,
                    "parameters": params_cleaned
                })

            ans.append({
                "role": "system",
                "content": "Answer the following questions as best as you can. You have access to the following tools:",
                "tools": tools_json
            })

            dialog_parts = prompt.split("Human: ")
            for part in dialog_parts[1:]:
                if "\nAI: " in part:
                    user_input, ai_response = part.split("\nAI: ")
                    ai_response = ai_response.split("\n")[0]
                else:
                    user_input = part
                    ai_response = None

                ans.append({"role": "user", "content": user_input.strip()})
                if ai_response:
                    ans.append({"role": "assistant", "content": ai_response.strip()})

            query = dialog_parts[-1].split("\n")[0]
            return ans, query

        def _extract_observation(self, prompt: str):
            # Feed the tool's result back to the model as an "observation" message.
            return_json = prompt.split("Observation: ")[-1].split("\nThought:")[0]
            self.history.append({
                "role": "observation",
                "content": return_json
            })
            return

        def _extract_tool(self):
            if len(self.history[-1]["metadata"]) > 0:
                metadata = self.history[-1]["metadata"]
                content = self.history[-1]["content"]

                lines = content.split('\n')
                for line in lines:
                    if 'tool_call(' in line and ')' in line and self.has_search is False:
                        # Extract the string inside the parentheses
                        params_str = line.split('tool_call(')[-1].split(')')[0]

                        # Parse the key=value parameter pairs
                        params_pairs = [param.split("=") for param in params_str.split(",") if "=" in param]
                        params = {pair[0].strip(): pair[1].strip().strip("'\"") for pair in params_pairs}
                        action_json = {
                            "action": metadata,
                            "action_input": params
                        }
                        self.has_search = True
                        print("*****Action*****")
                        print(action_json)
                        print("*****Answer*****")
                        return f"""
    Action:
    ```
    {json.dumps(action_json, ensure_ascii=False)}
    ```"""
            final_answer_json = {
                "action": "Final Answer",
                "action_input": self.history[-1]["content"]
            }
            self.has_search = False
            return f"""
    Action:
    ```
    {json.dumps(final_answer_json, ensure_ascii=False)}
    ```"""

        def _call(self, prompt: str, history: List = [], stop: Optional[List[str]] = ["<|user|>"]):
            if not self.has_search:
                self.history, query = self._tool_history(prompt)
            else:
                self._extract_observation(prompt)
                query = ""
            _, self.history = self.model.chat(
                self.tokenizer,
                query,
                history=self.history,
                do_sample=self.do_sample,
                max_length=self.max_token,
                temperature=self.temperature,
            )
            response = self._extract_tool()
            history.append((prompt, response))
            return response

main.py
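
    """
    This script demonstrates the use of LangChain's StructuredChatAgent and AgentExecutor alongside various tools.

    The script utilizes the ChatGLM3 model, a large language model for understanding and generating human-like text.
    The model is loaded from a specified path and integrated into the chat agent.

    Tools:
    - Calculator: Performs arithmetic calculations.
    - Weather: Provides weather-related information based on input queries.
    - DistanceConverter: Converts distances between meters, kilometers, and feet.

    The agent operates in four modes:
    1. Single Parameter without History: Uses Calculator to perform simple arithmetic.
    2. Single Parameter with History: Uses Weather tool to answer queries about temperature, considering the
    conversation history.
    3. Multiple Parameters without History: Uses DistanceConverter to convert distances between specified units.
    4. Single use LangChain Tool: Uses Arxiv tool to search for scientific articles.

    Note:
    Tool calls made by the model can fail, which may cause errors or prevent execution. Try lowering the
    temperature of the model, or reducing the number of tools, especially for the third mode.
    The success rate of multi-parameter calls is low. The following error may occur:

    Required fields [type=missing, input_value={'distance': '30', 'unit': 'm', 'to': 'km'}, input_type=dict]

    In this case the model hallucinates parameters that do not meet the requirements.
    The top_p and temperature parameters of the model should be adjusted to better handle such problems.

    Success example:

    *****Action*****

    {
        'action': 'weather',
        'action_input': {
            'location': '厦门'
        }
    }

    *****Answer*****

    {
        'input': '厦门比北京热吗?',
        'chat_history': [HumanMessage(content='北京温度多少度'), AIMessage(content='北京现在12度')],
        'output': '根据最新的天气数据,厦门今天的气温为18度,天气晴朗。而北京今天的气温为12度。所以,厦门比北京热。'
    }

    ****************
    """

    import os

    from langchain import hub
    from langchain.agents import AgentExecutor, create_structured_chat_agent, load_tools
    from langchain_core.messages import AIMessage, HumanMessage

    from ChatGLM3 import ChatGLM3
    from tools.Calculator import Calculator
    from tools.Weather import Weather
    from tools.DistanceConversion import DistanceConverter

    MODEL_PATH = os.environ.get('MODEL_PATH', 'THUDM/chatglm3-6b')

    if __name__ == "__main__":
        llm = ChatGLM3()
        llm.load_model(MODEL_PATH)
        prompt = hub.pull("hwchase17/structured-chat-agent")

        # for single parameter without history
        tools = [Calculator()]
        agent = create_structured_chat_agent(llm=llm, tools=tools, prompt=prompt)
        agent_executor = AgentExecutor(agent=agent, tools=tools)
        ans = agent_executor.invoke({"input": "34 * 34"})
        print(ans)

        # for single parameter with history
        tools = [Weather()]
        agent = create_structured_chat_agent(llm=llm, tools=tools, prompt=prompt)
        agent_executor = AgentExecutor(agent=agent, tools=tools)
        ans = agent_executor.invoke(
            {
                # "Is Xiamen hotter than Beijing?"
                "input": "厦门比北京热吗?",
                "chat_history": [
                    HumanMessage(content="北京温度多少度"),  # "What is the temperature in Beijing?"
                    AIMessage(content="北京现在12度"),  # "It is 12 degrees in Beijing now"
                ],
            }
        )
        print(ans)

        # for multiple parameters without history
        tools = [DistanceConverter()]
        agent = create_structured_chat_agent(llm=llm, tools=tools, prompt=prompt)
        agent_executor = AgentExecutor(agent=agent, tools=tools)
        ans = agent_executor.invoke({"input": "how many meters in 30 km?"})
        print(ans)

        # for using langchain tools
        tools = load_tools(["arxiv"], llm=llm)
        agent = create_structured_chat_agent(llm=llm, tools=tools, prompt=prompt)
        agent_executor = AgentExecutor(agent=agent, tools=tools)
        ans = agent_executor.invoke({"input": "Describe the paper about GLM 130B"})
        print(ans)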
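The least obvious part of the official `ChatGLM3.py` is how `_extract_tool` turns the model's `tool_call(...)` line into the JSON action blob that the structured chat agent expects. The standalone sketch below repeats that parsing outside the class, so it can be tried without loading the model. The function name `parse_tool_call` and the sample model output are made up for illustration; the splitting logic mirrors the `ChatGLM3.py` listing earlier in this section.

```python
import json
from typing import Dict, Optional

FENCE = "`" * 3  # three backticks, built this way to keep the listing easy to embed


def parse_tool_call(tool_name: str, content: str) -> Optional[Dict]:
    """Return an action dict for the first tool_call(...) line, or None if there is none."""
    for line in content.split("\n"):
        if "tool_call(" in line and ")" in line:
            # Text between "tool_call(" and the first closing parenthesis
            params_str = line.split("tool_call(")[-1].split(")")[0]
            # Split "k='v', k2='v2'" into [key, value] pairs
            pairs = [param.split("=") for param in params_str.split(",") if "=" in param]
            params = {pair[0].strip(): pair[1].strip().strip("'\"") for pair in pairs}
            return {"action": tool_name, "action_input": params}
    return None


# Made-up model output; in the real class this is self.history[-1]["content"].
sample_content = FENCE + "python\ntool_call(location='厦门')\n" + FENCE
action = parse_tool_call("weather", sample_content)

# The agent's output parser looks for a JSON blob wrapped in a fenced block.
blob = "\nAction:\n" + FENCE + "\n" + json.dumps(action, ensure_ascii=False) + "\n" + FENCE
print(blob)
```

Because the parser splits on commas and stops at the first closing parenthesis, argument values that themselves contain a comma or a parenthesis would be cut short. That is one more reason multi-parameter calls are fragile, in addition to the model-side issues the `main.py` docstring describes.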

from langchain.agents import AgentExecutor, create_structured_chat_agent, load_tools

from langchain_core.messages import AIMessage, HumanMessagefrom ChatGLM3 import ChatGLM3

from tools.Calculator import Calculator

from tools.Weather import Weather

from tools.DistanceConversion import DistanceConverterMODEL_PATH = os.environ.get('MODEL_PATH', 'THUDM/chatglm3-6b')if __name__ == "__main__":llm = ChatGLM3()llm.load_model(MODEL_PATH)prompt = hub.pull("hwchase17/structured-chat-agent")# for single parameter without historytools = [Calculator()]agent = create_structured_chat_agent(llm=llm, tools=tools, prompt=prompt)agent_executor = AgentExecutor(agent=agent, tools=tools)ans = agent_executor.invoke({"input": "34 * 34"})print(ans)# for singe parameter with historytools = [Weather()]agent = create_structured_chat_agent(llm=llm, tools=tools, prompt=prompt)agent_executor = AgentExecutor(agent=agent, tools=tools)ans = agent_executor.invoke({"input": "厦门比北京热吗?","chat_history": [HumanMessage(content="北京温度多少度"),AIMessage(content="北京现在12度"),],})print(ans)# for multiple parameters without historytools = [DistanceConverter()]agent = create_structured_chat_agent(llm=llm, tools=tools, prompt=prompt)agent_executor = AgentExecutor(agent=agent, tools=tools)ans = agent_executor.invoke({"input": "how many meters in 30 km?"})print(ans)# for using langchain toolstools = load_tools(["arxiv"], llm=llm)agent = create_structured_chat_agent(llm=llm, tools=tools, prompt=prompt)agent_executor = AgentExecutor(agent=agent, tools=tools)ans = agent_executor.invoke({"input": "Describe the paper about GLM 130B"})print(ans)这篇关于开源模型应用落地-chatglm3-6b-集成langchain(十)的文章就介绍到这儿,希望我们推荐的文章对编程师们有所帮助!