
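DeepLearning4j (DL4J) is a neural network framework for Java that lets Java programmers use neural networks in machine learning projects.

No machine learning framework can avoid NLP, and DL4J ships NLP implementations as well. The introductory example is finding, in a large body of text, the words most closely related to a given word.

We first look at the official demo, then write a similar program that reads a Chinese novel instead.

The official demo is called Word2VecRawTextExample. Create a new Java Maven project with the following pom.xml: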

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>com.tianyalei</groupId>
        <artifactId>wolf_ml_mnist</artifactId>
        <version>1.0-SNAPSHOT</version>

        <properties>
            <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
            <nd4j.version>1.0.0-beta</nd4j.version>
            <dl4j.version>1.0.0-beta</dl4j.version>
            <datavec.version>1.0.0-beta</datavec.version>
            <logback.version>1.1.7</logback.version>
            <scala.binary.version>2.10</scala.binary.version>
        </properties>

        <dependencies>
            <!-- Neural network implementation -->
            <dependency>
                <groupId>org.deeplearning4j</groupId>
                <artifactId>deeplearning4j-core</artifactId>
                <version>${dl4j.version}</version>
            </dependency>

            <!-- CPU backend of ND4J, which powers DL4J -->
            <!-- Use nd4j-cuda-9.1-platform instead for the GPU backend -->
            <dependency>
                <groupId>org.nd4j</groupId>
                <artifactId>nd4j-native-platform</artifactId>
                <!--<artifactId>nd4j-cuda-9.1-platform</artifactId>-->
                <version>${nd4j.version}</version>
            </dependency>

            <dependency>
                <groupId>org.deeplearning4j</groupId>
                <artifactId>deeplearning4j-nlp</artifactId>
                <version>${dl4j.version}</version>
            </dependency>

            <dependency>
                <groupId>org.deeplearning4j</groupId>
                <artifactId>deeplearning4j-ui_2.11</artifactId>
                <version>${dl4j.version}</version>
            </dependency>

            <dependency>
                <groupId>ch.qos.logback</groupId>
                <artifactId>logback-classic</artifactId>
                <version>${logback.version}</version>
            </dependency>

            <!-- Chinese word segmentation: start -->
            <!--<dependency>
                <groupId>org.fnlp</groupId>
                <artifactId>fnlp-core</artifactId>
                <version>2.1-SNAPSHOT</version>
            </dependency>-->
            <dependency>
                <groupId>net.sf.trove4j</groupId>
                <artifactId>trove4j</artifactId>
                <version>3.0.3</version>
            </dependency>
            <dependency>
                <groupId>commons-cli</groupId>
                <artifactId>commons-cli</artifactId>
                <version>1.2</version>
            </dependency>
            <!-- Chinese word segmentation: end -->
        </dependencies>
    </project>

The example class:

    package com.tianyalei.nlp;

    import org.deeplearning4j.models.word2vec.Word2Vec;

    import org.deeplearning4j.text.sentenceiterator.BasicLineIterator;
    import org.deeplearning4j.text.sentenceiterator.SentenceIterator;
    import org.deeplearning4j.text.tokenization.tokenizer.preprocessor.CommonPreprocessor;
    import org.deeplearning4j.text.tokenization.tokenizerfactory.DefaultTokenizerFactory;
    import org.deeplearning4j.text.tokenization.tokenizerfactory.TokenizerFactory;
    import org.nd4j.linalg.io.ClassPathResource;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;

    import java.util.Collection;

    /**
     * Created by agibsonccc on 10/9/14.
     *
     * Neural net that processes text into wordvectors. See below url for an in-depth explanation.
     * https://deeplearning4j.org/word2vec.html
     */
    public class Word2VecRawTextExample {

        private static Logger log = LoggerFactory.getLogger(Word2VecRawTextExample.class);

        public static void main(String[] args) throws Exception {
            // Gets Path to Text file
            String filePath = new ClassPathResource("raw_sentences.txt").getFile().getAbsolutePath();

            log.info("Load & Vectorize Sentences....");
            // Strip white space before and after for each line
            SentenceIterator iter = new BasicLineIterator(filePath);
            // Split on white spaces in the line to get words
            TokenizerFactory t = new DefaultTokenizerFactory();

            /*
                CommonPreprocessor will apply the following regex to each token: [\d\.:,"'\(\)\[\]|/?!;]+
                So, effectively all numbers, punctuation symbols and some special symbols are stripped off.
                Additionally it forces lower case for all tokens.
             */
            t.setTokenPreProcessor(new CommonPreprocessor());

            log.info("Building model....");
            Word2Vec vec = new Word2Vec.Builder()
                    // Minimum number of times a word must appear in the corpus.
                    // Here, words that appear fewer than five times are not learned.
                    .minWordFrequency(5)
                    // Number of coefficient updates allowed per batch. Too few and the
                    // network may not learn everything it can; too many and training takes longer.
                    .iterations(1)
                    // Number of features in each word vector, i.e. the dimensionality of the
                    // feature space. With 100 features, each word is a point in a 100-dimensional space.
                    .layerSize(100)
                    .seed(42)
                    .windowSize(5)
                    // Tells the network which batch of the dataset it is training on
                    .iterate(iter)
                    // Feeds the words of the current batch to the network
                    .tokenizerFactory(t)
                    .build();

            log.info("Fitting Word2Vec model....");
            vec.fit();

            // Prints out the closest 10 words to "day". An example on what to do with these Word Vectors.
            log.info("Closest Words:");
            Collection<String> lst = vec.wordsNearestSum("day", 10);
            //Collection<String> lst = vec.wordsNearest(Arrays.asList("king", "woman"), Arrays.asList("queen"), 10);
            log.info("10 Words closest to 'day': {}", lst);
        }
    }

This is the hello-world project of NLP: the goal is to find the words closest to day in the given raw_sentences.txt. Put the resource file in the resource directory and run the program.

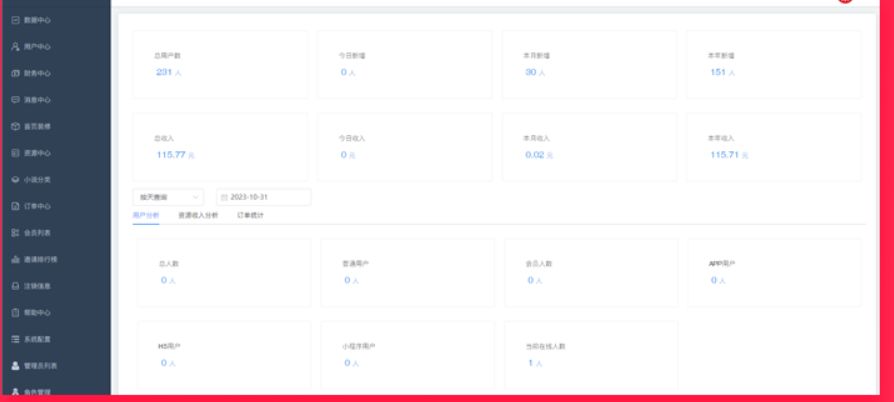

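Run result:

As you can see, the words nearest to day include week, night and year, which is fairly reasonable. As for why, search the corpus for day and look at the words around it and how it is used, then do the same for week, night and the others. Words that are used in similar contexts end up close to each other.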
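The "look at the neighbouring words" intuition can be made concrete with a small plain-Java sketch. This is not how Word2Vec is implemented (Word2Vec learns dense vectors with a neural network); it only counts neighbouring words and compares the counts, and the class name, method names and three sample sentences are made up for illustration.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class ContextSimilaritySketch {

    /** For every word, counts the words seen within `window` positions of it. */
    static Map<String, Map<String, Integer>> contextCounts(List<String[]> sentences, int window) {
        Map<String, Map<String, Integer>> counts = new HashMap<>();
        for (String[] tokens : sentences) {
            for (int i = 0; i < tokens.length; i++) {
                Map<String, Integer> row = counts.computeIfAbsent(tokens[i], k -> new HashMap<>());
                int from = Math.max(0, i - window);
                int to = Math.min(tokens.length - 1, i + window);
                for (int j = from; j <= to; j++) {
                    if (j != i) {
                        row.merge(tokens[j], 1, Integer::sum);
                    }
                }
            }
        }
        return counts;
    }

    /** Cosine similarity of two sparse count vectors; 0 if either is empty. */
    static double cosine(Map<String, Integer> a, Map<String, Integer> b) {
        double dot = 0, normA = 0, normB = 0;
        for (Map.Entry<String, Integer> e : a.entrySet()) {
            normA += e.getValue() * e.getValue();
            Integer other = b.get(e.getKey());
            if (other != null) {
                dot += e.getValue() * other;
            }
        }
        for (int v : b.values()) {
            normB += v * v;
        }
        if (normA == 0 || normB == 0) {
            return 0.0;
        }
        return dot / Math.sqrt(normA * normB);
    }

    public static void main(String[] args) {
        // Made-up sentences: "day" and "week" share their neighbours, "cat" does not.
        List<String[]> sentences = Arrays.asList(
                "i work every day".split(" "),
                "i work every week".split(" "),
                "the cat sat down".split(" "));
        Map<String, Map<String, Integer>> counts = contextCounts(sentences, 2);
        System.out.println("day vs week: " + cosine(counts.get("day"), counts.get("week")));
        System.out.println("day vs cat:  " + cosine(counts.get("day"), counts.get("cat")));
    }
}
```

Because day and week have the same neighbours here, their similarity is high, while day and cat share none and score zero.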

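The resources used in this article are in my project: https://github.com/tianyaleixiaowu/wolf_ml_mnist

The comments in the code cover the basic concepts. Next, let's have it learn a Chinese novel and report the closest words.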
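Two of the builder settings in the demo, `minWordFrequency` and `windowSize`, can be illustrated in plain Java. The sketch below is only an approximation of the idea under simple assumptions (DL4J's real vocabulary construction and training loop are more involved); the class, method names and sample sentences are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class VocabWindowSketch {

    /** Counts how often each token occurs across all sentences. */
    static Map<String, Integer> countTokens(List<List<String>> sentences) {
        Map<String, Integer> counts = new HashMap<>();
        for (List<String> sentence : sentences) {
            for (String token : sentence) {
                counts.merge(token, 1, Integer::sum);
            }
        }
        return counts;
    }

    /** Drops tokens seen fewer than minFrequency times, keeping the original order. */
    static List<String> dropRare(List<String> sentence, Map<String, Integer> counts, int minFrequency) {
        List<String> kept = new ArrayList<>();
        for (String token : sentence) {
            if (counts.getOrDefault(token, 0) >= minFrequency) {
                kept.add(token);
            }
        }
        return kept;
    }

    /** Returns the tokens within `window` positions of index `center`, excluding the center token. */
    static List<String> context(List<String> sentence, int center, int window) {
        List<String> result = new ArrayList<>();
        int from = Math.max(0, center - window);
        int to = Math.min(sentence.size() - 1, center + window);
        for (int i = from; i <= to; i++) {
            if (i != center) {
                result.add(sentence.get(i));
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<List<String>> sentences = Arrays.asList(
                Arrays.asList("the", "day", "was", "long"),
                Arrays.asList("the", "week", "was", "long"),
                Arrays.asList("one", "day", "later"));
        Map<String, Integer> counts = countTokens(sentences);

        // With a minimum frequency of 2, words seen only once are ignored.
        System.out.println(dropRare(sentences.get(2), counts, 2));

        // With a window of 1, only the immediate neighbours of "day" count as context.
        System.out.println(context(sentences.get(0), 1, 1));
    }
}
```

A larger window makes more distant words count as context, and a higher minimum frequency shrinks the vocabulary, which is why rare names in a novel may not appear in the results at all.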

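Unlike English, which comes with spaces between words, Chinese needs a separate word segmenter; otherwise each sentence is one unbroken run of characters that cannot be split into words. So before the machine can learn from Chinese text, we must first split the sentences into individual words.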
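To see why a segmenter is needed, the sketch below first splits a Chinese sentence on whitespace (which yields a single token) and then applies a toy forward-maximum-matching segmenter. This dictionary-based method is a stand-in chosen for brevity and is not the algorithm FNLP uses; the class name, dictionary and sample sentence are made up.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

class SegmentationSketch {

    /**
     * Greedy forward maximum matching: at each position take the longest
     * dictionary word (up to maxLen characters); fall back to one character.
     */
    static List<String> forwardMaxMatch(String text, Set<String> dict, int maxLen) {
        List<String> out = new ArrayList<>();
        int i = 0;
        while (i < text.length()) {
            int end = Math.min(text.length(), i + maxLen);
            // Try the longest candidate first and shrink until a dictionary word matches.
            while (end > i + 1 && !dict.contains(text.substring(i, end))) {
                end--;
            }
            out.add(text.substring(i, end));
            i = end;
        }
        return out;
    }

    public static void main(String[] args) {
        String sentence = "段誉见到王语嫣";

        // Whitespace splitting finds no boundaries in Chinese text.
        System.out.println(sentence.split("\\s+").length);

        // Hypothetical three-word dictionary, just enough for this sentence.
        Set<String> dict = new HashSet<>(Arrays.asList("段誉", "见到", "王语嫣"));
        List<String> words = forwardMaxMatch(sentence, dict, 3);

        // Joining with spaces gives text that a whitespace tokenizer can handle.
        System.out.println(String.join(" ", words));
    }
}
```

Once the words are separated by spaces, the same whitespace-based tokenizer used for English can process the text.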

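There are many Chinese segmenters. In terms of ease of use and quality, Fudan's NLP toolkit is a solid choice: https://github.com/FudanNLP/fnlp

The GitHub repository has usage documentation. In short: download the three .m files under models and the fnlp-core jar under libs, then add the jar to the project as a library dependency. The two extra jars that Fudan NLP needs are already in the pom.xml above.

Then fnlp can be used to segment the document. The document we chose is the novel 天龙八部 (tlbb.txt), which at this point is raw, unsegmented text.

The segmentation code: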

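    package com.tianyalei.nlp.tlbb;

    import java.io.BufferedReader;
    import java.io.File;
    import java.io.FileInputStream;
    import java.io.FileOutputStream;
    import java.io.InputStreamReader;
    import java.io.OutputStreamWriter;
    import java.io.Writer;
    import java.nio.charset.StandardCharsets;

    /**
     * Running this produces a word-segmented copy of the document.
     *
     * @author wuweifeng wrote on 2018/6/29.
     */
    public class FenCi {

        private FudanTokenizer tokenizer = new FudanTokenizer();

        public void processFile() throws Exception {
            String filePath = this.getClass().getClassLoader().getResource("text/tlbb.txt").getPath();
            File outfile = new File("/Users/wuwf/project/tlbb_t.txt");

            // FileOutputStream creates the file if it is missing and truncates it otherwise,
            // so no explicit delete is needed. The input is assumed to be UTF-8.
            try (BufferedReader in = new BufferedReader(
                         new InputStreamReader(new FileInputStream(filePath), StandardCharsets.UTF_8));
                 Writer writer = new OutputStreamWriter(new FileOutputStream(outfile), StandardCharsets.UTF_8)) {
                String line;
                while ((line = in.readLine()) != null) {
                    writer.append(tokenizer.processSentence(line));
                    // readLine() strips the line terminator; write one back so the
                    // line-based iterator used for training sees one sentence per line.
                    writer.append('\n');
                }
            } // try-with-resources flushes and closes both streams
        }

        public static void main(String[] args) throws Exception {
            new FenCi().processFile();
        }
    }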

    package com.tianyalei.nlp.tlbb;

    import org.fnlp.ml.types.Dictionary;
    import org.fnlp.nlp.cn.tag.CWSTagger;
    import org.fnlp.nlp.corpus.StopWords;
    import org.fnlp.util.exception.LoadModelException;

    import java.io.IOException;
    import java.util.List;

    /**
     * @author wuweifeng wrote on 2018/6/29.
     */
    public class FudanTokenizer {

        private CWSTagger tag;

        // Never assigned in this class, so flitStopWords() returns null as written.
        private StopWords stopWords;

        public FudanTokenizer() {
            String path = this.getClass().getClassLoader().getResource("").getPath();
            System.out.println(path);
            try {
                tag = new CWSTagger(path + "models/seg.m");
            } catch (LoadModelException e) {
                // Fail fast: without the model every later call would hit a null tagger.
                throw new IllegalStateException("Cannot load models/seg.m", e);
            }
        }

        public String processSentence(String context) {
            return tag.tag(context);
        }

        public String processSentence(String sentence, boolean english) {
            if (english) {
                tag.setEnFilter(true);
            }
            return tag.tag(sentence);
        }

        public String processFile(String filename) {
            return tag.tagFile(filename);
        }

        /**
         * Sets the segmentation dictionary.
         */
        public boolean setDictionary() {
            String dictPath = this.getClass().getClassLoader().getResource("models/dict.txt").getPath();
            Dictionary dict;
            try {
                dict = new Dictionary(dictPath);
            } catch (IOException e) {
                return false;
            }
            tag.setDictionary(dict);
            return true;
        }

        /**
         * Removes stop words.
         */
        public List<String> flitStopWords(String[] words) {
            try {
                return stopWords.phraseDel(words);
            } catch (Exception e) {
                e.printStackTrace();
                return null;
            }
        }
    }

Run it, and after a while you get the segmented document tlbb_t.txt. Copy the segmented file into the resource directory; the machine will learn from this segmented version.

    package com.tianyalei.nlp.tlbb;

    import org.deeplearning4j.models.embeddings.loader.WordVectorSerializer;
    import org.deeplearning4j.models.word2vec.Word2Vec;
    import org.deeplearning4j.text.sentenceiterator.BasicLineIterator;
    import org.deeplearning4j.text.sentenceiterator.SentenceIterator;
    import org.deeplearning4j.text.tokenization.tokenizer.preprocessor.CommonPreprocessor;
    import org.deeplearning4j.text.tokenization.tokenizerfactory.DefaultTokenizerFactory;
    import org.deeplearning4j.text.tokenization.tokenizerfactory.TokenizerFactory;
    import org.nd4j.linalg.io.ClassPathResource;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;

    import java.io.File;
    import java.io.IOException;
    import java.util.Collection;

    /**
     * @author wuweifeng wrote on 2018/6/29.
     */
    public class Tlbb {

        private static Logger log = LoggerFactory.getLogger(Tlbb.class);

        public static void main(String[] args) throws IOException {
            // The segmented novel produced by FenCi
            String filePath = new ClassPathResource("text/tlbb_t.txt").getFile().getAbsolutePath();

            log.info("Load & Vectorize Sentences....");
            SentenceIterator iter = new BasicLineIterator(new File(filePath));
            // The text is already space-separated, so the default tokenizer is enough
            TokenizerFactory t = new DefaultTokenizerFactory();
            t.setTokenPreProcessor(new CommonPreprocessor());

            log.info("Building model....");
            Word2Vec vec = new Word2Vec.Builder()
                    .minWordFrequency(5)
                    .iterations(1)
                    .layerSize(100)
                    .seed(42)
                    .windowSize(5)
                    .iterate(iter)
                    .tokenizerFactory(t)
                    .build();

            log.info("Fitting Word2Vec model....");
            vec.fit();

            // Write word vectors to file
            log.info("Writing word vectors to text file....");
            WordVectorSerializer.writeWordVectors(vec, "tlbb_vectors.txt");
            WordVectorSerializer.writeFullModel(vec, "tlbb_model.txt");

            String[] names = {"萧峰", "乔峰", "段誉", "虚竹", "王语嫣", "阿紫", "阿朱", "木婉清"};
            log.info("Closest Words:");
            for (String name : names) {
                System.out.println(name + ">>>>>>");
                Collection<String> lst = vec.wordsNearest(name, 10);
                System.out.println(lst);
            }
        }
    }

The code differs little from the earlier demo. After running it, you can see the words most closely related to each of these characters.
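Conceptually, a nearest-words lookup such as `wordsNearest(name, 10)` ranks every other word by how similar its vector is to the query word's vector. The plain-Java sketch below shows that ranking with cosine similarity over a handful of two-dimensional vectors. The vector values are invented for illustration and the class is hypothetical; it does not read DL4J's saved files or reproduce its internals.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.Comparator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class NearestWordsSketch {

    /** Cosine similarity of two dense vectors of equal length; 0 if either has zero norm. */
    static double cosine(double[] a, double[] b) {
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        if (normA == 0 || normB == 0) {
            return 0.0;
        }
        return dot / Math.sqrt(normA * normB);
    }

    /** Returns up to k words most similar to `query`, most similar first, excluding the query itself. */
    static List<String> nearest(Map<String, double[]> vectors, String query, int k) {
        double[] q = vectors.get(query);
        if (q == null) {
            return Collections.emptyList(); // unknown word, e.g. filtered out as too rare
        }
        List<String> candidates = new ArrayList<>();
        for (String word : vectors.keySet()) {
            if (!word.equals(query)) {
                candidates.add(word);
            }
        }
        // Sort by descending similarity to the query vector.
        candidates.sort(Comparator.comparingDouble((String w) -> -cosine(q, vectors.get(w))));
        return new ArrayList<>(candidates.subList(0, Math.min(k, candidates.size())));
    }

    public static void main(String[] args) {
        // Invented 2-dimensional vectors; a trained model here would have 100 dimensions.
        Map<String, double[]> vectors = new LinkedHashMap<>();
        vectors.put("萧峰", new double[]{0.9, 0.1});
        vectors.put("乔峰", new double[]{0.8, 0.2});
        vectors.put("段誉", new double[]{0.1, 0.9});

        System.out.println(nearest(vectors, "萧峰", 2));
    }
}
```

A word that was dropped from the vocabulary has no vector, so a lookup for it comes back empty; the sketch mirrors that with the empty list for unknown words.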

Reference: https://blog.csdn.net/a398942089/article/details/51970691
