本文主要是介绍信号处理--情绪分类数据集DEAP预处理(python版),希望对大家解决编程问题提供一定的参考价值,需要的开发者们随着小编来一起学习吧!

关于

DEAP数据集是一个常用的情绪分类公共数据,在日常研究中经常被使用到。如何合理地预处理DEAP数据集,对于后端任务的成功与否,非常重要。本文主要介绍DEAP数据集的预处理流程。

工具

图片来源:DEAP: A Dataset for Emotion Analysis using Physiological and Audiovisual Signals

DEAP数据集详细描述:https://www.eecs.qmul.ac.uk/mmv/datasets/deap/doc/tac_special_issue_2011.pdf

方法实现

加载.bdf文件+去除非脑电通道

bdf_file_name = 's{:02d}.bdf'.format(subject_id)bdf_file_path = os.path.join(root_folder, bdf_file_name)print('Loading .bdf file {}'.format(bdf_file_path))raw = mne.io.read_raw_bdf(bdf_file_path, preload=True, verbose=False).load_data()ch_names = raw.ch_nameseeg_channels = ch_names[:N_EEG_electrodes]non_eeg_channels = ch_names[N_EEG_electrodes:]stim_ch_name = ch_names[-1]stim_channels = [ stim_ch_name ]设置脑电电极规范

raw_copy = raw.copy()raw_stim = raw_copy.pick_channels(stim_channels)raw.pick_channels(eeg_channels)print('Setting montage with BioSemi32 electrode locations')biosemi_montage = mne.channels.make_standard_montage(kind='biosemi32', head_size=0.095)raw.set_montage(biosemi_montage)带通滤波器(4-45Hz),陷波滤波器(50Hz)

print('Applying notch filter (50Hz) and bandpass filter (4-45Hz)')raw.notch_filter(np.arange(50, 251, 50), n_jobs=1, fir_design='firwin')raw.filter(4, 45, fir_design='firwin')脑电通道相对于均值重标定

# Average reference. This is normally added by default, but can also be added explicitly.print('Re-referencing all electrodes to the common average reference')raw.set_eeg_reference()获取事件标签

print('Getting events from the status channel')events = mne.find_events(raw_stim, stim_channel=stim_ch_name, verbose=True)if subject_id<=23:# Subject 1-22 and Subjects 23-28 have 48 channels.# Subjects 29-32 have 49 channels.# For Subjects 1-22 and Subject 23, the stimuli channel has the name 'Status'# For Subjects 24-28, the stimuli channel has the name ''# For Subjects 29-32, the stimuli channels have the names '-0' and '-1'passelse:# The values of the stimuli channel have to be changed for Subjects 24-32# Trigger channel has a non-zero initial value of 1703680 (consider using initial_event=True to detect this event)events[:,2] -= 1703680 # subtracting initial valueevents[:,2] = events[:,2] % 65536 # getting modulo with 65536print('')event_IDs = np.unique(events[:,2])for event_id in event_IDs:col = events[:,2]print('Event ID {} : {:05}'.format(event_id, np.sum( 1.0*(col==event_id) ) ) )获取事件对应信号

inds_new_trial = np.where(events[:,2] == 4)[0]events_new_trial = events[inds_new_trial,:]baseline = (0, 0)print('Epoching the data, into [-5sec, +60sec] epochs')epochs = mne.Epochs(raw, events_new_trial, event_id=4, tmin=-5.0, tmax=60.0, picks=eeg_channels, baseline=baseline, preload=True)ICA去除伪迹

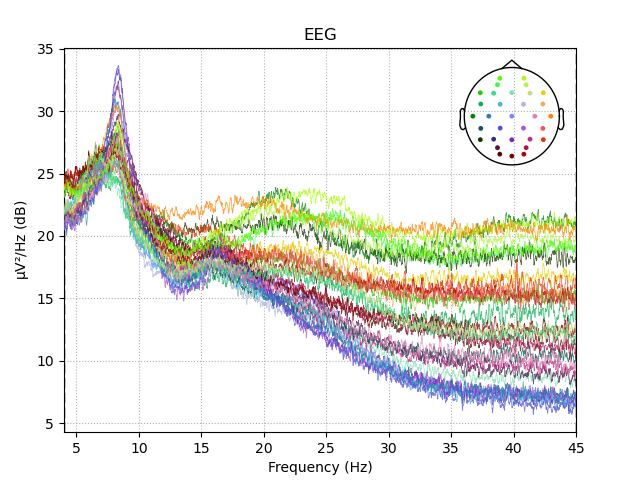

print('Fitting ICA to the epoched data, using {} ICA components'.format(N_ICA))ica = ICA(n_components=N_ICA, method='fastica', random_state=23)ica.fit(epochs)ICA_model_file = os.path.join(ICA_models_folder, 's{:02}_ICA_model.pkl'.format(subject_id))with open(ICA_model_file, 'wb') as pkl_file:pickle.dump(ica, pkl_file)# ica.plot_sources(epochs)print('Plotting ICA components')fig = ica.plot_components()cnt = 1for fig_x in fig:print(fig_x)fig_ICA_path = os.path.join(ICA_components_folder, 's{:02}_ICA_components_{}.png'.format(subject_id, cnt))fig_x.savefig(fig_ICA_path)cnt += 1# Inspect frontal channels to check artifact removal # ica.plot_overlay(raw, picks=['Fp1'])# ica.plot_overlay(raw, picks=['Fp2'])# ica.plot_overlay(raw, picks=['AF3'])# ica.plot_overlay(raw, picks=['AF4'])N_excluded_channels = len(ica.exclude)print('Excluding {:02} ICA component(s): {}'.format(N_excluded_channels, ica.exclude))epochs_clean = ica.apply(epochs.copy())#############################print('Plotting PSD of epoched data')fig = epochs_clean.plot_psd(fmin=4, fmax=45, area_mode='range', average=False, picks=eeg_channels, spatial_colors=True)fig_PSD_path = os.path.join(PSD_folder, 's{:02}_PSD.png'.format(subject_id))fig.savefig(fig_PSD_path)print('Saving ICA epoched data as .pkl file')mneraw_pkl_path = os.path.join(mneraw_as_pkl_folder, 's{:02}.pkl'.format(subject_id))with open(mneraw_pkl_path, 'wb') as pkl_file:pickle.dump(epochs_clean, pkl_file)epochs_clean_copy = epochs_clean.copy()数据降采样和保存

print('Downsampling epoched data to 128Hz')epochs_clean_downsampled = epochs_clean_copy.resample(sfreq=128.0)print('Plotting PSD of epoched downsampled data')fig = epochs_clean_downsampled.plot_psd(fmin=4, fmax=45, area_mode='range', average=False, picks=eeg_channels, spatial_colors=True)fig_PSD_path = os.path.join(PSD_folder, 's{:02}_PSD_downsampled.png'.format(subject_id))fig.savefig(fig_PSD_path)data = epochs_clean.get_data()data_downsampled = epochs_clean_downsampled.get_data()print('Original epoched data shape: {}'.format(data.shape))print('Downsampled epoched data shape: {}'.format(data_downsampled.shape))###########################################EEG_channels_table = pd.read_excel(DEAP_EEG_channels_xlsx_path)EEG_channels_geneva = EEG_channels_table['Channel_name_Geneva'].valueschannel_pick_indices = []print('\nPreparing EEG channel reordering to comply with the Geneva order')for (geneva_ch_index, geneva_ch_name) in zip(range(N_EEG_electrodes), EEG_channels_geneva):bdf_ch_index = eeg_channels.index(geneva_ch_name)channel_pick_indices.append(bdf_ch_index)print('Picking source (raw) channel #{:02} to fill target (npy) channel #{:02} | Electrode position: {}'.format(bdf_ch_index + 1, geneva_ch_index + 1, geneva_ch_name))ratings = pd.read_csv(ratings_csv_path)is_subject = (ratings['Participant_id'] == subject_id)ratings_subj = ratings[is_subject]trial_pick_indices = []print('\nPreparing EEG trial reordering, from presentation order, to video (Experiment_id) order')for i in range(N_trials):exp_id = i+1is_exp = (ratings['Experiment_id'] == exp_id)trial_id = ratings_subj[is_exp]['Trial'].values[0]trial_pick_indices.append(trial_id - 1)print('Picking source (raw) trial #{:02} to fill target (npy) trial #{:02} | Experiment_id: {:02}'.format(trial_id, exp_id, exp_id))# Store clean and reordered data to numpy arrayepoch_duration = data_downsampled.shape[-1]data_npy = np.zeros((N_trials, N_EEG_electrodes, epoch_duration))print('\nStoring the final EEG data in a numpy array of shape {}'.format(data_npy.shape))for trial_source, trial_target in zip(trial_pick_indices, range(N_trials)):data_trial = data_downsampled[trial_source]data_trial_reordered_channels = data_trial[channel_pick_indices,:]data_npy[trial_target,:,:] = data_trial_reordered_channels.copy()print('Saving the final EEG data in a .npy file')np.save(npy_path, data_npy)print('Raw EEG has been filtered, common average referenced, epoched, artifact-rejected, downsampled, trial-reordered and channel-reordered.')print('Finished.')

图片来源: Blog - Ayan's Blog

代码获取

后台私信,请注明文章名称;

相关项目和代码问题,欢迎交流。

这篇关于信号处理--情绪分类数据集DEAP预处理(python版)的文章就介绍到这儿,希望我们推荐的文章对编程师们有所帮助!