
Accelerating Paddle Lite OCR with the NPU on Huawei Ascend hardware (NNAdapter)

- References

  - NNAdapter reference documentation

  - Huawei Ascend NPU reference documentation

  - Paddle Lite C++ API reference

- I. Make sure the CPU version runs correctly

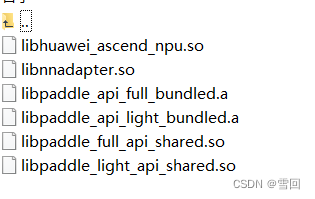

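- II. Build the NPU acceleration library for Ascend

- III. Get the NPU-accelerated demo running

- 1. Demo download link

- 2. Reference manual URL

- 3. Edit the run.sh script

- (1) Set HUAWEI_ASCEND_TOOLKIT_HOME

- (2) Set NNADAPTER_DEVICE_NAMES, NNADAPTER_MODEL_CACHE_DIR, and NNADAPTER_MODEL_CACHE_TOKEN

- 4. Full log

- Log without nnc model conversion

- Log with nnc model conversion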

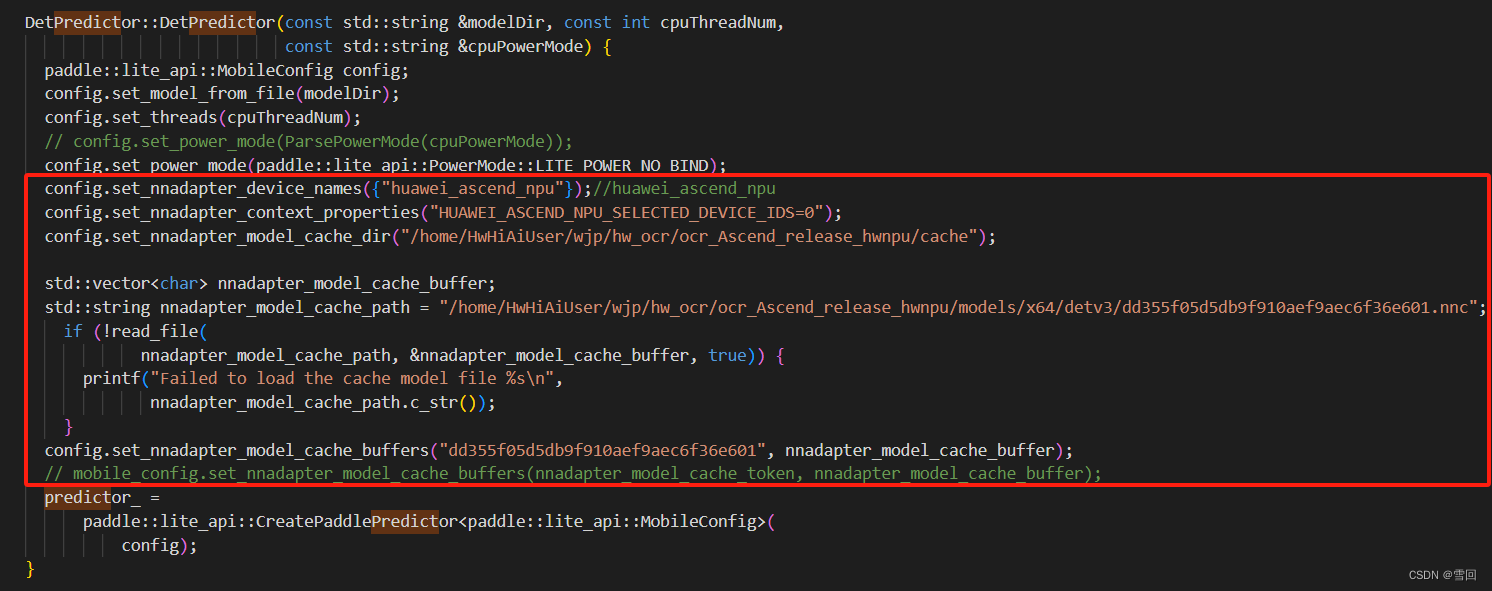

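- IV. Convert the OCR code to the NNAdapter and Ascend NPU format

- V. The environment variables must be set!

- 1. Write them as permanent environment variables

- 2. Set temporary environment variables in the script

- V. Some models cannot be converted to nnc format for acceleration

- VI. Consider other approaches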

References

* NNAdapter reference documentation

https://www.paddlepaddle.org.cn/inference/develop_guides/nnadapter.html#id3

* Huawei Ascend NPU reference documentation

https://www.paddlepaddle.org.cn/lite/develop/demo_guides/huawei_ascend_npu.html#npu

* Paddle Lite C++ API reference

https://www.paddlepaddle.org.cn/lite/develop/api_reference/cxx_api_doc.html#place

I. Make sure the CPU version runs correctly

See my earlier tutorial:

http://t.csdnimg.cn/Nd4Qv

II. Build the NPU acceleration library for Ascend

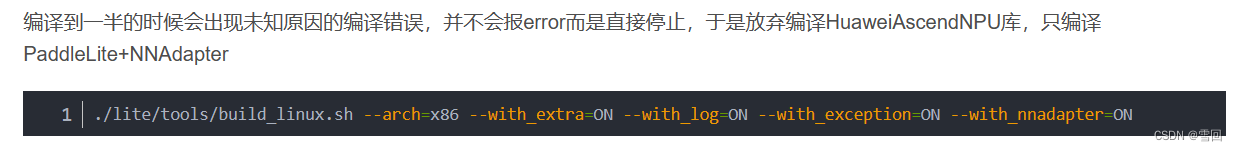

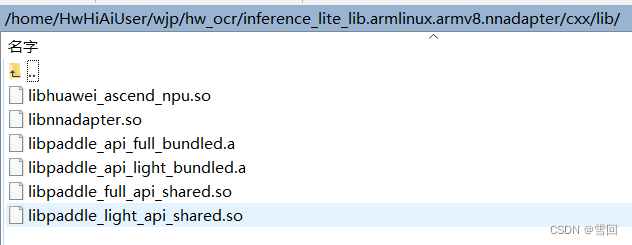

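My earlier tutorial mentioned that building the ascend_npu acceleration library failed. The cause turned out to be a version incompatibility between Paddle Lite and ascend-toolkit, which made the build fail without a clear error message. This time the build succeeded with Paddle Lite 2.10 and toolkit 5.0.2.alpha003.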

III. Get the NPU-accelerated demo running

1. Demo download link

https://paddlelite-demo.bj.bcebos.com/devices/generic/PaddleLite-generic-demo.tar.gz

2. Reference manual URL

https://www.paddlepaddle.org.cn/lite/develop/demo_guides/huawei_ascend_npu.html#npu-paddle-lite

3. Edit the run.sh script

(1) Set HUAWEI_ASCEND_TOOLKIT_HOME

Change HUAWEI_ASCEND_TOOLKIT_HOME to the path of your own Ascend toolkit installation; otherwise the NPU cannot be used for acceleration.

```shell
export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:.:../../libs/PaddleLite/$TARGET_OS/$TARGET_ABI/lib:../../libs/PaddleLite/$TARGET_OS/$TARGET_ABI/lib/$NNADAPTER_DEVICE_NAMES:../../libs/PaddleLite/$TARGET_OS/$TARGET_ABI/lib/cpu

if [ "$NNADAPTER_DEVICE_NAMES" == "huawei_ascend_npu" ]; then
  HUAWEI_ASCEND_TOOLKIT_HOME="/home/HwHiAiUser/Ascend/ascend-toolkit/5.0.2.alpha003" #"/usr/local/Ascend/ascend-toolkit/6.0.RC1.alpha003"
  if [ "$TARGET_OS" == "linux" ]; then
    if [[ "$TARGET_ABI" != "arm64" && "$TARGET_ABI" != "amd64" ]]; then
      echo "Unknown OS $TARGET_OS, only supports 'arm64' or 'amd64' for Huawei Ascend NPU."
      exit -1
    fi
  else
    echo "Unknown OS $TARGET_OS, only supports 'linux' for Huawei Ascend NPU."
    exit -1
  fi
  NNADAPTER_CONTEXT_PROPERTIES="HUAWEI_ASCEND_NPU_SELECTED_DEVICE_IDS=0"
  export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/usr/lib64:$HUAWEI_ASCEND_TOOLKIT_HOME/fwkacllib/lib64:$HUAWEI_ASCEND_TOOLKIT_HOME/acllib/lib64:$HUAWEI_ASCEND_TOOLKIT_HOME/atc/lib64:$HUAWEI_ASCEND_TOOLKIT_HOME/opp/op_proto/built-in
  export PYTHONPATH=$PYTHONPATH:$HUAWEI_ASCEND_TOOLKIT_HOME/fwkacllib/python/site-packages:$HUAWEI_ASCEND_TOOLKIT_HOME/acllib/python/site-packages:$HUAWEI_ASCEND_TOOLKIT_HOME/toolkit/python/site-packages:$HUAWEI_ASCEND_TOOLKIT_HOME/atc/python/site-packages:$HUAWEI_ASCEND_TOOLKIT_HOME/pyACL/python/site-packages/acl
  export PATH=$PATH:$HUAWEI_ASCEND_TOOLKIT_HOME/atc/ccec_compiler/bin:${HUAWEI_ASCEND_TOOLKIT_HOME}/acllib/bin:$HUAWEI_ASCEND_TOOLKIT_HOME/atc/bin
  export ASCEND_AICPU_PATH=$HUAWEI_ASCEND_TOOLKIT_HOME
  export ASCEND_OPP_PATH=$HUAWEI_ASCEND_TOOLKIT_HOME/opp
  export TOOLCHAIN_HOME=$HUAWEI_ASCEND_TOOLKIT_HOME/toolkit
  export ASCEND_SLOG_PRINT_TO_STDOUT=1
  export ASCEND_GLOBAL_LOG_LEVEL=1
fi
```
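Because a wrong toolkit path silently disables NPU acceleration, it may help to check the path before running the demo. The following is only a minimal sketch: `check_toolkit_home` is a hypothetical helper that is not part of run.sh, and it assumes that the subdirectories referenced by the exports in run.sh (`fwkacllib/lib64`, `acllib/lib64`, `atc/lib64`, `opp`) exist in your toolkit version, which may not hold for other toolkit releases.

```shell
#!/bin/sh
# Hypothetical helper (not part of run.sh): verify that a toolkit directory
# exists and contains the subdirectories that run.sh adds to
# LD_LIBRARY_PATH and ASCEND_OPP_PATH. The list of subdirectories is an
# assumption taken from the exports in run.sh.
check_toolkit_home() {
  toolkit_home="$1"
  if [ ! -d "$toolkit_home" ]; then
    echo "toolkit home not found: $toolkit_home" >&2
    return 1
  fi
  for sub in fwkacllib/lib64 acllib/lib64 atc/lib64 opp; do
    if [ ! -d "$toolkit_home/$sub" ]; then
      echo "missing directory: $toolkit_home/$sub" >&2
      return 1
    fi
  done
  return 0
}
```

One possible use is to call `check_toolkit_home "$HUAWEI_ASCEND_TOOLKIT_HOME" || exit 1` right after the variable is assigned, so that a typo in the path stops the script early.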

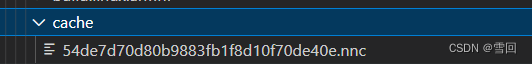

(2) Set NNADAPTER_DEVICE_NAMES, NNADAPTER_MODEL_CACHE_DIR, and NNADAPTER_MODEL_CACHE_TOKEN

NNADAPTER_DEVICE_NAMES="huawei_ascend_npu"

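NNADAPTER_MODEL_CACHE_DIR is the directory that holds the nnc models. Before any nnc model has been generated, you only need to point it at the directory where the model should be written and set NNADAPTER_MODEL_CACHE_TOKEN="null". Once the nnc model has been saved, set NNADAPTER_MODEL_CACHE_TOKEN to the name of the generated model.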
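The choice between "null" and the generated model name can be scripted. This is a rough sketch under one stated assumption: that the cached model is saved in the cache directory as `<token>.nnc`. `pick_cache_token` is a hypothetical helper, not something the demo provides.

```shell
#!/bin/sh
# Hypothetical helper: choose a value for NNADAPTER_MODEL_CACHE_TOKEN.
# Assumption: a cached model is stored as "<token>.nnc" in the cache dir.
# Prints the name of the first .nnc file found (without the extension),
# or "null" when the directory holds no .nnc file yet.
pick_cache_token() {
  cache_dir="$1"
  for f in "$cache_dir"/*.nnc; do
    if [ -f "$f" ]; then
      basename "$f" .nnc
      return 0
    fi
  done
  echo "null"
}
```

For example, `NNADAPTER_MODEL_CACHE_TOKEN="$(pick_cache_token "$NNADAPTER_MODEL_CACHE_DIR")"` yields "null" on the first run and the saved model name afterwards. If the directory holds several nnc files, the sketch simply takes the first one in glob order, so a directory per model is the safer layout.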

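4. Full log

The logs show that NPU inference works in two stages: the nb-format model is first converted to nnc format, and the NPU then runs the nnc model for accelerated inference. If a converted nnc model is already saved in the cache directory, it is loaded directly and the conversion step is skipped.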
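To tell the two cases apart without reading the whole log, one option is to search a saved log for the line that the cached run prints, "Deserialize the cache models from memory success." The sketch below assumes the demo output was redirected to a file; `used_nnc_cache` and the file name `run.log` are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: succeed (exit status 0) when the given log file shows
# that a cached nnc model was loaded instead of being converted again.
# It relies on the message printed by the cached run in this article.
used_nnc_cache() {
  grep -q "Deserialize the cache models from memory success" "$1"
}
```

A possible use: `if used_nnc_cache run.log; then echo "cache hit"; else echo "model was converted"; fi`.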

Log without nnc model conversion

huawei_ascend_npu null /home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/cache 54de7d70d80b9883fb1f8d10f70de40e null

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 268----------------

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 284----------------

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 288----------------

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 300----------------

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 305----------------

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 307----------------

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 371----------------

[I 11/16 2:59: 7.580 ...lic/paddle-lite/lite/core/device_info.cc:1118 Setup] ARM multiprocessors name:

[I 11/16 2:59: 7.580 ...lic/paddle-lite/lite/core/device_info.cc:1119 Setup] ARM multiprocessors number: 8

[I 11/16 2:59: 7.580 ...lic/paddle-lite/lite/core/device_info.cc:1121 Setup] ARM multiprocessors ID: 0, max freq: 0, min freq: 0, cluster ID: 0, CPU ARCH: A55

[I 11/16 2:59: 7.580 ...lic/paddle-lite/lite/core/device_info.cc:1121 Setup] ARM multiprocessors ID: 1, max freq: 0, min freq: 0, cluster ID: 0, CPU ARCH: A55

[I 11/16 2:59: 7.580 ...lic/paddle-lite/lite/core/device_info.cc:1121 Setup] ARM multiprocessors ID: 2, max freq: 0, min freq: 0, cluster ID: 0, CPU ARCH: A55

[I 11/16 2:59: 7.580 ...lic/paddle-lite/lite/core/device_info.cc:1121 Setup] ARM multiprocessors ID: 3, max freq: 0, min freq: 0, cluster ID: 0, CPU ARCH: A55

[I 11/16 2:59: 7.580 ...lic/paddle-lite/lite/core/device_info.cc:1121 Setup] ARM multiprocessors ID: 4, max freq: 0, min freq: 0, cluster ID: 0, CPU ARCH: A55

[I 11/16 2:59: 7.580 ...lic/paddle-lite/lite/core/device_info.cc:1121 Setup] ARM multiprocessors ID: 5, max freq: 0, min freq: 0, cluster ID: 0, CPU ARCH: A55

[I 11/16 2:59: 7.580 ...lic/paddle-lite/lite/core/device_info.cc:1121 Setup] ARM multiprocessors ID: 6, max freq: 0, min freq: 0, cluster ID: 0, CPU ARCH: A55

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1121 Setup] ARM multiprocessors ID: 7, max freq: 0, min freq: 0, cluster ID: 0, CPU ARCH: A55

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1127 Setup] L1 DataCache size is:

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1129 Setup] 32 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1129 Setup] 32 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1129 Setup] 32 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1129 Setup] 32 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1129 Setup] 32 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1129 Setup] 32 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1129 Setup] 32 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1129 Setup] 32 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1131 Setup] L2 Cache size is:

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1133 Setup] 512 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1133 Setup] 512 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1133 Setup] 512 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1133 Setup] 512 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1133 Setup] 512 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1133 Setup] 512 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1133 Setup] 512 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1133 Setup] 512 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1135 Setup] L3 Cache size is:

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1137 Setup] 0 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1137 Setup] 0 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1137 Setup] 0 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1137 Setup] 0 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1137 Setup] 0 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1137 Setup] 0 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1137 Setup] 0 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1137 Setup] 0 KB

[I 11/16 2:59: 7.581 ...lic/paddle-lite/lite/core/device_info.cc:1139 Setup] Total memory: 7952452KB

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 398----------------

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 399----------------

[4 11/16 2:59: 7.619 ...e-lite/lite/model_parser/model_parser.cc:780 LoadModelNaiveFromFile] Meta_version:2

[4 11/16 2:59: 7.619 ...e-lite/lite/model_parser/model_parser.cc:873 LoadModelFbsFromFile] Opt_version:9dd4e2655

[4 11/16 2:59: 7.619 ...e-lite/lite/model_parser/model_parser.cc:888 LoadModelFbsFromFile] topo_size: 74904

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.621 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.622 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.623 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.624 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.625 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:226 InputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:247 OutputArgumentNames] This function call is expensive.

[5 11/16 2:59: 7.626 .../lite/model_parser/flatbuffers/op_desc.h:272 AttrNames] This function call is expensive.

[4 11/16 2:59: 7.673 ...e-lite/lite/model_parser/model_parser.cc:803 LoadModelNaiveFromFile] paddle_version:0

[4 11/16 2:59: 7.673 ...e-lite/lite/model_parser/model_parser.cc:804 LoadModelNaiveFromFile] Load naive buffer model in '/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/assets/models/mobilenet_v1_fp32_224.nb' successfully

[3 11/16 2:59: 7.674 .../public/paddle-lite/lite/core/program.cc:327 RuntimeProgram] Found the attr '__@kernel_type_attr@__': feed/def/1/4/2 for feed

[5 11/16 2:59: 7.674 .../public/paddle-lite/lite/core/op_lite.cc:90 operator()] pick kernel for feed host/any/any get 1 kernels

[5 11/16 2:59: 7.674 .../public/paddle-lite/lite/core/op_lite.cc:124 CreateKernels] op feed get 1 kernels

[3 11/16 2:59: 7.674 .../public/paddle-lite/lite/core/program.cc:327 RuntimeProgram] Found the attr '__@kernel_type_attr@__': subgraph/def/18/4/1 for subgraph

[5 11/16 2:59: 7.674 .../public/paddle-lite/lite/core/op_lite.cc:90 operator()] pick kernel for subgraph nnadapter/any/NCHW get 1 kernels

[5 11/16 2:59: 7.675 .../public/paddle-lite/lite/core/op_lite.cc:90 operator()] pick kernel for subgraph nnadapter/any/any get 0 kernels

[5 11/16 2:59: 7.675 .../public/paddle-lite/lite/core/op_lite.cc:124 CreateKernels] op subgraph get 1 kernels

[3 11/16 2:59: 7.675 .../public/paddle-lite/lite/core/program.cc:327 RuntimeProgram] Found the attr '__@kernel_type_attr@__': fetch/def/1/4/2 for fetch

[5 11/16 2:59: 7.675 .../public/paddle-lite/lite/core/op_lite.cc:90 operator()] pick kernel for fetch host/any/any get 1 kernels

[5 11/16 2:59: 7.675 .../public/paddle-lite/lite/core/op_lite.cc:124 CreateKernels] op fetch get 1 kernels

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 403----------------

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 196----------------

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 202----------------

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:60 Initialize] The NNAdapter library libnnadapter.so is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:73 Initialize] NNAdapter_getVersion is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:74 Initialize] NNAdapter_getDeviceCount is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:75 Initialize] NNAdapterDevice_acquire is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:76 Initialize] NNAdapterDevice_release is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:77 Initialize] NNAdapterDevice_getName is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:78 Initialize] NNAdapterDevice_getVendor is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:79 Initialize] NNAdapterDevice_getType is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:80 Initialize] NNAdapterDevice_getVersion is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:81 Initialize] NNAdapterContext_create is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:82 Initialize] NNAdapterContext_destroy is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:83 Initialize] NNAdapterModel_create is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:84 Initialize] NNAdapterModel_destroy is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:85 Initialize] NNAdapterModel_finish is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:86 Initialize] NNAdapterModel_addOperand is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:87 Initialize] NNAdapterModel_setOperandValue is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:88 Initialize] NNAdapterModel_getOperandType is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:89 Initialize] NNAdapterModel_addOperation is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:90 Initialize] NNAdapterModel_identifyInputsAndOutputs is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:91 Initialize] NNAdapterCompilation_create is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:92 Initialize] NNAdapterCompilation_destroy is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:93 Initialize] NNAdapterCompilation_finish is loaded.

[4 11/16 2:59: 7.693 .../backends/nnadapter/nnadapter_wrapper.cc:94 Initialize] NNAdapterCompilation_queryInputsAndOutputs is loaded.

[4 11/16 2:59: 7.694 .../backends/nnadapter/nnadapter_wrapper.cc:95 Initialize] NNAdapterExecution_create is loaded.

[4 11/16 2:59: 7.694 .../backends/nnadapter/nnadapter_wrapper.cc:96 Initialize] NNAdapterExecution_destroy is loaded.

[4 11/16 2:59: 7.694 .../backends/nnadapter/nnadapter_wrapper.cc:97 Initialize] NNAdapterExecution_setInput is loaded.

[4 11/16 2:59: 7.694 .../backends/nnadapter/nnadapter_wrapper.cc:98 Initialize] NNAdapterExecution_setOutput is loaded.

[4 11/16 2:59: 7.694 .../backends/nnadapter/nnadapter_wrapper.cc:99 Initialize] NNAdapterExecution_compute is loaded.

[4 11/16 2:59: 7.694 .../backends/nnadapter/nnadapter_wrapper.cc:101 Initialize] Extract all of symbols from libnnadapter.so done.

[5 11/16 2:59: 7.878 ...pter/driver/huawei_ascend_npu/utility.cc:36 InitializeAscendCL] Initialize AscendCL.

[3 11/16 2:59: 7.990 ...le-lite/lite/kernels/nnadapter/engine.cc:295 Engine] NNAdapter device huawei_ascend_npu: vendor=Huawei type=2 version=1

[3 11/16 2:59: 7.990 ...le-lite/lite/kernels/nnadapter/engine.cc:306 Engine] NNAdapter context_properties:

[I 11/16 2:59: 7.990 ...apter/driver/huawei_ascend_npu/engine.cc:34 Context] properties:

[I 11/16 2:59: 7.990 ...apter/driver/huawei_ascend_npu/engine.cc:51 Context] selected device ids:

[I 11/16 2:59: 7.990 ...apter/driver/huawei_ascend_npu/engine.cc:53 Context] 0

[3 11/16 2:59: 7.990 ...le-lite/lite/kernels/nnadapter/engine.cc:313 Engine] NNAdapter model_cache_dir: /home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/cache

[3 11/16 2:59: 7.990 ...le-lite/lite/kernels/nnadapter/engine.cc:348 Run] NNAdapter model_cache_token: 54de7d70d80b9883fb1f8d10f70de40e

[3 11/16 2:59: 8. 6 ...le-lite/lite/kernels/nnadapter/engine.cc:351 Run] NNAdapter model_cache_buffer size: 10308356

[I 11/16 2:59: 8.262 ...adapter/nnadapter/runtime/compilation.cc:65 Compilation] Deserialize the cache models from memory success.

[I 11/16 2:59: 8.262 ...apter/driver/huawei_ascend_npu/driver.cc:66 CreateProgram] Create program for huawei_ascend_npu.

[3 11/16 2:59: 8.262 ...apter/driver/huawei_ascend_npu/engine.cc:91 Build] Model input count: 1

[3 11/16 2:59: 8.262 ...apter/driver/huawei_ascend_npu/engine.cc:94 Build] Model output count: 1

[5 11/16 2:59: 8.262 ...driver/huawei_ascend_npu/model_client.cc:29 AclModelClient] Create a ACL model client(device_id=0)

[3 11/16 2:59: 8.263 ...driver/huawei_ascend_npu/model_client.cc:33 AclModelClient] device_count: 1

[5 11/16 2:59: 8.263 ...driver/huawei_ascend_npu/model_client.cc:48 InitAclClientEnv] ACL set device(device_id_=0)

[5 11/16 2:59: 8.395 ...driver/huawei_ascend_npu/model_client.cc:50 InitAclClientEnv] ACL create context

[3 11/16 2:59: 8.551 ...driver/huawei_ascend_npu/model_client.cc:199 CreateModelIODataset] input_count: 1

[5 11/16 2:59: 8.551 ...driver/huawei_ascend_npu/model_client.cc:202 CreateModelIODataset] The buffer length of model input tensor 0:602112

[3 11/16 2:59: 8.552 ...driver/huawei_ascend_npu/model_client.cc:217 CreateModelIODataset] output_count: 1

[5 11/16 2:59: 8.552 ...driver/huawei_ascend_npu/model_client.cc:220 CreateModelIODataset] The buffer length of model output tensor 0:4000

[5 11/16 2:59: 8.552 ...driver/huawei_ascend_npu/model_client.cc:229 CreateModelIODataset] Create input and output dataset success.

[3 11/16 2:59: 8.552 ...driver/huawei_ascend_npu/model_client.cc:113 LoadModel] Load a ACL model success.

[3 11/16 2:59: 8.552 ...driver/huawei_ascend_npu/model_client.cc:156 GetModelIOTensorDim] input_count: 1

[5 11/16 2:59: 8.553 ...pter/driver/huawei_ascend_npu/utility.cc:365 ConvertACLFormatToGEFormat] geFormat: FORMAT_ND = 2

[5 11/16 2:59: 8.553 ...pter/driver/huawei_ascend_npu/utility.cc:344 ConvertACLDataTypeToGEDataType] geDataType: DT_FLOAT=0

[3 11/16 2:59: 8.553 ...driver/huawei_ascend_npu/model_client.cc:170 GetModelIOTensorDim] output_count: 1

[5 11/16 2:59: 8.553 ...pter/driver/huawei_ascend_npu/utility.cc:365 ConvertACLFormatToGEFormat] geFormat: FORMAT_ND = 2

[5 11/16 2:59: 8.553 ...pter/driver/huawei_ascend_npu/utility.cc:344 ConvertACLDataTypeToGEDataType] geDataType: DT_FLOAT=0

[5 11/16 2:59: 8.553 ...driver/huawei_ascend_npu/model_client.cc:183 GetModelIOTensorDim] Get input and output dimensions from a ACL model success.

[3 11/16 2:59: 8.553 ...apter/driver/huawei_ascend_npu/engine.cc:171 Build] CANN input tensors[0]: {1,3,224,224} 1,3,224,224

[3 11/16 2:59: 8.553 ...apter/driver/huawei_ascend_npu/engine.cc:192 Build] CANN output tensors[0]: {1,1000} 1,1000

[3 11/16 2:59: 8.553 ...apter/driver/huawei_ascend_npu/engine.cc:208 Build] Build success.

[3 11/16 2:59: 8.563 ...driver/huawei_ascend_npu/model_client.cc:343 Process] Process cost 2444 us

[5 11/16 2:59: 8.563 ...driver/huawei_ascend_npu/model_client.cc:370 Process] Process a ACL model success.

[3 11/16 2:59: 8.563 ...le-lite/lite/kernels/nnadapter/engine.cc:232 Execute] Process cost 3503 us

--------------/home/HwHiAiUser/wjp/hw_ocr/govern_test/image_classification_demo/shell/image_classification_demo.cc Line: 205----------------

[3 11/16 2:59: 8.566 ...driver/huawei_ascend_npu/model_client.cc:343 Process] Process cost 2102 us

[5 11/16 2:59: 8.566 ...driver/huawei_ascend_npu/model_client.cc:370 Process] Process a ACL model success.

[3 11/16 2:59: 8.566 ...le-lite/lite/kernels/nnadapter/engine.cc:232 Execute] Process cost 2734 us

iter 0 cost: 2.798000 ms

[3 11/16 2:59: 8.579 ...driver/huawei_ascend_npu/model_client.cc:343 Process] Process cost 1997 us

[5 11/16 2:59: 8.579 ...driver/huawei_ascend_npu/model_client.cc:370 Process] Process a ACL model success.

[3 11/16 2:59: 8.579 ...le-lite/lite/kernels/nnadapter/engine.cc:232 Execute] Process cost 2906 us

iter 1 cost: 2.994000 ms

[3 11/16 2:59: 8.592 ...driver/huawei_ascend_npu/model_client.cc:343 Process] Process cost 2004 us

[5 11/16 2:59: 8.592 ...driver/huawei_ascend_npu/model_client.cc:370 Process] Process a ACL model success.

[3 11/16 2:59: 8.592 ...le-lite/lite/kernels/nnadapter/engine.cc:232 Execute] Process cost 2917 us

iter 2 cost: 2.995000 ms

[3 11/16 2:59: 8.605 ...driver/huawei_ascend_npu/model_client.cc:343 Process] Process cost 2653 us

[5 11/16 2:59: 8.606 ...driver/huawei_ascend_npu/model_client.cc:370 Process] Process a ACL model success.

[3 11/16 2:59: 8.606 ...le-lite/lite/kernels/nnadapter/engine.cc:232 Execute] Process cost 3519 us

iter 3 cost: 3.594000 ms

[3 11/16 2:59: 8.618 ...driver/huawei_ascend_npu/model_client.cc:343 Process] Process cost 1777 us

[5 11/16 2:59: 8.619 ...driver/huawei_ascend_npu/model_client.cc:370 Process] Process a ACL model success.

[3 11/16 2:59: 8.619 ...le-lite/lite/kernels/nnadapter/engine.cc:232 Execute] Process cost 2637 us

iter 4 cost: 2.721000 ms

warmup: 1 repeat: 5, average: 3.020400 ms, max: 3.594000 ms, min: 2.721000 ms

results: 3

Top0 tabby, tabby cat - 0.529785

Top1 Egyptian cat - 0.418945

Top2 tiger cat - 0.045227

Preprocess time: 1.720000 ms

Prediction time: 3.020400 ms

Postprocess time: 0.393000 ms

[I 11/16 2:59: 8.633 ...apter/driver/huawei_ascend_npu/driver.cc:85 DestroyProgram] Destroy program for huawei_ascend_npu.

[5 11/16 2:59: 8.633 ...driver/huawei_ascend_npu/model_client.cc:252 DestroyDataset] Destroy a ACL dataset success.

[5 11/16 2:59: 8.633 ...driver/huawei_ascend_npu/model_client.cc:252 DestroyDataset] Destroy a ACL dataset success.

[5 11/16 2:59: 8.643 ...driver/huawei_ascend_npu/model_client.cc:141 UnloadModel] Unload a ACL model success(model_id=1)

[5 11/16 2:59: 8.643 ...driver/huawei_ascend_npu/model_client.cc:55 FinalizeAclClientEnv] Destroy ACL context

[5 11/16 2:59: 8.645 ...driver/huawei_ascend_npu/model_client.cc:60 FinalizeAclClientEnv] Reset ACL device(device_id_=0)

[5 11/16 2:59: 8.649 ...driver/huawei_ascend_npu/model_client.cc:79 FinalizeAclProfilingEnv] Destroy ACL profiling config

[5 11/16 2:59: 8.657 ...pter/driver/huawei_ascend_npu/utility.cc:26 FinalizeAscendCL] Finalize AscendCL.
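The summary line in the log above (average, max, min) can be recomputed from the per-iteration `iter N cost: X ms` lines, which is handy when comparing several saved runs. The following is a minimal sketch, assuming the log has been saved to a file or is piped in; the function name `summarize_iters` is made up for illustration and is not part of the demo.

```shell
# Summarize the "iter N cost: X ms" lines of a demo log.
# Reads the files given as arguments, or standard input if none are given.
summarize_iters() {
  awk '/^iter [0-9]+ cost:/ {
         v = $4 + 0                      # 4th field is the cost in ms
         sum += v; n++
         if (n == 1 || v > max) max = v
         if (n == 1 || v < min) min = v
       }
       END {
         if (n > 0)
           printf "repeat: %d, average: %.6f ms, max: %.6f ms, min: %.6f ms\n", n, sum / n, max, min
       }' "$@"
}
```

For the five iterations logged above (2.798, 2.994, 2.995, 3.594, and 2.721 ms), this gives an average of 3.020400 ms, matching the figure printed by the demo.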

Log including the conversion to an nnc model

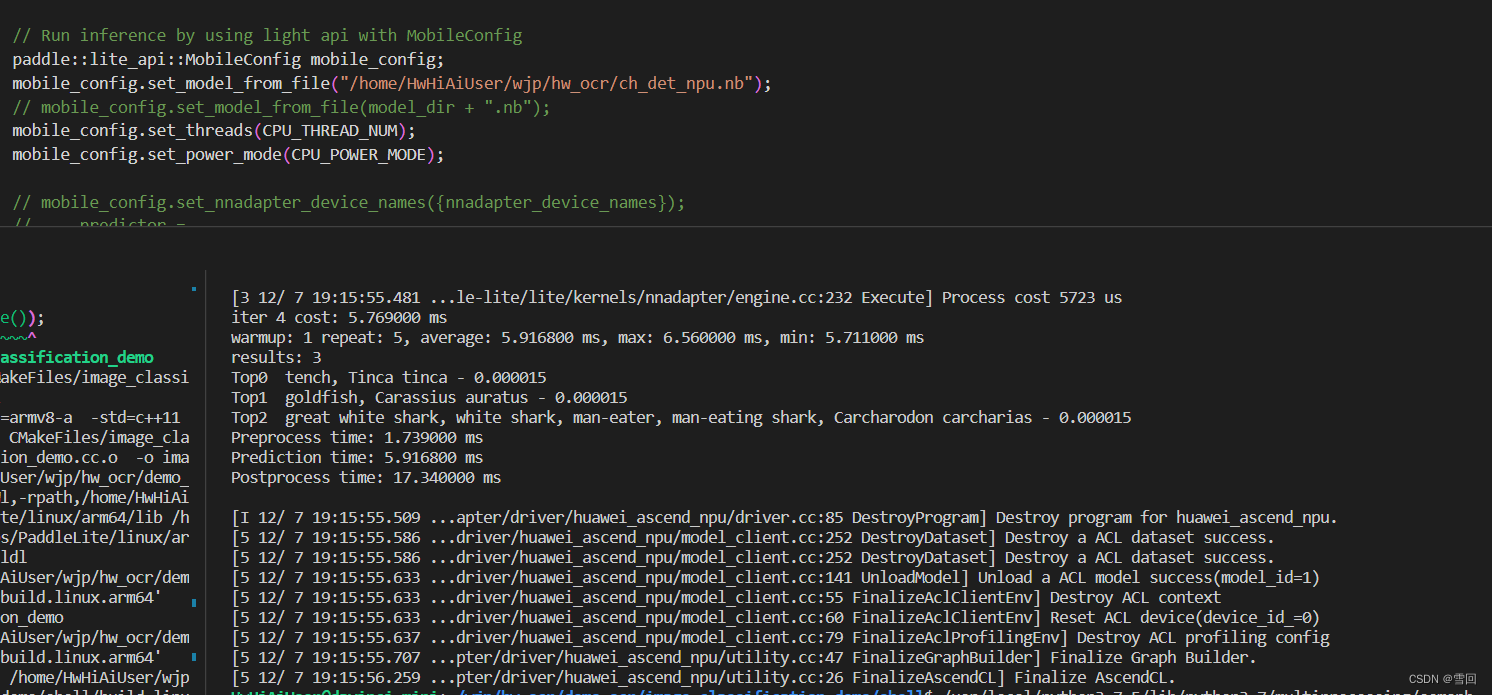

IV. Adapting the OCR code to NNAdapter and the Ascend NPU

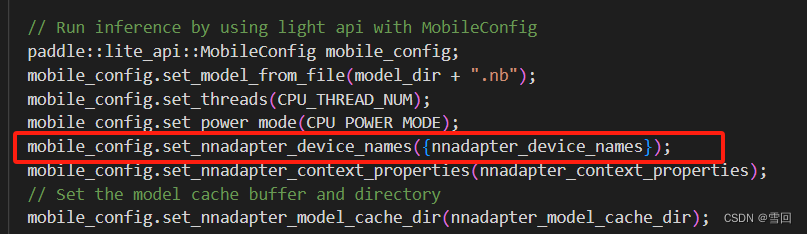

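Studying the demo shows that the most important part of making Paddle Lite use the Ascend NPU is adding the nnadapter_device parameter; on Ascend hardware it should be set to huawei_ascend_npu.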

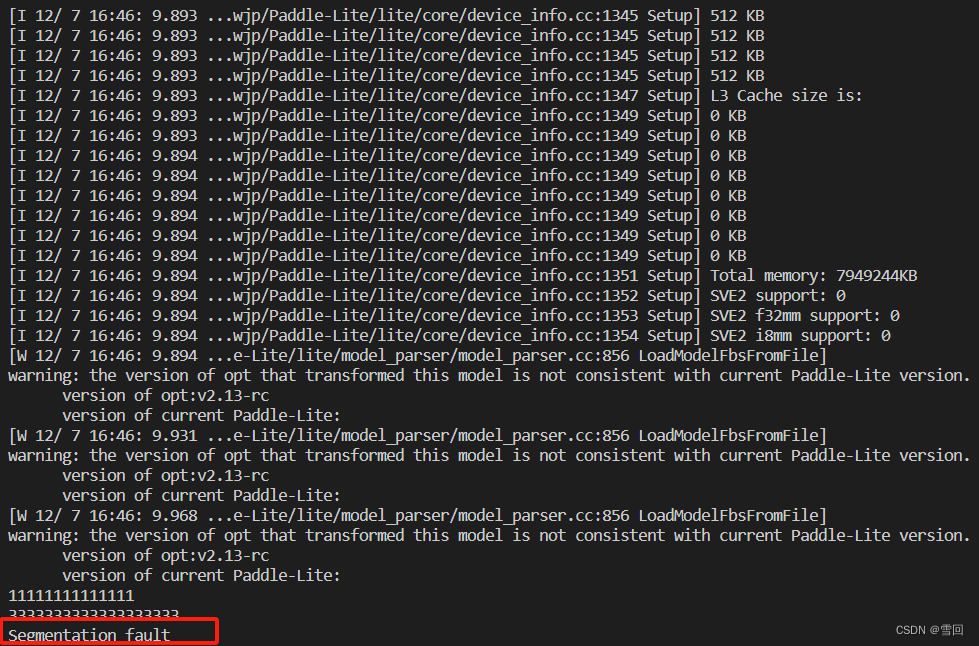

The part in the red box is what I rewrote based on the demo, but it fails with an unidentified error.

I do not yet know the specific cause.

V. The environment variables must be set!

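The Ascend-related settings must be written into the environment variables, and they must not be exported again in the script that invokes the executable; otherwise all sorts of strange errors still appear.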

1. Write the environment variables

vim ~/.bashrc

Adjust the paths below to match where ascend-toolkit is installed in your own environment.

export LD_LIBRARY_PATH=/usr/local/Ascend/acllib/lib64

export install_path=/usr/local/Ascend/ascend-toolkit/latest

# Package installation path; change it to match your setup

export ASCEND_OPP_PATH=${install_path}/opp

export ASCEND_AICPU_PATH=${install_path}/arm64-linux # arm64-linux is the {arch} part; replace it to match your system (arm64 or x86_64)

export TOOLCHAIN_HOME=${install_path}/toolkit

# Needed when developing offline inference programs

export LD_LIBRARY_PATH=${install_path}/acllib/lib64:$LD_LIBRARY_PATH

export PYTHONPATH=${install_path}/toolkit/python/site-packages:${install_path}/pyACL/python/site-packages/acl:$PYTHONPATH

# Needed for model conversion and operator development

export LD_LIBRARY_PATH=${install_path}/atc/lib64:$LD_LIBRARY_PATH

export PATH=${install_path}/atc/ccec_compiler/bin:${install_path}/atc/bin:$PATH

export PYTHONPATH=${install_path}/toolkit/python/site-packages:${install_path}/atc/python/site-packages:$PYTHONPATH

# Python 3.7.5 environment variables; replace with your actual paths

export LD_LIBRARY_PATH=/usr/local/python3.7.5/lib:/home/HwHiAiUser/aicamera/lib:$LD_LIBRARY_PATH

export PATH=/usr/local/python3.7.5/bin:$PATH

export LD_LIBRARY_PATH=/usr/local/protobuf/lib:$LD_LIBRARY_PATH
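Because `~/.bashrc` is sourced by every new interactive shell, including nested ones, lines of the form `export X=dir:$X` add the same directory again each time. One way to keep the search paths free of repeated entries is a small guard like the sketch below. The helper name `prepend_once` is hypothetical, and whether duplicate entries are behind the errors mentioned above has not been verified here.

```shell
# Print a colon-separated list with DIR prepended, unless DIR is already in it.
# Usage: prepend_once DIR LIST
prepend_once() {
  dir=$1
  list=$2
  case ":$list:" in
    *":$dir:"*) printf '%s\n' "$list" ;;              # already present: unchanged
    *)          printf '%s\n' "$dir${list:+:$list}" ;; # otherwise put DIR first
  esac
}

# Same install_path as in the exports above; adjust to the real toolkit location.
install_path=/usr/local/Ascend/ascend-toolkit/latest
LD_LIBRARY_PATH=$(prepend_once "$install_path/acllib/lib64" "${LD_LIBRARY_PATH:-}")
export LD_LIBRARY_PATH
```

Sourcing a file that uses this helper a second time leaves `LD_LIBRARY_PATH` unchanged, whereas the plain `export` form would grow it by one entry per shell.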

2. Set temporary environment variables in the script

# export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:.:../../libs/PaddleLite/$TARGET_OS/$TARGET_ABI/lib:../../libs/PaddleLite/$TARGET_OS/$TARGET_ABI/lib/$NNADAPTER_DEVICE_NAMES:../../libs/PaddleLite/$TARGET_OS/$TARGET_ABI/lib/cpu

export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/home/HwHiAiUser/wjp/hw_ocr/govern_test/libs/PaddleLite/linux/arm64/lib

# HUAWEI_ASCEND_TOOLKIT_HOME="/usr/local/Ascend/ascend-toolkit/5.0.2.alpha003" #"/usr/local/Ascend/ascend-toolkit/6.0.RC1.alpha003"

NNADAPTER_CONTEXT_PROPERTIES="HUAWEI_ASCEND_NPU_SELECTED_DEVICE_IDS=0"

# export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/usr/local/Ascend/driver/lib64

# export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/usr/local/Ascend/driver/lib64/stub

# export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$HUAWEI_ASCEND_TOOLKIT_HOME/fwkacllib/lib64

# export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$HUAWEI_ASCEND_TOOLKIT_HOME/acllib/lib64

# export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$HUAWEI_ASCEND_TOOLKIT_HOME/atc/lib64

# export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$HUAWEI_ASCEND_TOOLKIT_HOME/opp/op_proto/built-in

# export PYTHONPATH=$PYTHONPATH:$HUAWEI_ASCEND_TOOLKIT_HOME/fwkacllib/python/site-packages:$HUAWEI_ASCEND_TOOLKIT_HOME/acllib/python/site-packages:$HUAWEI_ASCEND_TOOLKIT_HOME/toolkit/python/site-packages:$HUAWEI_ASCEND_TOOLKIT_HOME/atc/python/site-packages:$HUAWEI_ASCEND_TOOLKIT_HOME/pyACL/python/site-packages/acl

# export PATH=$PATH:$HUAWEI_ASCEND_TOOLKIT_HOME/atc/ccec_compiler/bin:${HUAWEI_ASCEND_TOOLKIT_HOME}/acllib/bin:$HUAWEI_ASCEND_TOOLKIT_HOME/atc/bin

# export ASCEND_AICPU_PATH=$HUAWEI_ASCEND_TOOLKIT_HOME

# export ASCEND_OPP_PATH=$HUAWEI_ASCEND_TOOLKIT_HOME/opp

# export TOOLCHAIN_HOME=$HUAWEI_ASCEND_TOOLKIT_HOME/toolkit

# export ASCEND_SLOG_PRINT_TO_STDOUT=1

# export ASCEND_GLOBAL_LOG_LEVEL=1

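The executable has to load the corresponding shared libraries at run time, so the script sets a temporary environment variable that points to them.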
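A variable assignment placed directly in front of a command applies to that one command only, which keeps the library path temporary without touching the calling shell. The sketch below wraps this in a helper; the name `run_with_libdir` is made up for illustration, and unlike the script above it puts the directory at the front of the search path rather than at the end.

```shell
# Run a command with LIBDIR added to the front of LD_LIBRARY_PATH for that
# command only; the caller's LD_LIBRARY_PATH is left as it was.
# Usage: run_with_libdir LIBDIR COMMAND [ARGS...]
run_with_libdir() {
  libdir=$1
  shift
  LD_LIBRARY_PATH="$libdir${LD_LIBRARY_PATH:+:$LD_LIBRARY_PATH}" "$@"
}
```

With the paths from the script above, the call would look like `run_with_libdir /home/HwHiAiUser/wjp/hw_ocr/govern_test/libs/PaddleLite/linux/arm64/lib ./your_program`, where `./your_program` stands in for the actual executable. Running `ldd` on the executable and looking for `not found` entries is a quick way to see which libraries are still unresolved.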

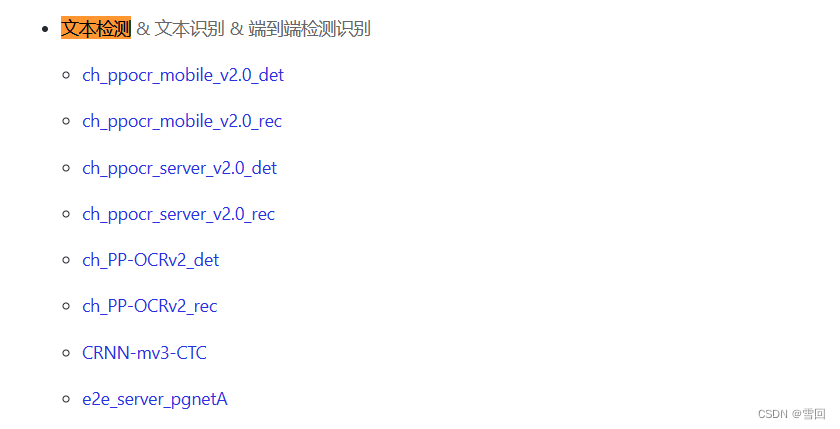

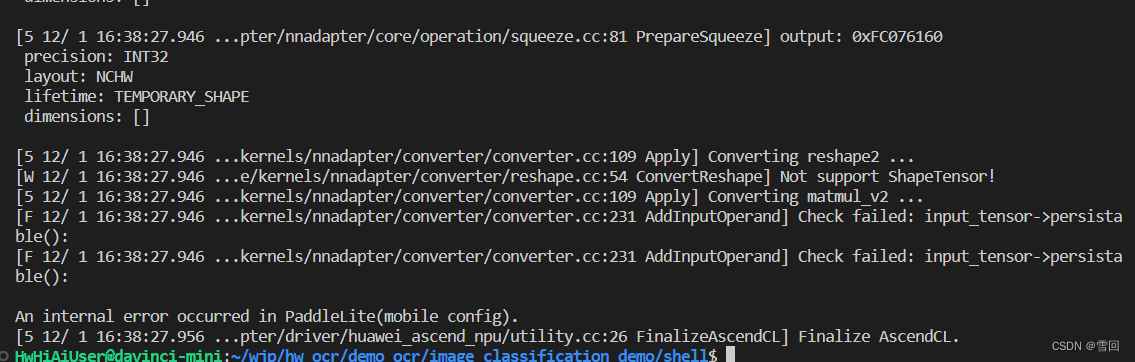

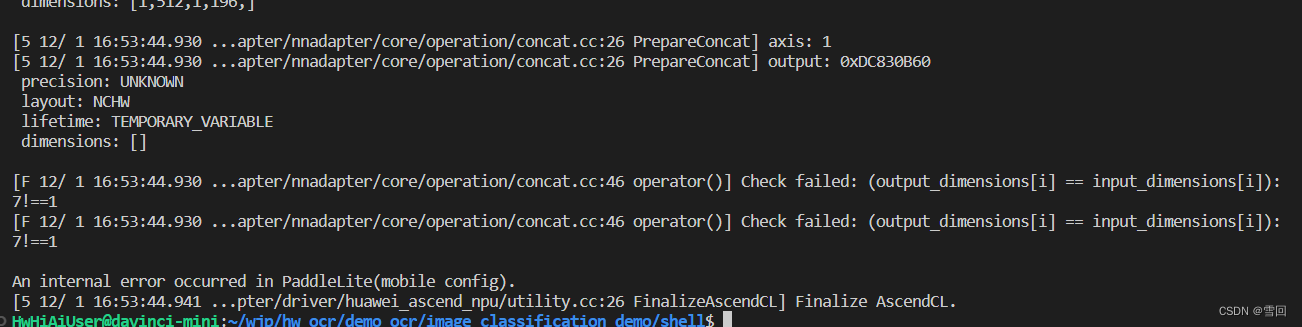

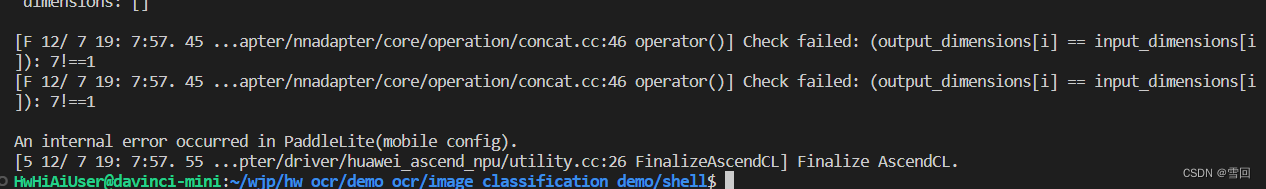

V. Some models cannot be converted to the nnc format for acceleration

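I then thought of another approach: use the demo that already runs with NPU acceleration to convert these OCR models directly into the encrypted nnc format.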

- Ascend documentation

https://www.paddlepaddle.org.cn/lite/develop/demo_guides/huawei_ascend_npu.html#npu

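It turned out that, among the models the documentation lists as supported, the det models convert successfully while the rec models do not.

The three screenshots above show the conversion results for ch_ppocr_mobile_v2.0_rec, ch_ppocr_server_v2.0_rec, and ch_PP-OCRv2_rec; all three conversions fail.

The det model converts successfully.

This shows that, other factors aside, some models simply cannot be converted.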
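When several models are tried one after another, it helps to record which ones actually produced a cache file. The sketch below assumes that each model is run with its own NNADAPTER_MODEL_CACHE_DIR and that a successful conversion leaves a `.nnc` file directly in that directory; both the per-model layout and the helper name `report_nnc_cache` are assumptions made for illustration, not something the demo provides.

```shell
# For each cache directory given, report whether it holds at least one .nnc file.
# Usage: report_nnc_cache DIR [DIR...]
report_nnc_cache() {
  for dir in "$@"; do
    if find "$dir" -maxdepth 1 -type f -name '*.nnc' 2>/dev/null | grep -q .; then
      echo "$dir: .nnc file found"
    else
      echo "$dir: no .nnc file"
    fi
  done
}
```

A missing `.nnc` file only says that no cache was written; the demo log is still the place to look for the reason a conversion failed.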

VI. Considering other approaches

To be continued.
