本文主要是介绍YOLOv8 C2f模块融合shuffleAttention注意力机制,希望对大家解决编程问题提供一定的参考价值,需要的开发者们随着小编来一起学习吧!

1. 引言

1.1YOLOv8直接添加注意力机制

yolov8添加注意力机制是一个非常常见的操作,常见的操作直接将注意力机制添加至YOLOv8的某一层之后,这种改进特别常见。

示例如下:

新版yolov8添加注意力机制(以NAMAttention注意力机制为例)

YOLOv8添加注意力机制(ShuffleAttention为例)

知网上常见的添加注意力机制的论文均使用的上述方式。

下面展示一种将注意力机制融合至模块中的方法。

1.2 YOLOv8 C2f融合注意力机制

C2f模块融合注意力机制,而不是直接放置在某一层后面。

示例如下:

YOLOv8将注意力机制融合进入C2f模块(SE注意力机制为例)

以及本篇shuffleAttention注意力机制。

1.3常见注意力机制

以下是一些常见的注意力机制实现的代码,具体看此贴。

常见注意力机制代码实现

2. 实验

2.1 ShuffleAttention

Shuffle注意力机制,代码如下:

class ShuffleAttention(nn.Module):def __init__(self, channel=512, reduction=16, G=8):super().__init__()self.G = Gself.channel = channelself.avg_pool = nn.AdaptiveAvgPool2d(1)self.gn = nn.GroupNorm(channel // (2 * G), channel // (2 * G))self.cweight = Parameter(torch.zeros(1, channel // (2 * G), 1, 1))self.cbias = Parameter(torch.ones(1, channel // (2 * G), 1, 1))self.sweight = Parameter(torch.zeros(1, channel // (2 * G), 1, 1))self.sbias = Parameter(torch.ones(1, channel // (2 * G), 1, 1))self.sigmoid = nn.Sigmoid()def init_weights(self):for m in self.modules():if isinstance(m, nn.Conv2d):init.kaiming_normal_(m.weight, mode='fan_out')if m.bias is not None:init.constant_(m.bias, 0)elif isinstance(m, nn.BatchNorm2d):init.constant_(m.weight, 1)init.constant_(m.bias, 0)elif isinstance(m, nn.Linear):init.normal_(m.weight, std=0.001)if m.bias is not None:init.constant_(m.bias, 0)@staticmethoddef channel_shuffle(x, groups):b, c, h, w = x.shapex = x.reshape(b, groups, -1, h, w)x = x.permute(0, 2, 1, 3, 4)# flattenx = x.reshape(b, -1, h, w)return xdef forward(self, x):b, c, h, w = x.size()# group into subfeaturesx = x.view(b * self.G, -1, h, w) # bs*G,c//G,h,w# channel_splitx_0, x_1 = x.chunk(2, dim=1) # bs*G,c//(2*G),h,w# channel attentionx_channel = self.avg_pool(x_0) # bs*G,c//(2*G),1,1x_channel = self.cweight * x_channel + self.cbias # bs*G,c//(2*G),1,1x_channel = x_0 * self.sigmoid(x_channel)# spatial attentionx_spatial = self.gn(x_1) # bs*G,c//(2*G),h,wx_spatial = self.sweight * x_spatial + self.sbias # bs*G,c//(2*G),h,wx_spatial = x_1 * self.sigmoid(x_spatial) # bs*G,c//(2*G),h,w# concatenate along channel axisout = torch.cat([x_channel, x_spatial], dim=1) # bs*G,c//G,h,wout = out.contiguous().view(b, -1, h, w)# channel shuffleout = self.channel_shuffle(out, 2)return out

可以将以上注意力机制的代码放到ultralytics/nn/modules/conv.py目录的最后。

2.2 模块添加

ShuffleAttention_Bottleneck和C2f_ShuffleAttention模块代码如下:

class ShuffleAttention_Bottleneck(nn.Module):def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):super().__init__()c_ = int(c2 * e)self.cv1 = Conv(c1, c_, k[0], 1)self.cv2 = Conv(c_, c2, k[1], 1, g=g)self.se = ShuffleAttention(c2, 16, 8)self.add = shortcut and c1 == c2def forward(self, x):return x + self.se(self.cv2(self.cv1(x))) if self.add else self.se(self.cv2(self.cv1(x)))class C2f_ShuffleAttention(nn.Module):def __init__(self, c1, c2, shortcut = False, g = 1, n = 1, e = 0.5):super().__init__()self.c = int(c2 * e)self.cv1 = Conv(c1, 2 * self.c, 1, 1)self.cv2 = Conv((2 + n) * self.c, c2, 1)self.m = nn.ModuleList(ShuffleAttention_Bottleneck(self.c, self.c, shortcut, g, k=((3,3),(3,3)), e = 1.0) for _ in range(n))def forward(self, x):y = list(self.cv1(x).chunk(2,1))y.extend(m(y[-1]) for m in self.m)return self.cv2(torch.cat(y, 1))def forward_split(self, x):y = list(self.cv1(x).split((self.c, self.c), 1))y.extend(m(y[-1]) for m in self.m)return self.cv2(torch.cat(y, 1))可以将以上ShuffleAttention_Bottleneck和C2f_ShuffleAttention模块的代码放到ultralytics/nn/modules/conv.py目录的最后。

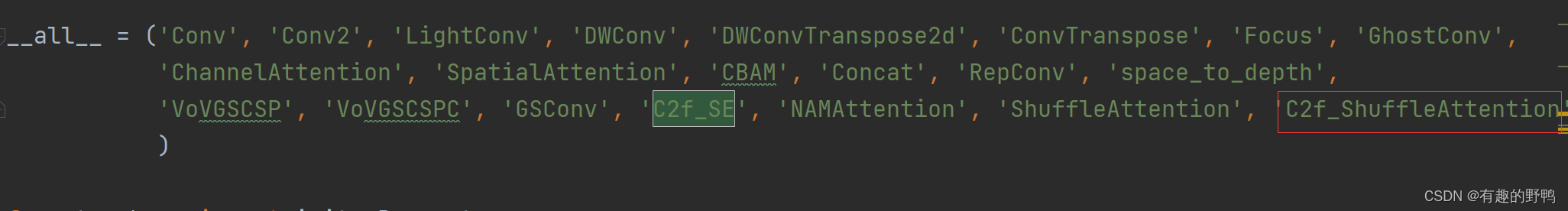

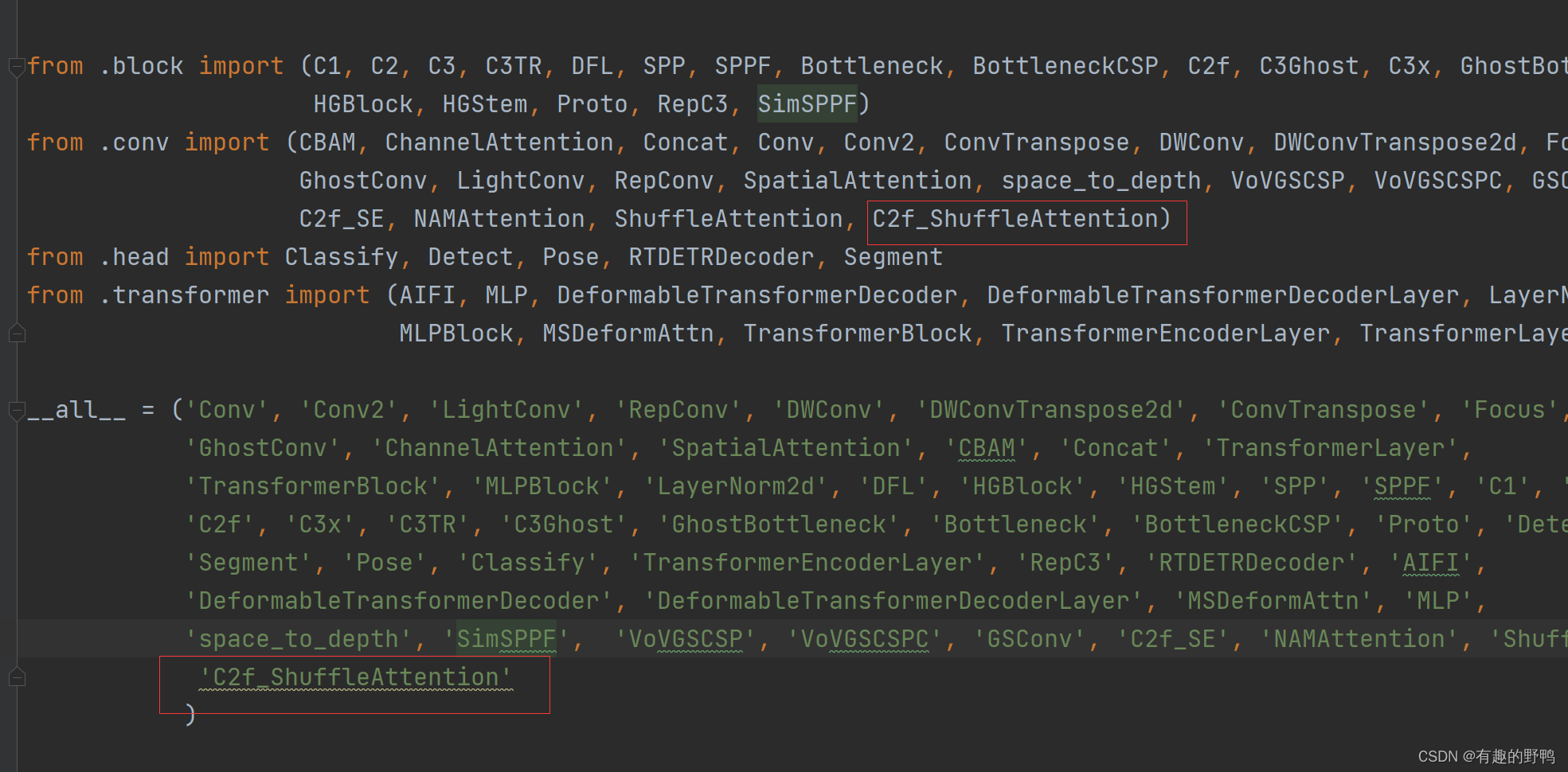

在ultralytics/nn/modules/conv.py文件的最前面添加C2f_ShuffleAttention。

在ultralytics/nn/modules/ __ init__.py中,添加C2f_ShuffleAttention模块。

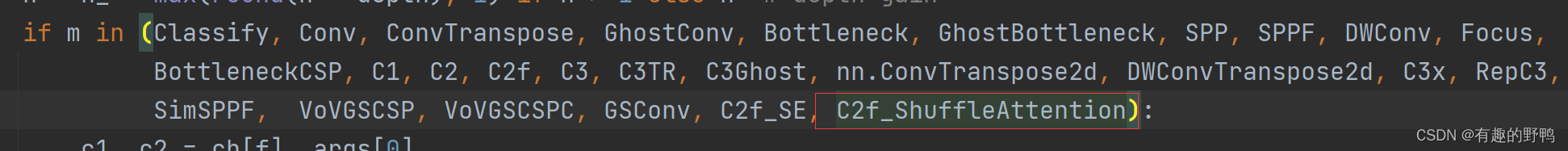

2.3 task.py改写

在ultralytics/nn/tasks.py中,在parse_model(d, ch, verbose=True)方法中,添加C2f_ShuffleAttention即可。

保持与C2f的调用一样。

2.4 模型改写

创建模块:ultralytics/cfg/models/v8/yolov8n-C2f_ShuffleAttention.yaml,以yolov8n为例:修改后的模型如下:

# Ultralytics YOLO 🚀, GPL-3.0 license# Parameters

nc: 1 # number of classes

depth_multiple: 0.33 # scales module repeats

width_multiple: 0.25 # scales convolution channels# YOLOv8.0n backbone

backbone:# [from, repeats, module, args]- [-1, 1, Conv, [64, 3, 2]] # 0-P1/2- [-1, 1, Conv, [128, 3, 2]] # 1-P2/4- [-1, 3, C2f_ShuffleAttention, [128, True]]- [-1, 1, Conv, [256, 3, 2]] # 3-P3/8- [-1, 6, C2f_ShuffleAttention, [256, True]]- [-1, 1, Conv, [512, 3, 2]] # 5-P4/16- [-1, 6, C2f_ShuffleAttention, [512, True]]- [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32- [-1, 3, C2f_ShuffleAttention, [1024, True]]- [-1, 1, SPPF, [1024, 5]] # 9# YOLOv8.0n head

head:- [-1, 1, nn.Upsample, [None, 2, 'nearest']]- [[-1, 6], 1, Concat, [1]] # cat backbone P4- [-1, 3, C2f, [512]] # 12- [-1, 1, nn.Upsample, [None, 2, 'nearest']]- [[-1, 4], 1, Concat, [1]] # cat backbone P3- [-1, 3, C2f, [256]] # 15 (P3/8-small)- [-1, 1, Conv, [256, 3, 2]]- [[-1, 12], 1, Concat, [1]] # cat head P4- [-1, 3, C2f, [512]] # 18 (P4/16-medium)- [-1, 1, Conv, [512, 3, 2]]- [[-1, 9], 1, Concat, [1]] # cat head P5- [-1, 3, C2f, [1024]] # 21 (P5/32-large)- [[15, 18, 21], 1, Detect, [nc]] # Detect(P3, P4, P5)也可以尝试不替换backbone中的C2f模块而替换head模块中的某些模块。

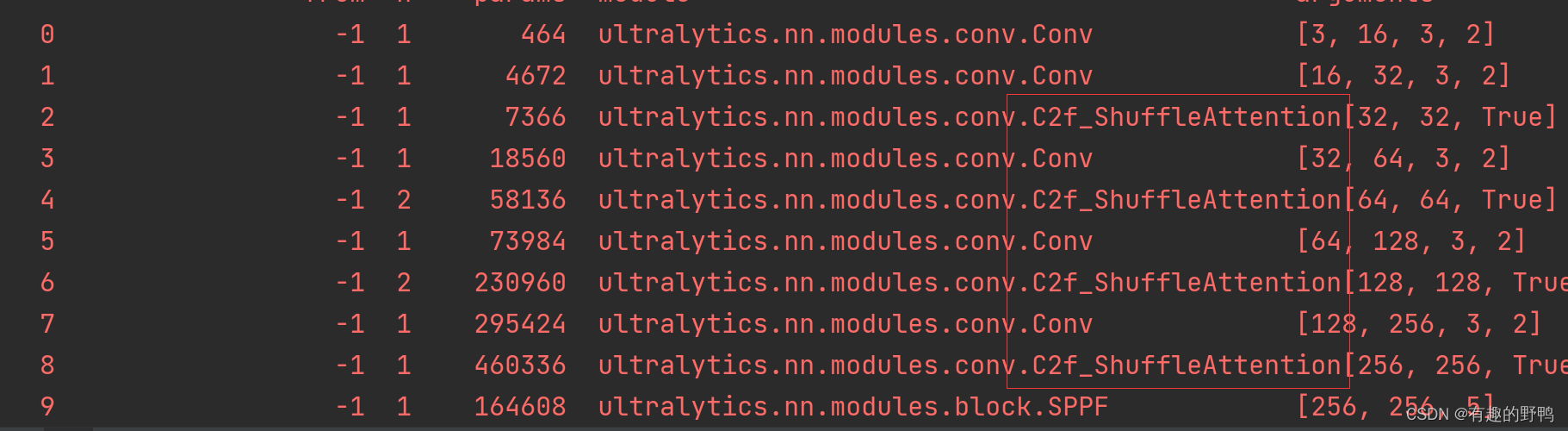

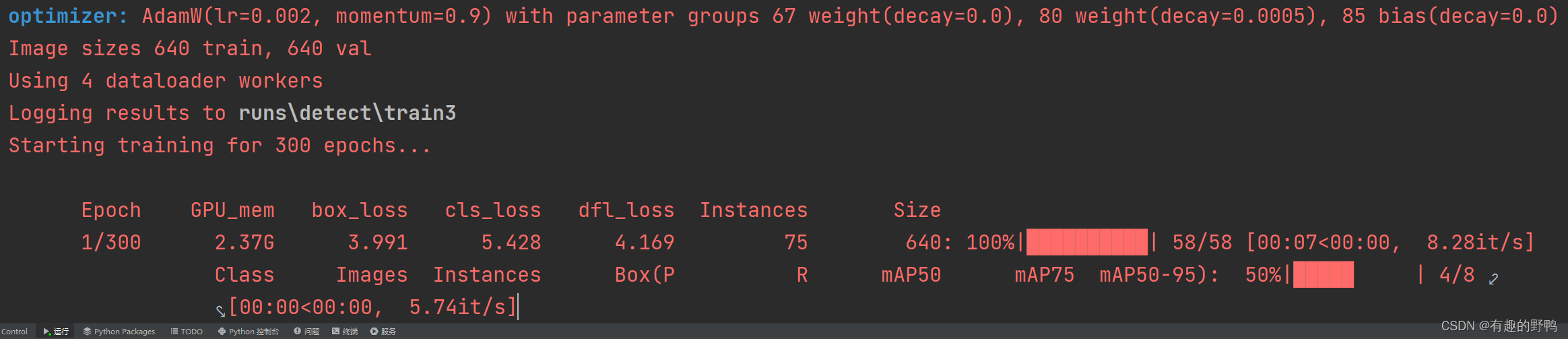

3.运行截图

模型运行图片如下

没有报错

这篇关于YOLOv8 C2f模块融合shuffleAttention注意力机制的文章就介绍到这儿,希望我们推荐的文章对编程师们有所帮助!