This article is part 8 of the series "Building a Big Data Platform on Alibaba Cloud" and covers installing, deploying, and testing Flume.

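I. Installing Flume

1. Extract the archive

tar -zxvf flume-ng-1.6.0-cdh5.15.0.tar.gz -C /opt/modules/

2. Rename the directory (run from /opt/modules)

mv apache-flume-1.6.0-cdh5.15.0-bin/ flume-1.6.0-cdh5.15.0-bin/

3. Edit the configuration file:

conf/flume-env.sh (if it does not exist, rename flume-env.sh.template to flume-env.sh)

export JAVA_HOME=/opt/modules/jdk1.8.0_151

4. Verify the installation

bin/flume-ng version

Output:

Flume 1.6.0-cdh5.15.0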

Source code repository: https://git-wip-us.apache.org/repos/asf/flume.git

Revision: efd9b9d9eccdb177341c096d73bcaf70f9ea31c6

Compiled by jenkins on Thu May 24 04:26:40 PDT 2018

From source with checksum ae1e74e47187f6790f7fd226a8ca1920

II. The flume-ng command

Usage: bin/flume-ng <command> [options]...

1. commands:

agent run a Flume agent

avro-client run an avro Flume client

2. options

(1) global options:

--conf,-c <conf> use configs in <conf> directory

(2) agent options:

--name,-n <name> the name of this agent (required)

--conf-file,-f <file> specify a config file (required if -z missing)

(3) avro-client options:

--rpcProps,-P <file> RPC client properties file with server connection params

--host,-H <host> hostname to which events will be sent

--port,-p <port> port of the avro source

--dirname <dir> directory to stream to avro source

--filename,-F <file> text file to stream to avro source (default: std input)

--headerFile,-R <file> File containing event headers as key/value pairs on each new line

(4) Commands for starting an agent or a client:

bin/flume-ng agent --conf conf --name agent --conf-file conf/test.properties

bin/flume-ng agent -c conf -n agent -f conf/test.properties -Dflume.root.logger=INFO,console

bin/flume-ng avro-client --conf conf --host hadoop --port 8080

III. Choosing a configuration for your deployment

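1. Flume installed on a node of the Hadoop cluster (the setup used in this article)

Set JAVA_HOME:

export JAVA_HOME=/opt/modules/jdk1.8.0_151

2. Flume installed on a node of the Hadoop cluster, with HA enabled

(1) The HDFS entry point changes: clients address the logical nameservice rather than a single NameNode host.

(2) Set JAVA_HOME: export JAVA_HOME=/opt/modules/jdk1.8.0_151

(3) Copy Hadoop's core-site.xml and hdfs-site.xml into Flume's conf directory.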
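Copying the two Hadoop client files into Flume's conf directory can be scripted. The following is a minimal sketch, not a definitive procedure: the function name `copy_hadoop_site_files` is hypothetical, and both directory arguments are assumptions that must be replaced with the paths on your own hosts.

```shell
#!/usr/bin/env bash
# Sketch: copy Hadoop's client configuration into Flume's conf directory.
# copy_hadoop_site_files is a hypothetical helper, not part of Flume or Hadoop.

copy_hadoop_site_files() {
    local hadoop_conf_dir="$1"   # assumed layout, e.g. "$HADOOP_HOME/etc/hadoop"
    local flume_conf_dir="$2"    # e.g. /opt/modules/flume-1.6.0-cdh5.15.0-bin/conf
    local name

    # Check both files first so a partial copy never happens.
    for name in core-site.xml hdfs-site.xml; do
        if [ ! -f "$hadoop_conf_dir/$name" ]; then
            echo "missing: $hadoop_conf_dir/$name" >&2
            return 1
        fi
    done

    # Copy the files; stop at the first failure.
    for name in core-site.xml hdfs-site.xml; do
        cp "$hadoop_conf_dir/$name" "$flume_conf_dir/$name" || return 1
    done
}
```

A call might look like `copy_hadoop_site_files "$HADOOP_HOME/etc/hadoop" /opt/modules/flume-1.6.0-cdh5.15.0-bin/conf`. When Flume runs on a different machine from Hadoop, the files would first have to be transferred to that machine, for example with scp.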

3. Flume installed outside the Hadoop cluster

(1) Set JAVA_HOME:

export JAVA_HOME=/opt/modules/jdk1.8.0_151

(2) Copy Hadoop's core-site.xml and hdfs-site.xml into Flume's conf directory.

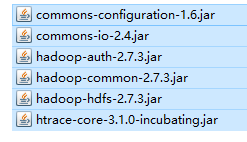

(3) Add the required Hadoop jars to Flume's lib directory. The jars must match the Hadoop version in use.
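All three cases in this section start by setting JAVA_HOME in conf/flume-env.sh. The sketch below shows one way to make that step repeatable. The function name `ensure_flume_env` is hypothetical, and it copies the template instead of renaming it so the original template stays in place; that is a choice made here, not something the steps above require.

```shell
#!/usr/bin/env bash
# Sketch: make sure conf/flume-env.sh exists and exports JAVA_HOME.
# ensure_flume_env is a hypothetical helper, not part of Flume.

ensure_flume_env() {
    local flume_conf_dir="$1"    # e.g. /opt/modules/flume-1.6.0-cdh5.15.0-bin/conf
    local java_home="$2"         # e.g. /opt/modules/jdk1.8.0_151
    local env_file="$flume_conf_dir/flume-env.sh"

    # Create flume-env.sh from the shipped template when it is missing.
    if [ ! -f "$env_file" ]; then
        if [ -f "$env_file.template" ]; then
            cp "$env_file.template" "$env_file"
        else
            : > "$env_file"
        fi
    fi

    # Append the export only if no uncommented JAVA_HOME export exists yet,
    # so running the function twice does not duplicate the line.
    if ! grep -q '^[[:space:]]*export[[:space:]][[:space:]]*JAVA_HOME=' "$env_file"; then
        printf 'export JAVA_HOME=%s\n' "$java_home" >> "$env_file"
    fi
}
```

For the installation described in this article, the call would be `ensure_flume_env /opt/modules/flume-1.6.0-cdh5.15.0-bin/conf /opt/modules/jdk1.8.0_151`. An existing uncommented JAVA_HOME export is left untouched, so a wrong value already in the file still has to be corrected by hand.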

IV. Running the example from the official documentation

1. Create the Flume configuration file conf/flume-conf.properties

# 1.Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# 2.Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = hadoop

a1.sources.r1.port = 44444

# 3.Describe the sink

a1.sinks.k1.type = logger

# 4.Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# 5.Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

2. Run Flume

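bin/flume-ng agent --name a1 --conf conf --conf-file conf/flume-conf.properties -Dflume.root.logger=INFO,console

3. Install telnet

sudo yum -y install telnet

4. Connect to port 44444 and type some test input

telnet hadoop 44444

Result: Flume receives the data typed into telnet, and the logger sink prints each event to the agent's console.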
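The same test can be run without installing telnet. The sketch below sends one line to the netcat source through bash's built-in /dev/tcp redirection, so it assumes bash rather than plain sh. The function name `send_event` is hypothetical. With default settings the netcat source should answer each line with an acknowledgement, which the function prints if one arrives within five seconds.

```shell
#!/usr/bin/env bash
# Sketch: send one line of text to a Flume netcat source and print its reply.
# send_event is a hypothetical helper; it relies on bash's /dev/tcp feature.

# The body runs in a subshell, so file descriptor 3 is closed on return.
send_event() (
    host="$1"
    port="$2"
    text="$3"

    # Open a read/write TCP connection on file descriptor 3.
    exec 3<>"/dev/tcp/$host/$port" || exit 1

    # The netcat source treats each newline-terminated line as one event.
    printf '%s\n' "$text" >&3

    # Wait up to 5 seconds for an acknowledgement line, if the source sends one.
    if IFS= read -r -t 5 reply <&3; then
        printf '%s\n' "$reply"
    fi
)
```

With the agent from step 2 running, `send_event hadoop 44444 "hello flume"` plays the role of typing one line into telnet. The function returns a non-zero status when the connection cannot be opened, which is a quick way to tell whether the source is listening.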
