This article walks through the Chapter 7 lab, Spark RDD programming, from Hadoop+Spark Big Data Technology (Micro-lecture Edition) by Zeng Guosun and Cao Jie.

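In Apache Spark, `sc` is the SparkContext (spark-shell creates it automatically at startup), and `reduce` is the RDD action that aggregates all elements into a single value.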
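Outside spark-shell nothing creates `sc` for you. The following is a minimal sketch of building it in a standalone application, assuming local mode with two threads (`local[2]`) and a hypothetical application name `RddLab`; the helper `sumWithReduce` is also hypothetical and just exercises `reduce`:

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Hypothetical standalone counterpart of what spark-shell does at startup.
object RddLab {
  // Builds a SparkContext; spark-shell creates one for you and binds it to `sc`.
  def createContext(): SparkContext = {
    val conf = new SparkConf()
      .setAppName("RddLab")  // hypothetical application name
      .setMaster("local[2]") // assumption: local mode with two worker threads
    new SparkContext(conf)
  }

  // Sums a collection with the reduce action used throughout this lab.
  def sumWithReduce(sc: SparkContext, xs: Seq[Int]): Int =
    sc.parallelize(xs).reduce(_ + _)

  def main(args: Array[String]): Unit = {
    val sc = createContext()
    try println(sumWithReduce(sc, 1 to 6)) // prints 21
    finally sc.stop()
  }
}
```

Stopping the context in `finally` releases its resources even if a job fails; by default only one active SparkContext is allowed per JVM.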

Creating an RDD from a text file

Note: the input file must first be placed in the Hadoop Distributed File System (HDFS).

1. First create data.txt in the local file system:

cd /usr/local/hadoop/input

gedit data.txt

2. Start HDFS (run this from the Hadoop installation directory, /usr/local/hadoop in this setup):

./sbin/start-dfs.sh

3. Upload the local file data.txt to HDFS:

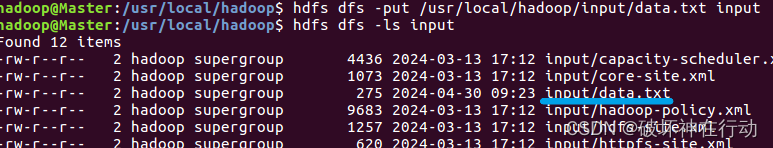

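hdfs dfs -mkdir -p input
hdfs dfs -put /usr/local/hadoop/input/data.txt input

Create the input directory first: if it does not exist, -put writes data.txt to a file named input instead. A relative HDFS path such as input resolves under the current user's home directory, so for the hadoop user the file ends up at /user/hadoop/input/data.txt.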

Check that data.txt is now in HDFS:

hdfs dfs -ls input
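The same existence check can be done from Scala code with Hadoop's FileSystem API, which is handy before calling `textFile`. This is a minimal sketch; the object name `InputCheck` is hypothetical, and inside spark-shell you would pass `sc.hadoopConfiguration` instead of a fresh `Configuration` so that the cluster's `fs.defaultFS` is used:

```scala
import org.apache.hadoop.conf.Configuration
import org.apache.hadoop.fs.{FileSystem, Path}

object InputCheck {
  // Returns true if the path exists on the file system it resolves to. A path without a
  // scheme, such as /user/hadoop/input/data.txt, resolves against fs.defaultFS: HDFS when
  // core-site.xml on the classpath points there, the local file system otherwise.
  def exists(pathStr: String, conf: Configuration = new Configuration()): Boolean = {
    val path = new Path(pathStr)
    val fs: FileSystem = path.getFileSystem(conf)
    fs.exists(path)
  }

  def main(args: Array[String]): Unit = {
    // Default target mirrors the path read by sc.textFile in this lab.
    val target = args.headOption.getOrElse("/user/hadoop/input/data.txt")
    println(s"$target exists: ${exists(target)}")
  }
}
```

In spark-shell the call would look like `InputCheck.exists("/user/hadoop/input/data.txt", sc.hadoopConfiguration)`.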

The `textFile` method reads a text file and returns an RDD[String] with one element per line.

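`rdd.map(line => line.length)` maps each line of text to its length, i.e. its number of characters.

`.map()` applies the given function to every element of the RDD and returns a new RDD containing the results.

`.reduce(_ + _)` adds up all elements of the RDD. `.reduce()` merges elements pairwise until a single value remains; the anonymous function `_ + _` adds two elements.

`val wordCount = rdd.map(line => line.length).reduce(_ + _)` sums the character counts of all lines in the file and stores the result in `wordCount`. Despite its name, the variable holds a character count, not a word count, and line terminators are not included because `textFile` strips them.

Evaluating `wordCount` then displays the total number of characters across all lines of the file.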

scala> val rdd = sc.textFile("/user/hadoop/input/data.txt")
rdd: org.apache.spark.rdd.RDD[String] = /user/hadoop/input/data.txt MapPartitionsRDD[31] at textFile at <console>:25

scala> val wordCount = rdd.map(line => line.length).reduce(_ + _)
wordCount: Int = 274

scala> wordCount
res11: Int = 274
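Because `reduce` first combines the elements inside each partition and then merges the per-partition results, the function passed to it should be associative and commutative, which `_ + _` on integers is. The sketch below reproduces the character count on an in-memory RDD so it runs without HDFS; the object name `CharCount` and the sample lines are hypothetical, and `partialSums` exposes the per-partition totals that `reduce` merges:

```scala
import org.apache.spark.{SparkConf, SparkContext}

object CharCount {
  // Total characters over all lines: map each line to its length, then reduce with +.
  def totalChars(sc: SparkContext, lines: Seq[String], partitions: Int): Int =
    sc.parallelize(lines, partitions).map(line => line.length).reduce(_ + _)

  // Character total of each partition, i.e. the partial results reduce combines.
  def partialSums(sc: SparkContext, lines: Seq[String], partitions: Int): Array[Int] =
    sc.parallelize(lines, partitions)
      .mapPartitions(it => Iterator(it.map(_.length).sum))
      .collect()

  def main(args: Array[String]): Unit = {
    val sc = new SparkContext(new SparkConf().setAppName("CharCount").setMaster("local[2]"))
    try {
      val lines = Seq("Hello Spark!", "Hello Scala!", "RDD") // hypothetical sample lines
      println(partialSums(sc, lines, 2).mkString(", "))
      println(totalChars(sc, lines, 2)) // 12 + 12 + 3 = 27
    } finally sc.stop()
  }
}
```

Note that `reduce` throws an exception on an empty RDD, so an empty input file would need a guard (or `fold(0)(_ + _)`).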

Some JSON data

{
  "name": "中国",
  "province": [
    {
      "name": "河南",
      "cities": [
        {
          "city": ["郑州", "洛阳"]
        }
      ]
    }
  ]
}


{
  "code": 0,
  "msg": "",
  "count": 2,
  "data": [
    {
      "id": 101,
      "username": "zhangsan",
      "city": "xiamen"
    },
    {
      "id": 102,
      "username": "liming",
      "city": "zhengzhou"
    }
  ]
}

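Contents of student.json, with one JSON object per line (the layout that textFile reads line by line):

{"学号":"106","姓名":"李明","数据结构":"92"}
{"学号":"242","姓名":"李乐","数据结构":"93"}
{"学号":"107","姓名":"冯涛","数据结构":"99"}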

Complete program exercise

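`seq` is a list of tuples, each pairing a string key with a list of strings.

`sc.parallelize` distributes `seq` as an RDD. Without an explicit slice count it uses `sc.defaultParallelism` partitions.

`rddP.partitions.size` returns the number of partitions of the RDD. Partitions are the logical chunks into which an RDD's data is divided; they determine how the data is spread across the cluster, and each partition can be processed in parallel on a different node.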
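Partitioning can be inspected directly. The sketch below, assuming a local-mode SparkContext and a hypothetical object name `PartitionDemo`, passes an explicit slice count to `parallelize` and uses `glom()` to turn each partition into an array so its size is visible. It also shows what happens with `makeRDD(seq)`: because every element is a `(String, List[String])` pair, Spark's `makeRDD` overload for `Seq[(T, Seq[String])]` treats each list as preferred host names and creates one partition per element, so the result is an `RDD[String]` of the keys:

```scala
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.rdd.RDD

object PartitionDemo {
  // Same data as `seq` in the lab: (key, list of strings) tuples.
  val seq: List[(String, List[String])] = List(
    ("num", List("one", "two", "three")),
    ("study", List("Scala", "Python", "Hadoop")),
    ("color", List("blue", "white", "black")))

  // parallelize with an explicit number of slices instead of the default parallelism;
  // glom() turns each partition into an array so its size can be inspected.
  def partitionSizes(sc: SparkContext, numSlices: Int): Array[Int] =
    sc.parallelize(seq, numSlices).glom().map(_.length).collect()

  // For a Seq[(T, Seq[String])] argument, makeRDD uses each Seq[String] as preferred
  // host names and creates one partition per element, yielding an RDD[T] of the keys.
  def makeRddKeys(sc: SparkContext): (Array[String], Int) = {
    val rddM: RDD[String] = sc.makeRDD(seq)
    (rddM.collect(), rddM.partitions.length)
  }

  def main(args: Array[String]): Unit = {
    val sc = new SparkContext(new SparkConf().setAppName("PartitionDemo").setMaster("local[2]"))
    try {
      println(partitionSizes(sc, 3).mkString(", ")) // prints 1, 1, 1
      val (keys, n) = makeRddKeys(sc)
      println(s"${keys.mkString(", ")} in $n partitions")
    } finally sc.stop()
  }
}
```

To get an RDD of the full tuples from `makeRDD`, pass a slice count explicitly, e.g. `sc.makeRDD(seq, 2)`, which selects the ordinary overload.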

// Create an RDD from data in the program
scala> val arr = Array(1,2,3,4,5,6)
arr: Array[Int] = Array(1, 2, 3, 4, 5, 6)

scala> val rdd = sc.parallelize(arr)  // distribute the local array as an RDD[Int]

scala> val sum = rdd.reduce(_ + _)
sum: Int = 21

// Create an RDD with parallelize()
scala> val seq = List( ("num", List("one","two","three")), ("study", List("Scala","Python","Hadoop")), ("color", List("blue","white","black")) )
seq: List[(String, List[String])] = List((num,List(one, two, three)), (study,List(Scala, Python, Hadoop)), (color,List(blue, white, black)))

scala> val rddP = sc.parallelize(seq)
rddP: org.apache.spark.rdd.RDD[(String, List[String])] = ParallelCollectionRDD[7] at parallelize at <console>:31

scala> rddP.partitions.size
res5: Int = 2

// Create an RDD with makeRDD(). Because each element of seq is a (String, List[String]) pair,
// this call picks the makeRDD overload that treats each list as preferred host names and
// creates one partition per element: rddM is an RDD[String] of the keys, with 3 partitions.
scala> val rddM = sc.makeRDD(seq)
rddM: org.apache.spark.rdd.RDD[String] = ParallelCollectionRDD[8] at makeRDD at <console>:30

scala> rddM.partitions.size
res6: Int = 3

// Create an RDD from a file in HDFS
scala> val rdd = sc.textFile("/user/hadoop/input/data.txt")
rdd: org.apache.spark.rdd.RDD[String] = /user/hadoop/input/data.txt MapPartitionsRDD[31] at textFile at <console>:25

scala> val wordCount = rdd.map(line => line.length).reduce(_ + _)
wordCount: Int = 274

scala> wordCount
res11: Int = 274

// Create an RDD from a file in the local file system
scala> val rdd = sc.textFile("file:/home/hadoop/data.txt")
rdd: org.apache.spark.rdd.RDD[String] = file:/home/hadoop/data.txt MapPartitionsRDD[34] at textFile at <console>:29

scala> val wordCount = rdd.map(line => line.length).reduce(_ + _)
wordCount: Int = 274

scala> wordCount
res12: Int = 274

// textFile on a directory: one element per line, across all files in the directory
scala> val rddw1 = sc.textFile("file:/home/hadoop/input")
rddw1: org.apache.spark.rdd.RDD[String] = file:/home/hadoop/input MapPartitionsRDD[37] at textFile at <console>:25

scala> rddw1.collect()
res13: Array[String] = Array(Hello Spark!, Hello Scala!)

// wholeTextFiles on a directory: one (file path, file content) pair per file
scala> val rddw1 = sc.wholeTextFiles("file:/home/hadoop/input")
rddw1: org.apache.spark.rdd.RDD[(String, String)] = file:/home/hadoop/input MapPartitionsRDD[39] at wholeTextFiles at <console>:27

scala> rddw1.collect()
res14: Array[(String, String)] =
Array((file:/home/hadoop/input/text1.txt,"Hello Spark!
"), (file:/home/hadoop/input/text2.txt,"Hello Scala!
"))

// Read a JSON file (one object per line) as plain text
scala> val jsonStr = sc.textFile("file:/home/hadoop/student.json")
jsonStr: org.apache.spark.rdd.RDD[String] = file:/home/hadoop/student.json MapPartitionsRDD[48] at textFile at <console>:28

scala> jsonStr.collect()
res18: Array[String] = Array({"学号":"106","姓名":"李明","数据结构":"92"}, {"学号":"242","姓名":"李乐","数据结构":"93"}, {"学号":"107","姓名":"冯涛","数据结构":"99"})

// Parse each line with scala.util.parsing.json.JSON (from the scala-parser-combinators
// module; deprecated in its recent releases)
scala> import scala.util.parsing.json.JSON
import scala.util.parsing.json.JSON

scala> val jsonStr = sc.textFile("file:/home/hadoop/student.json")
jsonStr: org.apache.spark.rdd.RDD[String] = file:/home/hadoop/student.json MapPartitionsRDD[50] at textFile at <console>:31

scala> val result = jsonStr.map(s => JSON.parseFull(s))
result: org.apache.spark.rdd.RDD[Option[Any]] = MapPartitionsRDD[51] at map at <console>:32

// foreach runs inside the tasks, so lines may print in any order, and on a cluster they go to
// the executors' stdout rather than the driver; result.collect().foreach(println) prints on the driver
scala> result.foreach(println)
Some(Map(学号 -> 107, 姓名 -> 冯涛, 数据结构 -> 99))
Some(Map(学号 -> 106, 姓名 -> 李明, 数据结构 -> 92))
Some(Map(学号 -> 242, 姓名 -> 李乐, 数据结构 -> 93))

// Parse CSV lines with CSVReader from opencsv (the au.com.bytecode.opencsv package of
// opencsv 2.x, which must be on the spark-shell classpath)
scala> import java.io.StringReader
import java.io.StringReader

scala> import au.com.bytecode.opencsv.CSVReader
import au.com.bytecode.opencsv.CSVReader

scala> val gradeRDD = sc.textFile("file:/home/hadoop/sparkdata/grade.csv")
gradeRDD: org.apache.spark.rdd.RDD[String] = file:/home/hadoop/sparkdata/grade.csv MapPartitionsRDD[53] at textFile at <console>:31

scala> val result = gradeRDD.map { line => val reader = new CSVReader(new StringReader(line)); reader.readNext() }
result: org.apache.spark.rdd.RDD[Array[String]] = MapPartitionsRDD[54] at map at <console>:32

scala> result.collect().foreach(x => println((x(0), x(1), x(2))))
(101,LiNing,95)
(102,LiuTao,90)
(103,WangFei,96)
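The two directory reads in the session differ in granularity: `textFile` yields one element per line and loses file boundaries, while `wholeTextFiles` yields one `(path, content)` pair per file. The sketch below reproduces both without a prepared directory; the object name `DirectoryRead` is hypothetical, and it assumes local mode and writes two temporary stand-ins for text1.txt and text2.txt. Results are sorted because the order of `collect()` across files is not something to rely on:

```scala
import java.nio.charset.StandardCharsets
import java.nio.file.{Files, Path}
import org.apache.spark.{SparkConf, SparkContext}

object DirectoryRead {
  // Writes two small files into a fresh temporary directory (stand-ins for text1.txt and text2.txt).
  def makeInputDir(): Path = {
    val dir = Files.createTempDirectory("rdd-input")
    Files.write(dir.resolve("text1.txt"), "Hello Spark!\n".getBytes(StandardCharsets.UTF_8))
    Files.write(dir.resolve("text2.txt"), "Hello Scala!\n".getBytes(StandardCharsets.UTF_8))
    dir
  }

  // textFile: one element per line from every file in the directory, line terminators stripped.
  def lines(sc: SparkContext, dir: Path): Array[String] =
    sc.textFile(dir.toUri.toString).collect().sorted

  // wholeTextFiles: one (file path, entire file content) pair per file, sorted by path.
  def files(sc: SparkContext, dir: Path): Array[(String, String)] =
    sc.wholeTextFiles(dir.toUri.toString).collect().sortBy(_._1)

  def main(args: Array[String]): Unit = {
    val sc = new SparkContext(new SparkConf().setAppName("DirectoryRead").setMaster("local[2]"))
    try {
      val dir = makeInputDir()
      lines(sc, dir).foreach(println)
      files(sc, dir).foreach { case (path, content) => println(s"$path -> ${content.trim}") }
    } finally sc.stop()
  }
}
```

`wholeTextFiles` loads each file as a single string, so it suits many small files; for large files, `textFile` keeps memory use per element small.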
