本文主要是介绍解决:PytorchStreamWriter failed writing file data,希望对大家解决编程问题提供一定的参考价值,需要的开发者们随着小编来一起学习吧!

文章目录

- 问题内容

- 问题分析

- 解决思路

问题内容

今天在炼丹的时候,我发现模型跑到140步的时候保存权重突然报了个问题,详细内容如下:

Traceback (most recent call last):File "/public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/serialization.py", line 423, in save_save(obj, opened_zipfile, pickle_module, pickle_protocol)File "/public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/serialization.py", line 650, in _savezip_file.write_record(name, storage.data_ptr(), num_bytes)

RuntimeError: [enforce fail at inline_container.cc:450] . PytorchStreamWriter failed writing file data/1125: file write failedDuring handling of the above exception, another exception occurred:Traceback (most recent call last):File "ds_train.py", line 160, in <module>main()File "ds_train.py", line 135, in mainmodel_engine.save_checkpoint(f"{cfg.output_dir}")File "/public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/deepspeed/runtime/engine.py", line 2890, in save_checkpointself._save_checkpoint(save_dir, tag, client_state=client_state)File "/public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/deepspeed/runtime/engine.py", line 3092, in _save_checkpointself.checkpoint_engine.save(state, save_path)File "/public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/deepspeed/runtime/checkpoint_engine/torch_checkpoint_engine.py", line 22, in savetorch.save(state_dict, path)File "/public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/serialization.py", line 424, in savereturnFile "/public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/serialization.py", line 290, in __exit__self.file_like.write_end_of_file()

RuntimeError: [enforce fail at inline_container.cc:325] . unexpected pos 286145984 vs 286145872

terminate called after throwing an instance of 'c10::Error'what(): [enforce fail at inline_container.cc:325] . unexpected pos 286145984 vs 286145872

frame #0: c10::ThrowEnforceNotMet(char const*, int, char const*, std::string const&, void const*) + 0x47 (0x2b48b09ef7d7 in /public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/lib/libc10.so)

frame #1: <unknown function> + 0x2fd16e0 (0x2b487a93e6e0 in /public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/lib/libtorch_cpu.so)

frame #2: mz_zip_writer_add_mem_ex_v2 + 0x723 (0x2b487a9392c3 in /public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/lib/libtorch_cpu.so)

frame #3: caffe2::serialize::PyTorchStreamWriter::writeRecord(std::string const&, void const*, unsigned long, bool) + 0xb5 (0x2b487a941835 in /public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/lib/libtorch_cpu.so)

frame #4: caffe2::serialize::PyTorchStreamWriter::writeEndOfFile() + 0x2c3 (0x2b487a941d43 in /public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/lib/libtorch_cpu.so)

frame #5: caffe2::serialize::PyTorchStreamWriter::~PyTorchStreamWriter() + 0x125 (0x2b487a941ff5 in /public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/lib/libtorch_cpu.so)

frame #6: <unknown function> + 0x66a353 (0x2b486ea82353 in /public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/lib/libtorch_python.so)

frame #7: <unknown function> + 0x23c986 (0x2b486e654986 in /public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/lib/libtorch_python.so)

frame #8: <unknown function> + 0x23debe (0x2b486e655ebe in /public/home/dyedd/.conda/envs/diffusers/lib/python3.8/site-packages/torch/lib/libtorch_python.so)

frame #9: <unknown function> + 0x110632 (0x560fa3677632 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #10: <unknown function> + 0x110059 (0x560fa3677059 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #11: <unknown function> + 0x110043 (0x560fa3677043 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #12: <unknown function> + 0x110043 (0x560fa3677043 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #13: <unknown function> + 0x110043 (0x560fa3677043 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #14: <unknown function> + 0x110043 (0x560fa3677043 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #15: <unknown function> + 0x110043 (0x560fa3677043 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #16: <unknown function> + 0x177ce7 (0x560fa36dece7 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #17: PyDict_SetItemString + 0x4c (0x560fa36e1d8c in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #18: PyImport_Cleanup + 0xaa (0x560fa3754a2a in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #19: Py_FinalizeEx + 0x79 (0x560fa37ba4c9 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #20: Py_RunMain + 0x1bc (0x560fa37bd83c in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #21: Py_BytesMain + 0x39 (0x560fa37bdc29 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

frame #22: __libc_start_main + 0xf5 (0x2b4852ea13d5 in /lib64/libc.so.6)

frame #23: <unknown function> + 0x1f9ad7 (0x560fa3760ad7 in /public/home/dyedd/.conda/envs/diffusers/bin/python)

问题分析

这个问题实际上是说,模型权重在保存的时候不完整。

这时候我惊呆了,我的模型都已经保存了140次了,怎么回事?难道是我的程序写出了隐藏BUG?

吃惊的同时召唤魔法去搜索,果然网友也有我的这个问题,但是他们说保存的目录有问题。我立马转回去看我的权重保存路径,没错呢,程序也不可能自动删除目录呀。看来我们遇到的不是同一个问题。

直到…

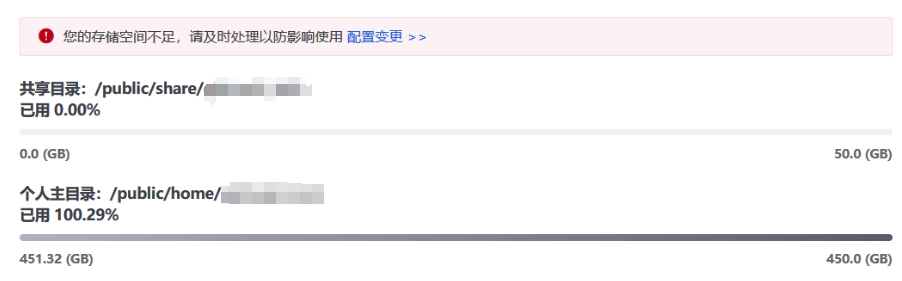

我看了下的硬盘,好家伙。怪不得模型权重保存不完整,原先是我的硬盘被前面的140次保存给吃饱了,一丁点空间都没有~

哎,十分感叹,果然大模型的时代,训练的东西都不是小孩,在以前就是保存几千次都没有这么多问题。

解决思路

所以这个问题如何解决呢?

那就是删除被占用的空间,当然你还可以设置保存权重的频率,例如在deepspeed:

for epoch in range(cfg.num_epochs) :model_engine.train()for i, data in enumerate(training_dataloader):if i % cfg.save_interval == 0:# save checkpointmodel_engine.save_checkpoint(f"{cfg.output_dir}")

又回到刚刚说的删除被占用的空间,我建议不要全删了,因为deepspeed会把目前最优的权重文件夹保存在latest文件,你只要双击查看,然后删除多余的其它文件即可。

这就是典型的排他思想,哈哈,又回想起学JS的时候Pink老师说的。

代码我用GPT4写好了,并且经过了充分的验证:

import os

import shutildef cleanup_except_latest(directory_path):# 读取 latest 文件的内容latest_file_path = os.path.join(directory_path, "latest")with open(latest_file_path, "r") as file:# 读取要保留的文件夹名称folders_to_keep = file.read().strip().split('\n')# 添加"latest"到保留列表folders_to_keep.append("latest")# 获取目录下的所有文件和文件夹all_items = os.listdir(directory_path)# 过滤出所有文件夹folders = [item for item in all_items if os.path.isdir(os.path.join(directory_path, item))]# 删除不在保留列表中的文件夹for folder in folders:if folder not in folders_to_keep:folder_path = os.path.join(directory_path, folder)shutil.rmtree(folder_path)# 返回更新后的目录内容print(os.listdir(directory_path))if __name__ == '__main__':cleanup_except_latest("/home/dcuuser/dxm/diffusers/train/FineTunedStableDiffusion-lora")

在deepspeed重新训练的时候会检测到当前保留的最优值,然后继续开始训练的,所以不要担心删除了会造成什么影响。

- 转载于染念的博客

这篇关于解决:PytorchStreamWriter failed writing file data的文章就介绍到这儿,希望我们推荐的文章对编程师们有所帮助!