This article covers Wang Xuegang's course notes on the FFmpeg A/V synchronization mechanism and the OpenSL ES player workflow (lessons 43 to 47).

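Preface

1. AudioTrack can only play ordinary audio; it cannot handle less common audio formats, supports few formats overall, and cannot apply effects.

2. OpenSL ES is dedicated to audio playback.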

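Architecture

1. The architecture is split into a Java layer and a native layer: the Java layer controls, the native layer plays.

2. Playback is itself a service, so it lives in a service class.

3. Playback controls (play, pause, seek, and so on) are written in the Activity.

4. The service and the controls communicate through broadcasts.

5. The service holds a reference to MNPlayer.

6. MNFFmpeg is the single entry point for everything that calls into the player.

7. native-lib is only responsible for the JNI interface.

8. OpenSL ES development flow:

Create the object (create), initialize it (Realize), then obtain its interface (GetInterface).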

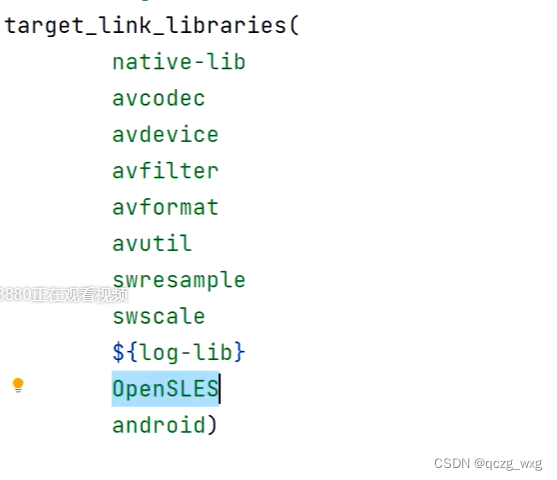

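9. OpenSL ES is provided by the system, so the system library has to be linked in.

10. MNFFmpeg reads the data (audio, video, and so on) and initializes the FFmpeg modules.

11. In this player OpenSL ES is not given AAC data; it only plays decoded data.

12. An AVFrame holds the decoded data.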

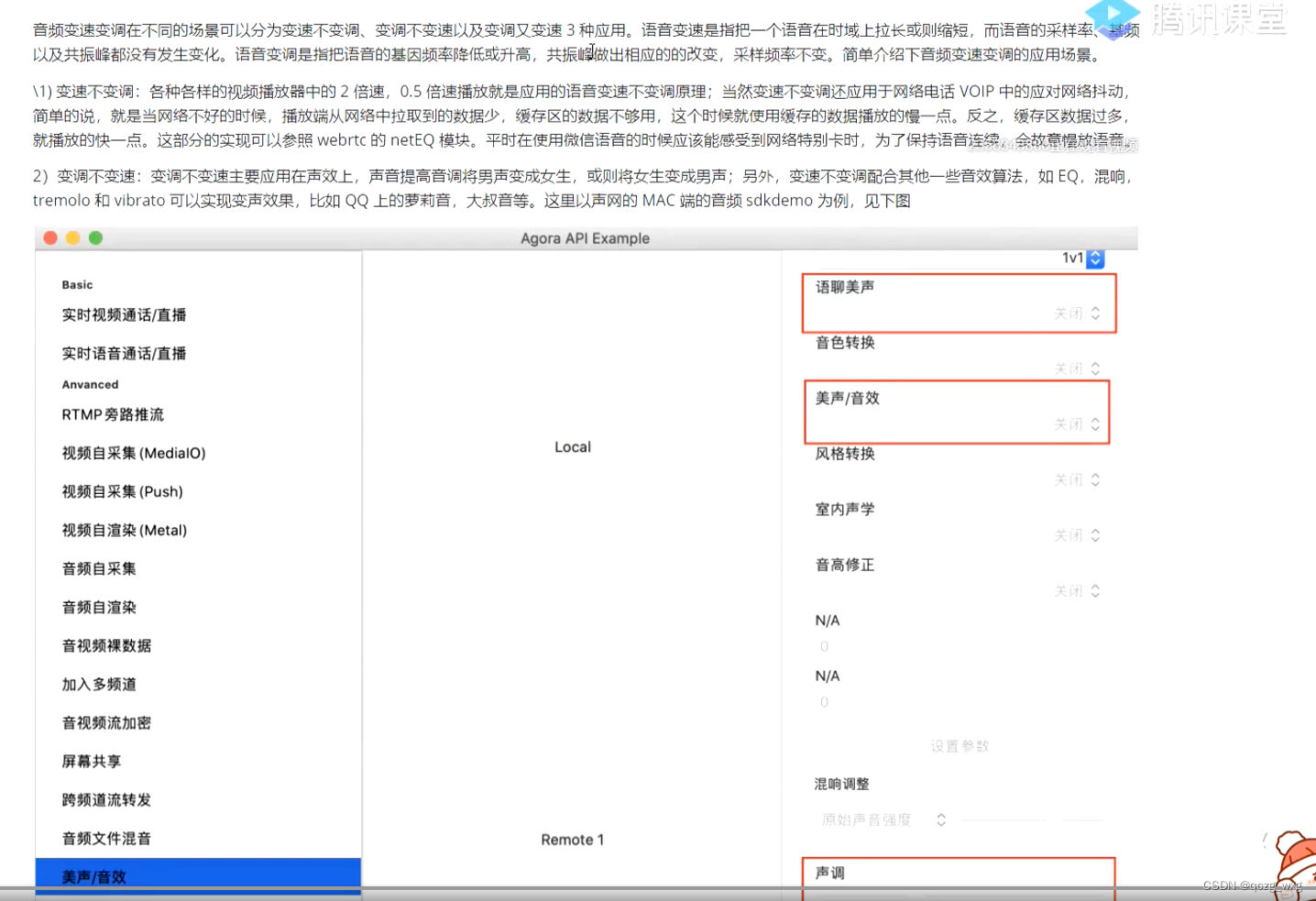

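13. Audio speed change: the waveform has to be reshaped, which requires the SoundTouch library.

14. Video speed change: drop frames.

15. SoundTouch

16. Voice changing (for example a high-pitched girl's voice or a deep man's voice) is implemented with the FMOD library.

17. Reshape the waveform as late as possible, right before the speaker; reshaping compressed data is pointless.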

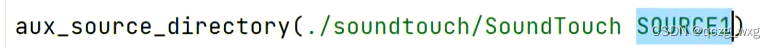

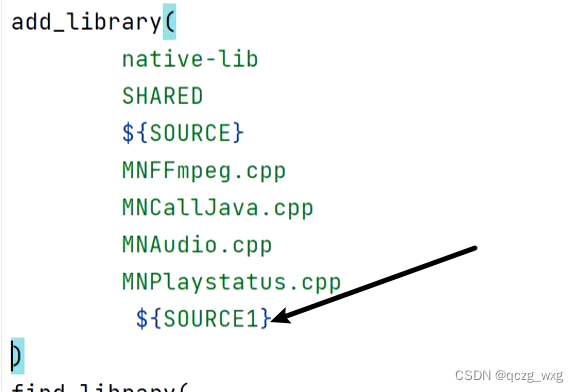

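18. Import the header files.

19. Import the library files.

The name that follows is the variable name.

20. One sample value takes two bytes, and a short is also two bytes.

21. Each audio frame is small in bytes, and its size is constant.

22. Video is rendered with OpenGL; audio is rendered with OpenSL ES.

23. Threads are created through the C pthread API; the C++ code here uses the same C threads.

24. Synchronization is driven by the audio, because audio is linear.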
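Notes 20 and 21 are what make the fixed buffer sizes in the code below work: with 16-bit stereo PCM, the byte rate depends only on the sample rate, the channel count, and the bytes per sample. The following is a minimal sketch of that arithmetic; the function names `pcmBytesPerSecond` and `pcmDurationSeconds` are made up for illustration and do not appear in the course code.

```cpp
#include <cassert>
#include <cstdint>

// Bytes needed to hold one second of interleaved PCM audio.
// (Hypothetical helper, not part of the course code.)
std::int64_t pcmBytesPerSecond(int sampleRate, int channels, int bytesPerSample) {
    assert(sampleRate > 0 && channels > 0 && bytesPerSample > 0);
    return static_cast<std::int64_t>(sampleRate) * channels * bytesPerSample;
}

// Playback duration, in seconds, of `byteCount` bytes of PCM audio.
double pcmDurationSeconds(std::int64_t byteCount, int sampleRate, int channels,
                          int bytesPerSample) {
    return static_cast<double>(byteCount) /
           static_cast<double>(pcmBytesPerSecond(sampleRate, channels, bytesPerSample));
}
```

For 44100 Hz stereo with 2-byte samples, `pcmBytesPerSecond(44100, 2, 2)` gives 176400, which is the same `sample_rate * 2 * 2` expression the constructor uses to size its buffers.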

Overall architecture diagram

Code

//
// Created by maniu on 2022/8/5.
//
#include <pthread.h>
#include "MNAudio.h"
#include "AndroidLog.h"

MNAudio::MNAudio(MNPlaystatus *playstatus, int sample_rate, MNCallJava *callJava) {
    this->playstatus = playstatus;
    this->sample_rate = sample_rate;
    // Allocate the PCM buffer once in the constructor. Its size can be fixed up front
    // because it depends only on the sample rate, the channel count (stereo, so x 2)
    // and the sample size (normally two bytes).
    buffer = (uint8_t *) av_malloc(sample_rate * 2 * 2);
    queue = new MNQueue(playstatus);
    this->callJava = callJava;
    soundTouch = new SoundTouch();
    // The SoundTouch buffer has the same fixed size:
    // sample rate x sample size (16-bit) x channel count.
    sampleBuffer = static_cast<SAMPLETYPE *>(malloc(sample_rate * 2 * 2));
    // Set the sample rate.
    soundTouch->setSampleRate(sample_rate);
    // Set the channel count, then the initial tempo and pitch.
    soundTouch->setChannels(2);
    soundTouch->setTempo(1.0);
    soundTouch->setPitch(this->speed);
}

// decodPlay is a plain C-style function, not a member of MNAudio, so it has no
// `this` pointer. To reach the object, the caller has to pass `this` in as the
// thread argument.
void *decodPlay(void *data) {
    // Cast the argument back to the MNAudio object.
    MNAudio *mnAudio = (MNAudio *) data;
    mnAudio->initOpenSLES();
    pthread_exit(&mnAudio->thread_play);
}

void MNAudio::play() {
    // Start the playback thread and hand `this` to decodPlay as its argument.
    pthread_create(&thread_play, NULL, decodPlay, this);
}

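// Whenever the speaker runs out of data, OpenSL ES calls this callback to pull
// more, so decoding is driven from here.
void pcmBufferCallBack(SLAndroidSimpleBufferQueueItf bf, void *context) {
    // Cast the context back to the MNAudio object.
    MNAudio *mnAudio = (MNAudio *) context;
    if (mnAudio != NULL) {
        // The goal of this callback is to supply PCM data.
        // Decode and process; buffersize is the actual amount produced.
        int buffersize = mnAudio->getSoundTouchData();
        if (buffersize > 0) {
            // Time spent playing this buffer = actual size / size of one second of audio.
            mnAudio->clock += buffersize / ((double) (mnAudio->sample_rate * 2 * 2));
            // Report the playback time to the Java layer at most every 0.1 s.
            if (mnAudio->clock - mnAudio->last_tiem >= 0.1) {
                mnAudio->last_tiem = mnAudio->clock;
                // Call back into the Java layer.
                mnAudio->callJava->onCallTimeInfo(CHILD_THREAD, mnAudio->clock, mnAudio->duration);
            }
            // Send the audio to the speaker. mnAudio->sampleBuffer holds the waveform
            // after SoundTouch processing; buffersize counts sample frames, so the
            // byte count is buffersize * 2 channels * 2 bytes.
            (*mnAudio->pcmBufferQueue)->Enqueue(mnAudio->pcmBufferQueue, (char *) mnAudio->sampleBuffer, buffersize * 2 * 2);
        }
    }
}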

void MNAudio::initOpenSLES() {
    // Every OpenSL ES object follows the same steps: create it, realize it,
    // then fetch the interfaces needed to operate it.
    SLresult result;
    // Create the engine object (engineObject).
    result = slCreateEngine(&engineObject, 0, 0, 0, 0, 0);
    if (result != SL_RESULT_SUCCESS) {
        return;
    }
    LOGE("-------->initOpenSLES 1 ");
    // Realize (initialize) the engine.
    // SL_BOOLEAN_FALSE requests synchronous realization; with asynchronous
    // realization you have to wait for it to finish before using the object.
    result = (*engineObject)->Realize(engineObject, SL_BOOLEAN_FALSE);
    if (result != SL_RESULT_SUCCESS) {
        return;
    }
    // Think of an object with many capabilities: some of its methods are pulled
    // out into an interface, and callers only talk to that interface. This
    // protects the engine object while still exposing selected functionality.
    // The engine object is never operated on directly, only through its interfaces.
    // SL_IID_ENGINE: ID of the engine interface.
    // &engineEngine: out parameter that receives the engine interface; all later
    // engine operations go through it.
    result = (*engineObject)->GetInterface(engineObject, SL_IID_ENGINE, &engineEngine);
    if (result != SL_RESULT_SUCCESS) {
        return;
    }
    // Create the output mix.
    const SLInterfaceID mids[1] = {SL_IID_ENVIRONMENTALREVERB};
    const SLboolean mreq[1] = {SL_BOOLEAN_FALSE};
    // engineEngine: engine interface.
    // outputMixObject: output mix object, which has its own interfaces.
    // 1: number of interfaces requested in mids/mreq.
    result = (*engineEngine)->CreateOutputMix(engineEngine, &outputMixObject, 1, mids, mreq);
    if (result != SL_RESULT_SUCCESS) {
        return;
    }
    LOGE("-------->initOpenSLES 2");
    // Realize the output mix.
    result = (*outputMixObject)->Realize(outputMixObject, SL_BOOLEAN_FALSE);
    if (result != SL_RESULT_SUCCESS) {
        return;
    }
    // Fetch the environmental reverb interface of the output mix.
    result = (*outputMixObject)->GetInterface(outputMixObject, SL_IID_ENVIRONMENTALREVERB, &outputMixEnvironmentalReverb);
    if (result != SL_RESULT_SUCCESS) {
        return;
    }
    SLDataFormat_PCM pcm = {
            SL_DATAFORMAT_PCM, // PCM data: OpenSL ES only drives the speaker here; FFmpeg has already decoded the audio, so this is PCM, not AAC
            2, // 2 channels (stereo)
            static_cast<SLuint32>(getCurrentSampleRateForOpensles(sample_rate)), // sample rate passed in by the caller, for example 44100 Hz
            SL_PCMSAMPLEFORMAT_FIXED_16, // bits per sample: 16
            SL_PCMSAMPLEFORMAT_FIXED_16, // container size, same as bits per sample
            SL_SPEAKER_FRONT_LEFT | SL_SPEAKER_FRONT_RIGHT, // channel mask: stereo (front left and front right)
            SL_BYTEORDER_LITTLEENDIAN // byte order: little-endian
    };
    LOGE("-------->initOpenSLES 3 ");
    // Data source locator: a buffer queue with 2 buffers.
    SLDataLocator_AndroidSimpleBufferQueue android_queue = {SL_DATALOCATOR_ANDROIDSIMPLEBUFFERQUEUE, 2};
    // Use the output mix as the data sink.
    SLDataLocator_OutputMix outputMix = {SL_DATALOCATOR_OUTPUTMIX, outputMixObject};
    SLDataSink audioSnk = {&outputMix, 0};
    // Connect the player to the output mix.
    LOGE("-------->initOpenSLES 4 ");
    // Audio source: buffer queue locator plus PCM format.
    SLDataSource slDataSource = {&android_queue, &pcm};
    const SLInterfaceID ids[3] = {SL_IID_BUFFERQUEUE, SL_IID_VOLUME, SL_IID_MUTESOLO};
    // Request whatever is needed; the entries match ids[3] one to one.
    const SLboolean req[3] = {SL_BOOLEAN_TRUE, SL_BOOLEAN_TRUE, SL_BOOLEAN_TRUE};
    // Create the player object. The engine can create players or recorders; this is a player.
    // pcmPlayerObject: the player object.
    // slDataSource: audio source and format.
    // audioSnk: the output mix sink.
    // The interface count must be 3 (not 2) so that SL_IID_MUTESOLO is requested too;
    // otherwise the GetInterface call for it below cannot succeed.
    (*engineEngine)->CreateAudioPlayer(engineEngine, &pcmPlayerObject, &slDataSource, &audioSnk, 3, ids, req);
    // Realize the player.
    (*pcmPlayerObject)->Realize(pcmPlayerObject, SL_BOOLEAN_FALSE);
    // Fetch the play interface (play, pause, resume).
    (*pcmPlayerObject)->GetInterface(pcmPlayerObject, SL_IID_PLAY, &pcmPlayerPlay);
    // Fetch the mute/solo interface (per-channel muting).
    (*pcmPlayerObject)->GetInterface(pcmPlayerObject, SL_IID_MUTESOLO, &pcmMutePlay);
    // Fetch the volume interface.
    (*pcmPlayerObject)->GetInterface(pcmPlayerObject, SL_IID_VOLUME, &pcmVolumePlay);
    // Fetch the buffer queue interface.
    (*pcmPlayerObject)->GetInterface(pcmPlayerObject, SL_IID_BUFFERQUEUE, &pcmBufferQueue);
    // Register the callback that tells the speaker how to pull data.
    (*pcmBufferQueue)->RegisterCallback(pcmBufferQueue, pcmBufferCallBack, this);
    // The player itself is never touched directly, only through its interfaces:
    // play, mute/solo, volume and buffer queue.
    // Switch the player to the playing state.
    (*pcmPlayerPlay)->SetPlayState(pcmPlayerPlay, SL_PLAYSTATE_PLAYING);
    LOGE("-------->initOpenSLES 5 ");
    // Kick off playback by invoking the callback once by hand.
    pcmBufferCallBack(pcmBufferQueue, this);
    LOGE("-------->initOpenSLES 6");
}

// Map a sample rate in Hz to the matching OpenSL ES constant
// (OpenSL ES expresses sampling rates in milliHertz).
int MNAudio::getCurrentSampleRateForOpensles(int sample_rate) {
    int rate = 0;
    switch (sample_rate) {
        case 8000: rate = SL_SAMPLINGRATE_8; break;
        case 11025: rate = SL_SAMPLINGRATE_11_025; break;
        case 12000: rate = SL_SAMPLINGRATE_12; break;
        case 16000: rate = SL_SAMPLINGRATE_16; break;
        case 22050: rate = SL_SAMPLINGRATE_22_05; break;
        case 24000: rate = SL_SAMPLINGRATE_24; break;
        case 32000: rate = SL_SAMPLINGRATE_32; break;
        case 44100: rate = SL_SAMPLINGRATE_44_1; break;
        case 48000: rate = SL_SAMPLINGRATE_48; break;
        case 64000: rate = SL_SAMPLINGRATE_64; break;
        case 88200: rate = SL_SAMPLINGRATE_88_2; break;
        case 96000: rate = SL_SAMPLINGRATE_96; break;
        case 192000: rate = SL_SAMPLINGRATE_192; break;
        default: rate = SL_SAMPLINGRATE_44_1;
    }
    return rate;
}

// Decode one frame of data.

int MNAudio::resampleAudio(void **pcmbuf) {
    // The player has three states; keep decoding as long as it is not exiting.
    while (playstatus != NULL && !playstatus->exit) {
        avPacket = av_packet_alloc();
        if (queue->getAvpacket(avPacket) != 0) {
            // Nothing could be taken from the queue: release the packet.
            // av_packet_free frees it and resets avPacket to NULL.
            av_packet_free(&avPacket);
            continue;
        }
        // Send the packet to the decoder.
        ret = avcodec_send_packet(avCodecContext, avPacket);
        if (ret != 0) {
            // Release the packet when decoding fails as well.
            av_packet_free(&avPacket);
            continue;
        }
        avFrame = av_frame_alloc();
        // Receive the decoded frame.
        ret = avcodec_receive_frame(avCodecContext, avFrame);
        if (ret == 0) {
            SwrContext *swr_ctx;
            // Set the output parameters (stereo, signed 16-bit) and the input parameters.
            swr_ctx = swr_alloc_set_opts(
                    NULL,
                    AV_CH_LAYOUT_STEREO,
                    AV_SAMPLE_FMT_S16,
                    avFrame->sample_rate,
                    avFrame->channel_layout,
                    (AVSampleFormat) avFrame->format,
                    avFrame->sample_rate,
                    0, NULL);
            if (!swr_ctx || swr_init(swr_ctx) < 0) {
                // The packet and the frame were allocated here, so both must be released.
                av_packet_free(&avPacket);
                av_frame_free(&avFrame);
                swr_free(&swr_ctx);
                continue;
            }
            // Convert: move the PCM data from avFrame->data into buffer.
            // The return value nb is the number of samples converted per channel.
            nb = swr_convert(
                    swr_ctx,
                    &buffer,
                    avFrame->nb_samples,
                    (const uint8_t **) avFrame->data,
                    avFrame->nb_samples);
            int out_channels = av_get_channel_layout_nb_channels(AV_CH_LAYOUT_STEREO);
            // Compute the real size after conversion. The data may not fill buffer
            // completely, so the buffer capacity cannot be used for this.
            // out_channels is the number of channels.
            data_size = nb * out_channels * av_get_bytes_per_sample(AV_SAMPLE_FMT_S16);
            *pcmbuf = buffer;
            // pts is the timestamp of this frame. now_time is the decode time, which is
            // not the playback time, because rendering takes time as well.
            now_time = avFrame->pts * av_q2d(time_base);
            // Correct the time: it can drift after long playback because of
            // configuration frames, so never let the clock go backwards.
            if (now_time < clock) {
                now_time = clock;
            }
            clock = now_time;
            av_packet_free(&avPacket);
            av_frame_free(&avFrame);
            swr_free(&swr_ctx);
            break;
        } else {
            av_packet_free(&avPacket);
            av_frame_free(&avFrame);
            continue;
        }
    }
    // data_size matters: the actual size and buffer (the PCM data) are both
    // needed to feed the speaker.
    return data_size;
}

void MNAudio::pause() {
    queue->lock();
    if (pcmPlayerPlay != NULL) {
        (*pcmPlayerPlay)->SetPlayState(pcmPlayerPlay, SL_PLAYSTATE_PAUSED);
    }
}

void MNAudio::resume() {
    queue->unlock();
    if (pcmPlayerPlay != NULL) {
        (*pcmPlayerPlay)->SetPlayState(pcmPlayerPlay, SL_PLAYSTATE_PLAYING);
    }
}

// SetVolumeLevel takes an attenuation in millibels (0 is full volume), so the
// percentage is mapped to a negative value with a steeper slope at low volumes.
void MNAudio::setVolume(int percent) {
    if (pcmVolumePlay != NULL) {
        if (percent > 30) {
            (*pcmVolumePlay)->SetVolumeLevel(pcmVolumePlay, (100 - percent) * -20);
        } else if (percent > 25) {
            (*pcmVolumePlay)->SetVolumeLevel(pcmVolumePlay, (100 - percent) * -22);
        } else if (percent > 20) {
            (*pcmVolumePlay)->SetVolumeLevel(pcmVolumePlay, (100 - percent) * -25);
        } else if (percent > 15) {
            (*pcmVolumePlay)->SetVolumeLevel(pcmVolumePlay, (100 - percent) * -28);
        } else if (percent > 10) {
            (*pcmVolumePlay)->SetVolumeLevel(pcmVolumePlay, (100 - percent) * -30);
        } else if (percent > 5) {
            (*pcmVolumePlay)->SetVolumeLevel(pcmVolumePlay, (100 - percent) * -34);
        } else if (percent > 3) {
            (*pcmVolumePlay)->SetVolumeLevel(pcmVolumePlay, (100 - percent) * -37);
        } else if (percent > 0) {
            (*pcmVolumePlay)->SetVolumeLevel(pcmVolumePlay, (100 - percent) * -40);
        } else {
            (*pcmVolumePlay)->SetVolumeLevel(pcmVolumePlay, (100 - percent) * -100);
        }
    }
}

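void MNAudio::setMute(int mute) {
    LOGE(" mute/solo interface %p", pcmMutePlay);
    LOGE(" mute mode %d", mute);
    if (pcmMutePlay == NULL) {
        return;
    }
    this->mute = mute;
    // SetChannelMute(channel, true) mutes that channel; false leaves it audible.
    if (mute == 0) { // right: mute channel 0, play channel 1 only
        (*pcmMutePlay)->SetChannelMute(pcmMutePlay, 1, false);
        (*pcmMutePlay)->SetChannelMute(pcmMutePlay, 0, true);
    } else if (mute == 1) { // left: mute channel 1, play channel 0 only
        (*pcmMutePlay)->SetChannelMute(pcmMutePlay, 1, true);
        (*pcmMutePlay)->SetChannelMute(pcmMutePlay, 0, false);
    } else if (mute == 2) { // center: both channels audible
        (*pcmMutePlay)->SetChannelMute(pcmMutePlay, 1, false);
        (*pcmMutePlay)->SetChannelMute(pcmMutePlay, 0, false);
    }
}

// Fetch the waveform after SoundTouch processing and return its length in sample
// frames. When the tempo is not 1.0, the length differs from the decoded input.
int MNAudio::getSoundTouchData() {
    // First fetch PCM data; it ends up in out_buffer.
    while (playstatus != NULL && !playstatus->exit) {
        LOGE("------------------loop---------------------------finished %d", finished);
        //out_buffer = NULL;
        // Processing the waveform takes time; `finished` is the flag that says
        // the previous batch has been fully drained.
        if (finished) {
            // A new batch starts, so it is not finished yet.
            finished = false;
            // out_buffer is an in/out parameter that receives the decoded PCM.
            data_size = this->resampleAudio(reinterpret_cast<void **>(&out_buffer));
            // A size above 0 means there is audio data.
            if (data_size > 0) {
                // Walk over every 16-bit sample.
                for (int i = 0; i < data_size / 2 + 1; i++) {
                    // Combine the low byte and the high byte with a bitwise OR;
                    // sampleBuffer then holds complete 16-bit samples.
                    sampleBuffer[i] = (out_buffer[i * 2] | ((out_buffer[i * 2 + 1]) << 8));
                }
                // After the loop, hand the waveform to SoundTouch.
                // sampleBuffer: the input waveform.
                // nb: the actual number of samples per channel.
                soundTouch->putSamples(sampleBuffer, nb);
                // Receive the processed waveform back into sampleBuffer. The second
                // argument is the maximum number of samples to receive; the return
                // value is the length of the processed waveform, and 0 means
                // SoundTouch can still take more input.
                num = soundTouch->receiveSamples(sampleBuffer, data_size / 4);
                LOGE("------------first num %d ", num);
            } else {
                soundTouch->flush();
            }
        }
        if (num == 0) {
            // Nothing came out yet: feed more data in.
            finished = true;
            continue;
        } else {
            // Return the actual length num of the processed waveform.
            if (out_buffer == NULL) {
                num = soundTouch->receiveSamples(sampleBuffer, data_size / 4);
                LOGE("------------second num %d ", num);
                if (num == 0) {
                    finished = true;
                    continue;
                }
            }
            LOGE("---------------- end 1 -----------------------");
            return num;
        }
    }
    LOGE("---------------- end 2 -----------------------");
    return 0;
}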
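The loop in `getSoundTouchData` above rebuilds 16-bit samples from the byte buffer that the resampler filled. As a standalone illustration of that step, here is a minimal sketch that assumes little-endian, signed 16-bit PCM; the helper name `bytesToSamples` is hypothetical and not part of the course code.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Combine pairs of little-endian bytes into signed 16-bit samples.
// (Hypothetical helper for illustration only.)
std::vector<std::int16_t> bytesToSamples(const std::uint8_t *bytes, std::size_t byteCount) {
    assert(byteCount % 2 == 0);  // every sample needs exactly two bytes
    std::vector<std::int16_t> samples(byteCount / 2);
    for (std::size_t i = 0; i < samples.size(); ++i) {
        const std::uint16_t low = bytes[i * 2];       // low byte comes first
        const std::uint16_t high = bytes[i * 2 + 1];  // high byte follows
        // OR the low byte with the high byte shifted into the upper 8 bits.
        const std::uint16_t combined = static_cast<std::uint16_t>(low | (high << 8));
        samples[i] = static_cast<std::int16_t>(combined);
    }
    return samples;
}
```

This sketch stops at exactly `byteCount / 2` samples, so it never reads past the bytes it was given.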

// Set the playback speed.
void MNAudio::setSpeed(float speed) {
    this->speed = speed;
    if (soundTouch != NULL) {
        soundTouch->setTempo(speed);
    }
}

// Set a higher or lower pitch.
void MNAudio::setHigth(float higth) {
    if (soundTouch != NULL) {
        // Change the pitch.
        soundTouch->setPitch(higth);
    }
}
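`decodPlay` above is a free function, so it can only reach the `MNAudio` object through the `void *` argument that `pthread_create` forwards to it. The pattern can be tried outside Android with the small sketch below. `Worker` and `workerEntry` are hypothetical stand-ins for `MNAudio` and `decodPlay`, and returning from the entry function is used here in place of the explicit `pthread_exit` call.

```cpp
#include <cassert>
#include <pthread.h>

// Free function used as the thread entry point; it has no `this`.
void *workerEntry(void *arg);

// Hypothetical stand-in for a class such as MNAudio.
class Worker {
public:
    void start() {
        // Pass `this` as the thread argument so the entry function can reach the object.
        const int rc = pthread_create(&thread_, nullptr, workerEntry, this);
        assert(rc == 0);
        (void) rc;
    }
    void join() { pthread_join(thread_, nullptr); }
    void run() { result_ = 42; }  // the work that needs access to the object
    int result() const { return result_; }

private:
    pthread_t thread_{};
    int result_ = 0;
};

void *workerEntry(void *arg) {
    // Cast the opaque argument back to the object, then call into it.
    Worker *self = static_cast<Worker *>(arg);
    self->run();
    return nullptr;
}
```

Calling `start()` and then `join()` on a `Worker` runs `run()` on the new thread; after the join, `result()` reflects what the thread wrote.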

//
// Created by maniu on 2022/8/5.
//
#include <pthread.h>
#include "MNFFmpeg.h"

void *decodeFFmpeg(void *data) {
    MNFFmpeg *mnFFmpeg = (MNFFmpeg *) data;
    mnFFmpeg->decodeFFmpegThread();
    pthread_exit(&mnFFmpeg->decodeThread);
}

void MNFFmpeg::parpared() {
    // Run the preparation work on its own thread.
    pthread_create(&decodeThread, NULL, decodeFFmpeg, this);
}

void MNFFmpeg::decodeFFmpegThread() {
    // Take the init lock.
    pthread_mutex_lock(&init_mutex);
    av_register_all();
    avformat_network_init();
    // Allocate the format context, the top-level context.
    pFormatCtx = avformat_alloc_context();
    if (avformat_open_input(&pFormatCtx, url, NULL, NULL) != 0) {
        if (LOG_DEBUG) {
            LOGE("can not open url :%s", url);
        }
        // Release the init lock before returning early.
        pthread_mutex_unlock(&init_mutex);
        return;
    }
    LOGE("------------->6");
    if (avformat_find_stream_info(pFormatCtx, NULL) < 0) {
        if (LOG_DEBUG) {
            LOGE("can not find streams from %s", url);
        }
        // Release the init lock before returning early.
        pthread_mutex_unlock(&init_mutex);
        return;
    }
    for (int i = 0; i < pFormatCtx->nb_streams; i++) {
        if (pFormatCtx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_AUDIO) { // audio stream
            if (audio == NULL) {
                // Instantiate the audio object.
                audio = new MNAudio(playstatus, pFormatCtx->streams[i]->codecpar->sample_rate, callJava);
                audio->streamIndex = i;
                audio->codecpar = pFormatCtx->streams[i]->codecpar;
                audio->time_base = pFormatCtx->streams[i]->time_base;
                // Total duration in seconds; the progress bar needs it.
                audio->duration = pFormatCtx->duration / AV_TIME_BASE;
                duration = audio->duration;
            }
        } else if (pFormatCtx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_VIDEO) {
            // Video stream.
            if (video == NULL) {
                video = new MNVideo(playstatus, callJava);
                video->streamIndex = i;
                video->codecpar = pFormatCtx->streams[i]->codecpar;
                // Time base (the unit of the timestamps).
                video->time_base = pFormatCtx->streams[i]->time_base;
                LOGE("video->time_base num %d den %d ", video->time_base.num, video->time_base.den);
                int num = pFormatCtx->streams[i]->avg_frame_rate.num;
                int den = pFormatCtx->streams[i]->avg_frame_rate.den;
                if (num != 0 && den != 0) {
                    int fps = num / den; // for example 25 / 1
                    video->defaultDelayTime = 1.0 / fps; // seconds
                }
                video->delayTime = video->defaultDelayTime;
            }
        }
    }
    if (audio != NULL) {
        // Instantiate the decoder.
        getCodecContext(audio->codecpar, &audio->avCodecContext);
    }
    if (video != NULL) {
        getCodecContext(video->codecpar, &video->avCodecContext);
    }
    pthread_mutex_unlock(&init_mutex);
    // callJava forwards native-layer callbacks to the Java layer.
    callJava->onCallParpared(CHILD_THREAD);
}

int MNFFmpeg::getCodecContext(AVCodecParameters *codecpar, AVCodecContext **avCodecContext) {
    AVCodec *dec = avcodec_find_decoder(codecpar->codec_id);
    if (!dec) {
        exit = true;
        // Release the lock on failure.
        pthread_mutex_unlock(&init_mutex);
        return -1;
    }
    *avCodecContext = avcodec_alloc_context3(dec);
    if (!*avCodecContext) {
        exit = true;
        pthread_mutex_unlock(&init_mutex);
        return -1;
    }
    if (avcodec_parameters_to_context(*avCodecContext, codecpar) < 0) {
        exit = true;
        pthread_mutex_unlock(&init_mutex);
        return -1;
    }
    if (avcodec_open2(*avCodecContext, dec, 0) != 0) {
        exit = true;
        pthread_mutex_unlock(&init_mutex);
        return -1;
    }
    LOGE("------------->");
    return 0;
}

// Packets are read and queued for decoding here.

void MNFFmpeg::start() {
    if (audio == NULL) {
        if (LOG_DEBUG) {
            LOGE("audio is null");
        }
        // Return whether or not debug logging is on; audio is dereferenced below.
        return;
    }
    audio->play();
    video->play();
    // Synchronization follows the audio, so the video holds a reference to the audio.
    video->audio = audio;
    int count = 0;
    while (playstatus != NULL && !playstatus->exit) {
        if (playstatus->seek) {
            continue;
        }
        if (playstatus->pause) {
            av_usleep(500 * 1000);
            continue;
        }
        // Stop reading while either queue already holds more than 40 packets.
        if (audio->queue->getQueueSize() > 40 || video->queue->getQueueSize() > 40) {
            continue;
        }
        AVPacket *avPacket = av_packet_alloc();
        if (av_read_frame(pFormatCtx, avPacket) == 0) {
            // Put the packet into the matching queue.
            if (avPacket->stream_index == audio->streamIndex) {
                audio->queue->putAvpacket(avPacket);
            } else if (avPacket->stream_index == video->streamIndex) {
                LOGE("queueing video packet %d", count);
                video->queue->putAvpacket(avPacket);
            } else {
                av_packet_free(&avPacket);
            }
        } else {
            // A non-zero result means the end of the file was reached.
            // Do not forget to release the packet.
            av_packet_free(&avPacket);
            // At end of file the queues may still hold cached AVPackets;
            // wait until they have been played.
            while (playstatus != NULL && !playstatus->exit) {
                if (audio->queue->getQueueSize() > 0) {
                    continue;
                }
                if (video->queue->getQueueSize() > 0) {
                    continue;
                }
                playstatus->exit = true;
                break;
            }
        }
        // On exit, clear the AVPackets left in the queue.
        if (playstatus != NULL && playstatus->exit) {
            audio->queue->clearAvpacket();
            playstatus->exit = true;
        }
    }
}

MNFFmpeg::MNFFmpeg(MNPlaystatus *playstatus, MNCallJava *callJava, const char *url) {
    this->callJava = callJava;
    this->url = url;
    this->playstatus = playstatus;
    pthread_mutex_init(&seek_mutex, NULL);
    pthread_mutex_init(&init_mutex, NULL);
}

void MNFFmpeg::pause() {
    playstatus->pause = true;
    playstatus->seek = false;
    playstatus->play = false;
    if (audio != NULL) {
        audio->pause();
    }
    if (video != NULL) {
        video->pause();
    }
}

void MNFFmpeg::seek(jint secds) {
    if (duration <= 0) {
        return;
    }
    LOGE("duration------> %d", duration);
    if (secds >= 0 && secds <= duration) {
        // Take the seek lock.
        pthread_mutex_lock(&seek_mutex);
        if (audio != NULL) {
            playstatus->seek = true;
            // Clear the queues.
            audio->queue->clearAvpacket();
            video->queue->clearAvpacket();
            // Reset the clocks.
            audio->clock = 0;
            audio->last_tiem = 0;
            // Seconds to microseconds. Widen to 64 bits before multiplying,
            // otherwise positions beyond roughly 35 minutes overflow a 32-bit int.
            int64_t rel = (int64_t) secds * AV_TIME_BASE;
            // Seek in the file.
            // INT64_MIN: smallest acceptable timestamp.
            // rel: target timestamp.
            // INT64_MAX: largest acceptable timestamp.
            avformat_seek_file(pFormatCtx, -1, INT64_MIN, rel, INT64_MAX, 0);
            playstatus->seek = false;
        }
        pthread_mutex_unlock(&seek_mutex);
    }
}

void MNFFmpeg::resume() {
    playstatus->pause = false;
    playstatus->play = true;
    playstatus->seek = false;
    if (audio != NULL) {
        audio->resume();
    }
    if (video != NULL) {
        video->resume();
    }
}

void MNFFmpeg::setMute(jint mute) {
    if (audio != NULL) {
        audio->setMute(mute);
    }
}

void MNFFmpeg::setSpeed(float speed) {
    if (audio != NULL) {
        audio->setSpeed(speed);
    }
}

void MNFFmpeg::setHigth(float speed) {
    if (audio != NULL) {
        audio->setHigth(speed);
    }
}
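The code above makes the video object hold a reference to the audio object because the audio clock is the master for synchronization. The video side is not listed in this article, so the following is only a sketch of one common way such a decision could look: compare the audio clock with the video frame's timestamp and stretch or shrink the per-frame delay. The function name `videoDelaySeconds`, the 3 ms tolerance, and the cap at twice the nominal delay are all assumptions made for illustration, not values from the course code.

```cpp
#include <algorithm>
#include <cassert>

// Decide how long the video thread waits before showing the next frame.
// audioClock and videoPts are in seconds; frameDelay is the nominal 1/fps delay.
// (Hypothetical helper; the threshold and cap below are illustrative assumptions.)
double videoDelaySeconds(double audioClock, double videoPts, double frameDelay) {
    assert(frameDelay >= 0.0);
    const double syncThreshold = 0.003;         // assumed tolerance, in seconds
    const double diff = audioClock - videoPts;  // > 0 means the video is behind the audio
    if (diff > syncThreshold) {
        // Video is late: shorten the wait, but never below zero.
        return std::max(0.0, frameDelay - diff);
    }
    if (diff < -syncThreshold) {
        // Video is early: lengthen the wait, capped at twice the nominal delay.
        return std::min(frameDelay - diff, frameDelay * 2.0);
    }
    // Close enough: keep the nominal delay.
    return frameDelay;
}
```

With a nominal delay of 0.5 s, an audio clock of 1.25 s and a video timestamp of 1.0 s, the sketch returns 0.25 s, so the late video catches up by waiting less.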

//
// Created by maniu on 2022/8/5.
//
#include "MNCallJava.h"

MNCallJava::MNCallJava(_JavaVM *javaVM, JNIEnv *env, jobject obj) {
    this->javaVM = javaVM;
    this->jniEnv = env;
    this->jobj = env->NewGlobalRef(obj);
    jclass jlz = jniEnv->GetObjectClass(jobj);
    jmid_parpared = env->GetMethodID(jlz, "onCallParpared", "()V");
    jmid_timeinfo = env->GetMethodID(jlz, "onCallTimeInfo", "(II)V");
    jmid_load = env->GetMethodID(jlz, "onCallLoad", "(Z)V");
    jmid_renderyuv = env->GetMethodID(jlz, "onCallRenderYUV", "(II[B[B[B)V");
}

// Call back into the service class. `type` says whether the call comes from the
// main thread or from a child thread; a child thread must attach to the JVM first.
void MNCallJava::onCallParpared(int type) {
    if (type == MAIN_THREAD) {
        jniEnv->CallVoidMethod(jobj, jmid_parpared);
    } else if (type == CHILD_THREAD) {
        JNIEnv *jniEnv;
        if (javaVM->AttachCurrentThread(&jniEnv, 0) != JNI_OK) {
            if (LOG_DEBUG) {
                LOGE("get child thread jnienv wrong");
            }
            return;
        }
        jniEnv->CallVoidMethod(jobj, jmid_parpared);
        javaVM->DetachCurrentThread();
    }
}

// Report the current time and total duration to Java.
void MNCallJava::onCallTimeInfo(int type, int curr, int total) {
    if (type == MAIN_THREAD) {
        jniEnv->CallVoidMethod(jobj, jmid_timeinfo, curr, total);
    } else if (type == CHILD_THREAD) {
        JNIEnv *jniEnv;
        if (javaVM->AttachCurrentThread(&jniEnv, 0) != JNI_OK) {
            if (LOG_DEBUG) {
                LOGE("call onCallTimeInfo wrong");
            }
            return;
        }
        jniEnv->CallVoidMethod(jobj, jmid_timeinfo, curr, total);
        javaVM->DetachCurrentThread();
    }
}

// Report the loading state.
void MNCallJava::onCallLoad(int type, bool load) {
    if (type == MAIN_THREAD) {
        jniEnv->CallVoidMethod(jobj, jmid_load, load);
    } else if (type == CHILD_THREAD) {
        JNIEnv *jniEnv;
        if (javaVM->AttachCurrentThread(&jniEnv, 0) != JNI_OK) {
            if (LOG_DEBUG) {
                LOGE("call onCallLoad wrong");
            }
            return;
        }
        jniEnv->CallVoidMethod(jobj, jmid_load, load);
        javaVM->DetachCurrentThread();
    }
}

/**
 * @param width
 * @param height
 * @param fy Y plane data
 * @param fu U plane data
 * @param fv V plane data
 */
void MNCallJava::onCallRenderYUV(int width, int height, uint8_t *fy, uint8_t *fu, uint8_t *fv) {
    JNIEnv *jniEnv;
    if (javaVM->AttachCurrentThread(&jniEnv, 0) != JNI_OK) {
        if (LOG_DEBUG) {
            LOGE("call onCallRenderYUV wrong");
        }
        return;
    }
    // The Y plane is width x height bytes; U and V are each one quarter of Y.
    jbyteArray y = jniEnv->NewByteArray(width * height);
    // Copy the Y data into the jbyteArray.
    jniEnv->SetByteArrayRegion(y, 0, width * height, reinterpret_cast<const jbyte *>(fy));
    jbyteArray u = jniEnv->NewByteArray(width * height / 4);
    jniEnv->SetByteArrayRegion(u, 0, width * height / 4, reinterpret_cast<const jbyte *>(fu));
    jbyteArray v = jniEnv->NewByteArray(width * height / 4);
    jniEnv->SetByteArrayRegion(v, 0, width * height / 4, reinterpret_cast<const jbyte *>(fv));
    jniEnv->CallVoidMethod(jobj, jmid_renderyuv, width, height, y, u, v);
    jniEnv->DeleteLocalRef(y);
    jniEnv->DeleteLocalRef(u);
    jniEnv->DeleteLocalRef(v);
    javaVM->DetachCurrentThread();
}
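`onCallRenderYUV` above sizes the three Java byte arrays from the frame dimensions: the Y plane is `width * height` bytes and the U and V planes are a quarter of that each. The sketch below spells out that arithmetic for a YUV 4:2:0 planar frame. The struct and function names are hypothetical, and even dimensions are assumed so the quarter-size planes divide cleanly.

```cpp
#include <cassert>
#include <cstddef>

// Byte sizes of the three planes of a YUV 4:2:0 planar frame.
// (Hypothetical helper type for illustration only.)
struct Yuv420PlaneSizes {
    std::size_t y;
    std::size_t u;
    std::size_t v;
    std::size_t total() const { return y + u + v; }
};

Yuv420PlaneSizes yuv420PlaneSizes(int width, int height) {
    // Assumes even dimensions, so chroma planes are exactly a quarter of luma.
    assert(width > 0 && height > 0 && width % 2 == 0 && height % 2 == 0);
    const std::size_t luma = static_cast<std::size_t>(width) * static_cast<std::size_t>(height);
    // U and V are subsampled by 2 horizontally and vertically.
    return Yuv420PlaneSizes{luma, luma / 4, luma / 4};
}
```

For a 1920 x 1080 frame this gives 2073600 bytes for Y and 518400 bytes each for U and V, 3110400 bytes in total, which is 1.5 bytes per pixel.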

package com.maniu.wangyimusicplayer.service;

import android.text.TextUtils;
import android.util.Log;

import com.maniu.wangyimusicplayer.lisnter.IPlayerListener;
import com.maniu.wangyimusicplayer.lisnter.MNOnParparedListener;
import com.maniu.wangyimusicplayer.opengl.MNGLSurfaceView;

public class MNPlayer {
    MNOnParparedListener mnOnParparedListener;
    private MNGLSurfaceView davidView;

    static {
        // Load the native library.
        System.loadLibrary("native-lib");
    }

    private String source; // data source

    public void setSource(String source) {
        this.source = source;
    }

    public void setMNGLSurfaceView(MNGLSurfaceView davidView) {
        this.davidView = davidView;
    }

    public void parpared() {
        if (TextUtils.isEmpty(source)) {
            Log.d("david", "source must not be empty");
            return;
        }
        // Preparation is done in the native layer. Opening the network, reading
        // the file and similar work belongs on a child thread.
        new Thread(new Runnable() {
            @Override
            public void run() {
                n_parpared(source);
            }
        }).start();
    }

    public void start() {
        if (TextUtils.isEmpty(source)) {
            Log.d("david", "source is empty");
            return;
        }
        new Thread(new Runnable() {
            @Override
            public void run() {
                // Playback is a long-running operation as well.
                n_start();
            }
        }).start();
    }

    public void pause() {
        n_pause();
    }

    public native void n_start();

    private native void n_pause();

    public native void n_parpared(String source);

    public void onCallRenderYUV(int width, int height, byte[] y, byte[] u, byte[] v) {
        // OpenGL rendering, driven passively by the native callback.
        if (this.davidView != null) {
            this.davidView.setYUVData(width, height, y, u, v);
        }
    }

    public void onCallTimeInfo(int currentTime, int totalTime) {
        duration = totalTime;
        if (playerListener == null) {
            return;
        }
        playerListener.onCurrentTime(currentTime, totalTime);
    }

    // Report through a listener interface rather than hard-coding the receiver.
    public void onCallParpared() {
        Log.d("david--->", "onCallParpared");
        if (mnOnParparedListener != null) {
            mnOnParparedListener.onParpared();
        }
    }

    public void setMnOnParparedListener(MNOnParparedListener mnOnParparedListener) {
        this.mnOnParparedListener = mnOnParparedListener;
    }

    private IPlayerListener playerListener;

    public void setPlayerListener(IPlayerListener playerListener) {
        this.playerListener = playerListener;
    }

    public void onCallLoad(boolean load) {
        // Show a loading indicator when the queue or the network has a problem.
    }

    public void seek(int secds) {
        n_seek(secds);
    }

    private native void n_seek(int secds);

    private native void n_resume();

    private native void n_mute(int mute);

    private native void n_volume(int percent);

    private native void n_speed(float speed);

    private native void n_higth(float speed);

    public void setMute(int mute) {
        n_mute(mute);
    }

    public void resume() {
        n_resume();
    }

    public int getDuration() {
        return duration;
    }

    private int duration = 0;

    public void setSpeed(float speed) {
        n_speed(speed);
    }

    public void setHigth(float v) {
        n_higth(v);
    }
}