This article covers section 4.7: building an ONNX model that contains a single Transpose node.

Preface

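There are generally two ways to build an ONNX model:

1. Convert it from framework code, for example PyTorch --> ONNX.

2. Define the nodes and the graph directly with the onnx API and generate the ONNX structure from them.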

This article is a short study of how these two ways of producing an ONNX structure are used,

with the Transpose node as the worked example.
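To make the node's behavior concrete, here is a minimal NumPy sketch of what Transpose computes for perm = [0, 2, 1]. The ONNX operator documentation describes Transpose as behaving like numpy.transpose, so NumPy is used as the reference here. The shape (16, 397, 80) is the one this article uses for its model; the helper name `transposed_shape` is hypothetical and exists only for illustration.

```python
import numpy as np


def transposed_shape(shape, perm):
    """Hypothetical helper: output shape of Transpose for a given perm."""
    if sorted(perm) != list(range(len(shape))):
        raise ValueError(f"perm {perm} is not a permutation of the axes of {shape}")
    # Output axis i takes its size from input axis perm[i].
    return tuple(shape[p] for p in perm)


perm = [0, 2, 1]
x = np.arange(16 * 397 * 80, dtype=np.float32).reshape(16, 397, 80)
y = np.transpose(x, perm)

print(y.shape)
print(transposed_shape(x.shape, perm))
# Axis 0 is kept; axes 1 and 2 are swapped, so y[i, j, k] == x[i, k, j].
print(y[3, 5, 7] == x[3, 7, 5])
```

Deriving the output shape from the input shape and perm, rather than typing it by hand, keeps the declared graph output consistent with what the node actually produces.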

Approaches

Method 1: PyTorch --> ONNX

Deferred for now; this article focuses on method 2.

Method 2: onnx

import onnx

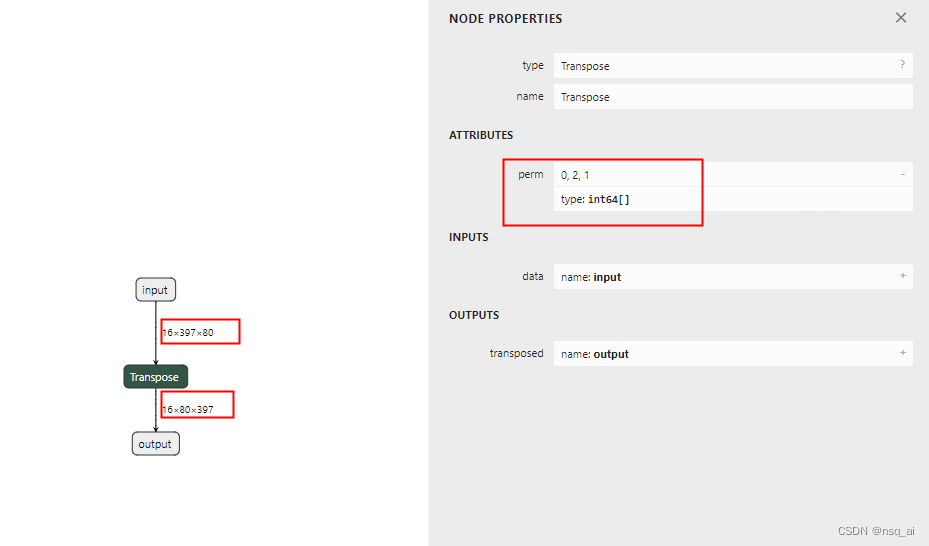

from onnx import TensorProto, helper, numpy_helperdef run():print("run start....\n")perm = [0,2,1]Transpose = helper.make_node("Transpose",perm=perm,inputs=["input"],outputs=["output"],name="Transpose",)graph = helper.make_graph(nodes=[Transpose],name="test_graph",inputs=[helper.make_tensor_value_info("input", TensorProto.FLOAT, (16,397,80))], # use your inputoutputs=[helper.make_tensor_value_info("output", TensorProto.FLOAT, (16,80,397))], # use your outputinitializer=[helper.make_tensor("perm", TensorProto.INT64, [len(perm)], perm),],)op = onnx.OperatorSetIdProto()op.version = 11model = helper.make_model(graph, opset_imports=[op])print("run done....\n")return modelif __name__ == "__main__":model = run()onnx.save(model, "./test_transpose.onnx")运行onnx

    import numpy as np
    import onnx
    import onnxruntime


    # Check the ONNX computation graph
    def check_onnx(model):
        onnx.checker.check_model(model)
        # print(onnx.helper.printable_graph(model.graph))


    def run(model_path):
        print("run start....\n")

        session = onnxruntime.InferenceSession(model_path, providers=["CPUExecutionProvider"])

        input_name1 = session.get_inputs()[0].name
        input_data1 = np.random.randn(16, 397, 80).astype(np.float32)
        print(f"input_data1 shape:{input_data1.shape}\n")

        output_name1 = session.get_outputs()[0].name
        pred_onx = session.run([output_name1], {input_name1: input_data1})[0]
        print(f"pred_onx shape:{pred_onx.shape} \n")

        print("run end....\n")


    if __name__ == "__main__":
        path = "./test_transpose.onnx"
        model = onnx.load(path)
        check_onnx(model)
        run(path)
