This article walks through retraining MobileNet V1 with TensorFlow for image classification. It is meant as a practical reference for developers working on similar problems.

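1. Data preparation

(1) Create a TrainData folder.

(2) Inside that folder, create one subfolder per class you want to train, named after the class.

(3) Put the images of each class into the corresponding subfolder.

(4) In the current directory, create two files: labels.txt and label_map.txt.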

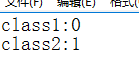

Contents of label_map.txt:

Contents of labels.txt:
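The exact format of the two files is not spelled out in the text above. As a rough sketch, assuming labels.txt holds one class name per line, the class folder names can be turned into labels with the same normalization the training script applies to folder names (lowercase, with every run of non-alphanumeric characters replaced by a space). The helper names `normalize_label` and `write_labels` and the example class folders are hypothetical, not part of the original script.

```python
import os
import re
import tempfile


def normalize_label(dir_name):
    """Mirror the script's rule: lowercase, non-alphanumeric runs become a space."""
    return re.sub(r'[^a-z0-9]+', ' ', dir_name.lower())


def write_labels(train_dir, labels_path):
    """Write one normalized label per line (assumed format), sorted by folder name."""
    class_dirs = sorted(
        d for d in os.listdir(train_dir)
        if os.path.isdir(os.path.join(train_dir, d)))
    labels = [normalize_label(d) for d in class_dirs]
    with open(labels_path, 'w') as f:
        f.write('\n'.join(labels) + '\n')
    return labels


# Demo in a temporary directory with two hypothetical class folders.
root = tempfile.mkdtemp()
train_dir = os.path.join(root, 'TrainData')
for name in ('Bank_Card', 'id-card'):
    os.makedirs(os.path.join(train_dir, name))

labels = write_labels(train_dir, os.path.join(root, 'labels.txt'))
print(labels)
```

If your own labels.txt or label_map.txt uses a different layout (for example an index next to each name), adapt `write_labels` accordingly. The point of the sketch is only that the label strings should match what the script derives from the folder names.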

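2. Pretrained model preparation

Download the ImageNet-pretrained model that matches your chosen architecture and place it in the ./tmp/imagenet/ folder under the current directory.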
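To know which archive to download and where the script will look for the graph file, the naming logic from `create_model_info` in the training code below can be reproduced on its own. This is a minimal sketch for the non-quantized MobileNet V1 case only. The function name `mobilenet_v1_paths` is hypothetical, it assumes the script is pointed at `./tmp/imagenet` as its model directory, and whether the download URL is still being served is not verified here.

```python
import os

# Width multipliers and input sizes accepted by the training script.
VALID_WIDTHS = ('1.0', '0.75', '0.50', '0.25')
VALID_SIZES = ('224', '192', '160', '128')


def mobilenet_v1_paths(architecture, model_dir):
    """Return (download_url, expected_graph_path) for e.g. 'mobilenet_1.0_224'."""
    parts = architecture.lower().split('_')
    if len(parts) != 3 or parts[0] != 'mobilenet':
        raise ValueError('Unsupported architecture name: %s' % architecture)
    _, width, size = parts
    if width not in VALID_WIDTHS or size not in VALID_SIZES:
        raise ValueError('Unsupported width or size in: %s' % architecture)
    dir_name = 'mobilenet_v1_' + width + '_' + size
    url = 'http://download.tensorflow.org/models/' + dir_name + '_frozen.tgz'
    graph_path = os.path.join(model_dir, dir_name, 'frozen_graph.pb')
    return url, graph_path


url, graph_path = mobilenet_v1_paths('mobilenet_1.0_224', './tmp/imagenet')
print(url)
print(graph_path)
```

After extracting the archive into the model directory, the frozen graph should sit at the printed path. If it does not, the script's own download step will try to fetch it.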

3. Training code

# Copyright 2015 The TensorFlow Authors. All Rights Reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# ==============================================================================
r"""Simple transfer learning with Inception v3 or Mobilenet models.

With support for TensorBoard.

This example shows how to take a Inception v3 or Mobilenet model trained on
ImageNet images, and train a new top layer that can recognize other classes of
images.

The top layer receives as input a 2048-dimensional vector (1001-dimensional for
Mobilenet) for each image. We train a softmax layer on top of this
representation. Assuming the softmax layer contains N labels, this corresponds
to learning N + 2048*N (or 1001*N) model parameters corresponding to the
learned biases and weights.

Here's an example, which assumes you have a folder containing class-named
subfolders, each full of images for each label. The example folder flower_photos
should have a structure like this:

~/flower_photos/daisy/photo1.jpg
~/flower_photos/daisy/photo2.jpg
...
~/flower_photos/rose/anotherphoto77.jpg
...
~/flower_photos/sunflower/somepicture.jpg

The subfolder names are important, since they define what label is applied to
each image, but the filenames themselves don't matter. Once your images are
prepared, you can run the training with a command like this:

```bash
bazel build tensorflow/examples/image_retraining:retrain && \
bazel-bin/tensorflow/examples/image_retraining/retrain \
    --image_dir ~/flower_photos
```

Or, if you have a pip installation of tensorflow, `retrain.py` can be run
without bazel:

```bash
python tensorflow/examples/image_retraining/retrain.py \
    --image_dir ~/flower_photos
```

You can replace the image_dir argument with any folder containing subfolders of
images. The label for each image is taken from the name of the subfolder it's
in.

This produces a new model file that can be loaded and run by any TensorFlow
program, for example the label_image sample code.

By default this script will use the high accuracy, but comparatively large and
slow Inception v3 model architecture. It's recommended that you start with this
to validate that you have gathered good training data, but if you want to deploy
on resource-limited platforms, you can try the `--architecture` flag with a
Mobilenet model. For example:

```bash
python tensorflow/examples/image_retraining/retrain.py \
    --image_dir ~/flower_photos --architecture mobilenet_1.0_224
```

There are 32 different Mobilenet models to choose from, with a variety of file
size and latency options. The first number can be '1.0', '0.75', '0.50', or
'0.25' to control the size, and the second controls the input image size, either
'224', '192', '160', or '128', with smaller sizes running faster. See
https://research.googleblog.com/2017/06/mobilenets-open-source-models-for.html
for more information on Mobilenet.

To use with TensorBoard:

By default, this script will log summaries to /tmp/retrain_logs directory

Visualize the summaries with this command:

tensorboard --logdir /tmp/retrain_logs

"""

from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import argparse
from datetime import datetime
import hashlib
import os.path
import random
import re
import sys
import tarfile

import numpy as np
from six.moves import urllib
import tensorflow as tf

from tensorflow.python.framework import graph_util
from tensorflow.python.framework import tensor_shape
from tensorflow.python.platform import gfile
from tensorflow.python.util import compat

FLAGS = None

os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
# GPU indices on the author's machine: 4 is a 2080; 1, 2 and 3 are K80s; 0 is a 1080.
os.environ["CUDA_VISIBLE_DEVICES"] = "0"
# config = tf.ConfigProto()
# config.gpu_options.per_process_gpu_memory_fraction = 0.95  # use 95% of GPU memory
# session = tf.Session(config=config)
gpu_options = tf.GPUOptions(allow_growth=True)
sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options))

# These are all parameters that are tied to the particular model architecture
# we're using for Inception v3. These include things like tensor names and their
# sizes. If you want to adapt this script to work with another model, you will
# need to update these to reflect the values in the network you're using.

MAX_NUM_IMAGES_PER_CLASS = 2 ** 27 - 1  # ~134M


def create_label_txt(indexlist):
  pass


def create_image_lists(image_dir, testing_percentage, validation_percentage):
  """Builds a list of training images from the file system.

  Analyzes the sub folders in the image directory, splits them into stable
  training, testing, and validation sets, and returns a data structure
  describing the lists of images for each label and their paths.

  Args:
    image_dir: String path to a folder containing subfolders of images.
    testing_percentage: Integer percentage of the images to reserve for tests.
    validation_percentage: Integer percentage of images reserved for validation.

  Returns:
    A dictionary containing an entry for each label subfolder, with images split
    into training, testing, and validation sets within each label.
  """
  print('Image path is:', image_dir)
  if not gfile.Exists(image_dir):
    tf.logging.error("Image directory '" + image_dir + "' not found.")
    return None
  result = {}
  sub_dirs = [x[0] for x in gfile.Walk(image_dir)]
  # The root directory comes first, so skip it.
  is_root_dir = True
  for sub_dir in sub_dirs:
    if is_root_dir:
      is_root_dir = False
      continue
    extensions = ['jpg', 'jpeg', 'JPG', 'JPEG']
    file_list = []
    dir_name = os.path.basename(sub_dir)
    if dir_name == image_dir:
      continue
    print("The sub dir is:", sub_dir)
    print("The dir name is:", dir_name)
    tf.logging.info("Looking for images in '" + dir_name + "'")
    for extension in extensions:
      file_glob = os.path.join(image_dir, dir_name, '*.' + extension)
      print("The file_glob is:", file_glob)
      # tf.gfile.Glob('/media/chou/TF/TFcode/retrian/OriginalData/bankcard/*.jpg')
      file_list.extend(gfile.Glob(file_glob))
    if not file_list:
      tf.logging.warning('No files found')
      print('File list is empty')
      continue
    if len(file_list) < 20:
      tf.logging.warning(
          'WARNING: Folder has less than 20 images, which may cause issues.')
    elif len(file_list) > MAX_NUM_IMAGES_PER_CLASS:
      tf.logging.warning(
          'WARNING: Folder {} has more than {} images. Some images will '
          'never be selected.'.format(dir_name, MAX_NUM_IMAGES_PER_CLASS))
    label_name = re.sub(r'[^a-z0-9]+', ' ', dir_name.lower())
    print("label_name:", label_name)
    training_images = []
    testing_images = []
    validation_images = []
    for file_name in file_list:
      base_name = os.path.basename(file_name)
      # We want to ignore anything after '_nohash_' in the file name when
      # deciding which set to put an image in, the data set creator has a way of
      # grouping photos that are close variations of each other. For example
      # this is used in the plant disease data set to group multiple pictures of
      # the same leaf.
      hash_name = re.sub(r'_nohash_.*$', '', file_name)
      # This looks a bit magical, but we need to decide whether this file should
      # go into the training, testing, or validation sets, and we want to keep
      # existing files in the same set even if more files are subsequently
      # added.
      # To do that, we need a stable way of deciding based on just the file name
      # itself, so we do a hash of that and then use that to generate a
      # probability value that we use to assign it.
      hash_name_hashed = hashlib.sha1(compat.as_bytes(hash_name)).hexdigest()
      percentage_hash = ((int(hash_name_hashed, 16) %
                          (MAX_NUM_IMAGES_PER_CLASS + 1)) *
                         (100.0 / MAX_NUM_IMAGES_PER_CLASS))
      if percentage_hash < validation_percentage:
        validation_images.append(base_name)
      elif percentage_hash < (testing_percentage + validation_percentage):
        testing_images.append(base_name)
      else:
        training_images.append(base_name)
    result[label_name] = {
        'dir': dir_name,
        'training': training_images,
        'testing': testing_images,
        'validation': validation_images,
    }
  return result


def get_image_path(image_lists, label_name, index, image_dir, category):
  """Returns a path to an image for a label at the given index.

  Args:
    image_lists: Dictionary of training images for each label.
    label_name: Label string we want to get an image for.
    index: Int offset of the image we want.
      This will be moduloed by the available number of images for the label,
      so it can be arbitrarily large.
    image_dir: Root folder string of the subfolders containing the training
      images.
    category: Name string of set to pull images from - training, testing, or
      validation.

  Returns:
    File system path string to an image that meets the requested parameters.
  """
  if label_name not in image_lists:
    tf.logging.fatal('Label does not exist %s.', label_name)
  label_lists = image_lists[label_name]
  if category not in label_lists:
    tf.logging.fatal('Category does not exist %s.', category)
  category_list = label_lists[category]
  if not category_list:
    tf.logging.fatal('Label %s has no images in the category %s.',
                     label_name, category)
  mod_index = index % len(category_list)
  base_name = category_list[mod_index]
  sub_dir = label_lists['dir']
  full_path = os.path.join(image_dir, sub_dir, base_name)
  return full_path


def get_bottleneck_path(image_lists, label_name, index, bottleneck_dir,
                        category, architecture):
  """Returns a path to a bottleneck file for a label at the given index.

  Args:
    image_lists: Dictionary of training images for each label.
    label_name: Label string we want to get an image for.
    index: Integer offset of the image we want.
      This will be moduloed by the available number of images for the label,
      so it can be arbitrarily large.
    bottleneck_dir: Folder string holding cached files of bottleneck values.
    category: Name string of set to pull images from - training, testing, or
      validation.
    architecture: The name of the model architecture.

  Returns:
    File system path string to an image that meets the requested parameters.
  """
  return get_image_path(image_lists, label_name, index, bottleneck_dir,
                        category) + '_' + architecture + '.txt'


def create_model_graph(model_info):
  """Creates a graph from saved GraphDef file and returns a Graph object.

  Args:
    model_info: Dictionary containing information about the model architecture.

  Returns:
    Graph holding the trained Inception network, and various tensors we'll be
    manipulating.
  """
  with tf.Graph().as_default() as graph:
    model_path = os.path.join(FLAGS.model_dir, model_info['model_file_name'])
    with gfile.FastGFile(model_path, 'rb') as f:
      graph_def = tf.GraphDef()
      graph_def.ParseFromString(f.read())
      bottleneck_tensor, resized_input_tensor = (tf.import_graph_def(
          graph_def,
          name='',
          return_elements=[
              model_info['bottleneck_tensor_name'],
              model_info['resized_input_tensor_name'],
          ]))
  return graph, bottleneck_tensor, resized_input_tensor


def run_bottleneck_on_image(sess, image_data, image_data_tensor,
                            decoded_image_tensor, resized_input_tensor,
                            bottleneck_tensor):
  """Runs inference on an image to extract the 'bottleneck' summary layer.

  Args:
    sess: Current active TensorFlow Session.
    image_data: String of raw JPEG data.
    image_data_tensor: Input data layer in the graph.
    decoded_image_tensor: Output of initial image resizing and preprocessing.
    resized_input_tensor: The input node of the recognition graph.
    bottleneck_tensor: Layer before the final softmax.

  Returns:
    Numpy array of bottleneck values.
  """
  # First decode the JPEG image, resize it, and rescale the pixel values.
  resized_input_values = sess.run(decoded_image_tensor,
                                  {image_data_tensor: image_data})
  # Then run it through the recognition network.
  bottleneck_values = sess.run(bottleneck_tensor,
                               {resized_input_tensor: resized_input_values})
  bottleneck_values = np.squeeze(bottleneck_values)
  return bottleneck_values


def maybe_download_and_extract(data_url):
  """Download and extract model tar file.

  If the pretrained model we're using doesn't already exist, this function
  downloads it from the TensorFlow.org website and unpacks it into a directory.

  Args:
    data_url: Web location of the tar file containing the pretrained model.
  """
  dest_directory = FLAGS.model_dir  # Where the model is stored.
  if not os.path.exists(dest_directory):
    os.makedirs(dest_directory)
  filename = data_url.split('/')[-1]
  # Build the local file path from the archive's file name.
  filepath = os.path.join(dest_directory, filename)
  if not os.path.exists(filepath):  # Download only if the archive is missing.

    def _progress(count, block_size, total_size):
      sys.stdout.write('\r>> Downloading %s %.1f%%' %
                       (filename,
                        float(count * block_size) / float(total_size) * 100.0))
      sys.stdout.flush()

    filepath, _ = urllib.request.urlretrieve(data_url, filepath, _progress)
    print()
    statinfo = os.stat(filepath)
    # Use a format string; passing the pieces as separate positional arguments
    # does not match the logging call's message/args convention.
    tf.logging.info('Successfully downloaded %s %d bytes.', filename,
                    statinfo.st_size)
  tarfile.open(filepath, 'r:gz').extractall(dest_directory)


def ensure_dir_exists(dir_name):
  """Makes sure the folder exists on disk.

  Args:
    dir_name: Path string to the folder we want to create.
  """
  if not os.path.exists(dir_name):
    os.makedirs(dir_name)


bottleneck_path_2_bottleneck_values = {}


def create_bottleneck_file(bottleneck_path, image_lists, label_name, index,
                           image_dir, category, sess, jpeg_data_tensor,
                           decoded_image_tensor, resized_input_tensor,
                           bottleneck_tensor):
  """Create a single bottleneck file."""
  tf.logging.info('Creating bottleneck at ' + bottleneck_path)
  image_path = get_image_path(image_lists, label_name, index,
                              image_dir, category)
  if not gfile.Exists(image_path):
    tf.logging.fatal('File does not exist %s', image_path)
  image_data = gfile.FastGFile(image_path, 'rb').read()
  try:
    bottleneck_values = run_bottleneck_on_image(
        sess, image_data, jpeg_data_tensor, decoded_image_tensor,
        resized_input_tensor, bottleneck_tensor)
  except Exception as e:
    raise RuntimeError('Error during processing file %s (%s)' % (image_path,
                                                                 str(e)))
  bottleneck_string = ','.join(str(x) for x in bottleneck_values)
  with open(bottleneck_path, 'w') as bottleneck_file:
    bottleneck_file.write(bottleneck_string)


def get_or_create_bottleneck(sess, image_lists, label_name, index, image_dir,
                             category, bottleneck_dir, jpeg_data_tensor,
                             decoded_image_tensor, resized_input_tensor,
                             bottleneck_tensor, architecture):
  """Retrieves or calculates bottleneck values for an image.

  If a cached version of the bottleneck data exists on-disk, return that,
  otherwise calculate the data and save it to disk for future use.

  Args:
    sess: The current active TensorFlow Session.
    image_lists: Dictionary of training images for each label.
    label_name: Label string we want to get an image for.
    index: Integer offset of the image we want. This will be modulo-ed by the
      available number of images for the label, so it can be arbitrarily large.
    image_dir: Root folder string of the subfolders containing the training
      images.
    category: Name string of which set to pull images from - training, testing,
      or validation.
    bottleneck_dir: Folder string holding cached files of bottleneck values.
    jpeg_data_tensor: The tensor to feed loaded jpeg data into.
    decoded_image_tensor: The output of decoding and resizing the image.
    resized_input_tensor: The input node of the recognition graph.
    bottleneck_tensor: The output tensor for the bottleneck values.
    architecture: The name of the model architecture.

  Returns:
    Numpy array of values produced by the bottleneck layer for the image.
  """
  label_lists = image_lists[label_name]
  sub_dir = label_lists['dir']
  sub_dir_path = os.path.join(bottleneck_dir, sub_dir)
  ensure_dir_exists(sub_dir_path)
  bottleneck_path = get_bottleneck_path(image_lists, label_name, index,
                                        bottleneck_dir, category, architecture)
  if not os.path.exists(bottleneck_path):
    create_bottleneck_file(bottleneck_path, image_lists, label_name, index,
                           image_dir, category, sess, jpeg_data_tensor,
                           decoded_image_tensor, resized_input_tensor,
                           bottleneck_tensor)
  with open(bottleneck_path, 'r') as bottleneck_file:
    bottleneck_string = bottleneck_file.read()
  did_hit_error = False
  try:
    bottleneck_values = [float(x) for x in bottleneck_string.split(',')]
  except ValueError:
    tf.logging.warning('Invalid float found, recreating bottleneck')
    did_hit_error = True
  if did_hit_error:
    create_bottleneck_file(bottleneck_path, image_lists, label_name, index,
                           image_dir, category, sess, jpeg_data_tensor,
                           decoded_image_tensor, resized_input_tensor,
                           bottleneck_tensor)
    with open(bottleneck_path, 'r') as bottleneck_file:
      bottleneck_string = bottleneck_file.read()
    # Allow exceptions to propagate here, since they shouldn't happen after a
    # fresh creation
    bottleneck_values = [float(x) for x in bottleneck_string.split(',')]
  return bottleneck_values


def cache_bottlenecks(sess, image_lists, image_dir, bottleneck_dir,
                      jpeg_data_tensor, decoded_image_tensor,
                      resized_input_tensor, bottleneck_tensor, architecture):
  """Ensures all the training, testing, and validation bottlenecks are cached.

  Because we're likely to read the same image multiple times (if there are no
  distortions applied during training) it can speed things up a lot if we
  calculate the bottleneck layer values once for each image during
  preprocessing, and then just read those cached values repeatedly during
  training.
  Here we go through all the images we've found, calculate those
  values, and save them off.

  Args:
    sess: The current active TensorFlow Session.
    image_lists: Dictionary of training images for each label.
    image_dir: Root folder string of the subfolders containing the training
      images.
    bottleneck_dir: Folder string holding cached files of bottleneck values.
    jpeg_data_tensor: Input tensor for jpeg data from file.
    decoded_image_tensor: The output of decoding and resizing the image.
    resized_input_tensor: The input node of the recognition graph.
    bottleneck_tensor: The penultimate output layer of the graph.
    architecture: The name of the model architecture.

  Returns:
    Nothing.
  """
  how_many_bottlenecks = 0
  ensure_dir_exists(bottleneck_dir)
  for label_name, label_lists in image_lists.items():
    for category in ['training', 'testing', 'validation']:
      category_list = label_lists[category]
      for index, unused_base_name in enumerate(category_list):
        get_or_create_bottleneck(
            sess, image_lists, label_name, index, image_dir, category,
            bottleneck_dir, jpeg_data_tensor, decoded_image_tensor,
            resized_input_tensor, bottleneck_tensor, architecture)

        how_many_bottlenecks += 1
        if how_many_bottlenecks % 100 == 0:
          tf.logging.info(
              str(how_many_bottlenecks) + ' bottleneck files created.')


def get_random_cached_bottlenecks(sess, image_lists, how_many, category,
                                  bottleneck_dir, image_dir, jpeg_data_tensor,
                                  decoded_image_tensor, resized_input_tensor,
                                  bottleneck_tensor, architecture):
  """Retrieves bottleneck values for cached images.

  If no distortions are being applied, this function can retrieve the cached
  bottleneck values directly from disk for images.
  It picks a random set of images from the specified category.

  Args:
    sess: Current TensorFlow Session.
    image_lists: Dictionary of training images for each label.
    how_many: If positive, a random sample of this size will be chosen.
      If negative, all bottlenecks will be retrieved.
    category: Name string of which set to pull from - training, testing, or
      validation.
    bottleneck_dir: Folder string holding cached files of bottleneck values.
    image_dir: Root folder string of the subfolders containing the training
      images.
    jpeg_data_tensor: The layer to feed jpeg image data into.
    decoded_image_tensor: The output of decoding and resizing the image.
    resized_input_tensor: The input node of the recognition graph.
    bottleneck_tensor: The bottleneck output layer of the CNN graph.
    architecture: The name of the model architecture.

  Returns:
    List of bottleneck arrays, their corresponding ground truths, and the
    relevant filenames.
  """
  class_count = len(image_lists.keys())
  bottlenecks = []
  ground_truths = []
  filenames = []
  # create_label_txt()
  if how_many >= 0:
    # Retrieve a random sample of bottlenecks.
    for unused_i in range(how_many):
      label_index = random.randrange(class_count)
      label_name = list(image_lists.keys())[label_index]
      image_index = random.randrange(MAX_NUM_IMAGES_PER_CLASS + 1)
      image_name = get_image_path(image_lists, label_name, image_index,
                                  image_dir, category)
      bottleneck = get_or_create_bottleneck(
          sess, image_lists, label_name, image_index, image_dir, category,
          bottleneck_dir, jpeg_data_tensor, decoded_image_tensor,
          resized_input_tensor, bottleneck_tensor, architecture)
      ground_truth = np.zeros(class_count, dtype=np.float32)
      ground_truth[label_index] = 1.0
      bottlenecks.append(bottleneck)
      ground_truths.append(ground_truth)
      filenames.append(image_name)
  else:
    # Retrieve all bottlenecks.
    for label_index, label_name in enumerate(image_lists.keys()):
      for image_index, image_name in enumerate(
          image_lists[label_name][category]):
        image_name = get_image_path(image_lists, label_name, image_index,
                                    image_dir, category)
        bottleneck = get_or_create_bottleneck(
            sess, image_lists, label_name, image_index, image_dir, category,
            bottleneck_dir, jpeg_data_tensor, decoded_image_tensor,
            resized_input_tensor, bottleneck_tensor, architecture)
        ground_truth = np.zeros(class_count, dtype=np.float32)
        ground_truth[label_index] = 1.0
        bottlenecks.append(bottleneck)
        ground_truths.append(ground_truth)
        filenames.append(image_name)
  return bottlenecks, ground_truths, filenames


def get_random_distorted_bottlenecks(
    sess, image_lists, how_many, category, image_dir, input_jpeg_tensor,
    distorted_image, resized_input_tensor, bottleneck_tensor):
  """Retrieves bottleneck values for training images, after distortions.

  If we're training with distortions like crops, scales, or flips, we have to
  recalculate the full model for every image, and so we can't use cached
  bottleneck values. Instead we find random images for the requested category,
  run them through the distortion graph, and then the full graph to get the
  bottleneck results for each.

  Args:
    sess: Current TensorFlow Session.
    image_lists: Dictionary of training images for each label.
    how_many: The integer number of bottleneck values to return.
    category: Name string of which set of images to fetch - training, testing,
      or validation.
    image_dir: Root folder string of the subfolders containing the training
      images.
    input_jpeg_tensor: The input layer we feed the image data to.
    distorted_image: The output node of the distortion graph.
    resized_input_tensor: The input node of the recognition graph.
    bottleneck_tensor: The bottleneck output layer of the CNN graph.

  Returns:
    List of bottleneck arrays and their corresponding ground truths.
  """
  class_count = len(image_lists.keys())
  bottlenecks = []
  ground_truths = []
  for unused_i in range(how_many):
    label_index = random.randrange(class_count)
    label_name = list(image_lists.keys())[label_index]
    image_index = random.randrange(MAX_NUM_IMAGES_PER_CLASS + 1)
    image_path = get_image_path(image_lists, label_name, image_index, image_dir,
                                category)
    if not gfile.Exists(image_path):
      tf.logging.fatal('File does not exist %s', image_path)
    jpeg_data = gfile.FastGFile(image_path, 'rb').read()
    # Note that we materialize the distorted_image_data as a numpy array before
    # sending running inference on the image. This involves 2 memory copies and
    # might be optimized in other implementations.
    distorted_image_data = sess.run(distorted_image,
                                    {input_jpeg_tensor: jpeg_data})
    bottleneck_values = sess.run(bottleneck_tensor,
                                 {resized_input_tensor: distorted_image_data})
    bottleneck_values = np.squeeze(bottleneck_values)
    ground_truth = np.zeros(class_count, dtype=np.float32)
    ground_truth[label_index] = 1.0
    bottlenecks.append(bottleneck_values)
    ground_truths.append(ground_truth)
  return bottlenecks, ground_truths


def should_distort_images(flip_left_right, random_crop, random_scale,
                          random_brightness):
  """Whether any distortions are enabled, from the input flags.

  Args:
    flip_left_right: Boolean whether to randomly mirror images horizontally.
    random_crop: Integer percentage setting the total margin used around the
      crop box.
    random_scale: Integer percentage of how much to vary the scale by.
    random_brightness: Integer range to randomly multiply the pixel values by.

  Returns:
    Boolean value indicating whether any distortions should be applied.
  """
  return (flip_left_right or (random_crop != 0) or (random_scale != 0) or
          (random_brightness != 0))


def add_input_distortions(flip_left_right, random_crop, random_scale,
                          random_brightness, input_width, input_height,
                          input_depth, input_mean, input_std):
  """Creates the operations to apply the specified distortions.

  During training it can help to improve the results if we run the images
  through simple distortions like crops, scales, and flips. These reflect the
  kind of variations we expect in the real world, and so can help train the
  model to cope with natural data more effectively.
  Here we take the supplied parameters and construct a network of operations
  to apply them to an image.

  Cropping
  ~~~~~~~~

  Cropping is done by placing a bounding box at a random position in the full
  image. The cropping parameter controls the size of that box relative to the
  input image. If it's zero, then the box is the same size as the input and no
  cropping is performed. If the value is 50%, then the crop box will be half the
  width and height of the input. In a diagram it looks like this:

  <       width         >
  +---------------------+
  |                     |
  |   width - crop%     |
  |    <      >         |
  |    +------+         |
  |    |      |         |
  |    |      |         |
  |    |      |         |
  |    +------+         |
  |                     |
  |                     |
  +---------------------+

  Scaling
  ~~~~~~~

  Scaling is a lot like cropping, except that the bounding box is always
  centered and its size varies randomly within the given range. For example if
  the scale percentage is zero, then the bounding box is the same size as the
  input and no scaling is applied. If it's 50%, then the bounding box will be in
  a random range between half the width and height and full size.

  Args:
    flip_left_right: Boolean whether to randomly mirror images horizontally.
    random_crop: Integer percentage setting the total margin used around the
      crop box.
    random_scale: Integer percentage of how much to vary the scale by.
    random_brightness: Integer range to randomly multiply the pixel values by.
    input_width: Horizontal size of expected input image to model.
    input_height: Vertical size of expected input image to model.
    input_depth: How many channels the expected input image should have.
    input_mean: Pixel value that should be zero in the image for the graph.
    input_std: How much to divide the pixel values by before recognition.

  Returns:
    The jpeg input layer and the distorted result tensor.
  """
  jpeg_data = tf.placeholder(tf.string, name='DistortJPGInput')
  decoded_image = tf.image.decode_jpeg(jpeg_data, channels=input_depth)
  decoded_image_as_float = tf.cast(decoded_image, dtype=tf.float32)
  decoded_image_4d = tf.expand_dims(decoded_image_as_float, 0)
  margin_scale = 1.0 + (random_crop / 100.0)
  resize_scale = 1.0 + (random_scale / 100.0)
  margin_scale_value = tf.constant(margin_scale)
  resize_scale_value = tf.random_uniform(tensor_shape.scalar(),
                                         minval=1.0,
                                         maxval=resize_scale)
  scale_value = tf.multiply(margin_scale_value, resize_scale_value)
  precrop_width = tf.multiply(scale_value, input_width)
  precrop_height = tf.multiply(scale_value, input_height)
  precrop_shape = tf.stack([precrop_height, precrop_width])
  precrop_shape_as_int = tf.cast(precrop_shape, dtype=tf.int32)
  precropped_image = tf.image.resize_bilinear(decoded_image_4d,
                                              precrop_shape_as_int)
  precropped_image_3d = tf.squeeze(precropped_image, squeeze_dims=[0])
  cropped_image = tf.random_crop(precropped_image_3d,
                                 [input_height, input_width, input_depth])
  if flip_left_right:
    flipped_image = tf.image.random_flip_left_right(cropped_image)
  else:
    flipped_image = cropped_image
  brightness_min = 1.0 - (random_brightness / 100.0)
  brightness_max = 1.0 + (random_brightness / 100.0)
  brightness_value = tf.random_uniform(tensor_shape.scalar(),
                                       minval=brightness_min,
                                       maxval=brightness_max)
  brightened_image = tf.multiply(flipped_image, brightness_value)
  offset_image = tf.subtract(brightened_image, input_mean)
  mul_image = tf.multiply(offset_image, 1.0 / input_std)
  distort_result = tf.expand_dims(mul_image, 0, name='DistortResult')
  return jpeg_data, distort_result


def variable_summaries(var):
  """Attach a lot of summaries to a Tensor (for TensorBoard visualization)."""
  with tf.name_scope('summaries'):
    mean = tf.reduce_mean(var)
    tf.summary.scalar('mean', mean)
    with tf.name_scope('stddev'):
      stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
    tf.summary.scalar('stddev', stddev)
    tf.summary.scalar('max', tf.reduce_max(var))
    tf.summary.scalar('min', tf.reduce_min(var))
    tf.summary.histogram('histogram', var)


def add_final_training_ops(class_count, final_tensor_name, bottleneck_tensor,
                           bottleneck_tensor_size):
  """Adds a new softmax and fully-connected layer for training.

  We need to retrain the top layer to identify our new classes, so this function
  adds the
  right operations to the graph, along with some variables to hold the
  weights, and then sets up all the gradients for the backward pass.

  The set up for the softmax and fully-connected layers is based on:
  https://www.tensorflow.org/versions/master/tutorials/mnist/beginners/index.html

  Args:
    class_count: Integer of how many categories of things we're trying to
      recognize.
    final_tensor_name: Name string for the new final node that produces results.
    bottleneck_tensor: The output of the main CNN graph.
    bottleneck_tensor_size: How many entries in the bottleneck vector.

  Returns:
    The tensors for the training and cross entropy results, and tensors for the
    bottleneck input and ground truth input.
  """
  with tf.name_scope('input'):
    bottleneck_input = tf.placeholder_with_default(
        bottleneck_tensor,
        shape=[None, bottleneck_tensor_size],
        name='BottleneckInputPlaceholder')

    ground_truth_input = tf.placeholder(tf.float32,
                                        [None, class_count],
                                        name='GroundTruthInput')

  # Organizing the following ops as `final_training_ops` so they're easier
  # to see in TensorBoard
  layer_name = 'final_training_ops'
  with tf.name_scope(layer_name):
    with tf.name_scope('weights'):
      initial_value = tf.truncated_normal(
          [bottleneck_tensor_size, class_count], stddev=0.001)
      layer_weights = tf.Variable(initial_value, name='final_weights')
      variable_summaries(layer_weights)
    with tf.name_scope('biases'):
      layer_biases = tf.Variable(tf.zeros([class_count]), name='final_biases')
      variable_summaries(layer_biases)
    with tf.name_scope('Wx_plus_b'):
      logits = tf.matmul(bottleneck_input, layer_weights) + layer_biases
      tf.summary.histogram('pre_activations', logits)

  final_tensor = tf.nn.softmax(logits, name=final_tensor_name)
  tf.summary.histogram('activations', final_tensor)

  with tf.name_scope('cross_entropy'):
    cross_entropy = tf.nn.softmax_cross_entropy_with_logits(
        labels=ground_truth_input, logits=logits)
    with tf.name_scope('total'):
      cross_entropy_mean = tf.reduce_mean(cross_entropy)
  tf.summary.scalar('cross_entropy', cross_entropy_mean)

  with tf.name_scope('train'):
    optimizer = tf.train.GradientDescentOptimizer(FLAGS.learning_rate)
    train_step = optimizer.minimize(cross_entropy_mean)

  return (train_step, cross_entropy_mean, bottleneck_input, ground_truth_input,
          final_tensor)


def add_evaluation_step(result_tensor, ground_truth_tensor):
  """Inserts the operations we need to evaluate the accuracy of our results.

  Args:
    result_tensor: The new final node that produces results.
    ground_truth_tensor: The node we feed ground truth data into.

  Returns:
    Tuple of (evaluation step, prediction, true-positive count).
  """
  with tf.name_scope('accuracy'):
    with tf.name_scope('correct_prediction'):
      prediction = tf.argmax(result_tensor, 1)
      correct_prediction = tf.equal(
          prediction, tf.argmax(ground_truth_tensor, 1))
    # false positive
    # Only useful for two-category classification for now.
    with tf.name_scope('accuracy'):
      evaluation_step = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    with tf.name_scope('ture_positive'):
      prediction = tf.argmax(result_tensor, 1)
      first = tf.shape(prediction)[0]
      # second=tf.shape(prediction)[2]
      contrast = tf.constant(2, dtype=tf.int64)
      # With two classes (indices 0 and 1), prediction + truth >= 2 holds only
      # when both are 1, i.e. for a true positive.
      correct_positive = tf.greater_equal(
          tf.add(prediction, tf.argmax(ground_truth_tensor, 1)), contrast)
      all_positive = tf.greater_equal(
          tf.add(prediction, tf.constant(2, dtype=tf.int64)), contrast)
      correct_positive = tf.cast(correct_positive, tf.int32)
      all_positive = tf.cast(all_positive, tf.int32)
      evaluation_alter_step = tf.reduce_sum(correct_positive)
  tf.summary.scalar('accuracy', evaluation_step)
  tf.summary.scalar('ture_positive', evaluation_alter_step)
  return evaluation_step, prediction, evaluation_alter_step


def save_graph_to_file(sess, graph, graph_file_name):
  output_graph_def = graph_util.convert_variables_to_constants(
      sess, graph.as_graph_def(), [FLAGS.final_tensor_name])
  with gfile.FastGFile(graph_file_name, 'wb') as f:
    f.write(output_graph_def.SerializeToString())
  return


def prepare_file_system():
  # Setup the directory we'll write summaries to for TensorBoard
  if tf.gfile.Exists(FLAGS.summaries_dir):
    tf.gfile.DeleteRecursively(FLAGS.summaries_dir)
  tf.gfile.MakeDirs(FLAGS.summaries_dir)
  if FLAGS.intermediate_store_frequency > 0:
    ensure_dir_exists(FLAGS.intermediate_output_graphs_dir)
  return


def create_model_info(architecture):
  """Given the name of a model architecture, returns information about it.

  There are different base image recognition pretrained models that can be
  retrained using transfer learning, and this function translates from the name
  of a model to the attributes that are needed to download and train with it.

  Args:
    architecture: Name of a model architecture.

  Returns:
    Dictionary of information about the model, or None if the name isn't
    recognized

  Raises:
    ValueError: If architecture name is unknown.
  """
  architecture = architecture.lower()
  if architecture == 'inception_v3':
    # pylint: disable=line-too-long
    data_url = 'http://download.tensorflow.org/models/image/imagenet/inception-2015-12-05.tgz'
    # pylint: enable=line-too-long
    bottleneck_tensor_name = 'pool_3/_reshape:0'
    bottleneck_tensor_size = 2048
    input_width = 299
    input_height = 299
    input_depth = 3
    resized_input_tensor_name = 'Mul:0'
    model_file_name = 'classify_image_graph_def.pb'
    input_mean = 128
    input_std = 128
  elif architecture.startswith('mobilenet_'):  # e.g. mobilenet_1.0_224
    parts = architecture.split('_')
    if len(parts) != 3 and len(parts) != 4:
      tf.logging.error("Couldn't understand architecture name '%s'",
                       architecture)
      return None
    version_string = parts[1]
    if (version_string != '1.0' and version_string != '0.75' and
        version_string != '0.50' and version_string != '0.25'):
      tf.logging.error(
          """The Mobilenet version should be '1.0', '0.75', '0.50', or '0.25',
  but found '%s' for architecture '%s'""",
          version_string, architecture)
      return None
    size_string = parts[2]
    if (size_string != '224' and size_string != '192' and
        size_string != '160' and size_string != '128'):
      tf.logging.error(
          """The Mobilenet input size should be '224', '192', '160', or '128',
 but found '%s' for architecture '%s'""",
          size_string, architecture)
      return None
    if len(parts) == 3:
      is_quantized = False
    else:
      if parts[3] != 'quantized':
        tf.logging.error(
            "Couldn't understand architecture suffix '%s' for '%s'", parts[3],
            architecture)
        return None
      is_quantized = True
    data_url = 'http://download.tensorflow.org/models/mobilenet_v1_'
    data_url += version_string + '_' + size_string + '_frozen.tgz'
    bottleneck_tensor_name = 'MobilenetV1/Predictions/Reshape:0'
    bottleneck_tensor_size = 1001
    input_width = int(size_string)
    input_height = int(size_string)
    input_depth = 3
    resized_input_tensor_name = 'input:0'
    if is_quantized:
      model_base_name = 'quantized_graph.pb'
    else:
      model_base_name = 'frozen_graph.pb'
    model_dir_name = 'mobilenet_v1_' + version_string + '_' + size_string
    model_file_name = os.path.join(model_dir_name, model_base_name)
    input_mean = 127.5
    input_std = 127.5
  else:
    tf.logging.error("Couldn't understand architecture name '%s'", architecture)
    raise ValueError('Unknown architecture', architecture)

  return {
      'data_url': data_url,
      'bottleneck_tensor_name': bottleneck_tensor_name,
      'bottleneck_tensor_size': bottleneck_tensor_size,
      'input_width': input_width,
      'input_height': input_height,
      'input_depth': input_depth,
      'resized_input_tensor_name': resized_input_tensor_name,
      'model_file_name': model_file_name,
      'input_mean': input_mean,
      'input_std': input_std,
  }


def add_jpeg_decoding(input_width, input_height, input_depth, input_mean,
                      input_std):
  """Adds operations that perform JPEG decoding and resizing to the graph.

  Args:
    input_width: Desired width of the image fed into the recognizer graph.
    input_height: Desired height of the image fed into the recognizer graph.
    input_depth: Desired channels of the image fed into the recognizer graph.
    input_mean: Pixel value that should be zero in the image for the graph.
    input_std: How much to divide the pixel values by before recognition.

  Returns:
    Tensors for the node to feed JPEG data into, and the output of the
    preprocessing steps.
  """
  jpeg_data = tf.placeholder(tf.string, name='DecodeJPGInput')
  decoded_image = 
tf.image.decode_jpeg(jpeg_data, channels=input_depth)decoded_image_as_float = tf.cast(decoded_image, dtype=tf.float32)decoded_image_4d = tf.expand_dims(decoded_image_as_float, 0)resize_shape = tf.stack([input_height, input_width])resize_shape_as_int = tf.cast(resize_shape, dtype=tf.int32)resized_image = tf.image.resize_bilinear(decoded_image_4d,resize_shape_as_int)offset_image = tf.subtract(resized_image, input_mean)mul_image = tf.multiply(offset_image, 1.0 / input_std)return jpeg_data, mul_imagedef main(_):# Needed to make sure the logging output is visible.# See https://github.com/tensorflow/tensorflow/issues/3047tf.logging.set_verbosity(tf.logging.INFO)# Prepare necessary directories that can be used during trainingprepare_file_system()# Gather information about the model architecture we'll be using.model_info = create_model_info(FLAGS.architecture)if not model_info:tf.logging.error('Did not recognize architecture flag')return -1# Set up the pre-trained graph.maybe_download_and_extract(model_info['data_url'])graph, bottleneck_tensor, resized_image_tensor = (create_model_graph(model_info))# Look at the folder structure, and create lists of all the images.image_lists = create_image_lists(FLAGS.image_dir, FLAGS.testing_percentage,FLAGS.validation_percentage)class_count = len(image_lists.keys())if class_count == 0:tf.logging.error('No valid folders of images found at ' + FLAGS.image_dir)return -1if class_count == 1:tf.logging.error('Only one valid folder of images found at ' +FLAGS.image_dir +' - multiple classes are needed for classification.')return -1# See if the command-line flags mean we're applying any distortions.do_distort_images = should_distort_images(FLAGS.flip_left_right, FLAGS.random_crop, FLAGS.random_scale,FLAGS.random_brightness)with tf.Session(graph=graph) as sess:# Set up the image decoding sub-graph.jpeg_data_tensor, decoded_image_tensor = add_jpeg_decoding(model_info['input_width'], model_info['input_height'],model_info['input_depth'], 
model_info['input_mean'],model_info['input_std'])if do_distort_images:# We will be applying distortions, so setup the operations we'll need.(distorted_jpeg_data_tensor,distorted_image_tensor) = add_input_distortions(FLAGS.flip_left_right, FLAGS.random_crop, FLAGS.random_scale,FLAGS.random_brightness, model_info['input_width'],model_info['input_height'], model_info['input_depth'],model_info['input_mean'], model_info['input_std'])else:# We'll make sure we've calculated the 'bottleneck' image summaries and# cached them on disk.cache_bottlenecks(sess, image_lists, FLAGS.image_dir,FLAGS.bottleneck_dir, jpeg_data_tensor,decoded_image_tensor, resized_image_tensor,bottleneck_tensor, FLAGS.architecture)# Add the new layer that we'll be training.(train_step, cross_entropy, bottleneck_input, ground_truth_input,final_tensor) = add_final_training_ops(len(image_lists.keys()), FLAGS.final_tensor_name, bottleneck_tensor,model_info['bottleneck_tensor_size'])# Create the operations we need to evaluate the accuracy of our new layer.evaluation_step, prediction,evaluation_alter_step = add_evaluation_step(final_tensor, ground_truth_input)# Merge all the summaries and write them out to the summaries_dirmerged = tf.summary.merge_all()train_writer = tf.summary.FileWriter(FLAGS.summaries_dir + '/train',sess.graph)validation_writer = tf.summary.FileWriter(FLAGS.summaries_dir + '/validation')# Set up all our weights to their initial default values.init = tf.global_variables_initializer()sess.run(init)# Run the training for as many cycles as requested on the command line.for i in range(FLAGS.how_many_training_steps):# Get a batch of input bottleneck values, either calculated fresh every# time with distortions applied, or from the cache stored on disk.if do_distort_images:(train_bottlenecks,train_ground_truth) = get_random_distorted_bottlenecks(sess, image_lists, FLAGS.train_batch_size, 'training',FLAGS.image_dir, distorted_jpeg_data_tensor,distorted_image_tensor, resized_image_tensor, 
bottleneck_tensor)else:(train_bottlenecks,train_ground_truth, _) = get_random_cached_bottlenecks(sess, image_lists, FLAGS.train_batch_size, 'training',FLAGS.bottleneck_dir, FLAGS.image_dir, jpeg_data_tensor,decoded_image_tensor, resized_image_tensor, bottleneck_tensor,FLAGS.architecture)# Feed the bottlenecks and ground truth into the graph, and run a training# step. Capture training summaries for TensorBoard with the `merged` op.train_summary, _ = sess.run([merged, train_step],feed_dict={bottleneck_input: train_bottlenecks,ground_truth_input: train_ground_truth})train_writer.add_summary(train_summary, i)# Every so often, print out how well the graph is training.is_last_step = (i + 1 == FLAGS.how_many_training_steps)if (i % FLAGS.eval_step_interval) == 0 or is_last_step:train_accuracy, cross_entropy_value = sess.run([evaluation_step, cross_entropy],feed_dict={bottleneck_input: train_bottlenecks,ground_truth_input: train_ground_truth})tf.logging.info('%s: Step %d: Train accuracy = %.1f%%' %(datetime.now(), i, train_accuracy * 100))tf.logging.info('%s: Step %d: Cross entropy = %f' %(datetime.now(), i, cross_entropy_value))validation_bottlenecks, validation_ground_truth, _ = (get_random_cached_bottlenecks(sess, image_lists, FLAGS.validation_batch_size, 'validation',FLAGS.bottleneck_dir, FLAGS.image_dir, jpeg_data_tensor,decoded_image_tensor, resized_image_tensor, bottleneck_tensor,FLAGS.architecture))# Run a validation step and capture training summaries for TensorBoard# with the `merged` op.validation_summary, validation_accuracy = sess.run([merged, evaluation_step],feed_dict={bottleneck_input: validation_bottlenecks,ground_truth_input: validation_ground_truth})validation_writer.add_summary(validation_summary, i)tf.logging.info('%s: Step %d: Validation accuracy = %.1f%% (N=%d)' %(datetime.now(), i, validation_accuracy * 100,len(validation_bottlenecks)))# Store intermediate resultsintermediate_frequency = FLAGS.intermediate_store_frequencyif (intermediate_frequency > 
0 and (i % intermediate_frequency == 0)and i > 0):intermediate_file_name = (FLAGS.intermediate_output_graphs_dir +'intermediate_' + str(i) + '.pb')tf.logging.info('Save intermediate result to : ' +intermediate_file_name)save_graph_to_file(sess, graph, intermediate_file_name)# model_name = FLAGS.intermediate_output_graphs_dir + str(i) + '_' + 'my-model.ckpt'# saver.save(sess,model_name)# We've completed all our training, so run a final test evaluation on# some new images we haven't used before.test_bottlenecks, test_ground_truth, test_filenames = (get_random_cached_bottlenecks(sess, image_lists, FLAGS.test_batch_size, 'testing',FLAGS.bottleneck_dir, FLAGS.image_dir, jpeg_data_tensor,decoded_image_tensor, resized_image_tensor, bottleneck_tensor,FLAGS.architecture))test_accuracy, predictions = sess.run([evaluation_step, prediction],feed_dict={bottleneck_input: test_bottlenecks,ground_truth_input: test_ground_truth})tf.logging.info('Final test accuracy = %.1f%% (N=%d)' %(test_accuracy * 100, len(test_bottlenecks)))if FLAGS.print_misclassified_test_images:tf.logging.info('=== MISCLASSIFIED TEST IMAGES ===')for i, test_filename in enumerate(test_filenames):if predictions[i] != test_ground_truth[i].argmax():tf.logging.info('%70s %s' %(test_filename,list(image_lists.keys())[predictions[i]]))# Write out the trained graph and labels with the weights stored as# constants.save_graph_to_file(sess, graph, FLAGS.output_graph)with gfile.FastGFile(FLAGS.output_labels, 'w') as f:f.write('\n'.join(image_lists.keys()) + '\n')if __name__ == '__main__':parser = argparse.ArgumentParser()parser.add_argument('--image_dir',type=str,default = '/media/ubuntu_data2/02_dataset/视频质量诊断现场视频/深度学习质量诊断/MobileNet_V2训练测试/TrainData/Train/',# default='/media/chou/TF/TFcode/hongshi_person_classify/retrain/trainingData',help='Path to folders of labeled images.')parser.add_argument('--output_graph',type=str,# default='/media/chou/TF/TFcode/hongshi_person_classify/retrain/modelForWang/topRec_8_06.pb',default 
= './Occlusion_cls.pb',help='Where to save the trained graph.')parser.add_argument('--intermediate_output_graphs_dir',type=str,default='./Occlusion_cls222/',help='Where to save the intermediate graphs.')parser.add_argument('--intermediate_store_frequency',type=int,default=100,help="""\How many steps to store intermediate graph. If "0" then will notstore.\""")parser.add_argument('--output_labels',type=str,default='./Occlusion_cls.txt',help='Where to save the trained graph\'s labels.')parser.add_argument('--summaries_dir',type=str,default='./model/',help='Where to save summary logs for TensorBoard.')parser.add_argument('--how_many_training_steps',type=int,default=10000,help='How many training steps to run before ending.')parser.add_argument('--learning_rate',type=float,default=0.001,help='How large a learning rate to use when training.')parser.add_argument('--testing_percentage',type=int,default=1,help='What percentage of images to use as a test set.')parser.add_argument('--validation_percentage',type=int,default=50,help='What percentage of images to use as a validation set.')parser.add_argument('--eval_step_interval',type=int,default=150,help='How often to evaluate the training results.')parser.add_argument('--train_batch_size',type=int,default=1024, #60help='How many images to train on at a time.')parser.add_argument('--test_batch_size',type=int,default=-1,help="""\How many images to test on. This test set is only used once, to evaluatethe final accuracy of the model after training completes.A value of -1 causes the entire test set to be used, which leads to morestable results across runs.\""")parser.add_argument('--validation_batch_size',type=int,default=2000,help="""\How many images to use in an evaluation batch. 
This validation set isused much more often than the test set, and is an early indicator of howaccurate the model is during training.A value of -1 causes the entire validation set to be used, which leads tomore stable results across training iterations, but may be slower on largetraining sets.\""")parser.add_argument('--print_misclassified_test_images',default=False,help="""\Whether to print out a list of all misclassified test images.\""",action='store_true')parser.add_argument('--model_dir',type=str,default='./tmp/imagenet',help="""\Path to classify_image_graph_def.pb,imagenet_synset_to_human_label_map.txt, andimagenet_2012_challenge_label_map_proto.pbtxt.\""")parser.add_argument('--bottleneck_dir',type=str,default='./tmp/bottleneck',help='Path to cache bottleneck layer values as files.')parser.add_argument('--final_tensor_name',type=str,default='final_result',help="""\The name of the output classification layer in the retrained graph.\""")parser.add_argument('--flip_left_right',default=True,help="""\Whether to randomly flip half of the training images horizontally.\""",action='store_true')parser.add_argument('--random_crop',type=int,default=0,help="""\A percentage determining how much of a margin to randomly crop off thetraining images.\""")parser.add_argument('--random_scale',type=int,default=0,help="""\A percentage determining how much to randomly scale up the size of thetraining images by.\""")parser.add_argument('--random_brightness',type=int,default=0,help="""\A percentage determining how much to randomly multiply the training imageinput pixels up or down by.\""")parser.add_argument('--architecture',type=str,default='mobilenet_1.0_224', #''mobilenet_1.0_224',help="""\Which model architecture to use. 'inception_v3' is the most accurate, butalso the slowest. For faster or smaller models, chose a MobileNet with theform 'mobilenet_<parameter size>_<input_size>[_quantized]'. 
For example,'mobilenet_1.0_224' will pick a model that is 17 MB in size and takes 224pixel input images, while 'mobilenet_0.25_128_quantized' will choose a muchless accurate, but smaller and faster network that's 920 KB on disk andtakes 128x128 images. See https://research.googleblog.com/2017/06/mobilenets-open-source-models-for.htmlfor more information on Mobilenet.\""")FLAGS, unparsed = parser.parse_known_args()tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)
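The way `create_model_info` interprets a MobileNet architecture name can be tried out without TensorFlow. The sketch below is a simplified stand-alone version, not part of retrain.py: the function name `parse_mobilenet_name` and the keys of the returned dictionary are made up for illustration, while the accepted versions, input sizes and the download URL pattern are copied from the listing above.

```python
def parse_mobilenet_name(architecture):
    """Split 'mobilenet_<version>_<size>[_quantized]' into its parts.

    Hypothetical helper mirroring the checks in create_model_info().
    Returns a dict, or None when the name does not have the expected form.
    """
    parts = architecture.lower().split('_')
    if parts[0] != 'mobilenet' or len(parts) not in (3, 4):
        return None
    version, size = parts[1], parts[2]
    if version not in ('1.0', '0.75', '0.50', '0.25'):
        return None
    if size not in ('224', '192', '160', '128'):
        return None
    if len(parts) == 4 and parts[3] != 'quantized':
        return None
    return {
        'version': version,
        'input_size': int(size),
        'quantized': len(parts) == 4,
        # Same URL pattern as in create_model_info().
        'data_url': ('http://download.tensorflow.org/models/mobilenet_v1_'
                     + version + '_' + size + '_frozen.tgz'),
    }


print(parse_mobilenet_name('mobilenet_1.0_224'))
print(parse_mobilenet_name('mobilenet_0.25_128_quantized'))
print(parse_mobilenet_name('mobilenet_0.3_224'))  # unsupported version
```

Note that the version must be written exactly as listed: `0.50` is accepted, `0.5` is not.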

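Usage: python retrain.py --image_dir ./TrainData/ --intermediate_output_graphs_dir ./model_train_0.25_224/ --learning_rate 0.001

The three arguments are, in order, the training data folder, the directory where intermediate models are saved, and the learning rate. The script builds each intermediate file name by plain string concatenation, so keep the trailing slash on the output directory. Because --architecture is not passed here, the default mobilenet_1.0_224 is used, whatever the output directory is called. For the remaining parameters, read the code carefully.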
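Before starting a long run it can help to check the data folder, because `main()` aborts when it finds zero or only one class folder. The following is a minimal sketch of such a check, not something retrain.py provides: the helper names are hypothetical, and counting only `.jpg`/`.jpeg` files is an assumption based on the script decoding images with `tf.image.decode_jpeg`.

```python
import os

# Assumption: only JPEG files count, since the training graph decodes images
# with tf.image.decode_jpeg. Adjust if your copy of retrain.py differs.
IMAGE_EXTENSIONS = ('.jpg', '.jpeg')


def count_images_per_class(image_dir):
    """Return {class_folder_name: number_of_image_files} for image_dir."""
    counts = {}
    for entry in sorted(os.listdir(image_dir)):
        class_dir = os.path.join(image_dir, entry)
        if not os.path.isdir(class_dir):
            continue  # skip plain files such as labels.txt
        counts[entry] = sum(
            1 for name in os.listdir(class_dir)
            if name.lower().endswith(IMAGE_EXTENSIONS))
    return counts


def find_dataset_problems(counts):
    """Return a list of human-readable problems found in the counts."""
    problems = []
    if len(counts) < 2:
        problems.append('at least two class folders are needed')
    for label, n in sorted(counts.items()):
        if n == 0:
            problems.append('class folder %r contains no images' % label)
    return problems


if __name__ == '__main__':
    image_dir = './TrainData/'  # same folder as in the usage line above
    if os.path.isdir(image_dir):
        counts = count_images_per_class(image_dir)
        print(counts)
        for problem in find_dataset_problems(counts):
            print('WARNING:', problem)
```

With the defaults in the listing (`--testing_percentage 1`, `--validation_percentage 50`), roughly half of each class ends up in the validation set, so small classes leave few images for training.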

4. Test code

import tensorflow as tf
import os
from tensorflow.python.platform import gfile
from tensorflow.python.framework import graph_util
import cv2
import numpy as np
import argparse
from PIL import Image
from matplotlib import pyplot as plt
import tensorflow.contrib.graph_editor as ge

os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
os.environ["CUDA_VISIBLE_DEVICES"] = "1"
gpu_options = tf.GPUOptions(allow_growth=True)
sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options))


def GetImgNameByEveryDir(file_dir, videoProperty):
    # Input: root directory. Walks every sub-directory and collects the images.
    # Output: for every image, its file name, its full path and its directory.
    if isinstance(videoProperty, str):
        # A bare string would turn `in` below into a substring test, so files
        # without an extension ('' in '.jpg') would be picked up as well.
        videoProperty = [videoProperty]
    FileNameWithPath = []
    FileName = []
    FileDir = []
    # videoProperty=['.png','jpg','bmp']
    for root, dirs, files in os.walk(file_dir):
        for file in files:
            if os.path.splitext(file)[1] in videoProperty:
                FileNameWithPath.append(os.path.join(root, file))  # image path
                FileName.append(file)  # image file name
                FileDir.append(root[len(file_dir):])  # folder containing the image
    return FileName, FileNameWithPath, FileDir


def append_prepocess_input_in_graph(graph_filedir):
    '''
    2017/9/8:
    append an custom input layer into model graph
    '''
    with tf.Session() as sess2:
        # image_data = tf.gfile.FastGFile('./mutilabeltrain/3.jpg', 'rb').read()
        prepocess = image_process(sess2)
        graph_def = load_graph(graph_filedir)
        # Feed the preprocessing output into the model's 'input' node.
        tf.import_graph_def(graph_def, input_map={'input': prepocess})
        for n in tf.get_default_graph().as_graph_def().node:
            print(n.name)
        return tf.get_default_graph().as_graph_def()


def load_graph(filename):
    """Unpersists graph from file as default graph."""
    with tf.gfile.FastGFile(filename, 'rb') as f:
        graph_def = tf.GraphDef()
        graph_def.ParseFromString(f.read())
    return graph_def


def image_process(sess, mean=127.5, std=127.5):
    # liadiyuan: everything below is taken from the preprocessing code in
    # retrain.py; see that script for reference.
    # 2017-9-6: use the passed-in sess directly; the original session was removed.
    with tf.name_scope('preprocess'):
        jpeg_data = tf.placeholder(tf.string, name='OriginalInput')
        decoded_image = tf.image.decode_jpeg(jpeg_data, channels=3, name='DecodedImage')
        decoded_image_as_float = tf.cast(decoded_image, dtype=tf.uint8, name='cast')
        decoded_image_4d = tf.expand_dims(decoded_image_as_float, 0, name='expenddim')
        # Decode the image and expand it from rank 3 to rank 4 (add a batch axis).
        # The 128x128 size is hard-coded and has to match the input size of the
        # model being tested.
        resize_shape = tf.stack([128, 128])
        resize_shape_as_int = tf.cast(resize_shape, dtype=tf.int32)
        preprocessed = tf.image.resize_bilinear(decoded_image_4d, resize_shape_as_int, name='Resize')
        preprocessed = tf.cast(preprocessed, dtype=tf.float32)
        # Normalize the image size.
        offset_image = tf.subtract(preprocessed, mean)
        preprocessed = tf.multiply(offset_image, 1.0 / std)
        # Zero-mean, variance-normalized image sample?
    return preprocessed


def Test(Graph_Def, TestDir, FileProperty, input_layer_name, output_layer_name, SaveResultDir):
    FileName, FileNameWithPath, FileDir = GetImgNameByEveryDir(TestDir, FileProperty)
    graph_def = append_prepocess_input_in_graph(Graph_Def)
    tf.import_graph_def(graph_def)
    label_graph = tf.get_default_graph()
    print(list(tf.get_default_graph().as_graph_def().node)[-1])
    with tf.Session() as sess:
        clsResult = {}
        ImageNumber = 0
        for k in range(len(FileName)):
            if FileProperty in ['.avi', '.mp4']:
                FileNum = 0
                break
            if FileProperty in ['.jpg']:
                frame = cv2.imread(FileNameWithPath[k])
                if frame is None:
                    continue  # skip files that OpenCV cannot read
                # Keep only the top 60% of the image.
                frame = frame[0:int(0.6 * frame.shape[0]), 0:frame.shape[1], :]
                image_data_path = SaveResultDir + '_TemporarySave.jpg'
                cv2.imwrite(image_data_path, frame)
                image_data = tf.gfile.FastGFile(image_data_path, 'rb').read()
                input_layer = label_graph.get_tensor_by_name(input_layer_name)
                softmax_tensor = label_graph.get_tensor_by_name('import/' + output_layer_name)
                predictions = sess.run(softmax_tensor, {input_layer: image_data})
                print("frame number = ", k, predictions)
                font = cv2.FONT_HERSHEY_SIMPLEX
                if predictions[0][0] > 0.75:
                    imgzi = cv2.putText(frame, 'P', (int(frame.shape[1] / 2), int(frame.shape[0] / 2)), font, 1.2, (0, 0, 255), 2)
                    cv2.imshow(" ", frame)
                    cv2.waitKey(0)
                    cv2.imwrite(SaveResultDir + str(k) + '_' + '.jpg', frame)
                else:
                    imgzi = cv2.putText(frame, 'N', (int(frame.shape[1] / 2), int(frame.shape[0] / 2)), font, 1.2, (255, 0, 0), 2)
                    cv2.imshow(" ", frame)
                    cv2.waitKey(0)
                    cv2.imwrite(SaveResultDir + str(k) + '_' + '.jpg', frame)


def main(args):
    SaveResultDir = args.SaveDir
    TestDir = args.TestDir
    FileProperty = args.FileProperty
    InputLayer = args.InputLayer
    OutputLayer = args.OutputLayer
    GraphDef = args.GraphDef
    if os.path.exists(SaveResultDir) == False:
        os.makedirs(SaveResultDir)
    Test(GraphDef, TestDir, FileProperty, InputLayer, OutputLayer, SaveResultDir)


def parse_args():
    parser = argparse.ArgumentParser()
    parser.add_argument('--TestDir', default = './TestData/测试图片/')
    parser.add_argument('--FileProperty', default = '.jpg')
    parser.add_argument('--SaveDir', default = './TestData/测试图片结果/')
    # parser.add_argument('--GraphDef',default = './model_train_1/intermediate_300.pb')
    parser.add_argument('--GraphDef', default = './model_train_1.0_128/intermediate_200.pb')
    parser.add_argument('--InputLayer', default = 'preprocess/OriginalInput:0')
    parser.add_argument('--OutputLayer', default = 'final_result:0')
    return parser.parse_args()


if __name__ == '__main__':
    main(parse_args())

For the details of the test script, read the code.
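Two numeric steps in the test script are easy to check by hand: the pixel normalization in `image_process` and the 0.75 threshold on the first class score in `Test`. The sketch below restates both in plain Python, under the assumption that `predictions[0]` holds the scores for a single image; the function names `normalize_pixel` and `label_for` are hypothetical and do not appear in the script.

```python
def normalize_pixel(value, mean=127.5, std=127.5):
    """Map a pixel value in [0, 255] to [-1, 1], as image_process() does."""
    return (value - mean) / std


def label_for(scores, threshold=0.75):
    """Return 'P' when the first class score exceeds the threshold, else 'N'.

    Mirrors the `predictions[0][0] > 0.75` test in the script; `scores` is
    the list of class scores for one image.
    """
    return 'P' if scores[0] > threshold else 'N'


print(normalize_pixel(0), normalize_pixel(127.5), normalize_pixel(255))
print(label_for([0.9, 0.1]), label_for([0.6, 0.4]))
```

Since only the first score is compared against the threshold, the outcome depends on which class comes first in the labels file written by the training script.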
