This article introduces Dataset 006: Chinese Herbal Medicine Recognition Dataset (with download link).

Dataset overview:

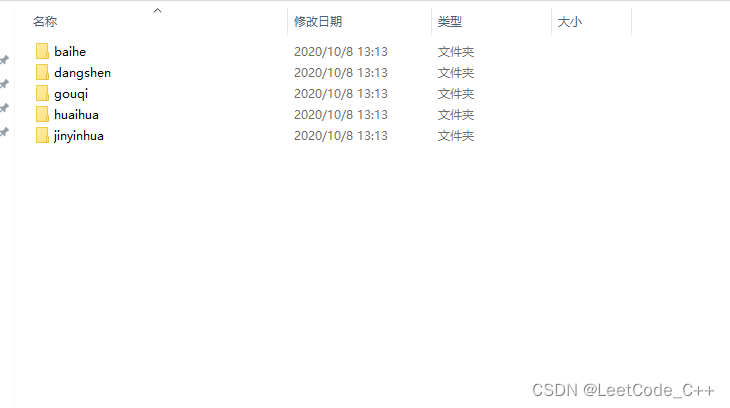

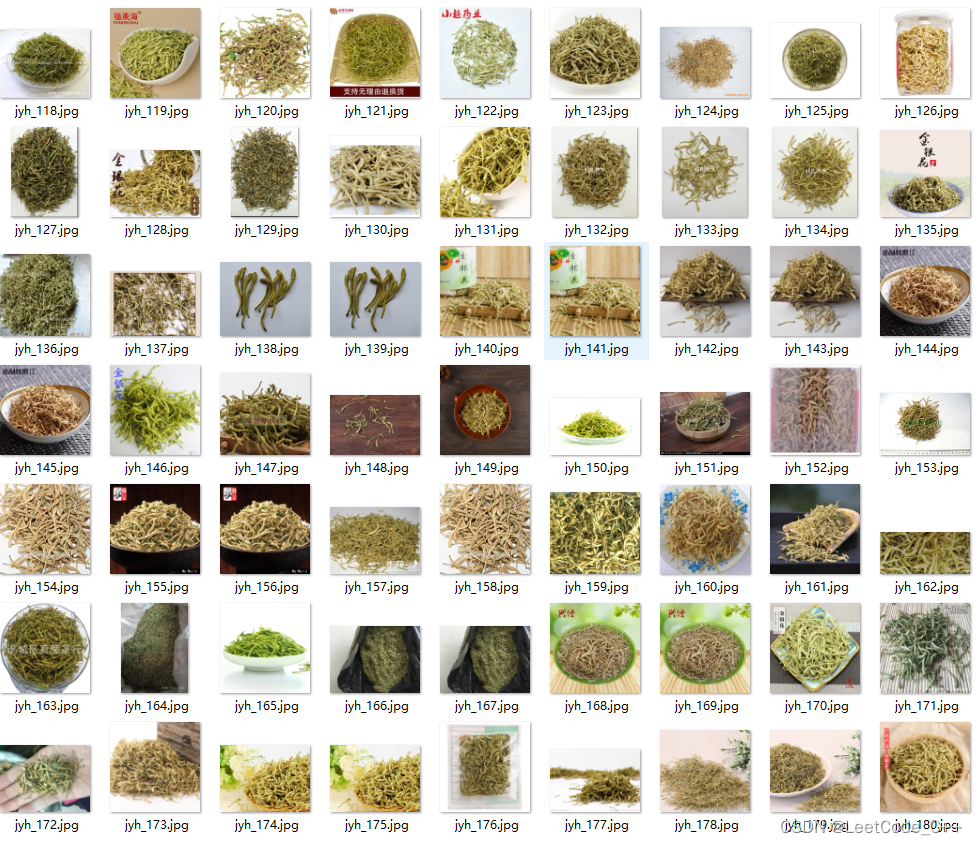

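The dataset contains 900 images of Chinese herbal medicines in 5 classes: lily (百合), goji berry (枸杞), codonopsis root (党参), pagoda tree flower (槐花), and honeysuckle (金银花).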
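Before generating the data lists, it can help to confirm that the download matches the description above (5 classes, 900 images). The following is a minimal sketch. It assumes the archive is extracted so that each class is a sub-folder of a `Chinese Medicine/` directory, which is the layout `get_data_list` expects. The function name `count_images_per_class` is hypothetical and not part of the original code.

```python
import os


def count_images_per_class(root: str) -> dict:
    """Count the files in each class sub-folder of `root`.

    Hypothetical helper: skips macOS `.DS_Store` entries and anything
    that is not a directory at the top level.
    """
    counts = {}
    for class_dir in sorted(os.listdir(root)):
        class_path = os.path.join(root, class_dir)
        if class_dir == ".DS_Store" or not os.path.isdir(class_path):
            continue
        # Count every file in the class folder except .DS_Store
        counts[class_dir] = sum(
            1 for name in os.listdir(class_path) if name != ".DS_Store"
        )
    return counts
```

If the download is complete, calling `count_images_per_class(target_path + "Chinese Medicine/")` should report five classes whose counts sum to 900. Unlike `get_data_list`, this sketch also skips `.DS_Store` files inside the class folders.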

Code excerpt:

```python
import os
import json
import random

import numpy as np
from PIL import Image
from paddle.io import Dataset

# NOTE: `train_parameters` is a global config dict defined elsewhere in the
# original project (not shown); it provides 'label_dict', 'class_dim' and 'readme_path'.


def get_data_list(target_path, train_list_path, eval_list_path):
    '''Generate the train/eval data lists.'''
    # Information about every class
    class_detail = []
    # Folder names of all classes
    data_list_path = target_path + "Chinese Medicine/"
    class_dirs = os.listdir(data_list_path)
    # Total number of images
    all_class_images = 0
    # Current class label
    class_label = 0
    # Number of classes
    class_dim = 0
    # Lines to be written to eval.txt and train.txt
    trainer_list = []
    eval_list = []
    # Iterate over the class folders (one per herb)
    for class_dir in class_dirs:
        if class_dir != ".DS_Store":
            class_dim += 1
            # Per-class information
            class_detail_list = {}
            eval_sum = 0
            trainer_sum = 0
            # Number of images in this class
            class_sum = 0
            # Path of this class folder
            path = data_list_path + class_dir
            # All images in the folder
            img_paths = os.listdir(path)
            for img_path in img_paths:  # iterate over each image in the folder
                name_path = path + '/' + img_path  # full path of the image
                if class_sum % 8 == 0:  # every 8th image goes to the eval set
                    eval_sum += 1  # eval_sum counts eval images
                    eval_list.append(name_path + "\t%d" % class_label + "\n")
                else:
                    trainer_sum += 1  # trainer_sum counts training images
                    trainer_list.append(name_path + "\t%d" % class_label + "\n")
                class_sum += 1  # images in this class
                all_class_images += 1  # images across all classes

            # class_detail entry for the description JSON file
            class_detail_list['class_name'] = class_dir  # class name
            class_detail_list['class_label'] = class_label  # class label
            class_detail_list['class_eval_images'] = eval_sum  # eval images in this class
            class_detail_list['class_trainer_images'] = trainer_sum  # training images in this class
            class_detail.append(class_detail_list)
            # Fill in the label dictionary
            train_parameters['label_dict'][str(class_label)] = class_dir
            class_label += 1

    # Record the number of classes
    train_parameters['class_dim'] = class_dim

    # Shuffle and write the lists. The files are opened in append mode ('a'),
    # so empty them before re-running to avoid duplicate entries.
    random.shuffle(eval_list)
    with open(eval_list_path, 'a') as f:
        for eval_image in eval_list:
            f.write(eval_image)

    random.shuffle(trainer_list)
    with open(train_list_path, 'a') as f2:
        for train_image in trainer_list:
            f2.write(train_image)

    # Description JSON file
    readjson = {}
    readjson['all_class_name'] = data_list_path  # parent directory of the class folders
    readjson['all_class_images'] = all_class_images
    readjson['class_detail'] = class_detail
    jsons = json.dumps(readjson, sort_keys=True, indent=4, separators=(',', ': '))
    with open(train_parameters['readme_path'], 'w') as f:
        f.write(jsons)
    print('Data list generation complete!')


class dataset(Dataset):
    def __init__(self, data_path, mode='train'):
        """
        Data reader.
        :param data_path: directory containing train.txt / eval.txt
        :param mode: 'train' or 'eval'
        """
        super().__init__()
        self.data_path = data_path
        self.img_paths = []
        self.labels = []
        # Each line of the list file is "<image path>\t<label>"
        list_file = "train.txt" if mode == 'train' else "eval.txt"
        with open(os.path.join(self.data_path, list_file), "r", encoding="utf-8") as f:
            self.info = f.readlines()
        for img_info in self.info:
            img_path, label = img_info.strip().split('\t')
            self.img_paths.append(img_path)
            self.labels.append(int(label))

    def __getitem__(self, index):
        """
        Fetch one sample.
        :param index: sample index
        :return: float32 CHW image scaled to [0, 1], int64 label of shape [1]
        """
        # Open the image file and look up its label
        img_path = self.img_paths[index]
        img = Image.open(img_path)
        if img.mode != 'RGB':
            img = img.convert('RGB')
        img = img.resize((224, 224), Image.BILINEAR)
        img = np.array(img).astype('float32')
        img = img.transpose((2, 0, 1)) / 255  # HWC -> CHW, scale to [0, 1]
        label = self.labels[index]
        label = np.array([label], dtype="int64")
        return img, label

    def print_sample(self, index: int = 0):
        print("File name:", self.img_paths[index], "\tLabel:", self.labels[index])

    def __len__(self):
        return len(self.img_paths)
```
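The split rule in `get_data_list` (`class_sum % 8 == 0`) sends the 1st, 9th, 17th, and so on, image of each class to `eval.txt`. As a result, a class with *k* images contributes ⌈k/8⌉ eval images, which is roughly 12.5%. The stdlib-only sketch below isolates that rule, along with the `path<TAB>label` line format that `dataset.__init__` parses back. The helper names `split_every_nth` and `parse_list_line` are hypothetical and not part of the original code.

```python
def split_every_nth(paths, label, n=8):
    """Mirror get_data_list's rule: indices 0, n, 2n, ... go to eval, the rest to train."""
    train_lines, eval_lines = [], []
    for i, path in enumerate(paths):
        # Same "path<TAB>label" format that is written to train.txt / eval.txt
        line = f"{path}\t{label}\n"
        if i % n == 0:
            eval_lines.append(line)
        else:
            train_lines.append(line)
    return train_lines, eval_lines


def parse_list_line(line):
    """Inverse of the line format, as done in dataset.__init__."""
    path, label = line.strip().split("\t")
    return path, int(label)
```

`os.listdir` returns entries in arbitrary order, so the specific files that land in the eval set depend on the file system. Sorting first (for example, `sorted(os.listdir(path))`) would make the split reproducible across machines.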

```python
import paddle

# VGGNet, train_loader, train_parameters and draw_process are defined
# elsewhere in the original project (not shown here).
model = VGGNet()
model.train()

cross_entropy = paddle.nn.CrossEntropyLoss()
optimizer = paddle.optimizer.Adam(learning_rate=train_parameters['learning_strategy']['lr'],
                                  parameters=model.parameters())

steps = 0
Iters, total_loss, total_acc = [], [], []

for epo in range(train_parameters['num_epochs']):
    for _, data in enumerate(train_loader()):
        steps += 1
        x_data = data[0]
        y_data = data[1]
        predicts, acc = model(x_data, y_data)
        loss = cross_entropy(predicts, y_data)
        loss.backward()
        optimizer.step()
        optimizer.clear_grad()
        if steps % train_parameters["skip_steps"] == 0:
            Iters.append(steps)
            # .item() works for both 0-D and shape-[1] tensors; loss.numpy()[0]
            # fails when the mean loss is a 0-D tensor (newer Paddle releases)
            total_loss.append(loss.item())
            total_acc.append(acc.item())
            # Print intermediate progress
            print('epo: {}, step: {}, loss is: {}, acc is: {}'
                  .format(epo, steps, loss.item(), acc.item()))
        # Save model parameters periodically
        if steps % train_parameters["save_steps"] == 0:
            save_path = train_parameters["checkpoints"] + "/" + "save_dir_" + str(steps) + '.pdparams'
            print('save model to: ' + save_path)
            paddle.save(model.state_dict(), save_path)

# Save the final parameters
paddle.save(model.state_dict(), train_parameters["checkpoints"] + "/" + "save_dir_final.pdparams")

draw_process("training loss", "red", Iters, total_loss, "training loss")
draw_process("training acc", "green", Iters, total_acc, "training acc")
```

Dataset link: 中药材识别数据集 (Chinese Herbal Medicine Recognition Dataset)
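The training loop relies on two quantities. The first is `paddle.nn.CrossEntropyLoss`, which with its default arguments applies softmax to the model outputs and averages the per-sample negative log-likelihood over the batch. The second is an accuracy value returned by `VGGNet`. The model's forward pass is not shown, so treating `predicts` as raw logits and `acc` as top-1 accuracy is an assumption. Below is a pure-Python sketch of both quantities for a small batch; the function names are illustrative only.

```python
import math


def softmax_cross_entropy(logits, labels):
    """Mean softmax cross-entropy over a batch.

    logits: list of per-class score lists (assumed raw, pre-softmax)
    labels: list of integer class ids
    """
    total = 0.0
    for row, y in zip(logits, labels):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[y]  # -log softmax(row)[y]
    return total / len(labels)


def top1_accuracy(logits, labels):
    """Fraction of samples whose highest-scoring class equals the label."""
    correct = sum(1 for row, y in zip(logits, labels) if row.index(max(row)) == y)
    return correct / len(labels)
```

A uniform prediction over the five herb classes gives a loss of ln 5 ≈ 1.609. This is a useful reference point when reading the logged `loss` values.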
