[Beginner-friendly] Scraping specified PDFs from cninfo (巨潮资讯网) with Python

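Contents

- Preface

- I. Project analysis

- 1. Preparation

- 2. Overall approach

- II. Quick start

- 1. Tidying data.xlsx

- 2. Populating the initial stock information

- 3. Filtering the target stocks

- 4. Getting the page count for the target stocks

- 5. Getting the PDF URLs for the target stocks

- 6. Downloading the selected PDFs

- Summary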

Preface

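Graduation theses in accounting, finance and similar fields often need data scraped from the web, so this article shows a simple way to batch-download specified PDFs from cninfo (巨潮资讯网). The example retrieves, for given stocks and given years, the board resolution announcements, i.e. PDFs whose titles contain "董事会" (board of directors) and "决议" (resolution).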

I. Project analysis

1. Preparation

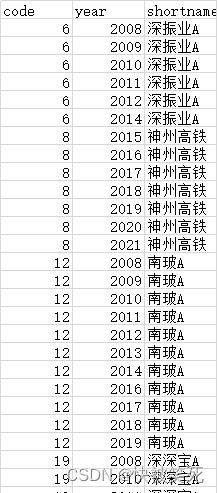

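- Obtain "data.xlsx", downloaded from CSMAR (国泰安) and consolidated in Stata, with the columns code, shortname and year, as shown in the screenshot.

- The example code retrieves, for the given stocks and years, the PDFs whose titles contain "董事会" and "决议".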

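2. Overall approach

- Tidy data.xlsx

- Populate the initial stock information

- Filter the target stocks

- Get the page count for the target stocks

- Get the PDF URLs for the target stocks

- Download the selected PDFs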

II. Quick start

1. Tidying data.xlsx
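The core of this step is turning one row per (code, year) into one row per code with a comma-separated years string. As a hedged illustration only, here is the same idea in plain Python without pandas; the function name `collapse_years` and the (code, shortname, year) row shape are assumptions for the sketch. Unlike the pandas version, it keeps only the first shortname seen for each code.

```python
def collapse_years(rows):
    """Collapse (code, shortname, year) rows into one dict per stock code.

    Hypothetical helper mirroring groupby/transform/drop_duplicates:
    codes are zero-padded to 6 digits and years are joined with commas.
    """
    merged = {}
    for code, shortname, year in rows:
        key = str(code).zfill(6)  # e.g. 1 -> "000001"
        entry = merged.setdefault(key, {"code": key, "shortname": shortname, "years": []})
        entry["years"].append(str(year))
    return [
        {"code": e["code"], "shortname": e["shortname"], "years": ",".join(e["years"])}
        for e in merged.values()
    ]


sample = [(1, "平安银行", 2018), (1, "平安银行", 2019), (600000, "浦发银行", 2019)]
for row in collapse_years(sample):
    print(row)
```

Dicts preserve insertion order, so stocks come out in the order they first appear in the input.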

The code is as follows:

import pandas as pd

# Read the Excel file (columns: code, shortname, year)
df = pd.read_excel("D:\\MyCoding\\pachong_JuChao\\data.xlsx")
# Convert code from int to str and left-pad with zeros to 6 digits
df["code"] = df["code"].apply(lambda x: str(x).zfill(6))
# Group rows with the same code and merge their year values into one "years" column
df["years"] = df.groupby("code")["year"].transform(lambda x: ",".join(x.astype(str)))
# Drop the original year column
df = df.drop("year", axis=1)
# Remove duplicate rows
df = df.drop_duplicates()
# Reorder the columns, if needed
df = df[["code", "shortname", "years"]]
# Save to a new Excel file
df.to_excel("D:\\MyCoding\\pachong_JuChao\\1_data_initial.xlsx", index=False)
# Some companies changed their short name midway, so the same code may appear with
# different shortname values; that case is not handled here.
# A function in 1_统计年份.py deals with it.

2. Populating the initial stock information
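This step fetches cninfo's stock list, keeps the A-shares, and tags each stock with the `column` and `plate` values that the announcement query expects later. That tagging rule can be isolated as a small pure function, sketched below; the name `exchange_params` is hypothetical, and like the script it assumes every code not starting with 0 or 3 is Shanghai-listed, so any other prefix is tagged sse/sh regardless of where the stock actually trades.

```python
def exchange_params(code):
    """Return (column, plate) for a stock code, using the script's rule.

    Codes starting with "0" or "3" -> Shenzhen ("szse", "sz");
    everything else -> Shanghai ("sse", "sh").
    """
    code = str(code).zfill(6)  # accept ints such as 1 as well as "000001"
    if code[0] in ("0", "3"):
        return "szse", "sz"
    return "sse", "sh"


for c in ("000001", "300750", "600519"):
    print(c, exchange_params(c))
```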

The code is as follows:

import json

import pandas as pd
import requests

# Fetch the stock list published by cninfo
url = "http://www.cninfo.com.cn/new/data/szse_stock.json"
ret = requests.get(url=url, timeout=30)
ret.raise_for_status()
stock_list = json.loads(ret.content)["stockList"]

# Keep only A-shares
stock_list_new = [stock for stock in stock_list if stock["category"] == "A股"]

# Add the column and plate fields, which the query API needs later:
# codes starting with 0 or 3 are treated as Shenzhen, everything else as Shanghai
for stock in stock_list_new:
    if stock["code"][0] in ("0", "3"):
        stock["column"] = "szse"
        stock["plate"] = "sz"
    else:
        stock["column"] = "sse"
        stock["plate"] = "sh"

# Save to 2_data_all.xlsx
df = pd.DataFrame(stock_list_new)
output_file = "D:\\MyCoding\\pachong_JuChao\\2_data_all.xlsx"
df.to_excel(output_file, index=False)
# Note: code is still text at this point, so it is stored as 000001 rather than 1

3. Filtering the target stocks
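A left merge followed by `dropna(subset=["years"])` amounts to an inner join on code: only stocks listed in 1_data_initial.xlsx survive, and they gain a years column. The plain-Python sketch below (the function name `attach_years` is hypothetical) shows that behavior and why both keys must be normalized to 6-digit strings first, since an int 1 never matches "000001". One assumed simplification: if a code appears twice in the initial data, this sketch keeps the last years value, whereas `merge` would duplicate the row.

```python
def attach_years(all_stocks, initial):
    """Keep only stocks whose code appears in `initial`, adding their years.

    all_stocks: list of dicts with at least a "code" key (like 2_data_all.xlsx)
    initial: list of dicts with "code" and "years" keys (like 1_data_initial.xlsx)
    """
    years_by_code = {str(r["code"]).zfill(6): r["years"] for r in initial}
    result = []
    for stock in all_stocks:
        code = str(stock["code"]).zfill(6)  # normalize before matching
        if code in years_by_code:
            result.append({**stock, "code": code, "years": years_by_code[code]})
    return result


stocks = [{"code": "000001", "zwjc": "平安银行"}, {"code": "000002", "zwjc": "万科A"}]
print(attach_years(stocks, [{"code": 1, "years": "2018,2019"}]))
```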

The code is as follows:

import pandas as pd

# Read both Excel files; dtype=str keeps code (and years) as text so the merge keys match
data_all = pd.read_excel("D:\\MyCoding\\pachong_JuChao\\2_data_all.xlsx", dtype={"code": str})
data_initial = pd.read_excel(
    "D:\\MyCoding\\pachong_JuChao\\1_data_initial.xlsx", dtype={"code": str, "years": str}
)
# Make sure both code columns are 6-digit, zero-padded strings
data_all["code"] = data_all["code"].str.zfill(6)
data_initial["code"] = data_initial["code"].str.zfill(6)
# Merge the years column from data_initial into data_all
data_all = data_all.merge(data_initial[["code", "years"]], on="code", how="left")
# Drop the rows of data_all whose code does not appear in data_initial
data_all = data_all.dropna(subset=["years"])
# Save to a new Excel file
data_all.to_excel("D:\\MyCoding\\pachong_JuChao\\3_data_merged.xlsx", index=False)

4. Getting the page count for the target stocks
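Every query sends the same form fields and only `pageNum` changes; the page count is the total number of announcements divided by the page size (30), rounded up. A small sketch that factors both out as pure functions; the names `build_query` and `page_count` and the orgId value in the usage line are illustrative assumptions, while the field names are the ones the script posts to `hisAnnouncement/query`.

```python
import math

PAGE_SIZE = 30  # announcements per page, as used in the script


def build_query(code, org_id, column, plate, page_num, page_size=PAGE_SIZE):
    """Form data for one page of a stock's announcement list (fields as in the script)."""
    return {
        "stock": f"{code},{org_id}",
        "tabName": "fulltext",
        "pageSize": page_size,
        "pageNum": page_num,
        "column": column,
        "plate": plate,
        "isHLtitle": "true",
    }


def page_count(total_announcements, page_size=PAGE_SIZE):
    """Number of pages needed to cover every announcement (ceiling division)."""
    return math.ceil(total_announcements / page_size)


# "gssz0000001" is a placeholder orgId for illustration
print(build_query("000001", "gssz0000001", "szse", "sz", 1))
print(page_count(0), page_count(30), page_count(31))
```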

The code is as follows:

import math
import time

import pandas as pd
import requests
import xlwt

# Read 3_data_merged.xlsx and keep the A-share rows in stock_list_new
df1 = pd.read_excel(
    "D:\\MyCoding\\pachong_JuChao\\3_data_merged.xlsx", dtype={"code": str, "years": str}
)
df1["code"] = df1["code"].str.zfill(6)
# Fix the column order so the positional indexes below (stock[0], stock[3], ...) are reliable
df1 = df1[["code", "pinyin", "category", "orgId", "zwjc", "column", "plate", "years"]]
stock_list = df1.values.tolist()
stock_list_new = [stock for stock in stock_list if stock[2] == "A股"]

# Create a new workbook with a sheet named "股票信息"; its header row is:
# code pinyin category orgId zwjc column plate years pages
w = xlwt.Workbook()
ws = w.add_sheet("股票信息")
title_list = ["code", "pinyin", "category", "orgId", "zwjc", "column", "plate", "years", "pages"]
for j, title in enumerate(title_list):
    ws.write(0, j, title)

# Get the number of announcement pages for each stock (30 announcements per page).
# xlwt writes the legacy .xls format; the .xlsx name is kept because step 5 reads this path.
output = "D:\\MyCoding\\pachong_JuChao\\4_data_pages.xlsx"
url = "http://www.cninfo.com.cn/new/hisAnnouncement/query"
i = 0
for stock in stock_list_new:
    data = {
        "stock": str(stock[0]) + "," + str(stock[3]),
        "tabName": "fulltext",
        "pageSize": 30,
        "pageNum": 1,
        "column": stock[5],
        "plate": stock[6],
        "isHLtitle": "true",
    }
    ret = requests.post(url=url, data=data, timeout=30)
    if ret.status_code != 200:
        break  # stop at the first failed request; rows written so far are saved below
    total_ann = ret.json()["totalAnnouncement"]
    stock.append(math.ceil(total_ann / 30))  # append pages to the end of the row
    print(f"Got the page count for stock #{i}!")
    i = i + 1
    for j, content in enumerate(stock[:9]):
        ws.write(i, j, content)
    # Save the file every 10 stocks so an unexpected interruption does not lose the data
    if i % 10 == 0:
        w.save(output)
        print(f"Saved {i} stocks!")
    # Pause 1 second after each request to avoid triggering anti-scraping checks (slow but safe)
    time.sleep(1)

if i % 10 != 0:
    w.save(output)
print("Saved all stock information!")

5. Getting the PDF URLs for the target stocks
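Each announcement is kept only if its title matches the keyword pattern and its `adjunctUrl` contains one of the target years. The sketch below pulls that test into a standalone function (the name `is_target` is hypothetical) using the same regular expression as the script. It assumes `adjunctUrl` looks like `finalpage/2019-04-30/1206185612.PDF`, which means the year being checked is the disclosure date's year, not the fiscal year the announcement covers.

```python
import re

# Same keyword rule as the script: board (董事会) resolution announcements
KEYWORD_PATTERN = re.compile(r"(董事会.*决议的公告|董事会.*会议决议)")


def is_target(title, adjunct_url, years):
    """True if the title matches and the URL's date falls in one of `years`."""
    if not KEYWORD_PATTERN.search(title):
        return False
    # "/2019-" matches the date segment of e.g. "finalpage/2019-04-30/xxx.PDF"
    return any("/" + str(year) + "-" in adjunct_url for year in years)


print(is_target("第八届董事会第十次会议决议公告", "finalpage/2019-04-30/1206185612.PDF", ["2019"]))
print(is_target("2019年年度报告", "finalpage/2019-04-30/1206185612.PDF", ["2019"]))
```

Compiling the pattern once at module level avoids recompiling it for every announcement.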

The code is as follows:

import json
import os
import re
import time

import requests
import xlrd
import xlwt

URL_FOLDER = "D:\\MyCoding\\pachong_JuChao\\url"
# Titles like "...董事会...决议的公告" or "...董事会...会议决议..."
KEYWORD_PATTERN = re.compile(r"(董事会.*决议的公告|董事会.*会议决议)")


def get_announcements(stock):
    url = "http://www.cninfo.com.cn/new/hisAnnouncement/query"
    name = stock["code"]
    w = xlwt.Workbook()
    ws = w.add_sheet(name)
    title_list = ["secCode", "secName", "announcementTitle", "adjunctUrl", "columnId"]
    for j, title in enumerate(title_list):
        ws.write(0, j, title)
    i = 1
    count = 1
    years = stock["years"].split(",")  # target years as a list, e.g. ["2018", "2019"]
    for page in range(1, int(stock["pages"]) + 1):
        data = {
            "stock": stock["code"] + "," + stock["orgId"],
            "tabName": "fulltext",
            "pageSize": 30,
            "pageNum": page,
            "column": stock["column"],
            "plate": stock["plate"],
            "isHLtitle": "true",
        }
        hasValue = 0
        count = count + 1
        while True:
            try:
                ret = requests.post(url=url, data=data, timeout=30)
                ret.raise_for_status()  # raise on HTTP errors so they are retried
                ret = str(ret.content, encoding="utf-8")
                if not ret:
                    print("Empty response")
                    break
                ann_list = json.loads(ret)["announcements"] or []  # may be null
                for ann in ann_list:
                    title = ann["announcementTitle"]
                    urlyear = ann["adjunctUrl"]
                    # Earlier, simpler rule: "董事会" in title and "决议公告" in title
                    if KEYWORD_PATTERN.search(title) and any("/" + year + "-" in urlyear for year in years):
                        content_list = [ann["secCode"], ann["secName"], ann["announcementTitle"],
                                        ann["adjunctUrl"], ann["columnId"]]
                        hasValue = 1
                        for j, content in enumerate(content_list):
                            ws.write(i, j, content)
                        i += 1
                if hasValue == 1:
                    w.save(os.path.join(URL_FOLDER, f"{name}.xlsx"))  # xls content, see step 4
                break  # page processed: leave the retry loop even if nothing matched
            except requests.exceptions.RequestException as e:
                print(f"Request failed, retrying {name}. Error: {e}")
                time.sleep(1)  # wait a moment before retrying
        if count % 5 == 0:
            time.sleep(1)


# Read 4_data_pages.xlsx to get the stock information
os.makedirs(URL_FOLDER, exist_ok=True)
excel_file = "D:\\MyCoding\\pachong_JuChao\\4_data_pages.xlsx"
w = xlrd.open_workbook(excel_file)
ws = w.sheet_by_name("股票信息")
stock_list = []
for i in range(1, ws.nrows):
    row = {}
    for j in range(ws.ncols):
        row[ws.cell_value(0, j)] = ws.cell_value(i, j)
    stock_list.append(row)

# Walk through the stocks and collect their announcements
for stock in stock_list:
    get_announcements(stock)

6. Downloading the selected PDFs
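The download step builds each PDF's URL from cninfo's static host plus the stored `adjunctUrl`, names the local file `<secCode>-<original file name>`, and retries failed downloads. The sketch below separates those pieces; `pdf_target` and `retry` are hypothetical helper names, and the bounded retry is a swapped-in variant of the article's retry loop, so a permanently broken link fails after a few attempts instead of looping forever.

```python
import time

STATIC_HOST = "http://static.cninfo.com.cn/"


def pdf_target(sec_code, adjunct_url):
    """Return (download URL, local file name), built the same way as in the script."""
    url = STATIC_HOST + adjunct_url
    name = f"{sec_code}-{adjunct_url.split('/')[-1]}"
    return url, name


def retry(func, attempts=5, delay=1.0):
    """Call func() until it succeeds, at most `attempts` times; re-raise the last error."""
    for attempt in range(1, attempts + 1):
        try:
            return func()
        except Exception:
            if attempt == attempts:
                raise
            time.sleep(delay)  # short pause before the next attempt


print(pdf_target("000001", "finalpage/2019-04-30/1206185612.PDF"))
```

In the download loop this could be used as `retry(lambda: urlretrieve(url, filename=pdf_path))`.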

The code is as follows:

import os
import time
from urllib.request import urlretrieve

import xlrd

# Make sure the PDF folder exists
pdf_folder = "D:\\MyCoding\\pachong_JuChao\\PDF"
os.makedirs(pdf_folder, exist_ok=True)

folder_path = "D:\\MyCoding\\pachong_JuChao\\url"  # folder containing the per-stock xls/xlsx files
xls_files = [f for f in os.listdir(folder_path) if f.endswith((".xlsx", ".xls"))]

for xls_file in xls_files:
    w = xlrd.open_workbook(os.path.join(folder_path, xls_file))
    ws = w.sheet_by_name(os.path.splitext(xls_file)[0])  # sheet name = stock code
    file_count = 0  # number of files downloaded since the last pause
    for i in range(1, ws.nrows):
        url = "http://static.cninfo.com.cn/" + ws.cell_value(i, 3)
        name = ws.cell_value(i, 0) + "-" + ws.cell_value(i, 3).split("/")[-1]
        pdf_path = os.path.join(pdf_folder, name)  # full path of the saved PDF
        for attempt in range(5):  # retry up to 5 times instead of looping forever on a dead link
            try:
                urlretrieve(url, filename=pdf_path)
                file_count += 1  # count each successful download
                break  # downloaded: stop retrying
            except Exception as e:
                print(f"An error occurred while downloading {name}: ", e)
                time.sleep(1)  # pause 1 second after a failure, then try again
        # Pause 1 second after every 20 downloads, then reset the counter
        if file_count == 20:
            time.sleep(1)
            file_count = 0

Summary

At this point all the PDFs have been downloaded into the PDF folder. Adjust the file paths and the filter conditions to suit your own needs.
