This article, part 3 of the Android Camera series, walks through the basic flow of MediaRecorder.

Android MediaRecorder

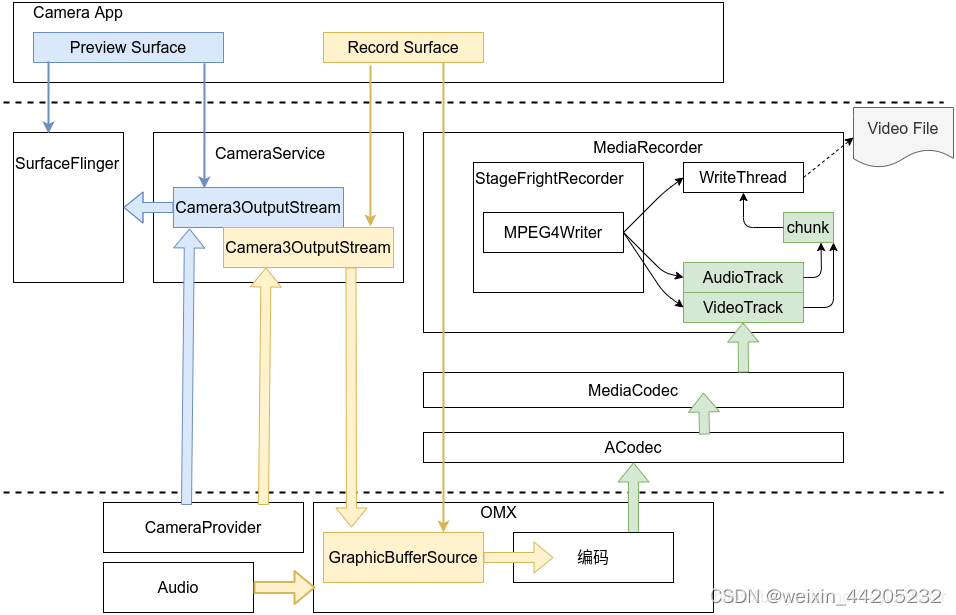

1. MediaRecorder overall architecture

1.1 MediaRecorder recording data-flow framework

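The process in brief:

1. A camera app uses at least two Surfaces: one for preview and one for recording. The recording Surface is a PersistentSurface; its mBufferSource member, of type GraphicBufferSource, is ultimately created and referenced by the encoder.

2. CameraServer holds the producer references of both the record Surface and the preview Surface, so it acts as the producer for both preview and recording.

3. When sending a request to CameraProvider, CameraServer first calls dequeueBuffer and hands the buffer to the HAL to fill. Once the HAL returns the result, queueBuffer puts the filled buffer into the BufferQueue. By BufferQueue's design, the consumer's onFrameAvailable callback then fires to signal that data is ready; the consumer calls acquireBuffer to consume it and releaseBuffer when it is done.

4. For preview, the consumer is SurfaceFlinger, which composites the buffer and displays it. For recording, the consumer is the encoder (an OMX codec, for example), which takes the data and encodes it.

5. After encoding, the encoder invokes a callback in the framework's MediaServer, which passes the encoded data to MediaRecorder.

6. After start, MediaRecorder launches one writer thread and two track threads (video and audio). When a track thread receives data, it packs the video or audio data into a Chunk and stores it in the MPEG4Writer member mChunks. The writer thread then sees that data is available and writes the chunks in mChunks to the file.

The above draws on the article "Android MediaRecorder框架简洁梳理".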
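The dequeue / queue / acquire / release cycle in step 3 can be sketched with a toy, single-threaded model. This is only an illustration under stated assumptions: `ToyBufferQueue`, `runToyPipeline` and the integer payloads are hypothetical names, not the AOSP BufferQueue API, and the real queue works across processes with fences and graphic buffers.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <functional>
#include <utility>
#include <vector>

// Toy model of a BufferQueue slot pool; every name here is hypothetical.
class ToyBufferQueue {
public:
    enum class State { Free, Dequeued, Queued, Acquired };

    explicit ToyBufferQueue(std::size_t slots)
        : mStates(slots, State::Free), mPayloads(slots, 0) {}

    // The consumer registers a callback, in the spirit of onFrameAvailable.
    void setFrameAvailableListener(std::function<void()> listener) {
        mListener = std::move(listener);
    }

    // Producer side: grab a free slot to fill, or -1 if none is free.
    int dequeueBuffer() {
        for (std::size_t i = 0; i < mStates.size(); ++i) {
            if (mStates[i] == State::Free) {
                mStates[i] = State::Dequeued;
                return static_cast<int>(i);
            }
        }
        return -1;
    }

    // Producer side: hand the filled slot back and notify the consumer.
    void queueBuffer(int slot, int payload) {
        assert(mStates[slot] == State::Dequeued);
        mPayloads[slot] = payload;
        mStates[slot] = State::Queued;
        mQueued.push_back(slot);
        if (mListener) mListener();
    }

    // Consumer side: take the oldest queued slot, or -1 if nothing is queued.
    int acquireBuffer() {
        if (mQueued.empty()) return -1;
        int slot = mQueued.front();
        mQueued.pop_front();
        mStates[slot] = State::Acquired;
        return slot;
    }

    // Consumer side: return the slot to the free pool.
    void releaseBuffer(int slot) {
        assert(mStates[slot] == State::Acquired);
        mStates[slot] = State::Free;
    }

    int payload(int slot) const { return mPayloads[slot]; }

private:
    std::vector<State> mStates;
    std::vector<int> mPayloads;
    std::deque<int> mQueued;
    std::function<void()> mListener;
};

// Produces `frames` frames and returns the payloads in the order consumed.
std::vector<int> runToyPipeline(int frames, std::size_t slots) {
    ToyBufferQueue queue(slots);
    std::vector<int> consumed;
    queue.setFrameAvailableListener([&queue, &consumed]() {
        int slot = queue.acquireBuffer();
        if (slot < 0) return;
        consumed.push_back(queue.payload(slot));  // "encode" or "display"
        queue.releaseBuffer(slot);
    });
    for (int frame = 0; frame < frames; ++frame) {
        int slot = queue.dequeueBuffer();
        assert(slot >= 0);
        queue.queueBuffer(slot, frame);  // the HAL would fill the buffer here
    }
    return consumed;
}
```

In this sketch the producer role corresponds to CameraServer and the listener to SurfaceFlinger or the encoder; a slot only becomes reusable after the consumer releases it.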

2. MediaRecorder flows

2.1 Creating the MediaRecorder

Section 4.2 of Android Camera (1) creates a MediaRecorder object.

/frameworks/base/media/java/android/media/MediaRecorder.java

141 @Deprecated

142 public MediaRecorder() {

143 this(ActivityThread.currentApplication());

144 }

145

146 /**

147 * Default constructor.

148 *

149 * @param context Context the recorder belongs to

150 */

151 public MediaRecorder(@NonNull Context context) {

152 Objects.requireNonNull(context);

153 Looper looper;

154 if ((looper = Looper.myLooper()) != null) {

155 mEventHandler = new EventHandler(this, looper);

156 } else if ((looper = Looper.getMainLooper()) != null) {

157 mEventHandler = new EventHandler(this, looper);

158 } else {

159 mEventHandler = null;

160 }

161

162 mChannelCount = 1;

163 /* Native setup requires a weak reference to our object.

164 * It's easier to create it here than in C++.

165 */

166 try (ScopedParcelState attributionSourceState = context.getAttributionSource()

167 .asScopedParcelState()) {

168 native_setup(new WeakReference<>(this), ActivityThread.currentPackageName(),

169 attributionSourceState.getParcel());

170 }

171 }

When the MediaRecorder class is loaded, it loads the media_jni library and then calls native_init to initialize the media JNI layer.

/frameworks/base/media/java/android/media/MediaRecorder.java

106 static {

107 System.loadLibrary("media_jni");

108 native_init();

109 }

The MediaRecorder constructor, in turn, calls native_setup.

native_init and native_setup are JNI methods that map to the following two native functions:

/frameworks/base/media/jni/android_media_MediaRecorder.cpp

580 static void

581 android_media_MediaRecorder_native_init(JNIEnv *env)

582 {

583 jclass clazz;

584 // native_init only caches the Java field and method IDs so that native code can reach the Java members

585 clazz = env->FindClass("android/media/MediaRecorder");

586 if (clazz == NULL) {

587 return;

588 }

589

590 fields.context = env->GetFieldID(clazz, "mNativeContext", "J");

591 if (fields.context == NULL) {

592 return;

593 }

594

595 fields.surface = env->GetFieldID(clazz, "mSurface", "Landroid/view/Surface;");

596 if (fields.surface == NULL) {

597 return;

598 }

599

600 jclass surface = env->FindClass("android/view/Surface");

601 if (surface == NULL) {

602 return;

603 }

604

605 fields.post_event = env->GetStaticMethodID(clazz, "postEventFromNative",

606 "(Ljava/lang/Object;IIILjava/lang/Object;)V");

607 if (fields.post_event == NULL) {

608 return;

609 }

610

611 clazz = env->FindClass("java/util/ArrayList");

612 if (clazz == NULL) {

613 return;

614 }

615 gArrayListFields.add = env->GetMethodID(clazz, "add", "(Ljava/lang/Object;)Z");

616 gArrayListFields.classId = static_cast<jclass>(env->NewGlobalRef(clazz));

617 }

618

619

620 static void

621 android_media_MediaRecorder_native_setup(JNIEnv *env, jobject thiz, jobject weak_this,

622 jstring packageName, jobject jAttributionSource)

623 {

624 ALOGV("setup");

625

626 Parcel* parcel = parcelForJavaObject(env, jAttributionSource);

627 android::content::AttributionSourceState attributionSource;

628 attributionSource.readFromParcel(parcel); // Next, create the native-layer MediaRecorder

629 sp<MediaRecorder> mr = new MediaRecorder(attributionSource);

630

631 if (mr == NULL) {

632 jniThrowException(env, "java/lang/RuntimeException", "Out of memory");

633 return;

634 }

635 if (mr->initCheck() != NO_ERROR) {

636 jniThrowException(env, "java/lang/RuntimeException", "Unable to initialize media recorder");

637 return;

638 }

639

640 // create new listener and give it to MediaRecorder; callbacks are forwarded to the Java-layer postEventFromNative

641 sp<JNIMediaRecorderListener> listener = new JNIMediaRecorderListener(env, thiz, weak_this);

642 mr->setListener(listener);

643

644 // Convert client name jstring to String16

645 const char16_t *rawClientName = reinterpret_cast<const char16_t*>(

646 env->GetStringChars(packageName, NULL));

647 jsize rawClientNameLen = env->GetStringLength(packageName);

648 String16 clientName(rawClientName, rawClientNameLen);

649 env->ReleaseStringChars(packageName,

650 reinterpret_cast<const jchar*>(rawClientName));

651

652 // pass client package name for permissions tracking

653 mr->setClientName(clientName);

654

655 setMediaRecorder(env, thiz, mr);

656 }

2.1.1 The native-layer MediaRecorder

/frameworks/av/media/libmedia/mediarecorder.cpp

763 MediaRecorder::MediaRecorder(const AttributionSourceState &attributionSource)

764 : mSurfaceMediaSource(NULL)

765 {

766 ALOGV("constructor");

767 // Connect to MediaPlayerService over Binder to create a MediaRecorder

768 const sp<IMediaPlayerService> service(getMediaPlayerService());

769 if (service != NULL) {

770 mMediaRecorder = service->createMediaRecorder(attributionSource);

771 }

772 if (mMediaRecorder != NULL) {

773 mCurrentState = MEDIA_RECORDER_IDLE;

774 }

775

776 // Reset a few state flags to false

777 doCleanUp();

778 }

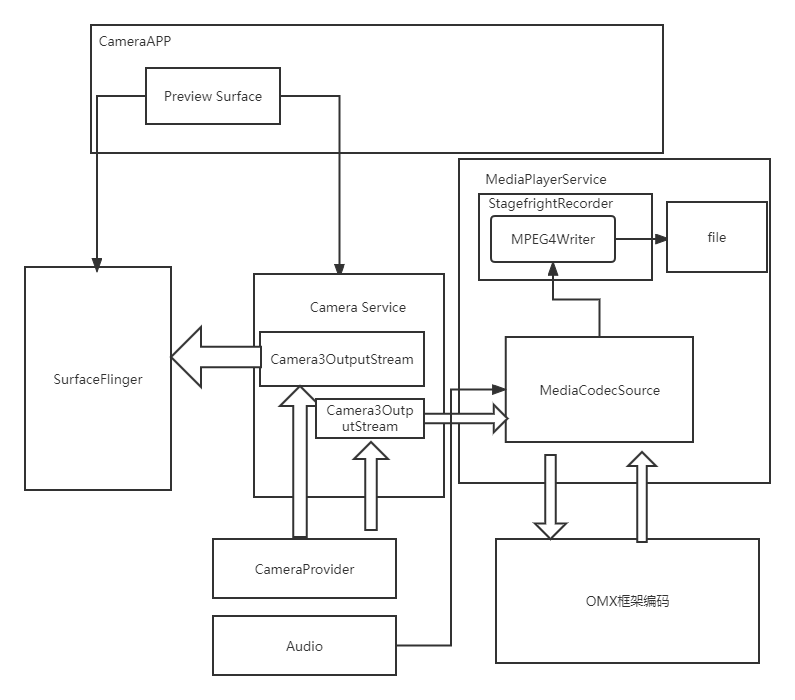

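As shown above, the native-layer MediaRecorder ends up asking MediaPlayerService to create the recorder. This is where the call crosses a process boundary, from the app process to the media server process (MediaPlayerService runs in the media server process).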

/frameworks/av/media/libmediaplayerservice/MediaPlayerService.cpp

458 sp<IMediaRecorder> MediaPlayerService::createMediaRecorder(

459 const AttributionSourceState& attributionSource)

460 {

461 // TODO b/182392769: use attribution source util

462 AttributionSourceState verifiedAttributionSource = attributionSource;

463 verifiedAttributionSource.uid = VALUE_OR_FATAL(

464 legacy2aidl_uid_t_int32_t(IPCThreadState::self()->getCallingUid()));

465 verifiedAttributionSource.pid = VALUE_OR_FATAL(

466 legacy2aidl_pid_t_int32_t(IPCThreadState::self()->getCallingPid())); // Create a MediaRecorderClient and return it to the app process

467 sp<MediaRecorderClient> recorder =

468 new MediaRecorderClient(this, verifiedAttributionSource);

469 wp<MediaRecorderClient> w = recorder;

470 Mutex::Autolock lock(mLock);

471 mMediaRecorderClients.add(w);

472 ALOGV("Create new media recorder client from pid %s",

473 verifiedAttributionSource.toString().c_str());

474 return recorder;

475 }

/frameworks/av/media/libmediaplayerservice/MediaRecorderClient.cpp

380 MediaRecorderClient::MediaRecorderClient(const sp<MediaPlayerService>& service,

381 const AttributionSourceState& attributionSource)

382 {

383 ALOGV("Client constructor");

384 // attribution source already validated in createMediaRecorder

385 mAttributionSource = attributionSource; // Create a StagefrightRecorder

386 mRecorder = new StagefrightRecorder(attributionSource);

387 mMediaPlayerService = service;

388 }

So MediaRecorder ultimately creates a StagefrightRecorder, which does the actual recording work.
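The creation chain traced above (app-side recorder, service, client, engine) is essentially a chain of forwarding objects. A minimal in-process sketch follows; it assumes hypothetical names (`ToyEngine`, `ToyClient`, `ToyService`, `ToyAppRecorder`) and leaves out Binder entirely, so it only illustrates who owns and forwards to whom.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <string>
#include <vector>

// Hypothetical stand-in for StagefrightRecorder: the object doing the work.
class ToyEngine {
public:
    void setVideoSize(int width, int height) {
        mLog.push_back("setVideoSize " + std::to_string(width) + "x" +
                       std::to_string(height));
    }
    const std::vector<std::string>& log() const { return mLog; }

private:
    std::vector<std::string> mLog;
};

// Hypothetical stand-in for MediaRecorderClient: owns the engine and forwards.
class ToyClient {
public:
    ToyClient() : mEngine(new ToyEngine()) {}
    void setVideoSize(int width, int height) {
        mEngine->setVideoSize(width, height);
    }
    const ToyEngine& engine() const { return *mEngine; }

private:
    std::unique_ptr<ToyEngine> mEngine;
};

// Hypothetical stand-in for MediaPlayerService: creates clients and keeps
// only weak references to them, like mMediaRecorderClients.
class ToyService {
public:
    std::shared_ptr<ToyClient> createRecorder() {
        std::shared_ptr<ToyClient> client = std::make_shared<ToyClient>();
        mClients.push_back(client);
        return client;
    }
    std::size_t liveClients() const {
        std::size_t alive = 0;
        for (const std::weak_ptr<ToyClient>& client : mClients) {
            if (!client.expired()) ++alive;
        }
        return alive;
    }

private:
    std::vector<std::weak_ptr<ToyClient> > mClients;
};

// Hypothetical stand-in for the app-side native MediaRecorder.
class ToyAppRecorder {
public:
    explicit ToyAppRecorder(ToyService& service)
        : mRemote(service.createRecorder()) {}
    bool initCheck() const { return mRemote != nullptr; }
    void setVideoSize(int width, int height) {
        assert(mRemote != nullptr);
        mRemote->setVideoSize(width, height);  // a Binder call in real code
    }
    const std::vector<std::string>& engineLog() const {
        return mRemote->engine().log();
    }

private:
    std::shared_ptr<ToyClient> mRemote;
};
```

Because the service holds only weak references, a client disappears from the live count once the app-side object drops it, which is the role the weak pointer plays in the createMediaRecorder excerpt.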

2.2 Configuring MediaRecorder

MediaRecorder is configured mainly in section 4.2 of Android Camera (1), which sets a number of properties:

1146 mMediaRecorder.setCamera(camera); // maps to StagefrightRecorder::setCamera

1147 mMediaRecorder.setAudioSource(MediaRecorder.AudioSource.CAMCORDER); // maps to StagefrightRecorder::setAudioSource

1148 mMediaRecorder.setVideoSource(MediaRecorder.VideoSource.CAMERA); // maps to StagefrightRecorder::setVideoSource

1149 mMediaRecorder.setProfile(mProfile); // applies the default parameters from the profile

1150 mMediaRecorder.setVideoSize(mProfile.videoFrameWidth, mProfile.videoFrameHeight); // maps to StagefrightRecorder::setVideoSize

1151 mMediaRecorder.setMaxDuration(mMaxVideoDurationInMs); // maps to StagefrightRecorder::setParameters ...

1203 Location loc = mLocationManager.getCurrentLocation();

1204 if (loc != null) {

1205 mMediaRecorder.setLocation((float) loc.getLatitude(),

1206 (float) loc.getLongitude()); // maps to StagefrightRecorder::setParameters

1207 }

1156 if (mVideoFileDescriptor != null) {

1157 mMediaRecorder.setOutputFile(mVideoFileDescriptor.getFileDescriptor()); // maps to StagefrightRecorder::setOutputFile

1158 } else {

1159 releaseMediaRecorder();

1160 throw new RuntimeException("No valid video file descriptor");

1161 }

1169 try {

1170 mMediaRecorder.setMaxFileSize(maxFileSize); // maps to StagefrightRecorder::setParameters

1171 } catch (RuntimeException exception) {

1172 // We are going to ignore failure of setMaxFileSize here, as

1173 // a) The composer selected may simply not support it, or

1174 // b) The underlying media framework may not handle 64-bit range

1175 // on the size restriction.

1176 }

1184 mMediaRecorder.setOrientationHint(rotation); // maps to StagefrightRecorder::setParameters

As the code above shows, the VideoSource is CAMERA and the AudioSource is CAMCORDER. Because the VideoSource is not SURFACE, no Surface needs to be passed in.

2.3 MediaRecorder.prepare

As described above, a call to MediaRecorder.prepare falls into one of two cases:

1. MediaRecorder holds a Surface

2. MediaRecorder does not hold a Surface

The main difference is the path taken in the JNI layer.

/frameworks/base/media/jni/android_media_MediaRecorder.cpp

438 static void

439 android_media_MediaRecorder_prepare(JNIEnv *env, jobject thiz)

440 {

441 ALOGV("prepare");

442 sp<MediaRecorder> mr = getMediaRecorder(env, thiz);

443 if (mr == NULL) {

444 jniThrowException(env, "java/lang/IllegalStateException", NULL);

445 return;

446 }

447

448 jobject surface = env->GetObjectField(thiz, fields.surface);

449 if (surface != NULL) { // If the Java-layer MediaRecorder holds a surface, setPreviewSurface is called and reaches StagefrightRecorder::setPreviewSurface

450 const sp<Surface> native_surface = get_surface(env, surface);

451

452 // The application may misbehave and

453 // the preview surface becomes unavailable

454 if (native_surface.get() == 0) {

455 ALOGE("Application lost the surface");

456 jniThrowException(env, "java/io/IOException", "invalid preview surface");

457 return;

458 }

459

460 ALOGI("prepare: surface=%p", native_surface.get());

461 if (process_media_recorder_call(env, mr->setPreviewSurface(native_surface->getIGraphicBufferProducer()), "java/lang/RuntimeException", "setPreviewSurface failed.")) {

462 return;

463 }

464 } // With or without a surface, prepare is called next and reaches StagefrightRecorder::prepare

465 process_media_recorder_call(env, mr->prepare(), "java/io/IOException", "prepare failed.");

466 }

In the JNI function android_media_MediaRecorder_prepare, if the Java-layer MediaRecorder holds a Surface, setPreviewSurface is called first, which stores the surface in StagefrightRecorder's mPreviewSurface member. With or without a Surface, the function then calls prepare, which reaches StagefrightRecorder::prepare.

/frameworks/av/media/libmediaplayerservice/StagefrightRecorder.cpp

1204 status_t StagefrightRecorder::prepare() {

1205 ALOGV("prepare");

1206 Mutex::Autolock autolock(mLock);

1207 if (mVideoSource == VIDEO_SOURCE_SURFACE) {

1208 return prepareInternal();

1209 }

1210 return OK;

1211 }

1212

Here mVideoSource == VIDEO_SOURCE_CAMERA (we set the VideoSource to MediaRecorder.VideoSource.CAMERA), so prepare effectively does nothing.

2.4 MediaRecorder.start

Likewise, MediaRecorder.start ends up in StagefrightRecorder::start:

/frameworks/av/media/libmediaplayerservice/StagefrightRecorder.cpp

1213 status_t StagefrightRecorder::start() {

1214 ALOGV("start");

1215 Mutex::Autolock autolock(mLock);

1216 if (mOutputFd < 0) {

1217 ALOGE("Output file descriptor is invalid");

1218 return INVALID_OPERATION;

1219 }

1220

1221 status_t status = OK;

1222 // Our mVideoSource is VIDEO_SOURCE_CAMERA, so prepareInternal is called here to set up mWriter according to the output file format

1223 if (mVideoSource != VIDEO_SOURCE_SURFACE) {

1224 status = prepareInternal();

1225 if (status != OK) {

1226 return status;

1227 }

1228 }

1229

1230 if (mWriter == NULL) {

1231 ALOGE("File writer is not avaialble");

1232 return UNKNOWN_ERROR;

1233 }

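1234 // The metadata is also set up according to the output format, then the writer's start function is called to begin recording

1235 switch (mOutputFormat) { // MPEG4 is used as the example here; the file is muxed as MP4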

1236 case OUTPUT_FORMAT_DEFAULT:

1237 case OUTPUT_FORMAT_THREE_GPP:

1238 case OUTPUT_FORMAT_MPEG_4:

1239 case OUTPUT_FORMAT_WEBM:

1240 {

1241 bool isMPEG4 = true;

1242 if (mOutputFormat == OUTPUT_FORMAT_WEBM) {

1243 isMPEG4 = false;

1244 }

1245 sp<MetaData> meta = new MetaData;

1246 setupMPEG4orWEBMMetaData(&meta);

1247 status = mWriter->start(meta.get());

1248 break;

1249 }

1250 ...

1276

1277 default:

1278 {

1279 ALOGE("Unsupported output file format: %d", mOutputFormat);

1280 status = UNKNOWN_ERROR;

1281 break;

1282 }

1283 }

1284

1285 if (status != OK) {

1286 mWriter.clear();

1287 mWriter = NULL;

1288 }

1289

1290 if ((status == OK) && (!mStarted)) {

1291 mAnalyticsDirty = true;

1292 mStarted = true;

1293

1294 mStartedRecordingUs = systemTime() / 1000;

1295

1296 uint32_t params = IMediaPlayerService::kBatteryDataCodecStarted;

1297 if (mAudioSource != AUDIO_SOURCE_CNT) {

1298 params |= IMediaPlayerService::kBatteryDataTrackAudio;

1299 }

1300 if (mVideoSource != VIDEO_SOURCE_LIST_END) {

1301 params |= IMediaPlayerService::kBatteryDataTrackVideo;

1302 }

1303

1304 addBatteryData(params);

1305 }

1306

1307 return status;

1308 }

1153 status_t StagefrightRecorder::prepareInternal() {

1154 ALOGV("prepare");

1155 if (mOutputFd < 0) {

1156 ALOGE("Output file descriptor is invalid");

1157 return INVALID_OPERATION;

1158 }

1159

1160 status_t status = OK;

1161

1162 switch (mOutputFormat) {

1163 case OUTPUT_FORMAT_DEFAULT:

1164 case OUTPUT_FORMAT_THREE_GPP:

1165 case OUTPUT_FORMAT_MPEG_4:

1166 case OUTPUT_FORMAT_WEBM: // Call setupMPEG4orWEBMRecording to create the MediaWriter

1167 status = setupMPEG4orWEBMRecording();

1168 break;

1169 ...

1191

1192 default:

1193 ALOGE("Unsupported output file format: %d", mOutputFormat);

1194 status = UNKNOWN_ERROR;

1195 break;

1196 }

1197

1198 ALOGV("Recording frameRate: %d captureFps: %f",

1199 mFrameRate, mCaptureFps);

1200

1201 return status;

1202 }

2146 status_t StagefrightRecorder::setupMPEG4orWEBMRecording() {

2147 mWriter.clear();

2148 mTotalBitRate = 0;

2149

2150 status_t err = OK;

2151 sp<MediaWriter> writer;

2152 sp<MPEG4Writer> mp4writer;

2153 if (mOutputFormat == OUTPUT_FORMAT_WEBM) {

2154 writer = new WebmWriter(mOutputFd);

2155 } else {

2156 writer = mp4writer = new MPEG4Writer(mOutputFd);

2157 }

2158 //mVideoSource == VIDEO_SOURCE_CAMERA < VIDEO_SOURCE_LIST_END

2159 if (mVideoSource < VIDEO_SOURCE_LIST_END) {

2160 setDefaultVideoEncoderIfNecessary();

2161

2162 sp<MediaSource> mediaSource; // We are recording from the camera, so the mediaSource returned here carries camera data

2163 err = setupMediaSource(&mediaSource);

2164 if (err != OK) {

2165 return err;

2166 }

2167

2168 sp<MediaCodecSource> encoder; // Set up the H.264 / MPEG-4 AVC encoder

2169 err = setupVideoEncoder(mediaSource, &encoder);

2170 if (err != OK) {

2171 return err;

2172 }

2173 // Wrap the video encoder in a Track and add it to mTracks in MPEG4Writer

2174 writer->addSource(encoder);

2175 mVideoEncoderSource = encoder;

2176 mTotalBitRate += mVideoBitRate;

2177 }

2178

2179 // Audio source is added at the end if it exists.

2180 // This help make sure that the "recoding" sound is suppressed for

2181 // camcorder applications in the recorded files.

2182 // disable audio for time lapse recording

2183 const bool disableAudio = mCaptureFpsEnable && mCaptureFps < mFrameRate;

2184 if (!disableAudio && mAudioSource != AUDIO_SOURCE_CNT) { // Set up the audio encoder, wrap it in a Track, and add it to mTracks in MPEG4Writer

2185 err = setupAudioEncoder(writer);

2186 if (err != OK) return err;

2187 mTotalBitRate += mAudioBitRate;

2188 }

2189

2190 if (mOutputFormat != OUTPUT_FORMAT_WEBM) {

2191 if (mCaptureFpsEnable) {

2192 mp4writer->setCaptureRate(mCaptureFps);

2193 }

2194

2195 if (mInterleaveDurationUs > 0) {

2196 mp4writer->setInterleaveDuration(mInterleaveDurationUs);

2197 }

2198 if (mLongitudex10000 > -3600000 && mLatitudex10000 > -3600000) {

2199 mp4writer->setGeoData(mLatitudex10000, mLongitudex10000);

2200 }

2201 }

2202 if (mMaxFileDurationUs != 0) {

2203 writer->setMaxFileDuration(mMaxFileDurationUs);

2204 }

2205 if (mMaxFileSizeBytes != 0) {

2206 writer->setMaxFileSize(mMaxFileSizeBytes);

2207 }

2208 if (mVideoSource == VIDEO_SOURCE_DEFAULT

2209 || mVideoSource == VIDEO_SOURCE_CAMERA) {

2210 mStartTimeOffsetMs = mEncoderProfiles->getStartTimeOffsetMs(mCameraId);

2211 } else if (mVideoSource == VIDEO_SOURCE_SURFACE) {

2212 // surface source doesn't need large initial delay

2213 mStartTimeOffsetMs = 100;

2214 }

2215 if (mStartTimeOffsetMs > 0) {

2216 writer->setStartTimeOffsetMs(mStartTimeOffsetMs);

2217 }

2218

2219 writer->setListener(mListener);

2220 mWriter = writer;

2221 return OK;

2222 }

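In StagefrightRecorder::start, because the video source type is Camera, prepareInternal is called from start to set up the writer. If you instead pass in a surface and set the video source type to Surface, the writer is set up earlier, during MediaRecorder.prepare. We normally record MP4, so mOutputFormat is OUTPUT_FORMAT_MPEG_4 and the writer created is an MPEG4Writer. The video and audio encoders must then be added to the MPEG4Writer.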
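A compact way to see when the writer gets built is the following sketch. It is a simplified model under assumptions: `ToyStagefrightRecorder`, the two enums and the string-valued writer are hypothetical, and only the branching on video source and output format mirrors the excerpts.

```cpp
#include <cassert>
#include <string>

enum class ToyVideoSource { Camera, Surface };
enum class ToyOutputFormat { Mpeg4, Webm };

// Hypothetical model of the prepare()/start() split in StagefrightRecorder.
class ToyStagefrightRecorder {
public:
    ToyStagefrightRecorder(ToyVideoSource source, ToyOutputFormat format)
        : mSource(source), mFormat(format) {}

    // Surface sources build the writer at prepare time.
    void prepare() {
        if (mSource == ToyVideoSource::Surface) prepareInternal();
    }

    // Every other source defers the writer setup until start.
    bool start() {
        if (mSource != ToyVideoSource::Surface) prepareInternal();
        return !mWriter.empty();  // false models "file writer not available"
    }

    const std::string& writer() const { return mWriter; }

private:
    void prepareInternal() {
        mWriter = (mFormat == ToyOutputFormat::Webm) ? "WebmWriter"
                                                     : "MPEG4Writer";
    }

    ToyVideoSource mSource;
    ToyOutputFormat mFormat;
    std::string mWriter;  // empty until prepareInternal has run
};

// Usage: the camera path has no writer until start() is called.
void demoDeferredSetup() {
    ToyStagefrightRecorder camera(ToyVideoSource::Camera,
                                  ToyOutputFormat::Mpeg4);
    camera.prepare();
    assert(camera.writer().empty());
    assert(camera.start());
    assert(camera.writer() == "MPEG4Writer");
}
```

The surface path needs its writer and encoder early, presumably because the app must obtain the input surface before it can draw frames; the camera path can wait until recording actually starts.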

2.4.1 Setting up the video encoder

The code is as follows:

2146 status_t StagefrightRecorder::setupMPEG4orWEBMRecording() {...

2159 if (mVideoSource < VIDEO_SOURCE_LIST_END) {

2160 setDefaultVideoEncoderIfNecessary();

2161

2162 sp<MediaSource> mediaSource;

2163 err = setupMediaSource(&mediaSource);

2164 if (err != OK) {

2165 return err;

2166 }

2167

2168 sp<MediaCodecSource> encoder;

2169 err = setupVideoEncoder(mediaSource, &encoder);

2170 if (err != OK) {

2171 return err;

2172 }

2173

2174 writer->addSource(encoder);

2175 mVideoEncoderSource = encoder;

2176 mTotalBitRate += mVideoBitRate;

2177 }

...}

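The flow above has three main steps:

1. Set up the media source (data coming from the camera, or from a surface)

2. Set up the video encoder

3. Add the encoder to the MPEG4Writer

Start with step 1, setting up the media source: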

/frameworks/av/media/libmediaplayerservice/StagefrightRecorder.cpp

1857 status_t StagefrightRecorder::setupMediaSource(

1858 sp<MediaSource> *mediaSource) { // Our source is the camera, so the if branch is taken

1859 if (mVideoSource == VIDEO_SOURCE_DEFAULT

1860 || mVideoSource == VIDEO_SOURCE_CAMERA) {

1861 sp<CameraSource> cameraSource;

1862 status_t err = setupCameraSource(&cameraSource);

1863 if (err != OK) {

1864 return err;

1865 }

1866 *mediaSource = cameraSource;

1867 } else if (mVideoSource == VIDEO_SOURCE_SURFACE) {

1868 *mediaSource = NULL;

1869 } else {

1870 return INVALID_OPERATION;

1871 }

1872 return OK;

1873 }

1875 status_t StagefrightRecorder::setupCameraSource(

1876 sp<CameraSource> *cameraSource) {

1877 status_t err = OK;

1878 if ((err = checkVideoEncoderCapabilities()) != OK) {

1879 return err;

1880 }

1881 Size videoSize;

1882 videoSize.width = mVideoWidth;

1883 videoSize.height = mVideoHeight;

1884 uid_t uid = VALUE_OR_RETURN_STATUS(aidl2legacy_int32_t_uid_t(mAttributionSource.uid));

1885 pid_t pid = VALUE_OR_RETURN_STATUS(aidl2legacy_int32_t_pid_t(mAttributionSource.pid));

1886 String16 clientName = VALUE_OR_RETURN_STATUS(

1887 aidl2legacy_string_view_String16(mAttributionSource.packageName.value_or(""))); // Usually the else branch below is taken; it depends on whether MediaRecorder.java's setCaptureRate was called

1888 if (mCaptureFpsEnable) {

1889 if (!(mCaptureFps > 0.)) {

1890 ALOGE("Invalid mCaptureFps value: %lf", mCaptureFps);

1891 return BAD_VALUE;

1892 }

1893

1894 mCameraSourceTimeLapse = CameraSourceTimeLapse::CreateFromCamera(

1895 mCamera, mCameraProxy, mCameraId, clientName, uid, pid,

1896 videoSize, mFrameRate, mPreviewSurface,

1897 std::llround(1e6 / mCaptureFps));

1898 *cameraSource = mCameraSourceTimeLapse;

1899 } else { // Create the CameraSource

1900 *cameraSource = CameraSource::CreateFromCamera(

1901 mCamera, mCameraProxy, mCameraId, clientName, uid, pid,

1902 videoSize, mFrameRate,

1903 mPreviewSurface);

1904 }

1905 mCamera.clear();

1906 mCameraProxy.clear();

1907 if (*cameraSource == NULL) {

1908 return UNKNOWN_ERROR;

1909 }

1910

1911 if ((*cameraSource)->initCheck() != OK) {

1912 (*cameraSource).clear();

1913 *cameraSource = NULL;

1914 return NO_INIT;

1915 }

1916

1917 // When frame rate is not set, the actual frame rate will be set to

1918 // the current frame rate being used.

1919 if (mFrameRate == -1) {

1920 int32_t frameRate = 0;

1921 CHECK ((*cameraSource)->getFormat()->findInt32(

1922 kKeyFrameRate, &frameRate));

1923 ALOGI("Frame rate is not explicitly set. Use the current frame "

1924 "rate (%d fps)", frameRate);

1925 mFrameRate = frameRate;

1926 }

1927

1928 CHECK(mFrameRate != -1);

1929

1930 mMetaDataStoredInVideoBuffers =

1931 (*cameraSource)->metaDataStoredInVideoBuffers();

1932

1933 return OK;

1934 }

setupCameraSource mainly creates the CameraSource object, which then has to be handed to the video encoder:

1936 status_t StagefrightRecorder::setupVideoEncoder(

1937 const sp<MediaSource> &cameraSource,

1938 sp<MediaCodecSource> *source) {

1939 source->clear();

1940

1941 sp<AMessage> format = new AMessage();

1942 // Set various parameters on format ... // Create the encoder, passing in the CameraSource

2094 sp<MediaCodecSource> encoder = MediaCodecSource::Create(

2095 mLooper, format, cameraSource, mPersistentSurface, flags);

2096 if (encoder == NULL) {

2097 ALOGE("Failed to create video encoder");

2098 // When the encoder fails to be created, we need

2099 // release the camera source due to the camera's lock

2100 // and unlock mechanism.

2101 if (cameraSource != NULL) {

2102 cameraSource->stop();

2103 }

2104 return UNKNOWN_ERROR;

2105 }

2106

2107 if (cameraSource == NULL) {

2108 mGraphicBufferProducer = encoder->getGraphicBufferProducer();

2109 }

2110

2111 *source = encoder;

2112

2113 return OK;

2114 }

/frameworks/av/media/libstagefright/MediaCodecSource.cpp

453 MediaCodecSource::MediaCodecSource(

454 const sp<ALooper> &looper,

455 const sp<AMessage> &outputFormat,

456 const sp<MediaSource> &source,

457 const sp<PersistentSurface> &persistentSurface,

458 uint32_t flags)

459 ... {

476 CHECK(mLooper != NULL);

477

478 if (!(mFlags & FLAG_USE_SURFACE_INPUT)) {

479 mPuller = new Puller(source);

480 }

481 }

109 MediaCodecSource::Puller::Puller(const sp<MediaSource> &source)

110 : mSource(source),

111 mLooper(new ALooper()),

112 mIsAudio(false)

113 {

114 sp<MetaData> meta = source->getFormat();

115 const char *mime;

116 CHECK(meta->findCString(kKeyMIMEType, &mime));

117

118 mIsAudio = !strncasecmp(mime, "audio/", 6);

119

120 mLooper->setName("pull_looper");

121 }

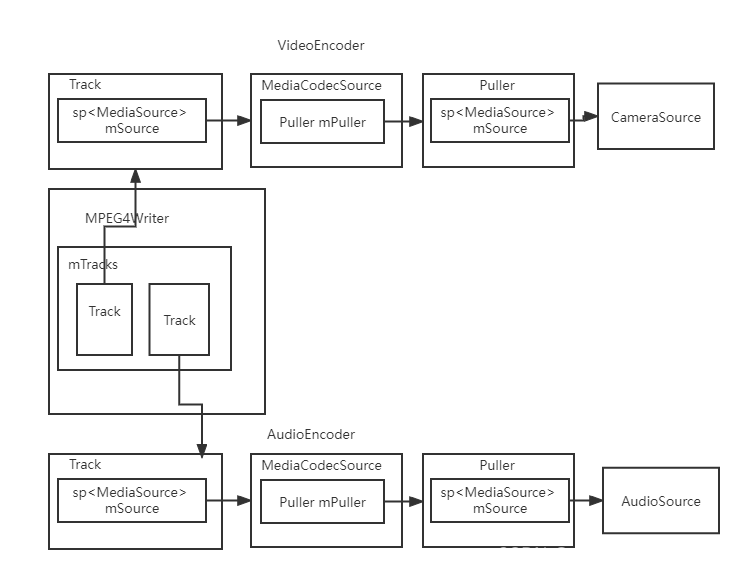

From the above, the video encoder object is a MediaCodecSource, and the CameraSource object is passed to the Puller's mSource member.

The newly created video encoder (MediaCodecSource) is then added to the MPEG4Writer:

/frameworks/av/media/libstagefright/MPEG4Writer.cpp

653 status_t MPEG4Writer::addSource(const sp<MediaSource> &source) {...

678

679 // This is a metadata track or the first track of either audio or video

680 // Go ahead to add the track: wrap the encoder in a Track and put it into mTracks

681 Track *track = new Track(this, source, 1 + mTracks.size());

682 mTracks.push_back(track);

683

684 mHasMoovBox |= !track->isHeic();

685 mHasFileLevelMeta |= track->isHeic();

686

687 return OK;

688 }

2128 MPEG4Writer::Track::Track(

2129 MPEG4Writer *owner, const sp<MediaSource> &source, uint32_t aTrackId)

2130 : mOwner(owner),

2131 mMeta(source->getFormat()),

2132 mSource(source),...

}

So inside MPEG4Writer the video encoder is wrapped in a Track and assigned to the Track's mSource member.
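The resulting nesting (raw source inside a puller, puller inside an encoder, encoder inside a track) can be sketched as plain object composition. All types below (`ToySource`, `ToyPuller`, `ToyEncoder`, `ToyWriter`) and the MIME strings are hypothetical simplifications; only the wrapping order and the `1 + mTracks.size()` track id follow the excerpts.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Common interface, playing the role of MediaSource.
struct ToySource {
    virtual ~ToySource() {}
    virtual std::string mime() const = 0;
};

struct ToyCameraSource : ToySource {
    std::string mime() const override { return "video/raw"; }
};

struct ToyAudioSource : ToySource {
    std::string mime() const override { return "audio/raw"; }
};

// Like Puller: holds the raw source and records whether it is audio.
class ToyPuller {
public:
    explicit ToyPuller(std::shared_ptr<ToySource> source)
        : mSource(std::move(source)),
          mIsAudio(mSource->mime().compare(0, 6, "audio/") == 0) {}
    bool isAudio() const { return mIsAudio; }

private:
    std::shared_ptr<ToySource> mSource;  // declared first: used by mIsAudio
    bool mIsAudio;
};

// Like MediaCodecSource: itself a source, fed by a puller.
class ToyEncoder : public ToySource {
public:
    explicit ToyEncoder(std::shared_ptr<ToySource> raw)
        : mPuller(std::move(raw)) {}
    std::string mime() const override {
        return mPuller.isAudio() ? "audio/mp4a-latm" : "video/avc";
    }

private:
    ToyPuller mPuller;
};

struct ToyTrack {
    std::size_t id;
    std::shared_ptr<ToySource> source;  // the encoder, as in Track::mSource
};

// Like MPEG4Writer::addSource: wrap each encoder in a track.
class ToyWriter {
public:
    void addSource(std::shared_ptr<ToySource> source) {
        assert(source != nullptr);
        ToyTrack track;
        track.id = 1 + mTracks.size();
        track.source = std::move(source);
        mTracks.push_back(track);
    }
    const std::vector<ToyTrack>& tracks() const { return mTracks; }

private:
    std::vector<ToyTrack> mTracks;
};

// Video is added first and audio last, matching the order in the excerpt.
ToyWriter buildToyWriter() {
    ToyWriter writer;
    writer.addSource(
        std::make_shared<ToyEncoder>(std::make_shared<ToyCameraSource>()));
    writer.addSource(
        std::make_shared<ToyEncoder>(std::make_shared<ToyAudioSource>()));
    return writer;
}
```

Since the encoder implements the same source interface it consumes, the writer never needs to know whether a track is backed by a camera, a microphone or a surface.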

2.4.2 Setting up the audio source

Setting up the audio source is similar to the video case. The core code is:

/frameworks/av/media/libmediaplayerservice/StagefrightRecorder.cpp

2146 status_t StagefrightRecorder::setupMPEG4orWEBMRecording() {...

2179 // Audio source is added at the end if it exists.

2180 // This help make sure that the "recoding" sound is suppressed for

2181 // camcorder applications in the recorded files.

2182 // disable audio for time lapse recording

2183 const bool disableAudio = mCaptureFpsEnable && mCaptureFps < mFrameRate;

2184 if (!disableAudio && mAudioSource != AUDIO_SOURCE_CNT) { // Set up the audio encoder

2185 err = setupAudioEncoder(writer);

2186 if (err != OK) return err;

2187 mTotalBitRate += mAudioBitRate;

2188 }...

}

2116 status_t StagefrightRecorder::setupAudioEncoder(const sp<MediaWriter>& writer) {

2117 status_t status = BAD_VALUE;

2135 // Create the audio encoder

2136 sp<MediaCodecSource> audioEncoder = createAudioSource();

2137 if (audioEncoder == NULL) {

2138 return UNKNOWN_ERROR;

2139 }

2140 // Add the encoder to the writer

2141 writer->addSource(audioEncoder);

2142 mAudioEncoderSource = audioEncoder;

2143 return OK;

2144 }

1310 sp<MediaCodecSource> StagefrightRecorder::createAudioSource() {

1311 int32_t sourceSampleRate = mSampleRate;

1312 ...

1355 // Create the audio source

1356 sp<AudioSource> audioSource =

1357 new AudioSource(

1358 &attr,

1359 mAttributionSource,

1360 sourceSampleRate,

1361 mAudioChannels,

1362 mSampleRate,

1363 mSelectedDeviceId,

1364 mSelectedMicDirection,

1365 mSelectedMicFieldDimension);

1366

1367 status_t err = audioSource->initCheck();

1368

1369 if (err != OK) {

1370 ALOGE("audio source is not initialized");

1371 return NULL;

1372 }

1373 // Set the encoding format ...

1424 // Create the encoder, passing in the audio source

1425 sp<MediaCodecSource> audioEncoder =

1426 MediaCodecSource::Create(mLooper, format, audioSource);

1427 sp<AudioSystem::AudioDeviceCallback> callback = mAudioDeviceCallback.promote();

1428 if (mDeviceCallbackEnabled && callback != 0) {

1429 audioSource->addAudioDeviceCallback(callback);

1430 }

1431 mAudioSourceNode = audioSource;

1432

1433 if (audioEncoder == NULL) {

1434 ALOGE("Failed to create audio encoder");

1435 }

1436

1437 return audioEncoder;

1438 }

As you can see, the flow is the same as for the video encoder.

2.4.3 MPEG4Writer.start

Next, MPEG4Writer::start is called to kick off recording.

/frameworks/av/media/libstagefright/MPEG4Writer.cpp

849 status_t MPEG4Writer::start(MetaData *param) {

850 if (mInitCheck != OK) {

851 return UNKNOWN_ERROR;

852 }

853 mStartMeta = param;

854 ...

971 // Start the writer thread

972 err = startWriterThread();

973 if (err != OK) {

974 return err;

975 }

976 // Set up and start the looper

977 err = setupAndStartLooper();

978 if (err != OK) {

979 return err;

980 }

981 // Write the file header (the ftyp box) of the recording

982 writeFtypBox(param);

983 ...

1021

1022 mOffset = mMdatOffset;

1023 seekOrPostError(mFd, mMdatOffset, SEEK_SET);

1024 write("\x00\x00\x00\x01mdat????????", 16);

1025

1026 /* Confirm whether the writing of the initial file atoms, ftyp and free,

1027 * are written to the file properly by posting kWhatNoIOErrorSoFar to the

1028 * MP4WtrCtrlHlpLooper that's handling write and seek errors also. If there

1029 * was kWhatIOError, the following two scenarios should be handled.

1030 * 1) If kWhatIOError was delivered and processed, MP4WtrCtrlHlpLooper

1031 * would have stopped all threads gracefully already and posting

1032 * kWhatNoIOErrorSoFar would fail.

1033 * 2) If kWhatIOError wasn't delivered or getting processed,

1034 * kWhatNoIOErrorSoFar should get posted successfully. Wait for

1035 * response from MP4WtrCtrlHlpLooper.

1036 */

1037 sp<AMessage> msg = new AMessage(kWhatNoIOErrorSoFar, mReflector);

1038 sp<AMessage> response;

1039 err = msg->postAndAwaitResponse(&response);

1040 if (err != OK || !response->findInt32("err", &err) || err != OK) {

1041 return ERROR_IO;

1042 }

1043 // Start the audio and video tracks

1044 err = startTracks(param);

1045 if (err != OK) {

1046 return err;

1047 }

1048

1049 mStarted = true;

1050 return OK;

1051 }

2.4.4 startWriterThread

/frameworks/av/media/libstagefright/MPEG4Writer.cpp

2829 status_t MPEG4Writer::startWriterThread() {

2830 ALOGV("startWriterThread");

2831

2832 mDone = false;

2833 mIsFirstChunk = true;

2834 mDriftTimeUs = 0; // The audio and video encoders added earlier were wrapped into tracks and stored in mTracks

2835 for (List<Track *>::iterator it = mTracks.begin();

2836 it != mTracks.end(); ++it) {

2837 ChunkInfo info;

2838 info.mTrack = *it;

2839 info.mPrevChunkTimestampUs = 0;

2840 info.mMaxInterChunkDurUs = 0; // Each track is wrapped again in a ChunkInfo and stored in mChunkInfos

2841 mChunkInfos.push_back(info);

2842 }

2843

2844 pthread_attr_t attr;

2845 pthread_attr_init(&attr);

2846 pthread_attr_setdetachstate(&attr, PTHREAD_CREATE_JOINABLE); // Create the thread mThread, which runs MPEG4Writer's ThreadWrapper function

2847 pthread_create(&mThread, &attr, ThreadWrapper, this);

2848 pthread_attr_destroy(&attr);

2849 mWriterThreadStarted = true;

2850 return OK;

2851 }

2671 // static

2672 void *MPEG4Writer::ThreadWrapper(void *me) {

2673 ALOGV("ThreadWrapper: %p", me);

2674 MPEG4Writer *writer = static_cast<MPEG4Writer *>(me);

2675 writer->threadFunc();

2676 return NULL;

2677 }

2789 void MPEG4Writer::threadFunc() {

2790 ALOGV("threadFunc");

2791

2792 prctl(PR_SET_NAME, (unsigned long)"MPEG4Writer", 0, 0, 0);

2793

2794 if (mIsBackgroundMode) {

2795 // Background priority for media transcoding.

2796 androidSetThreadPriority(0 /* tid (0 = current) */, ANDROID_PRIORITY_BACKGROUND);

2797 }

2798

2799 Mutex::Autolock autoLock(mLock);

2800 while (!mDone) {

2801 Chunk chunk;

2802 bool chunkFound = false;

2803 // findChunkToWrite looks for the next chunk that needs to be written

2804 while (!mDone && !(chunkFound = findChunkToWrite(&chunk))) { // Wait for the mChunkReadyCondition signal

2805 mChunkReadyCondition.wait(mLock);

2806 }

2807

2808 // In real time recording mode, write without holding the lock in order

2809 // to reduce the blocking time for media track threads.

2810 // Otherwise, hold the lock until the existing chunks get written to the

2811 // file.

2812 if (chunkFound) {

2813 if (mIsRealTimeRecording) {

2814 mLock.unlock();

2815 } // Write the chunk to the file

2816 writeChunkToFile(&chunk);

2817 if (mIsRealTimeRecording) {

2818 mLock.lock();

2819 }

2820 }

2821 }

2822

2823 writeAllChunks();

2824 ALOGV("threadFunc mOffset:%lld, mMaxOffsetAppend:%lld", (long long)mOffset,

2825 (long long)mMaxOffsetAppend);

2826 mOffset = std::max(mOffset, mMaxOffsetAppend);

2827 }
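The wait, write, drain loop in threadFunc is a classic condition-variable consumer. Below is a minimal sketch of the same pattern in standard C++; it assumes hypothetical names (`ToyChunkWriter`, `runToyWriter`), uses strings in place of chunks and an in-memory vector in place of the file, and always releases the lock while "writing", as the real code does in real-time recording mode.

```cpp
#include <cassert>
#include <condition_variable>
#include <deque>
#include <mutex>
#include <string>
#include <thread>
#include <vector>

// Hypothetical miniature of the MPEG4Writer writer thread.
class ToyChunkWriter {
public:
    ToyChunkWriter() : mDone(false) {}
    ~ToyChunkWriter() { stop(); }

    void start() {
        mThread = std::thread(&ToyChunkWriter::threadFunc, this);
    }

    // Called by track threads when a chunk is ready.
    void bufferChunk(const std::string& chunk) {
        {
            std::lock_guard<std::mutex> lock(mLock);
            mChunks.push_back(chunk);
        }
        mChunkReady.notify_one();
    }

    // Ask the thread to finish, then wait for it; safe to call twice.
    void stop() {
        {
            std::lock_guard<std::mutex> lock(mLock);
            mDone = true;
        }
        mChunkReady.notify_one();
        if (mThread.joinable()) mThread.join();
        assert(mChunks.empty());  // everything was drained before exit
    }

    // Only read this after stop() has returned.
    const std::vector<std::string>& written() const { return mWritten; }

private:
    void threadFunc() {
        std::unique_lock<std::mutex> lock(mLock);
        while (!mDone) {
            // Sleep until a chunk arrives or stop() is requested.
            while (!mDone && mChunks.empty()) mChunkReady.wait(lock);
            if (!mChunks.empty()) {
                std::string chunk = mChunks.front();
                mChunks.pop_front();
                lock.unlock();              // do not block producers
                mWritten.push_back(chunk);  // stands in for the file write
                lock.lock();
            }
        }
        // Flush whatever is left, like writeAllChunks().
        while (!mChunks.empty()) {
            mWritten.push_back(mChunks.front());
            mChunks.pop_front();
        }
    }

    std::mutex mLock;
    std::condition_variable mChunkReady;
    std::deque<std::string> mChunks;
    std::vector<std::string> mWritten;
    bool mDone;
    std::thread mThread;
};

// Feeds the chunks through a writer thread and returns what was written.
std::vector<std::string> runToyWriter(const std::vector<std::string>& chunks) {
    ToyChunkWriter writer;
    writer.start();
    for (const std::string& chunk : chunks) writer.bufferChunk(chunk);
    writer.stop();
    return writer.written();
}
```

Dropping the lock around the write keeps the producers (the track threads) from stalling on slow storage, which is the reason the source comment gives for the real-time branch.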

2.4.5 startTracks

/frameworks/av/media/libstagefright/MPEG4Writer.cpp

690 status_t MPEG4Writer::startTracks(MetaData *params) {

691 if (mTracks.empty()) {

692 ALOGE("No source added");

693 return INVALID_OPERATION;

694 }

695

696 for (List<Track *>::iterator it = mTracks.begin();

697 it != mTracks.end(); ++it) {

698 status_t err = (*it)->start(params);

699

700 if (err != OK) {

701 for (List<Track *>::iterator it2 = mTracks.begin();

702 it2 != it; ++it2) {

703 (*it2)->stop();

704 }

705

706 return err;

707 }

708 }

709 return OK;

710 }

2854 status_t MPEG4Writer::Track::start(MetaData *params) {

2855 if (!mDone && mPaused) {

2856 mPaused = false;

2857 mResumed = true;

2858 return OK;

2859 }

2860

2861 int64_t startTimeUs;

2862 if (params == NULL || !params->findInt64(kKeyTime, &startTimeUs)) {

2863 startTimeUs = 0;

2864 }

2865 mStartTimeRealUs = startTimeUs;

2866

2867 int32_t rotationDegrees;

2868 if ((mIsVideo || mIsHeic) && params &&

2869 params->findInt32(kKeyRotation, &rotationDegrees)) {

2870 mRotation = rotationDegrees;

2871 }

2872 if (mIsHeic) {

2873 // Reserve the item ids, so that the item ids are ordered in the same

2874 // order that the image tracks are added.

2875 // If we leave the item ids to be assigned when the sample is written out,

2876 // the original track order may not be preserved, if two image tracks

2877 // have data around the same time. (This could happen especially when

2878 // we're encoding with single tile.) The reordering may be undesirable,

2879 // even if the file is well-formed and the primary picture is correct.

2880

2881 // Reserve item ids for samples + grid

2882 size_t numItemsToReserve = mNumTiles + (mNumTiles > 0);

2883 status_t err = mOwner->reserveItemId_l(numItemsToReserve, &mItemIdBase);

2884 if (err != OK) {

2885 return err;

2886 }

2887 }

2888

2889 initTrackingProgressStatus(params);

2890

2891 sp<MetaData> meta = new MetaData;

2892 if (mOwner->isRealTimeRecording() && mOwner->numTracks() > 1) {

2893 /*

2894 * This extra delay of accepting incoming audio/video signals

2895 * helps to align a/v start time at the beginning of a recording

2896 * session, and it also helps eliminate the "recording" sound for

2897 * camcorder applications.

2898 *

2899 * If client does not set the start time offset, we fall back to

2900 * use the default initial delay value.

2901 */

2902 int64_t startTimeOffsetUs = mOwner->getStartTimeOffsetMs() * 1000LL;

2903 if (startTimeOffsetUs < 0) { // Start time offset was not set

2904 startTimeOffsetUs = kInitialDelayTimeUs;

2905 }

2906 startTimeUs += startTimeOffsetUs;

2907 ALOGI("Start time offset: %" PRId64 " us", startTimeOffsetUs);

2908 }

2909

2910 meta->setInt64(kKeyTime, startTimeUs);

2911 // Call start() on the track's source. Both the audio and video encoders are MediaCodecSource objects, which inherit from MediaSource.

2912 status_t err = mSource->start(meta.get());

2913 if (err != OK) {

2914 mDone = mReachedEOS = true;

2915 return err;

2916 }

2917

2918 pthread_attr_t attr;

2919 pthread_attr_init(&attr);

2920 pthread_attr_setdetachstate(&attr, PTHREAD_CREATE_JOINABLE);

2921

2922 mDone = false;

2923 mStarted = true;

2924 mTrackDurationUs = 0;

2925 mReachedEOS = false;

2926 mEstimatedTrackSizeBytes = 0;

2927 mMdatSizeBytes = 0;

2928 mMaxChunkDurationUs = 0;

2929 mLastDecodingTimeUs = -1;

2930 // Create a thread for each track that runs the track's ThreadWrapper function

2931 pthread_create(&mThread, &attr, ThreadWrapper, this);

2932 pthread_attr_destroy(&attr);

2933

2934 return OK;

2935 }
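The start-time adjustment in Track::start can be isolated as a small pure function. The sketch below is only an illustration: the function name and the fallback constant `kAssumedInitialDelayUs` are assumptions, and the real value should be checked against MPEG4Writer.cpp.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumed fallback delay, used when the client never set an offset.
// Illustrative value only; not taken from the listing above.
constexpr int64_t kAssumedInitialDelayUs = 700000;

// Mirrors the decision in Track::start(): only real-time recordings with
// more than one track get the extra start delay.
int64_t adjustedStartTimeUs(int64_t startTimeUs, int64_t startTimeOffsetMs,
                            bool realTimeRecording, size_t numTracks) {
    assert(startTimeUs >= 0);
    if (realTimeRecording && numTracks > 1) {
        int64_t offsetUs = startTimeOffsetMs * 1000LL;
        if (offsetUs < 0) {  // a negative offset means "not set"
            offsetUs = kAssumedInitialDelayUs;
        }
        startTimeUs += offsetUs;
    }
    return startTimeUs;
}
```

With a single track, or when the recording is not real-time, the requested start time is passed through unchanged.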

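A track is a single audio or video sequence. Tracks are built when the writer is set up, by wrapping each audio or video encoder, and each encoder is created as a MediaCodecSource object. mSource here is therefore a MediaCodecSource, so MediaCodecSource::start is called, as shown below: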

/frameworks/av/media/libstagefright/MediaCodecSource.cpp

373 status_t MediaCodecSource::start(MetaData* params) { // The native AMessage mechanism dispatches this message to the handler, which eventually calls MediaCodecSource::onStart

374 sp<AMessage> msg = new AMessage(kWhatStart, mReflector);

375 msg->setObject("meta", params);

376 return postSynchronouslyAndReturnError(msg);

377 }

804 status_t MediaCodecSource::onStart(MetaData *params) {...

831

832 ALOGI("MediaCodecSource (%s) starting", mIsVideo ? "video" : "audio");

833

834 status_t err = OK;

835 // The video track takes the else branch because we did not pass in a surface; the audio track never has a surface, so it takes the else branch too

836 if (mFlags & FLAG_USE_SURFACE_INPUT) {...

845 } else {

846 CHECK(mPuller != NULL);

847 sp<MetaData> meta = params;

848 if (mSetEncoderFormat) {

849 if (meta == NULL) {

850 meta = new MetaData;

851 }

852 meta->setInt32(kKeyPixelFormat, mEncoderFormat);

853 meta->setInt32(kKeyColorSpace, mEncoderDataSpace);

854 }

855

856 sp<AMessage> notify = new AMessage(kWhatPullerNotify, mReflector); // Puller::start uses the same message mechanism and eventually reaches MediaCodecSource::Puller::onMessageReceived

857 err = mPuller->start(meta.get(), notify);

858 if (err != OK) {

859 return err;

860 }

861 }

862

863 ALOGI("MediaCodecSource (%s) started", mIsVideo ? "video" : "audio");

864

865 mStarted = true;

866 return OK;

867 }
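MediaCodecSource::start posts a message and blocks until the handler has replied. A minimal sketch of that post-and-wait pattern follows. It uses `std::promise` in place of AMessage and AReplyToken, and `MiniLooper` with all of its members is a hypothetical stand-in, not the ALooper API.

```cpp
#include <cassert>
#include <condition_variable>
#include <deque>
#include <functional>
#include <future>
#include <memory>
#include <mutex>
#include <thread>

class MiniLooper {
public:
    MiniLooper() : mThread([this] { loop(); }) {}

    ~MiniLooper() {
        {
            std::lock_guard<std::mutex> lock(mLock);
            mQuit = true;
        }
        mCond.notify_one();
        mThread.join();
    }

    // Run `handler` on the looper thread and block until it returns a status.
    int postAndAwait(std::function<int()> handler) {
        assert(handler);
        auto reply = std::make_shared<std::promise<int>>();
        std::future<int> result = reply->get_future();
        {
            std::lock_guard<std::mutex> lock(mLock);
            mQueue.emplace_back([reply, handler] { reply->set_value(handler()); });
        }
        mCond.notify_one();
        return result.get();
    }

private:
    void loop() {
        for (;;) {
            std::function<void()> task;
            {
                std::unique_lock<std::mutex> lock(mLock);
                mCond.wait(lock, [this] { return mQuit || !mQueue.empty(); });
                if (mQueue.empty()) return;  // only reached when quitting
                task = std::move(mQueue.front());
                mQueue.pop_front();
            }
            task();  // run the handler outside the lock
        }
    }

    std::mutex mLock;
    std::condition_variable mCond;
    std::deque<std::function<void()>> mQueue;
    bool mQuit = false;
    std::thread mThread;  // declared last so the other members exist before the thread runs
};
```

The caller sees an ordinary synchronous function returning a status, while the work itself is serialized on the looper thread.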

MediaCodecSource::Puller::onMessageReceived then takes the kWhatStart branch, as shown below:

/frameworks/av/media/libstagefright/MediaCodecSource.cpp

230 void MediaCodecSource::Puller::onMessageReceived(const sp<AMessage> &msg) {

231 switch (msg->what()) {

232 case kWhatStart:

233 {

234 sp<RefBase> obj;

235 CHECK(msg->findObject("meta", &obj));

236

237 {

238 Mutexed<Queue>::Locked queue(mQueue);

239 queue->mPulling = true;

240 }

241 // mSource here is the media source handed to the encoder: CameraSource for the video track, AudioSource for the audio track

242 status_t err = mSource->start(static_cast<MetaData *>(obj.get()));

243

244 if (err == OK) {

245 schedulePull();

246 }

247

248 sp<AMessage> response = new AMessage;

249 response->setInt32("err", err);

250

251 sp<AReplyToken> replyID;

252 CHECK(msg->senderAwaitsResponse(&replyID));

253 response->postReply(replyID);

254 break;

255 }

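The audio and video encoders are set up in StagefrightRecorder::setupMPEG4orWEBMRecording. We skip the details of what start does for now and continue with the overall flow.

After calling mSource->start, Track::start creates one thread per Track to run the Track's ThreadWrapper function.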
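The per-track thread is launched through a static trampoline because `pthread_create` cannot take a non-static member function directly. A minimal sketch of the pattern is given below; `DemoTrack` and its members are hypothetical names, and the loop body is a placeholder for the real read loop.

```cpp
#include <cassert>
#include <cstdint>
#include <pthread.h>

class DemoTrack {
public:
    // Create a joinable thread that enters through the static trampoline.
    int start() {
        assert(!mStarted);
        pthread_attr_t attr;
        pthread_attr_init(&attr);
        pthread_attr_setdetachstate(&attr, PTHREAD_CREATE_JOINABLE);
        int rc = pthread_create(&mThread, &attr, ThreadWrapper, this);
        pthread_attr_destroy(&attr);
        mStarted = (rc == 0);
        return rc;
    }

    // Join the thread and return the status produced by threadEntry().
    int stop() {
        if (!mStarted) return -1;
        void *ret = nullptr;
        pthread_join(mThread, &ret);
        mStarted = false;
        return static_cast<int>(reinterpret_cast<intptr_t>(ret));
    }

    int framesSeen() const { return mFrames; }

private:
    // Static entry point: recover `this` and forward to the member function.
    static void *ThreadWrapper(void *me) {
        DemoTrack *track = static_cast<DemoTrack *>(me);
        int err = track->threadEntry();
        return reinterpret_cast<void *>(static_cast<intptr_t>(err));
    }

    int threadEntry() {
        for (int i = 0; i < 3; ++i) ++mFrames;  // placeholder for the read loop
        return 0;
    }

    pthread_t mThread{};
    bool mStarted = false;
    int mFrames = 0;
};
```

Because the thread is joinable, the status returned by `threadEntry()` can be collected later with `pthread_join`.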

2.4.6 Track::ThreadWrapper

/frameworks/av/media/libstagefright/MPEG4Writer.cpp

2983 void *MPEG4Writer::Track::ThreadWrapper(void *me) {

2984 Track *track = static_cast<Track *>(me);

2985

2986 status_t err = track->threadEntry();

2987 return (void *)(uintptr_t)err;

2988 }

3364 status_t MPEG4Writer::Track::threadEntry() { // Some variable initialization...

3389 // 1. Name the current thread and set its priority

3390 if (mIsAudio) {

3391 prctl(PR_SET_NAME, (unsigned long)"MP4WtrAudTrkThread", 0, 0, 0);

3392 } else if (mIsVideo) {

3393 prctl(PR_SET_NAME, (unsigned long)"MP4WtrVidTrkThread", 0, 0, 0);

3394 } else {

3395 prctl(PR_SET_NAME, (unsigned long)"MP4WtrMetaTrkThread", 0, 0, 0);

3396 }

3397

3398 if (mOwner->isRealTimeRecording()) {

3399 androidSetThreadPriority(0, ANDROID_PRIORITY_AUDIO);

3400 } else if (mOwner->isBackgroundMode()) {

3401 // Background priority for media transcoding.

3402 androidSetThreadPriority(0 /* tid (0 = current) */, ANDROID_PRIORITY_BACKGROUND);

3403 }

3404

3405 sp<MetaData> meta_data;

3406

3407 status_t err = OK;

3408 MediaBufferBase *buffer;

3409 const char *trackName = getTrackType(); // 2. mSource is a MediaCodecSource; loop reading buffers from it

3410 while (!mDone && (err = mSource->read(&buffer)) == OK) {

3411 ALOGV("read:buffer->range_length:%lld", (long long)buffer->range_length());

3412 ...

3450

3451 int32_t isCodecConfig;

3452 if (buffer->meta_data().findInt32(kKeyIsCodecConfig, &isCodecConfig)

3453 && isCodecConfig) {

3454 // if config format (at track addition) already had CSD, keep that

3455 // UNLESS we have not received any frames yet.

3456 // TODO: for now the entire CSD has to come in one frame for encoders, even though

3457 // they need to be spread out for decoders.

3458 if (mGotAllCodecSpecificData && nActualFrames > 0) {

3459 ALOGI("ignoring additional CSD for video track after first frame");

3460 } else { // Write different data for different encoding formats

3461 mMeta = mSource->getFormat(); // get output format after format change

3462 status_t err;

3463 if (mIsAvc) { // Codec-specific data for H.264/AVC

3464 err = makeAVCCodecSpecificData(

3465 (const uint8_t *)buffer->data()

3466 + buffer->range_offset(),

3467 buffer->range_length());

3468 } else if (mIsHevc || mIsHeic) {

3469 err = makeHEVCCodecSpecificData(

3470 (const uint8_t *)buffer->data()

3471 + buffer->range_offset(),

3472 buffer->range_length());

3473 } else if (mIsMPEG4) {

3474 err = copyCodecSpecificData((const uint8_t *)buffer->data() + buffer->range_offset(),

3475 buffer->range_length());

3476 }

3477 }

3478

3479 buffer->release();

3480 buffer = NULL;

3481 if (OK != err) {

3482 mSource->stop();

3483 mIsMalformed = true;

3484 uint32_t trackNum = (mTrackId.getId() << 28);

3485 mOwner->notify(MEDIA_RECORDER_TRACK_EVENT_ERROR,

3486 trackNum | MEDIA_RECORDER_TRACK_ERROR_GENERAL, err);

3487 break;

3488 }

3489

3490 mGotAllCodecSpecificData = true;

3491 continue;

3492 }

3493

3494 // Per-frame metadata sample's size must be smaller than max allowed.

3495 if (!mIsVideo && !mIsAudio && !mIsHeic &&

3496 buffer->range_length() >= kMaxMetadataSize) {

3497 ALOGW("Buffer size is %zu. Maximum metadata buffer size is %lld for %s track",

3498 buffer->range_length(), (long long)kMaxMetadataSize, trackName);

3499 buffer->release();

3500 mSource->stop();

3501 mIsMalformed = true;

3502 break;

3503 }

3504

3505 bool isExif = false;

3506 uint32_t tiffHdrOffset = 0;

3507 int32_t isMuxerData;

3508 if (buffer->meta_data().findInt32(kKeyIsMuxerData, &isMuxerData) && isMuxerData) {

3509 // We only support one type of muxer data, which is Exif data block.

3510 isExif = isExifData(buffer, &tiffHdrOffset);

3511 if (!isExif) {

3512 ALOGW("Ignoring bad Exif data block");

3513 buffer->release();

3514 buffer = NULL;

3515 continue;

3516 }

3517 }

3518 if (!buffer->meta_data().findInt64(kKeySampleFileOffset, &sampleFileOffset)) {

3519 sampleFileOffset = -1;

3520 }

3521 int64_t lastSample = -1;

3522 if (!buffer->meta_data().findInt64(kKeyLastSampleIndexInChunk, &lastSample)) {

3523 lastSample = -1;

3524 }

3525 ALOGV("sampleFileOffset:%lld", (long long)sampleFileOffset);

3526

3527 /*

3528 * Reserve space in the file for the current sample + to be written MOOV box. If reservation

3529 * for a new sample fails, preAllocate(...) stops muxing session completely. Stop() could

3530 * write MOOV box successfully as space for the same was reserved in the prior call.

3531 * Release the current buffer/sample here.

3532 */

3533 if (sampleFileOffset == -1 && !mOwner->preAllocate(buffer->range_length())) {

3534 buffer->release();

3535 buffer = nullptr;

3536 break;

3537 }

3538

3539 ++nActualFrames;

3540

3541 // Make a deep copy of the MediaBuffer and Metadata and release

3542 // the original as soon as we can

3543 MediaBuffer *copy = new MediaBuffer(buffer->range_length());

3544 if (sampleFileOffset != -1) {

3545 copy->meta_data().setInt64(kKeySampleFileOffset, sampleFileOffset);

3546 } else { // Copy the data from buffer into the new MediaBuffer

3547 memcpy(copy->data(), (uint8_t*)buffer->data() + buffer->range_offset(),

3548 buffer->range_length());

3549 }

3550 copy->set_range(0, buffer->range_length());

3551 ...

3604 int32_t isSync = false;

3605 meta_data->findInt32(kKeyIsSyncFrame, &isSync);

3606 CHECK(meta_data->findInt64(kKeyTime, &timestampUs));

3607 timestampUs += mFirstSampleStartOffsetUs;

3608

3609 // For video, skip the first several non-key frames until getting the first key frame.

3610 if (mIsVideo && !mGotStartKeyFrame && !isSync) {

3611 ALOGD("Video skip non-key frame");

3612 copy->release();

3613 continue;

3614 } // 3. The first sync (key) frame received counts as the first frame

3615 if (mIsVideo && isSync) {

3616 mGotStartKeyFrame = true;

3617 }

3618 ...

3834 if (!hasMultipleTracks) {

3835 size_t bytesWritten; // With only one track, write the sample directly and move on to the next loop iteration

3836 off64_t offset = mOwner->addSample_l(

3837 copy, usePrefix, tiffHdrOffset, &bytesWritten);

3838

3839 if (mIsHeic) {

3840 addItemOffsetAndSize(offset, bytesWritten, isExif);

3841 } else {

3842 if (mCo64TableEntries->count() == 0) {

3843 addChunkOffset(offset);

3844 }

3845 }

3846 copy->release();

3847 copy = NULL;

3848 continue;

3849 }

3850 // Add the current frame to mChunkSamples

3851 mChunkSamples.push_back(copy);

3852 if (mIsHeic) {

3853 bufferChunk(0 /*timestampUs*/);

3854 ++nChunks;

3855 } else if (interleaveDurationUs == 0) { // The interleave duration decides when bufferChunk runs

3856 addOneStscTableEntry(++nChunks, 1); // bufferChunk packages the mChunkSamples collected up to timestampUs

3857 bufferChunk(timestampUs);

3858 } else {

3859 if (chunkTimestampUs == 0) {

3860 chunkTimestampUs = timestampUs;

3861 } else {

3862 int64_t chunkDurationUs = timestampUs - chunkTimestampUs;

3863 if (chunkDurationUs > interleaveDurationUs || lastSample > 1) {

3864 ALOGV("lastSample:%lld", (long long)lastSample);

3865 if (chunkDurationUs > mMaxChunkDurationUs) {

3866 mMaxChunkDurationUs = chunkDurationUs;

3867 }

3868 ++nChunks;

3869 if (nChunks == 1 || // First chunk

3870 lastSamplesPerChunk != mChunkSamples.size()) {

3871 lastSamplesPerChunk = mChunkSamples.size();

3872 addOneStscTableEntry(nChunks, lastSamplesPerChunk);

3873 }

3874 bufferChunk(timestampUs);

3875 chunkTimestampUs = timestampUs;

3876 }

3877 }

3878 }

3879 }

3880 ...

3946

3947 if (err == ERROR_END_OF_STREAM) {

3948 return OK;

3949 }

3950 return err;

3951 }

void MPEG4Writer::Track::bufferChunk(int64_t timestampUs) {

4109 ALOGV("bufferChunk");

4110

4111 Chunk chunk(this, timestampUs, mChunkSamples);

4112 mOwner->bufferChunk(chunk);

4113 mChunkSamples.clear();

4114 }

2679 void MPEG4Writer::bufferChunk(const Chunk& chunk) {

2680 ALOGV("bufferChunk: %p", chunk.mTrack);

2681 Mutex::Autolock autolock(mLock);

2682 CHECK_EQ(mDone, false);

2683 // mChunkInfos wraps the Track of each audio/video stream

2684 for (List<ChunkInfo>::iterator it = mChunkInfos.begin();

2685 it != mChunkInfos.end(); ++it) {

2686

2687 if (chunk.mTrack == it->mTrack) { // Found owner

2688 it->mChunks.push_back(chunk); // Signal mChunkReadyCondition

2689 mChunkReadyCondition.signal();

2690 return;

2691 }

2692 }

2693

2694 CHECK(!"Received a chunk for a unknown track");

2695 }

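Each Track has its own thread, which loops reading data from MediaCodecSource and pushes it onto mChunkSamples. Those samples are then grouped into chunks and appended to the mChunks list of the matching Track.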
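The way samples are grouped into chunks by interleave duration can be sketched as a standalone function. This is a simplification: the function name is hypothetical, the `lastSample` condition is left out, and flushing the leftover samples after the loop is an assumption about the elided part of threadEntry.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Returns the number of samples placed in each chunk.
std::vector<size_t> chunkSizes(const std::vector<int64_t>& timestampsUs,
                               int64_t interleaveDurationUs) {
    assert(interleaveDurationUs >= 0);
    std::vector<size_t> chunks;
    size_t pending = 0;            // samples collected but not yet buffered
    int64_t chunkTimestampUs = 0;  // 0 means "no chunk opened yet", as in the listing
    for (int64_t ts : timestampsUs) {
        ++pending;  // the sample is queued before the decision is made
        if (interleaveDurationUs == 0) {
            chunks.push_back(pending);  // every sample becomes its own chunk
            pending = 0;
        } else if (chunkTimestampUs == 0) {
            chunkTimestampUs = ts;      // first sample opens the chunk
        } else if (ts - chunkTimestampUs > interleaveDurationUs) {
            chunks.push_back(pending);  // close the chunk, including this sample
            pending = 0;
            chunkTimestampUs = ts;
        }
    }
    if (pending > 0) {
        chunks.push_back(pending);  // assumed: leftovers are flushed at the end
    }
    return chunks;
}
```

Note that the sample that pushes the duration over the threshold is included in the chunk being closed, because it is queued before the check.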

The writer thread launched by startWriterThread (2.2.4 above) contains code that waits for the mChunkReadyCondition signal:

2789 void MPEG4Writer::threadFunc() {

2790 ALOGV("threadFunc");

2791

2792 prctl(PR_SET_NAME, (unsigned long)"MPEG4Writer", 0, 0, 0);

2793

2794 if (mIsBackgroundMode) {

2795 // Background priority for media transcoding.

2796 androidSetThreadPriority(0 /* tid (0 = current) */, ANDROID_PRIORITY_BACKGROUND);

2797 }

2798

2799 Mutex::Autolock autoLock(mLock);

2800 while (!mDone) {

2801 Chunk chunk;

2802 bool chunkFound = false;

2803 // findChunkToWrite looks for a chunk that needs to be written

2804 while (!mDone && !(chunkFound = findChunkToWrite(&chunk))) { // Wait for mChunkReadyCondition

2805 mChunkReadyCondition.wait(mLock);

2806 }

2807

2808 // In real time recording mode, write without holding the lock in order

2809 // to reduce the blocking time for media track threads.

2810 // Otherwise, hold the lock until the existing chunks get written to the

2811 // file.

2812 if (chunkFound) {

2813 if (mIsRealTimeRecording) {

2814 mLock.unlock();

2815 } // Write the chunk to the file

2816 writeChunkToFile(&chunk);

2817 if (mIsRealTimeRecording) {

2818 mLock.lock();

2819 }

2820 }

2821 }

2822 // Write out any chunks the loop above did not get to

2823 writeAllChunks();

2824 ALOGV("threadFunc mOffset:%lld, mMaxOffsetAppend:%lld", (long long)mOffset,

2825 (long long)mMaxOffsetAppend);

2826 mOffset = std::max(mOffset, mMaxOffsetAppend);

2827 }

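When the signal arrives, the inner loop runs again. Because a chunk has now been queued, findChunkToWrite succeeds, the inner while loop exits, and the chunk is written to the file. The thread then goes back to waiting for the next mChunkReadyCondition signal. That completes the analysis of the recording start-up flow.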

2.5 MediaRecorder.stop

To stop recording, the app calls MediaRecorder.stop, and again it is StagefrightRecorder that does the actual work.

/frameworks/av/media/libmediaplayerservice/StagefrightRecorder.cpp

2026 status_t StagefrightRecorder::stop() {

2027 ALOGV("stop");

2028 Mutex::Autolock autolock(mLock);

2029 status_t err = OK;

2030

2031 if (mCaptureFpsEnable && mCameraSourceTimeLapse != NULL) {

2032 mCameraSourceTimeLapse->startQuickReadReturns();

2033 mCameraSourceTimeLapse = NULL;

2034 }

2035

2036 int64_t stopTimeUs = systemTime() / 1000;

2037 for (const auto &source : { mAudioEncoderSource, mVideoEncoderSource }) {

2038 if (source != nullptr && OK != source->setStopTimeUs(stopTimeUs)) {

2039 ALOGW("Failed to set stopTime %lld us for %s",

2040 (long long)stopTimeUs, source->isVideo() ? "Video" : "Audio");

2041 }

2042 }

2043 // Stop the MPEG4 writer

2044 if (mWriter != NULL) {

2045 err = mWriter->stop();

2046 mWriter.clear();

2047 }

2096 return err;

2097 }

StagefrightRecorder::stop mainly stops the MPEG4 writer. MPEG4Writer::stop, in turn, is defined in the header file and simply calls reset:

/frameworks/av/media/libstagefright/include/media/stagefright/MPEG4Writer.h

virtual status_t stop() { return reset(); }

/frameworks/av/media/libstagefright/MPEG4Writer.cpp

1069 status_t MPEG4Writer::reset(bool stopSource) {

1070 if (mInitCheck != OK) {

1071 return OK;

1072 } else {

1073 if (!mWriterThreadStarted ||

1074 !mStarted) {

1075 if (mWriterThreadStarted) {

1076 stopWriterThread();

1077 }

1078 release();

1079 return OK;

1080 }

1081 }

1082

1083 status_t err = OK;

1084 int64_t maxDurationUs = 0;

1085 int64_t minDurationUs = 0x7fffffffffffffffLL;

1086 int32_t nonImageTrackCount = 0;

1087 for (List<Track *>::iterator it = mTracks.begin();

1088 it != mTracks.end(); ++it) { // Stop each track (here, the audio and video tracks)

1089 status_t status = (*it)->stop(stopSource);

1090 if (err == OK && status != OK) {

1091 err = status;

1092 }

1093

1094 // skip image tracks

1095 if ((*it)->isHeic()) continue;

1096 nonImageTrackCount++;

1097

1098 int64_t durationUs = (*it)->getDurationUs();

1099 if (durationUs > maxDurationUs) {

1100 maxDurationUs = durationUs;

1101 }

1102 if (durationUs < minDurationUs) {

1103 minDurationUs = durationUs;

1104 }

1105 }

1106

1107 if (nonImageTrackCount > 1) {

1108 ALOGD("Duration from tracks range is [%" PRId64 ", %" PRId64 "] us",

1109 minDurationUs, maxDurationUs);

1110 }

1111 // Stop the writer thread

1112 stopWriterThread();

1113

1114 // Do not write out movie header on error.

1115 if (err != OK) {

1116 release();

1117 return err;

1118 }

1119

1120 // Fix up the size of the 'mdat' chunk.

1121 if (mUse32BitOffset) {

1122 lseek64(mFd, mMdatOffset, SEEK_SET);

1123 uint32_t size = htonl(static_cast<uint32_t>(mOffset - mMdatOffset));

1124 ::write(mFd, &size, 4);

1125 } else {

1126 lseek64(mFd, mMdatOffset + 8, SEEK_SET);

1127 uint64_t size = mOffset - mMdatOffset;

1128 size = hton64(size);

1129 ::write(mFd, &size, 8);

1130 }

1131 lseek64(mFd, mOffset, SEEK_SET);

1132

1133 // Construct file-level meta and moov box now

1134 mInMemoryCacheOffset = 0;

1135 mWriteBoxToMemory = mStreamableFile;

1136 if (mWriteBoxToMemory) {

1137 // There is no need to allocate in-memory cache

1138 // if the file is not streamable.

1139

1140 mInMemoryCache = (uint8_t *) malloc(mInMemoryCacheSize);

1141 CHECK(mInMemoryCache != NULL);

1142 }

1143 // Update the relevant boxes of the MP4 file

1144 if (mHasFileLevelMeta) {

1145 writeFileLevelMetaBox();

1146 if (mWriteBoxToMemory) {

1147 writeCachedBoxToFile("meta");

1148 } else {

1149 ALOGI("The file meta box is written at the end.");

1150 }

1151 }

1152

1153 if (mHasMoovBox) {

1154 writeMoovBox(maxDurationUs);

1155 // mWriteBoxToMemory could be set to false in

1156 // MPEG4Writer::write() method

1157 if (mWriteBoxToMemory) {

1158 writeCachedBoxToFile("moov");

1159 } else {

1160 ALOGI("The mp4 file will not be streamable.");

1161 }

1162 }

1163 mWriteBoxToMemory = false;

1164 // Free memory

1165 // Free in-memory cache for box writing

1166 if (mInMemoryCache != NULL) {

1167 free(mInMemoryCache);

1168 mInMemoryCache = NULL;

1169 mInMemoryCacheOffset = 0;

1170 }

1171

1172 CHECK(mBoxes.empty());

1173 // Call release() to close the file descriptor, free memory, and so on

1174 release();

1175 return err;

1176 }

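MPEG4Writer::reset does two main things: (1) it stops the Tracks that were started earlier, and (2) it stops the writer thread. It then updates the relevant boxes of the MP4 file and finally frees memory. The next two subsections look at how the Tracks and the writer thread are stopped.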
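The 'mdat' size fix-up in reset can be illustrated on an in-memory buffer. In the sketch below a `std::vector<uint8_t>` stands in for the file and its length plays the role of mOffset; the helper names are hypothetical. The 32-bit case patches the 4-byte size at the start of the box, while the 64-bit case patches the 8-byte size field located 8 bytes into the box, matching the two seeks in the listing.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Write `value` in big-endian (network) byte order at `pos`.
void putBE32(std::vector<uint8_t>& file, size_t pos, uint32_t value) {
    assert(pos + 4 <= file.size());
    for (int i = 0; i < 4; ++i) {
        file[pos + i] = static_cast<uint8_t>(value >> (24 - 8 * i));
    }
}

void putBE64(std::vector<uint8_t>& file, size_t pos, uint64_t value) {
    assert(pos + 8 <= file.size());
    for (int i = 0; i < 8; ++i) {
        file[pos + i] = static_cast<uint8_t>(value >> (56 - 8 * i));
    }
}

// Patch the size of the 'mdat' box once the amount of media data is known.
void fixUpMdatSize(std::vector<uint8_t>& file, size_t mdatOffset, bool use32BitOffset) {
    assert(mdatOffset <= file.size());
    const uint64_t size = file.size() - mdatOffset;  // mOffset - mMdatOffset
    if (use32BitOffset) {
        putBE32(file, mdatOffset, static_cast<uint32_t>(size));
    } else {
        putBE64(file, mdatOffset + 8, size);
    }
}
```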

2.5.1 Track::stop

/frameworks/av/media/libstagefright/MPEG4Writer.cpp

2452 status_t MPEG4Writer::Track::stop(bool stopSource) {...

2462

2463 if (stopSource) {

2464 ALOGD("%s track source stopping", getTrackType()); // mSource is the MediaCodecSource that wraps the audio or video encoder

2465 mSource->stop();

2466 ALOGD("%s track source stopped", getTrackType());

2467 }...

2478 return err;

2479 }

Track::stop mainly calls MediaCodecSource::stop:

/frameworks/av/media/libstagefright/MediaCodecSource.cpp

386 status_t MediaCodecSource::stop() { // Post a kWhatStop message

387 sp<AMessage> msg = new AMessage(kWhatStop, mReflector);

388 return postSynchronouslyAndReturnError(msg);

389 }

840 void MediaCodecSource::onMessageReceived(const sp<AMessage> &msg) {...

999 case kWhatStop:

1000 {

1001 ALOGI("encoder (%s) stopping", mIsVideo ? "video" : "audio");

1002

1003 sp<AReplyToken> replyID;

1004 CHECK(msg->senderAwaitsResponse(&replyID));

1005

1006 if (mOutput.lock()->mEncoderReachedEOS) {

1007 // if we already reached EOS, reply and return now

1008 ALOGI("encoder (%s) already stopped",

1009 mIsVideo ? "video" : "audio");

1010 (new AMessage)->postReply(replyID);

1011 break;

1012 }

1013

1014 mStopReplyIDQueue.push_back(replyID);

1015 if (mStopping) {

1016 // nothing to do if we're already stopping, reply will be posted

1017 // to all when we're stopped.

1018 break;

1019 }

1020

1021 mStopping = true;

1022

1023 int64_t timeoutUs = kStopTimeoutUs;

1024 // if using surface, signal source EOS and wait for EOS to come back.

1025 // otherwise, stop puller (which also clears the input buffer queue)

1026 // and wait for the EOS message. We cannot call source->stop() because

1027 // the encoder may still be processing input buffers. (Author's note: we use the Camera as the source throughout and pass no Surface, so the else branch is taken.)

1028 if (mFlags & FLAG_USE_SURFACE_INPUT) {...

1046 } else { // This in turn calls the puller's stop()

1047 mPuller->stop();

1048 }

1049

1050 // complete stop even if encoder/puller stalled

1051 sp<AMessage> timeoutMsg = new AMessage(kWhatStopStalled, mReflector);

1052 timeoutMsg->setInt32("generation", mGeneration);

1053 timeoutMsg->post(timeoutUs);

1054 break;

1055 }...}

189 void MediaCodecSource::Puller::stop() {

190 bool interrupt = false;

191 {

192 // mark stopping before actually reaching kWhatStop on the looper, so the pulling will

193 // stop. (Author's note: mPulling is set to false for the queue; the next read from the source then makes the puller post a message to MediaCodecSource's looper.)

194 Mutexed<Queue>::Locked queue(mQueue);

195 queue->mPulling = false; // If a read has been pending for more than 1 s, interrupt becomes true

196 interrupt = queue->mReadPendingSince && (queue->mReadPendingSince < ALooper::GetNowUs() - 1000000);

197 queue->flush(); // flush any unprocessed pulled buffers

198 }

199

200 if (interrupt) { // Interrupt the source

201 interruptSource();

202 }

203 }

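MediaCodecSource stops by posting a message whose handler calls Puller::stop. After setting mPulling to false, the puller checks how long the current read has been pending. If it has been pending for a long time (more than 1 s), the puller calls interruptSource to stop the underlying source, which is AudioSource for audio and CameraSource for video. Otherwise, the next read notices that mPulling is false and sends an EOS message to MediaCodecSource, which then handles it. The code is examined in detail later, in the section on MediaCodecSource.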
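The interrupt decision in Puller::stop reduces to a single comparison. A sketch as a pure function is shown below; the function and constant names are hypothetical, and a value of 0 for `readPendingSinceUs` means that no read is pending.

```cpp
#include <cassert>
#include <cstdint>

// True when a read has been pending for more than one second, which is
// the case in which Puller::stop() interrupts the source.
bool shouldInterruptSource(int64_t readPendingSinceUs, int64_t nowUs) {
    assert(nowUs >= 0);
    constexpr int64_t kStallThresholdUs = 1000000;  // 1 s, as in the listing
    return readPendingSinceUs != 0 &&
           readPendingSinceUs < nowUs - kStallThresholdUs;
}
```

A read that started less than a second ago is left to finish on its own, so the source is interrupted only when it appears to be stalled.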

2.5.2 stopWriterThread

/frameworks/av/media/libstagefright/MPEG4Writer.cpp

966 void MPEG4Writer::stopWriterThread() {

967 ALOGD("Stopping writer thread");

968 if (!mWriterThreadStarted) {

969 return;

970 }

971

972 {

973 Mutex::Autolock autolock(mLock); // Setting mDone to true ends the writer thread's loop

975 mDone = true; // Signal so the thread does not stay blocked waiting on mChunkReadyCondition

976 mChunkReadyCondition.signal();

977 }

978

979 void *dummy;

980 pthread_join(mThread, &dummy);

981 mWriterThreadStarted = false;

982 ALOGD("Writer thread stopped");

983 }
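The writer thread's wait loop and the way stopWriterThread ends it follow a standard condition-variable pattern. The sketch below uses `std::thread` and `std::condition_variable` in place of pthreads and Android's Mutex/Condition; `MiniWriter`, its members, and the use of `int` as a chunk are all hypothetical simplifications.

```cpp
#include <cassert>
#include <condition_variable>
#include <deque>
#include <mutex>
#include <thread>
#include <vector>

class MiniWriter {
public:
    void start() {
        assert(!mThread.joinable());
        mThread = std::thread([this] { threadFunc(); });
    }

    // Called by producer (track) threads.
    void bufferChunk(int chunk) {
        std::lock_guard<std::mutex> lock(mLock);
        mChunks.push_back(chunk);
        mChunkReady.notify_one();
    }

    // Set the done flag, wake the writer in case it is waiting, then join.
    void stop() {
        {
            std::lock_guard<std::mutex> lock(mLock);
            mDone = true;
            mChunkReady.notify_one();
        }
        mThread.join();
    }

    // Only valid after stop() has returned.
    const std::vector<int>& written() const { return mWritten; }

private:
    void threadFunc() {
        std::unique_lock<std::mutex> lock(mLock);
        while (!mDone) {
            while (!mDone && mChunks.empty()) {
                mChunkReady.wait(lock);
            }
            if (!mChunks.empty()) {
                int chunk = mChunks.front();
                mChunks.pop_front();
                lock.unlock();  // write without blocking the producers
                mWritten.push_back(chunk);
                lock.lock();
            }
        }
        // Flush whatever was queued but not yet written.
        while (!mChunks.empty()) {
            mWritten.push_back(mChunks.front());
            mChunks.pop_front();
        }
    }

    std::mutex mLock;
    std::condition_variable mChunkReady;
    std::deque<int> mChunks;
    std::vector<int> mWritten;  // touched only by the writer thread until join
    bool mDone = false;
    std::thread mThread;
};
```

Signalling after setting the done flag matters: without it, a writer that is blocked in `wait` with an empty queue would never observe the flag and `join` would hang.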

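3. MediaCodecSource workflow

MediaCodecSource is a very important class in the recording flow. It acts as the media encoder: it receives video data from the Camera and audio data from the microphone, encodes it, and supplies the encoded media data to MPEG4Writer to be written to the file.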

3.1 MediaCodecSource initialization

Sections 2.4.1 and 2.4.2, on setting up the media encoders, showed where MediaCodecSource is created. This section goes through the creation process in detail.

2094 sp<MediaCodecSource> encoder = MediaCodecSource::Create(

2095 mLooper, format, mSource, mPersistentSurface, flags);

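Encoders are currently all created through MediaCodecSource::Create. Audio and video differ only in the encoding format (format) and the source (mSource): for the video track mSource is a CameraSource, and for the audio track it is an AudioSource.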

3.1.1 MediaCodecSource::Create

/frameworks/av/media/libstagefright/MediaCodecSource.cpp

348 sp<MediaCodecSource> MediaCodecSource::Create(

349 const sp<ALooper> &looper,

350 const sp<AMessage> &format,

351 const sp<MediaSource> &source,

352 const sp<PersistentSurface> &persistentSurface,

353 uint32_t flags) { // Create the MediaCodecSource object and run init()

354 sp<MediaCodecSource> mediaSource = new MediaCodecSource(

355 looper, format, source, persistentSurface, flags);

356

357 if (mediaSource->init() == OK) {

358 return mediaSource;

359 }

360 return NULL;

361 }

MediaCodecSource::Create simply builds a MediaCodecSource from the arguments passed in and then calls init to finish initialization.
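Create is an instance of the two-phase construction idiom: construct the object, run a fallible init step, and hand the object out only if init succeeded. A minimal sketch follows, with `std::shared_ptr` standing in for `sp<>`; `DemoSource` and its MIME check are hypothetical.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <utility>

class DemoSource {
public:
    // Returns nullptr when initialization fails, so callers never see a
    // half-initialized object.
    static std::shared_ptr<DemoSource> Create(const std::string& mime) {
        std::shared_ptr<DemoSource> source(new DemoSource(mime));
        if (source->init() == 0) {
            return source;
        }
        return nullptr;
    }

    bool isVideo() const { return mIsVideo; }

private:
    explicit DemoSource(std::string mime) : mMime(std::move(mime)) {}

    // Fallible second phase; 0 means success.
    int init() {
        if (mMime.rfind("video/", 0) == 0) {
            mIsVideo = true;
            return 0;
        }
        if (mMime.rfind("audio/", 0) == 0) {
            return 0;
        }
        return -1;  // unsupported type
    }

    std::string mMime;
    bool mIsVideo = false;
};
```

Keeping the constructor private forces every caller through `Create`, which is what makes the null check at the call site sufficient.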

3.1.2 MediaCodecSource::init

/frameworks/av/media/libstagefright/MediaCodecSource.cpp

473 status_t MediaCodecSource::init() {

474 status_t err = initEncoder();

475

476 if (err != OK) {

477 releaseEncoder();

478 }

479

480 return err;

481 }

483 status_t MediaCodecSource::initEncoder() {

484

485 mReflector = new AHandlerReflector<MediaCodecSource>(this);

486 mLooper->registerHandler(mReflector);

487 // Create and start an ALooper named codec_looper

488 mCodecLooper = new ALooper;

489 mCodecLooper->setName("codec_looper");

490 mCodecLooper->start();

491

492 if (mFlags & FLAG_USE_SURFACE_INPUT) {

493 mOutputFormat->setInt32("create-input-buffers-suspended", 1);

494 }

495 // Read the MIME type; mOutputFormat is the format we passed in

496 AString outputMIME;

497 CHECK(mOutputFormat->findString("mime", &outputMIME));

498 mIsVideo = outputMIME.startsWithIgnoreCase("video/");

499

500 AString name;

501 status_t err = NO_INIT; // The if branch is for testing only; normally the else branch runs

502 if (mOutputFormat->findString("testing-name", &name)) {

503 mEncoder = MediaCodec::CreateByComponentName(mCodecLooper, name);

504

505 mEncoderActivityNotify = new AMessage(kWhatEncoderActivity, mReflector);

506 mEncoder->setCallback(mEncoderActivityNotify);

507

508 err = mEncoder->configure(

509 mOutputFormat,

510 NULL /* nativeWindow */,

511 NULL /* crypto */,

512 MediaCodec::CONFIGURE_FLAG_ENCODE);

513 } else { // Find the encoders that match the requested MIME type

514 Vector<AString> matchingCodecs;

515 MediaCodecList::findMatchingCodecs(

516 outputMIME.c_str(), true /* encoder */,

517 ((mFlags & FLAG_PREFER_SOFTWARE_CODEC) ? MediaCodecList::kPreferSoftwareCodecs : 0),

518 &matchingCodecs);

519 // Try each matching codec in turn; the first one that configures successfully is kept

520 for (size_t ix = 0; ix < matchingCodecs.size(); ++ix) {

521 mEncoder = MediaCodec::CreateByComponentName(

522 mCodecLooper, matchingCodecs[ix]);

523

524 if (mEncoder == NULL) {

525 continue;

526 }

527

528 ALOGV("output format is '%s'", mOutputFormat->debugString(0).c_str());

529 // Hand the kWhatEncoderActivity message to mEncoder; mEncoder posts it whenever it has something to report to MediaCodecSource

530 mEncoderActivityNotify = new AMessage(kWhatEncoderActivity, mReflector);

531 mEncoder->setCallback(mEncoderActivityNotify);

532 // Configure the encoder

533 err = mEncoder->configure(

534 mOutputFormat,

535 NULL /* nativeWindow */,

536 NULL /* crypto */,

537 MediaCodec::CONFIGURE_FLAG_ENCODE);

538

539 if (err == OK) {

540 break;

541 }

542 mEncoder->release();

543 mEncoder = NULL;

544 }

545 }

546

547 if (err != OK) {

548 return err;

549 }

550 // Get the encoder's output format

551 mEncoder->getOutputFormat(&mOutputFormat);

552 sp<MetaData> meta = new MetaData;

553 convertMessageToMetaData(mOutputFormat, meta);

554 mMeta.lock().set(meta);

555 ...

572

573 sp<AMessage> inputFormat;

574 int32_t usingSwReadOften;

575 mSetEncoderFormat = false;

576 if (mEncoder->getInputFormat(&inputFormat) == OK) {

577 mSetEncoderFormat = true;

578 if (inputFormat->findInt32("using-sw-read-often", &usingSwReadOften)

579 && usingSwReadOften) {

580 // this is a SW encoder; signal source to allocate SW readable buffers

581 mEncoderFormat = kDefaultSwVideoEncoderFormat;

582 } else {

583 mEncoderFormat = kDefaultHwVideoEncoderFormat;

584 }

585 if (!inputFormat->findInt32("android._dataspace", &mEncoderDataSpace)) {

586 mEncoderDataSpace = kDefaultVideoEncoderDataSpace;

587 }

588 ALOGV("setting dataspace %#x, format %#x", mEncoderDataSpace, mEncoderFormat);

589 }

590 // Start the encoder

591 err = mEncoder->start();

592

593 if (err != OK) {

594 return err;

595 }

596

597 {

598 Mutexed<Output>::Locked output(mOutput);

599 output->mEncoderReachedEOS = false;

600 output->mErrorCode = OK;

601 }

602

603 return OK;

604 }

MediaCodecSource::init mainly calls initEncoder, which creates, configures, and starts the encoder.
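The selection loop in initEncoder tries candidates in order and keeps the first one that configures successfully. A sketch of that loop is given below; `pickEncoder` and the `tryConfigure` callback are hypothetical stand-ins for the create-and-configure steps.

```cpp
#include <cassert>
#include <functional>
#include <string>
#include <vector>

// Returns the name of the first candidate for which `tryConfigure`
// succeeds, or an empty string if every candidate fails.
std::string pickEncoder(const std::vector<std::string>& candidates,
                        const std::function<bool(const std::string&)>& tryConfigure) {
    assert(tryConfigure);
    for (const std::string& name : candidates) {
        if (tryConfigure(name)) {
            return name;  // stop at the first success
        }
        // On failure the real code releases the codec and tries the next one.
    }
    return std::string();
}
```

The order of the candidate list therefore acts as the preference order.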

Android currently supports only three kinds of encoder, distinguished by component-name prefix:

- c2. (CCodec.cpp, in libstagefright_ccodec)

- omx. (ACodec.cpp)

- android.filter. (MediaFilter.cpp)

This article uses the most common one, the omx encoder, as the example.
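The mapping from name prefix to codec implementation can be sketched as a small dispatch function. The enum and helper names are hypothetical; the prefixes and the case-insensitive comparison follow the three entries in the list.

```cpp
#include <cassert>
#include <cctype>
#include <cstddef>
#include <string>

enum class CodecBackend { CCodec, ACodec, MediaFilter, Unknown };

// Case-insensitive prefix test.
bool startsWithIgnoreCase(const std::string& s, const std::string& prefix) {
    if (s.size() < prefix.size()) return false;
    for (size_t i = 0; i < prefix.size(); ++i) {
        if (std::tolower(static_cast<unsigned char>(s[i])) !=
            std::tolower(static_cast<unsigned char>(prefix[i]))) {
            return false;
        }
    }
    return true;
}

// Choose the implementation from the component-name prefix.
CodecBackend backendFor(const std::string& name) {
    if (startsWithIgnoreCase(name, "c2.")) return CodecBackend::CCodec;
    if (startsWithIgnoreCase(name, "omx.")) return CodecBackend::ACodec;
    if (startsWithIgnoreCase(name, "android.filter.")) return CodecBackend::MediaFilter;
    return CodecBackend::Unknown;
}
```

Because the comparison ignores case, a component name that starts with `OMX.` maps to the same backend as one that starts with `omx.`.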

3.1.3 Creating the encoder

As noted above, the encoder is created through MediaCodec::CreateByComponentName.

/frameworks/av/media/libstagefright/MediaCodec.cpp

468 // static

469 sp<MediaCodec> MediaCodec::CreateByComponentName(

470 const sp<ALooper> &looper, const AString &name, status_t *err, pid_t pid, uid_t uid) { // Create the MediaCodec and initialize it

471 sp<MediaCodec> codec = new MediaCodec(looper, pid, uid);

472

473 const status_t ret = codec->init(name);

474 if (err != NULL) {

475 *err = ret;

476 }

477 return ret == OK ? codec : NULL; // NULL deallocates codec.

478 }

862 //static

863 sp<CodecBase> MediaCodec::GetCodecBase(const AString &name) {

864 if (name.startsWithIgnoreCase("c2.")) {

865 return CreateCCodec();

866 } else if (name.startsWithIgnoreCase("omx.")) {

867 // at this time only ACodec specifies a mime type.

868 return new ACodec;

869 } else if (name.startsWithIgnoreCase("android.filter.")) {

870 return new MediaFilter;

871 } else {

872 return NULL;

873 }

874 }

876 status_t MediaCodec::init(const AString &name) {

877 mResourceManagerService->init();

878

879 // save init parameters for reset

880 mInitName = name;

881

882 // Current video decoders do not return from OMX_FillThisBuffer

883 // quickly, violating the OpenMAX specs, until that is remedied

884 // we need to invest in an extra looper to free the main event

885 // queue.

886 // Create the codec that matches the name and assign it to this MediaCodec's mCodec

887 mCodec = GetCodecBase(name);

888 if (mCodec == NULL) {

889 return NAME_NOT_FOUND;

890 }

891

892 mCodecInfo.clear();...

924

925 if (mIsVideo) {

926 // video codec needs dedicated looper

927 if (mCodecLooper == NULL) {

928 mCodecLooper = new ALooper;

929 mCodecLooper->setName("CodecLooper");

930 mCodecLooper->start(false, false, ANDROID_PRIORITY_AUDIO);

931 }

932

933 mCodecLooper->registerHandler(mCodec);

934 } else {

935 mLooper->registerHandler(mCodec);

936 }

937

938 mLooper->registerHandler(this);

939 // Register the callback messages

940 mCodec->setCallback(

941 std::unique_ptr<CodecBase::CodecCallback>(

942 new CodecCallback(new AMessage(kWhatCodecNotify, this)))); // Get the buffer channel

943 mBufferChannel = mCodec->getBufferChannel();

944 mBufferChannel->setCallback(

945 std::unique_ptr<CodecBase::BufferCallback>(

946 new BufferCallback(new AMessage(kWhatCodecNotify, this))));

947

948 sp<AMessage> msg = new AMessage(kWhatInit, this);

949 msg->setObject("codecInfo", mCodecInfo);

950 // name may be different from mCodecInfo->getCodecName() if we stripped

951 // ".secure"

952 msg->setString("name", name);

953 ...

1460 sp<AMessage> response;

1461 err = PostAndAwaitResponse(msg, &response);

980 return err;

981 }

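The encoder is created by constructing a MediaCodec object, but the actual codec is its member variable mCodec, which is created according to the component name; for an omx encoder it is an ACodec object. When encoding video, a separate looper also has to be created. A kWhatInit message is then posted, which leads to ACodec::initiateAllocateComponent. This creates an OMX node, mOMXNode, along with a CodecObserver that receives messages from the OMX framework.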

3.1.4 Configuring the encoder

The encoder is configured in section 3.1.2 by calling MediaCodec::configure.

/frameworks/av/media/libstagefright/MediaCodec.cpp

997 status_t MediaCodec::configure(

998 const sp<AMessage> &format,

999 const sp<Surface> &nativeWindow,

1000 const sp<ICrypto> &crypto,

1001 uint32_t flags) {

1002 return configure(format, nativeWindow, crypto, NULL, flags);

1003 }

1005 status_t MediaCodec::configure(

1006 const sp<AMessage> &format,

1007 const sp<Surface> &surface,

1008 const sp<ICrypto> &crypto,

1009 const sp<IDescrambler> &descrambler,

1010 uint32_t flags) { // Build the kWhatConfigure message

1011 sp<AMessage> msg = new AMessage(kWhatConfigure, this);... // Store the arguments in msg

1053 msg->setMessage("format", format);

1054 msg->setInt32("flags", flags);

1055 msg->setObject("surface", surface);... // Post msg

1092 err = PostAndAwaitResponse(msg, &response);

1107 return err;

1108 }

The kWhatConfigure message is handled as follows:

1762 void MediaCodec::onMessageReceived(const sp<AMessage> &msg) {

2374 case kWhatConfigure:...

2383 // Extract the arguments from msg

2384 sp<RefBase> obj;

2385 CHECK(msg->findObject("surface", &obj));

2386

2387 sp<AMessage> format;

2388 CHECK(msg->findMessage("format", &format));

2389

2390 int32_t push;

2391 if (msg->findInt32("push-blank-buffers-on-shutdown", &push) && push != 0) {

2392 mFlags |= kFlagPushBlankBuffersOnShutdown;

2393 }...

2440 // mCodec is the ACodec object created by name when the encoder was created

2441 mCodec->initiateConfigureComponent(format);

2442 break;

2443 }}

This calls ACodec::initiateConfigureComponent, which in turn posts a kWhatConfigureComponent message inside ACodec. It is handled as follows:

/frameworks/av/media/libstagefright/ACodec.cpp

6575 bool ACodec::LoadedState::onMessageReceived(const sp<AMessage> &msg) {

6576 bool handled = false;

6577

6578 switch (msg->what()) {

6579 case ACodec::kWhatConfigureComponent:

6580 {

6581 onConfigureComponent(msg);

6582 handled = true;

6583 break;

6584 }

6585 }

6634 bool ACodec::LoadedState::onConfigureComponent(

6635 const sp<AMessage> &msg) {

6636 ALOGV("onConfigureComponent");

6637

6638 CHECK(mCodec->mOMXNode != NULL);

6639

6640 status_t err = OK;

6641 AString mime;

6642 if (!msg->findString("mime", &mime)) {

6643 err = BAD_VALUE;

6644 } else { // mCodec here is the ACodec object

6645 err = mCodec->configureCodec(mime.c_str(), msg);

6646 }

6647 if (err != OK) {

6648 ALOGE("[%s] configureCodec returning error %d",

6649 mCodec->mComponentName.c_str(), err);

6650

6651 mCodec->signalError(OMX_ErrorUndefined, makeNoSideEffectStatus(err));

6652 return false;

6653 }

6654 // Invoke mCallback's onComponentConfigured to return the encoder's input and output formats to MediaCodec, and from there to MediaCodecSource

6655 mCodec->mCallback->onComponentConfigured(mCodec->mInputFormat, mCodec->mOutputFormat);

6656

6657 return true;

6658 }

Finally mCodec->configureCodec configures the encoder, which comes down to setting a series of parameters on the OMX node (for audio and video, encoding and decoding alike):

mOMXNode->setParameter();

3.1.5 Starting the encoder

The encoder is started through MediaCodec::start.

/frameworks/av/media/libstagefright/MediaCodec.cpp

2254 status_t MediaCodec::start() { // Post a kWhatStart message

2255 sp<AMessage> msg = new AMessage(kWhatStart, this);...

2298

2299 sp<AMessage> response;

2300 err = PostAndAwaitResponse(msg, &response);....

2305 return err;

2306 }

2964 void MediaCodec::onMessageReceived(const sp<AMessage> &msg) {

3881 case kWhatStart:

3882 {...

3912 mCodec->initiateStart();

3913 break;

3914 }}

MediaCodec's start function likewise reaches ACodec's initiateStart through the AMessage mechanism, and initiateStart in turn uses AMessage to reach ACodec's onStart function.

/frameworks/av/media/libstagefright/ACodec.cpp

    void ACodec::LoadedState::onStart() {
        ALOGV("onStart");
        // Send a command to mOMXNode to move the encoder into the Idle state
        status_t err = mCodec->mOMXNode->sendCommand(OMX_CommandStateSet, OMX_StateIdle);
        if (err != OK) {
            mCodec->signalError(OMX_ErrorUndefined, makeNoSideEffectStatus(err));
        } else {
            mCodec->changeState(mCodec->mLoadedToIdleState);
        }
    }

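Starting the encoder therefore amounts to calling mOMXNode's sendCommand function to ask the encoder to move to the Idle state; on success ACodec switches to its LoadedToIdle state.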
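The hand-off from the Loaded state to the transitional state can be modelled with a short self-contained sketch. None of this is AOSP code: `FakeNode`, `MiniCodec`, the `State` enum and the command string are hypothetical, and the sketch only borrows the shape of onStart (send the Idle command, then either record an error or change state).

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical stand-ins for illustration only; none of these types exist in AOSP.
enum class State { Loaded, LoadedToIdle, Error };

struct FakeNode {
    bool failNext = false;              // lets a caller simulate a failing component
    std::vector<std::string> commands;  // records every command that was accepted

    // Returns 0 on success and a negative value on failure.
    int sendCommand(const std::string &cmd) {
        if (failNext) return -1;
        commands.push_back(cmd);
        return 0;
    }
};

class MiniCodec {
public:
    explicit MiniCodec(FakeNode *node) : mNode(node) {
        assert(mNode != nullptr);  // plays the role of a CHECK on the node
    }

    // Same shape as the onStart listing: ask the node to go Idle, then move to
    // the transitional state only if the command was accepted.
    void onStart() {
        if (mState != State::Loaded) return;  // start is only valid from Loaded here
        int err = mNode->sendCommand("StateSet:Idle");
        mState = (err != 0) ? State::Error : State::LoadedToIdle;
    }

    State state() const { return mState; }

private:
    FakeNode *mNode;
    State mState = State::Loaded;
};
```

Constructing a `MiniCodec` over a `FakeNode` and calling `onStart()` leaves it in `State::LoadedToIdle`; setting `failNext` on the node beforehand leaves it in `State::Error` instead.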

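3.2 Starting MediaCodecSource

As we saw in section 2.4.5 above, starting a track calls MediaCodecSource's start function, which, based on the VideoSource we set in the CameraApp, goes on to Puller.start.

Puller.start in turn calls mSource->start. Here mSource is a different object depending on the source type: for a video source it is a CameraSource, and for an audio source it is an AudioSource, as shown below: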

/frameworks/av/media/libstagefright/MediaCodecSource.cpp

    void MediaCodecSource::Puller::onMessageReceived(const sp<AMessage> &msg) {
        switch (msg->what()) {
            case kWhatStart:
            {
                sp<RefBase> obj;
                CHECK(msg->findObject("meta", &obj));

                {
                    Mutexed<Queue>::Locked queue(mQueue);
                    queue->mPulling = true;
                }
                // mSource is the media source given to the encoder:
                // CameraSource for the video track, AudioSource for the audio track
                status_t err = mSource->start(static_cast<MetaData *>(obj.get()));

                if (err == OK) {  // start reading data
                    schedulePull();
                }

                sp<AMessage> response = new AMessage;
                response->setInt32("err", err);

                sp<AReplyToken> replyID;
                CHECK(msg->senderAwaitsResponse(&replyID));
                response->postReply(replyID);
                break;
            }

So the mSource->start call above is CameraSource::start for video and AudioSource::start for audio.
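The way a single start call fans out to a camera or an audio source can be sketched with plain virtual dispatch. Everything below is a hypothetical miniature (`MiniSource`, `MiniCameraSource`, `MiniAudioSource`, `MiniPuller`, `makeSource`), not the stagefright classes, and the "pull scheduled" flag merely stands in for schedulePull().

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <utility>

// Hypothetical miniature of a media source hierarchy; not the AOSP classes.
struct MiniSource {
    virtual ~MiniSource() = default;
    virtual int start() = 0;  // 0 means OK
    virtual std::string name() const = 0;
};

struct MiniCameraSource : MiniSource {
    int start() override { return 0; }
    std::string name() const override { return "CameraSource"; }
};

struct MiniAudioSource : MiniSource {
    int start() override { return 0; }
    std::string name() const override { return "AudioSource"; }
};

// Picks the source by track type, as the text describes for video and audio.
std::unique_ptr<MiniSource> makeSource(bool isVideo) {
    if (isVideo) return std::unique_ptr<MiniSource>(new MiniCameraSource());
    return std::unique_ptr<MiniSource>(new MiniAudioSource());
}

class MiniPuller {
public:
    explicit MiniPuller(std::unique_ptr<MiniSource> source)
        : mSource(std::move(source)) {
        assert(mSource != nullptr);
    }

    // Mirrors the kWhatStart handler: mark pulling, start the source, and
    // schedule the first pull only when the source started successfully.
    int start() {
        mPulling = true;
        int err = mSource->start();
        if (err == 0) mPullScheduled = true;
        return err;
    }

    bool pulling() const { return mPulling; }
    bool pullScheduled() const { return mPullScheduled; }
    std::string sourceName() const { return mSource->name(); }

private:
    std::unique_ptr<MiniSource> mSource;
    bool mPulling = false;
    bool mPullScheduled = false;
};
```

A `MiniPuller` built from `makeSource(true)` reports "CameraSource" as its source name, and one built from `makeSource(false)` reports "AudioSource"; the puller itself never needs to know which one it holds.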

3.2.1 CameraSource::start

/frameworks/av/media/libstagefright/CameraSource.cpp

    status_t CameraSource::start(MetaData *meta) {...
        // Call startCameraRecording to begin recording
        status_t err;
        if ((err = startCameraRecording()) == OK) {
            mStarted = true;
        }

        return err;
    }

    status_t CameraSource::startCameraRecording() {
        ALOGV("startCameraRecording");
        // Reset the identity to the current thread because media server owns the
        // camera and recording is started by the applications. The applications
        // will connect to the camera in ICameraRecordingProxy::startRecording.
        int64_t token = IPCThreadState::self()->clearCallingIdentity();
        status_t err;

        // Initialize buffer queue.
        err = initBufferQueue(mVideoSize.width, mVideoSize.height, mEncoderFormat,
                (android_dataspace_t)mEncoderDataSpace,
                mNumInputBuffers > 0 ? mNumInputBuffers : 1);
        if (err != OK) {
            ALOGE("%s: Failed to initialize buffer queue: %s (err=%d)", __FUNCTION__,
                    strerror(-err), err);
            return err;
        }

        // Start data flow
        err = OK;
        if (mCameraFlags & FLAGS_HOT_CAMERA) {
            mCamera->unlock();
            mCamera.clear();
            if ((err = mCameraRecordingProxy->startRecording()) != OK) {
                ALOGE("Failed to start recording, received error: %s (%d)",
                        strerror(-err), err);
            }
        } else {  // mCamera here is the proxy object for the CameraDevice; call the Camera's startRecording
            mCamera->startRecording();
            if (!mCamera->recordingEnabled()) {
                err = -EINVAL;
                ALOGE("Failed to start recording");
            }
        }
        IPCThreadState::self()->restoreCallingIdentity(token);
        return err;
    }

CameraSource::startCameraRecording first calls initBufferQueue to set up a BufferQueue as the channel that carries the frame data, and hands the producer end of that queue to the Camera.

/frameworks/av/media/libstagefright/CameraSource.cpp

    status_t CameraSource::initBufferQueue(uint32_t width, uint32_t height,
            uint32_t format, android_dataspace dataSpace, uint32_t bufferCount) {
        ALOGV("initBufferQueue");

        if (mVideoBufferConsumer != nullptr || mVideoBufferProducer != nullptr) {
            ALOGE("%s: Buffer queue already exists", __FUNCTION__);
            return ALREADY_EXISTS;
        }

        // Create a buffer queue.
        sp<IGraphicBufferProducer> producer;
        sp<IGraphicBufferConsumer> consumer;
        BufferQueue::createBufferQueue(&producer, &consumer);

        uint32_t usage = GRALLOC_USAGE_SW_READ_OFTEN;
        if (format == HAL_PIXEL_FORMAT_IMPLEMENTATION_DEFINED) {
            usage = GRALLOC_USAGE_HW_VIDEO_ENCODER;
        }

        bufferCount += kConsumerBufferCount;

        mVideoBufferConsumer = new BufferItemConsumer(consumer, usage, bufferCount);
        mVideoBufferConsumer->setName(String8::format("StageFright-CameraSource"));
        mVideoBufferProducer = producer;
        ...
        // Hand the producer to the camera
        res = mCamera->setVideoTarget(mVideoBufferProducer);
        if (res != OK) {
            ALOGE("%s: Failed to set video target: %s (%d)", __FUNCTION__, strerror(-res), res);
            return res;
        }

        // Create memory heap to store buffers as VideoNativeMetadata.
        createVideoBufferMemoryHeap(sizeof(VideoNativeMetadata), bufferCount);
        // Create a BufferQueueListener with the consumer as its argument and start it
        mBufferQueueListener = new BufferQueueListener(mVideoBufferConsumer, this);
        res = mBufferQueueListener->run("CameraSource-BufferQueueListener");
        if (res != OK) {
            ALOGE("%s: Could not run buffer queue listener thread: %s (%d)", __FUNCTION__,
                    strerror(-res), res);
            return res;
        }

        return OK;
    }
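The producer/consumer split that initBufferQueue sets up can be sketched in a few lines of standalone C++. This is a deliberately simplified model, not the real BufferQueue: `MiniBufferQueue` and `recordFrames` are hypothetical names, frames are plain integers, and the listener is invoked synchronously from `queueBuffer`, whereas the listing above runs its BufferQueueListener as a separate thread.

```cpp
#include <cassert>
#include <deque>
#include <functional>
#include <utility>
#include <vector>

// Hypothetical single-threaded miniature of a buffer queue.
class MiniBufferQueue {
public:
    using FrameListener = std::function<void()>;

    explicit MiniBufferQueue(int slotCount) : mFreeSlots(slotCount) {
        assert(slotCount > 0);
    }

    void setFrameAvailableListener(FrameListener listener) {
        mListener = std::move(listener);
    }

    // Producer: claim a free slot to fill; returns false when none is free.
    bool dequeueBuffer() {
        if (mFreeSlots == 0) return false;
        --mFreeSlots;
        return true;
    }

    // Producer: hand over a filled frame and notify the consumer.
    void queueBuffer(int frameId) {
        mFilled.push_back(frameId);
        if (mListener) mListener();
    }

    // Consumer: take the oldest filled frame; returns false when none is pending.
    bool acquireBuffer(int *frameId) {
        if (mFilled.empty()) return false;
        *frameId = mFilled.front();
        mFilled.pop_front();
        return true;
    }

    // Consumer: give the slot back so the producer can reuse it.
    void releaseBuffer() { ++mFreeSlots; }

    int freeSlots() const { return mFreeSlots; }

private:
    int mFreeSlots;
    std::deque<int> mFilled;
    FrameListener mListener;
};

// Pushes frameCount frames through the queue and returns the ids the consumer saw.
std::vector<int> recordFrames(int slotCount, int frameCount) {
    MiniBufferQueue queue(slotCount);
    std::vector<int> consumed;
    queue.setFrameAvailableListener([&queue, &consumed] {
        int id = 0;
        if (queue.acquireBuffer(&id)) {
            consumed.push_back(id);  // a real consumer would pass this to the encoder
            queue.releaseBuffer();
        }
    });
    for (int i = 0; i < frameCount; ++i) {
        if (queue.dequeueBuffer()) queue.queueBuffer(i);
    }
    return consumed;
}
```

Calling `recordFrames(2, 5)` walks five frames through a two-slot queue. Because the consumer releases each slot as soon as it has taken the frame, the producer never runs out of slots in this model.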