本文主要是介绍微调codebert、unixcoder、grapghcodebert完成漏洞检测代码,希望对大家解决编程问题提供一定的参考价值,需要的开发者们随着小编来一起学习吧!

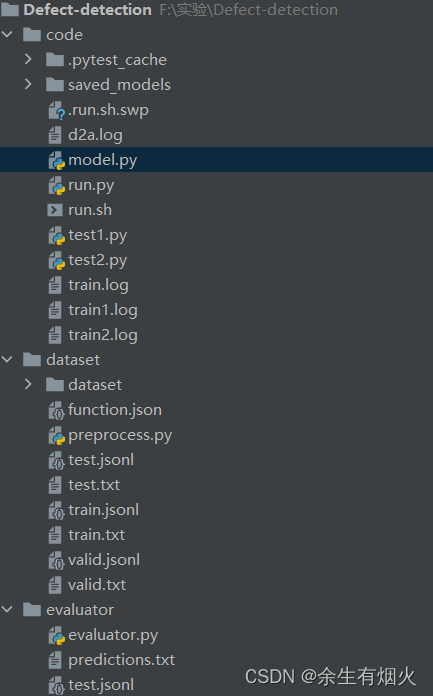

文件结构如下所示:

mode.py

# Copyright (c) Microsoft Corporation.

# Licensed under the MIT License.

import torch

import torch.nn as nn

import torch

from torch.autograd import Variable

import copy

from torch.nn import CrossEntropyLoss, MSELossclass Model(nn.Module): def __init__(self, encoder,config,tokenizer,args):super(Model, self).__init__()self.encoder = encoderself.config=configself.tokenizer=tokenizerself.args=args# Define dropout layer, dropout_probability is taken from args.self.dropout = nn.Dropout(args.dropout_probability)def forward(self, input_ids=None,labels=None): outputs=self.encoder(input_ids,attention_mask=input_ids.ne(1))[0]# Apply dropoutoutputs = self.dropout(outputs)logits=outputsprob=torch.sigmoid(logits)if labels is not None:labels=labels.float()loss=torch.log(prob[:,0]+1e-10)*labels+torch.log((1-prob)[:,0]+1e-10)*(1-labels)loss=-loss.mean()return loss,probelse:return probrun.py

# coding=utf-8

# Copyright 2018 The Google AI Language Team Authors and The HuggingFace Inc. team.

# Copyright (c) 2018, NVIDIA CORPORATION. All rights reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

"""

Fine-tuning the library models for language modeling on a text file (GPT, GPT-2, BERT, RoBERTa).

GPT and GPT-2 are fine-tuned using a causal language modeling (CLM) loss while BERT and RoBERTa are fine-tuned

using a masked language modeling (MLM) loss.

"""from __future__ import absolute_import, division, print_functionimport argparse

import glob

import logging

import os

import pickle

import random

import re

import shutil

import time

import numpy as np

import torch

from torch.utils.data import DataLoader, Dataset, SequentialSampler, RandomSampler,TensorDataset

from torch.utils.data.distributed import DistributedSampler

import json

try:from torch.utils.tensorboard import SummaryWriter

except:from tensorboardX import SummaryWriterfrom tqdm import tqdm, trange

import multiprocessing

from model import Model

from sklearn.metrics import precision_score, recall_score, f1_score,accuracy_score

cpu_cont = multiprocessing.cpu_count()

from transformers import (WEIGHTS_NAME, AdamW, get_linear_schedule_with_warmup,BertConfig, BertForMaskedLM, BertTokenizer, BertForSequenceClassification,GPT2Config, GPT2LMHeadModel, GPT2Tokenizer,OpenAIGPTConfig, OpenAIGPTLMHeadModel, OpenAIGPTTokenizer,RobertaConfig, RobertaForSequenceClassification, RobertaTokenizer,DistilBertConfig, DistilBertForMaskedLM, DistilBertForSequenceClassification, DistilBertTokenizer)logger = logging.getLogger(__name__)MODEL_CLASSES = {'gpt2': (GPT2Config, GPT2LMHeadModel, GPT2Tokenizer),'openai-gpt': (OpenAIGPTConfig, OpenAIGPTLMHeadModel, OpenAIGPTTokenizer),'bert': (BertConfig, BertForSequenceClassification, BertTokenizer),'roberta': (RobertaConfig, RobertaForSequenceClassification, RobertaTokenizer),'distilbert': (DistilBertConfig, DistilBertForSequenceClassification, DistilBertTokenizer)

}class InputFeatures(object):"""A single training/test features for a example."""def __init__(self,input_tokens,input_ids,idx,label,):self.input_tokens = input_tokensself.input_ids = input_idsself.idx=str(idx)self.label=labeldef convert_examples_to_features(js,tokenizer,args):#sourcecode=' '.join(js['func'].split())code_tokens=tokenizer.tokenize(code)[:args.block_size-2]source_tokens =[tokenizer.cls_token]+code_tokens+[tokenizer.sep_token]source_ids = tokenizer.convert_tokens_to_ids(source_tokens)padding_length = args.block_size - len(source_ids)source_ids+=[tokenizer.pad_token_id]*padding_lengthreturn InputFeatures(source_tokens,source_ids,js['idx'],js['target'])class TextDataset(Dataset):def __init__(self, tokenizer, args, file_path=None):self.examples = []with open(file_path) as f:for line in f:js=json.loads(line.strip())self.examples.append(convert_examples_to_features(js,tokenizer,args))if 'train' in file_path:for idx, example in enumerate(self.examples[:3]):logger.info("*** Example ***")logger.info("idx: {}".format(idx))logger.info("label: {}".format(example.label))logger.info("input_tokens: {}".format([x.replace('\u0120','_') for x in example.input_tokens]))logger.info("input_ids: {}".format(' '.join(map(str, example.input_ids))))def __len__(self):return len(self.examples)def __getitem__(self, i): return torch.tensor(self.examples[i].input_ids),torch.tensor(self.examples[i].label)def set_seed(seed=42):random.seed(seed)os.environ['PYHTONHASHSEED'] = str(seed)np.random.seed(seed)torch.manual_seed(seed)torch.cuda.manual_seed(seed)torch.backends.cudnn.deterministic = Truedef train(args, train_dataset, model, tokenizer):""" Train the model """ args.train_batch_size = args.per_gpu_train_batch_size * max(1, args.n_gpu)train_sampler = RandomSampler(train_dataset) if args.local_rank == -1 else DistributedSampler(train_dataset)train_dataloader = DataLoader(train_dataset, sampler=train_sampler, batch_size=args.train_batch_size,num_workers=4,pin_memory=True)args.max_steps=args.epoch*len( train_dataloader)args.save_steps=len( train_dataloader)args.warmup_steps=len( train_dataloader)args.logging_steps=len( train_dataloader)args.num_train_epochs=args.epochmodel.to(args.device)# Prepare optimizer and schedule (linear warmup and decay)no_decay = ['bias', 'LayerNorm.weight']optimizer_grouped_parameters = [{'params': [p for n, p in model.named_parameters() if not any(nd in n for nd in no_decay)],'weight_decay': args.weight_decay},{'params': [p for n, p in model.named_parameters() if any(nd in n for nd in no_decay)], 'weight_decay': 0.0}]num_params = sum(p.numel() for p in model.parameters())trainable_param = sum(p.numel() for p in model.parameters() if p.requires_grad )logger.info(f"Number of model parameters: {num_params}")logger.info(f"Number of model trainable_param: {trainable_param}")optimizer = AdamW(optimizer_grouped_parameters, lr=args.learning_rate, eps=args.adam_epsilon)scheduler = get_linear_schedule_with_warmup(optimizer, num_warmup_steps=args.max_steps*0.1,num_training_steps=args.max_steps)if args.fp16:try:from apex import ampexcept ImportError:raise ImportError("Please install apex from https://www.github.com/nvidia/apex to use fp16 training.")model, optimizer = amp.initialize(model, optimizer, opt_level=args.fp16_opt_level)# multi-gpu training (should be after apex fp16 initialization)if args.n_gpu > 1:model = torch.nn.DataParallel(model)# Distributed training (should be after apex fp16 initialization)if args.local_rank != -1:model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[args.local_rank],output_device=args.local_rank,find_unused_parameters=True)checkpoint_last = os.path.join(args.output_dir, 'checkpoint-last')scheduler_last = os.path.join(checkpoint_last, 'scheduler.pt')optimizer_last = os.path.join(checkpoint_last, 'optimizer.pt')if os.path.exists(scheduler_last):scheduler.load_state_dict(torch.load(scheduler_last))if os.path.exists(optimizer_last):optimizer.load_state_dict(torch.load(optimizer_last))# Train!logger.info("***** Running training *****")logger.info(" Num examples = %d", len(train_dataset))logger.info(" Num Epochs = %d", args.num_train_epochs)logger.info(" Instantaneous batch size per GPU = %d", args.per_gpu_train_batch_size)logger.info(" Total train batch size (w. parallel, distributed & accumulation) = %d",args.train_batch_size * args.gradient_accumulation_steps * (torch.distributed.get_world_size() if args.local_rank != -1 else 1))logger.info(" Gradient Accumulation steps = %d", args.gradient_accumulation_steps)logger.info(" Total optimization steps = %d", args.max_steps)global_step = args.start_steptr_loss, logging_loss,avg_loss,tr_nb,tr_num,train_loss = 0.0, 0.0,0.0,0,0,0best_mrr=0.0best_acc=0.0# model.resize_token_embeddings(len(tokenizer))model.zero_grad()# Initialize early stopping parameters at the start of trainingearly_stopping_counter = 0best_loss = Nonefor idx in range(args.start_epoch, int(args.num_train_epochs)):bar = tqdm(train_dataloader,total=len(train_dataloader))tr_num=0train_loss=0for step, batch in enumerate(bar):inputs = batch[0].to(args.device) labels=batch[1].to(args.device) model.train()loss,logits = model(inputs,labels)preds = logits[:, 0] > 0.5if args.n_gpu > 1:loss = loss.mean() # mean() to average on multi-gpu parallel trainingif args.gradient_accumulation_steps > 1:loss = loss / args.gradient_accumulation_stepsif args.fp16:with amp.scale_loss(loss, optimizer) as scaled_loss:scaled_loss.backward()torch.nn.utils.clip_grad_norm_(amp.master_params(optimizer), args.max_grad_norm)else:loss.backward()torch.nn.utils.clip_grad_norm_(model.parameters(), args.max_grad_norm)tr_loss += loss.item()tr_num+=1train_loss+=loss.item()if avg_loss==0:avg_loss=tr_lossavg_loss=round(train_loss/tr_num,5)bar.set_description("epoch {} loss {}".format(idx,avg_loss))if (step + 1) % args.gradient_accumulation_steps == 0:optimizer.step()optimizer.zero_grad()scheduler.step() global_step += 1output_flag=Trueavg_loss=round(np.exp((tr_loss - logging_loss) /(global_step- tr_nb)),4)if args.local_rank in [-1, 0] and args.logging_steps > 0 and global_step % args.logging_steps == 0:logging_loss = tr_losstr_nb=global_stepif args.local_rank in [-1, 0] and args.save_steps > 0 and global_step % args.save_steps == 0:if args.local_rank == -1 and args.evaluate_during_training: # Only evaluate when single GPU otherwise metrics may not average wellresults = evaluate(args, model, tokenizer,eval_when_training=True)for key, value in results.items():logger.info(" %s = %s", key, round(value,4)) # Save model checkpointif results['eval_acc']>best_acc:best_acc=results['eval_acc']logger.info(" "+"*"*20) logger.info(" Best acc:%s",round(best_acc,4))logger.info(" "+"*"*20) checkpoint_prefix = 'checkpoint-best-acc'output_dir = os.path.join(args.output_dir, '{}'.format(checkpoint_prefix)) if not os.path.exists(output_dir):os.makedirs(output_dir) model_to_save = model.module if hasattr(model,'module') else modeloutput_dir = os.path.join(output_dir, '{}'.format('model.bin')) torch.save(model_to_save.state_dict(), output_dir)logger.info("Saving model checkpoint to %s", output_dir)# Calculate average loss for the epochavg_loss = train_loss / tr_num# Check for early stopping conditionif args.early_stopping_patience is not None:if best_loss is None or avg_loss < best_loss - args.min_loss_delta:best_loss = avg_lossearly_stopping_counter = 0else:early_stopping_counter += 1if early_stopping_counter >= args.early_stopping_patience:logger.info("Early stopping")break # Exit the loop earlydef evaluate(args, model, tokenizer,eval_when_training=False):# Loop to handle MNLI double evaluation (matched, mis-matched)eval_output_dir = args.output_direval_dataset = TextDataset(tokenizer, args,args.eval_data_file)if not os.path.exists(eval_output_dir) and args.local_rank in [-1, 0]:os.makedirs(eval_output_dir)args.eval_batch_size = args.per_gpu_eval_batch_size * max(1, args.n_gpu)# Note that DistributedSampler samples randomlyeval_sampler = SequentialSampler(eval_dataset) if args.local_rank == -1 else DistributedSampler(eval_dataset)eval_dataloader = DataLoader(eval_dataset, sampler=eval_sampler, batch_size=args.eval_batch_size,num_workers=4,pin_memory=True)# multi-gpu evaluateif args.n_gpu > 1 and eval_when_training is False:model = torch.nn.DataParallel(model)# Eval!logger.info("***** Running evaluation *****")logger.info(" Num examples = %d", len(eval_dataset))logger.info(" Batch size = %d", args.eval_batch_size)eval_loss = 0.0nb_eval_steps = 0model.eval()logits=[]labels=[]for batch in eval_dataloader:inputs = batch[0].to(args.device)label=batch[1].to(args.device)with torch.no_grad():lm_loss,logit = model(inputs,label)eval_loss += lm_loss.mean().item()logits.append(logit.cpu().numpy())labels.append(label.cpu().numpy())nb_eval_steps += 1logits=np.concatenate(logits,0)labels=np.concatenate(labels,0)preds=logits[:,0]>0.5eval_acc=np.mean(labels==preds)precision = precision_score(labels, preds)recall = recall_score(labels, preds)f1 = f1_score(labels, preds)eval_loss = eval_loss / nb_eval_stepsperplexity = torch.tensor(eval_loss)logger.info(f"test_Evaluation Accuracy: {eval_acc}\n")logger.info(f"test_Precision: {precision}")logger.info(f"test_Recall: {recall}")logger.info(f"test_F1 Score: {f1}")result = {"eval_loss": float(perplexity),"eval_acc":round(eval_acc,4),}end_time = time.time()elapsed_time = end_time - start_time# Log timing informationlogger.info(f"{ elapsed_time:.2f}")return resultdef test(args, model, tokenizer):# Loop to handle MNLI double evaluation (matched, mis-matched)eval_dataset = TextDataset(tokenizer, args,args.test_data_file)args.eval_batch_size = args.per_gpu_eval_batch_size * max(1, args.n_gpu)# Note that DistributedSampler samples randomlyeval_sampler = SequentialSampler(eval_dataset) if args.local_rank == -1 else DistributedSampler(eval_dataset)eval_dataloader = DataLoader(eval_dataset, sampler=eval_sampler, batch_size=args.eval_batch_size)# multi-gpu evaluateif args.n_gpu > 1:model = torch.nn.DataParallel(model)# Eval!logger.info("***** Running Test *****")logger.info(" Num examples = %d", len(eval_dataset))logger.info(" Batch size = %d", args.eval_batch_size)eval_loss = 0.0nb_eval_steps = 0model.eval()logits=[] labels=[]for batch in tqdm(eval_dataloader,total=len(eval_dataloader)):inputs = batch[0].to(args.device) label=batch[1].to(args.device) with torch.no_grad():logit = model(inputs)logits.append(logit.cpu().numpy())labels.append(label.cpu().numpy())logits=np.concatenate(logits,0)labels=np.concatenate(labels,0)preds=logits[:,0]>0.5with open(os.path.join(args.output_dir,"predictions.txt"),'w') as f:for example,pred in zip(eval_dataset.examples,preds):if pred:f.write(example.idx+'\t1\n')else:f.write(example.idx+'\t0\n') def main():parser = argparse.ArgumentParser()## Required parametersparser.add_argument("--train_data_file", default=None, type=str, required=True,help="The input training data file (a text file).")parser.add_argument("--output_dir", default=None, type=str, required=True,help="The output directory where the model predictions and checkpoints will be written.")## Other parametersparser.add_argument("--eval_data_file", default=None, type=str,help="An optional input evaluation data file to evaluate the perplexity on (a text file).")parser.add_argument("--test_data_file", default=None, type=str,help="An optional input evaluation data file to evaluate the perplexity on (a text file).")parser.add_argument("--model_type", default="bert", type=str,help="The model architecture to be fine-tuned.")parser.add_argument("--model_name_or_path", default=None, type=str,help="The model checkpoint for weights initialization.")parser.add_argument("--mlm", action='store_true',help="Train with masked-language modeling loss instead of language modeling.")parser.add_argument("--mlm_probability", type=float, default=0.15,help="Ratio of tokens to mask for masked language modeling loss")parser.add_argument("--config_name", default="", type=str,help="Optional pretrained config name or path if not the same as model_name_or_path")parser.add_argument("--tokenizer_name", default="", type=str,help="Optional pretrained tokenizer name or path if not the same as model_name_or_path")parser.add_argument("--cache_dir", default="", type=str,help="Optional directory to store the pre-trained models downloaded from s3 (instread of the default one)")parser.add_argument("--block_size", default=-1, type=int,help="Optional input sequence length after tokenization.""The training dataset will be truncated in block of this size for training.""Default to the model max input length for single sentence inputs (take into account special tokens).")parser.add_argument("--do_train", action='store_true',help="Whether to run training.")parser.add_argument("--do_eval", action='store_true',help="Whether to run eval on the dev set.")parser.add_argument("--do_test", action='store_true',help="Whether to run eval on the dev set.") parser.add_argument("--evaluate_during_training", action='store_true',help="Run evaluation during training at each logging step.")parser.add_argument("--do_lower_case", action='store_true',help="Set this flag if you are using an uncased model.")parser.add_argument("--train_batch_size", default=4, type=int,help="Batch size per GPU/CPU for training.")parser.add_argument("--eval_batch_size", default=4, type=int,help="Batch size per GPU/CPU for evaluation.")parser.add_argument('--gradient_accumulation_steps', type=int, default=1,help="Number of updates steps to accumulate before performing a backward/update pass.")parser.add_argument("--learning_rate", default=5e-5, type=float,help="The initial learning rate for Adam.")parser.add_argument("--weight_decay", default=0.0, type=float,help="Weight deay if we apply some.")parser.add_argument("--adam_epsilon", default=1e-8, type=float,help="Epsilon for Adam optimizer.")parser.add_argument("--max_grad_norm", default=1.0, type=float,help="Max gradient norm.")parser.add_argument("--num_train_epochs", default=1.0, type=float,help="Total number of training epochs to perform.")parser.add_argument("--max_steps", default=-1, type=int,help="If > 0: set total number of training steps to perform. Override num_train_epochs.")parser.add_argument("--warmup_steps", default=0, type=int,help="Linear warmup over warmup_steps.")parser.add_argument('--logging_steps', type=int, default=50,help="Log every X updates steps.")parser.add_argument('--save_steps', type=int, default=50,help="Save checkpoint every X updates steps.")parser.add_argument('--save_total_limit', type=int, default=None,help='Limit the total amount of checkpoints, delete the older checkpoints in the output_dir, does not delete by default')parser.add_argument("--eval_all_checkpoints", action='store_true',help="Evaluate all checkpoints starting with the same prefix as model_name_or_path ending and ending with step number")parser.add_argument("--no_cuda", action='store_true',help="Avoid using CUDA when available")parser.add_argument('--overwrite_output_dir', action='store_true',help="Overwrite the content of the output directory")parser.add_argument('--overwrite_cache', action='store_true',help="Overwrite the cached training and evaluation sets")parser.add_argument('--seed', type=int, default=42,help="random seed for initialization")parser.add_argument('--epoch', type=int, default=42,help="random seed for initialization")parser.add_argument('--fp16', action='store_true',help="Whether to use 16-bit (mixed) precision (through NVIDIA apex) instead of 32-bit")parser.add_argument('--fp16_opt_level', type=str, default='O1',help="For fp16: Apex AMP optimization level selected in ['O0', 'O1', 'O2', and 'O3'].""See details at https://nvidia.github.io/apex/amp.html")parser.add_argument("--local_rank", type=int, default=-1,help="For distributed training: local_rank")parser.add_argument('--server_ip', type=str, default='', help="For distant debugging.")parser.add_argument('--server_port', type=str, default='', help="For distant debugging.")# Add early stopping parameters and dropout probability parametersparser.add_argument("--early_stopping_patience", type=int, default=None,help="Number of epochs with no improvement after which training will be stopped.")parser.add_argument("--min_loss_delta", type=float, default=0.001,help="Minimum change in the loss required to qualify as an improvement.")parser.add_argument('--dropout_probability', type=float, default=0, help='dropout probability')args = parser.parse_args()# Setup distant debugging if neededif args.server_ip and args.server_port:# Distant debugging - see https://code.visualstudio.com/docs/python/debugging#_attach-to-a-local-scriptimport ptvsdprint("Waiting for debugger attach")ptvsd.enable_attach(address=(args.server_ip, args.server_port), redirect_output=True)ptvsd.wait_for_attach()# Setup CUDA, GPU & distributed trainingif args.local_rank == -1 or args.no_cuda:device = torch.device("cuda" if torch.cuda.is_available() and not args.no_cuda else "cpu")args.n_gpu = torch.cuda.device_count()else: # Initializes the distributed backend which will take care of sychronizing nodes/GPUstorch.cuda.set_device(args.local_rank)device = torch.device("cuda", args.local_rank)torch.distributed.init_process_group(backend='nccl')args.n_gpu = 1args.device = deviceargs.per_gpu_train_batch_size=args.train_batch_size//args.n_gpuargs.per_gpu_eval_batch_size=args.eval_batch_size//args.n_gpu# Setup logginglogging.basicConfig(format='%(asctime)s - %(levelname)s - %(name)s - %(message)s',datefmt='%m/%d/%Y %H:%M:%S',level=logging.INFO if args.local_rank in [-1, 0] else logging.WARN)logger.warning("Process rank: %s, device: %s, n_gpu: %s, distributed training: %s, 16-bits training: %s",args.local_rank, device, args.n_gpu, bool(args.local_rank != -1), args.fp16)# Set seedset_seed(args.seed)# Load pretrained model and tokenizerif args.local_rank not in [-1, 0]:torch.distributed.barrier() # Barrier to make sure only the first process in distributed training download model & vocabargs.start_epoch = 0args.start_step = 0checkpoint_last = os.path.join(args.output_dir, 'checkpoint-last')if os.path.exists(checkpoint_last) and os.listdir(checkpoint_last):args.model_name_or_path = os.path.join(checkpoint_last, 'pytorch_model.bin')args.config_name = os.path.join(checkpoint_last, 'config.json')idx_file = os.path.join(checkpoint_last, 'idx_file.txt')with open(idx_file, encoding='utf-8') as idxf:args.start_epoch = int(idxf.readlines()[0].strip()) + 1step_file = os.path.join(checkpoint_last, 'step_file.txt')if os.path.exists(step_file):with open(step_file, encoding='utf-8') as stepf:args.start_step = int(stepf.readlines()[0].strip())logger.info("reload model from {}, resume from {} epoch".format(checkpoint_last, args.start_epoch))config_class, model_class, tokenizer_class = MODEL_CLASSES[args.model_type]config = config_class.from_pretrained(args.config_name if args.config_name else args.model_name_or_path,cache_dir=args.cache_dir if args.cache_dir else None)config.num_labels=1tokenizer = tokenizer_class.from_pretrained(args.tokenizer_name,do_lower_case=args.do_lower_case,cache_dir=args.cache_dir if args.cache_dir else None)if args.block_size <= 0:args.block_size = tokenizer.max_len_single_sentence # Our input block size will be the max possible for the modelargs.block_size = min(args.block_size, tokenizer.max_len_single_sentence)if args.model_name_or_path:model = model_class.from_pretrained(args.model_name_or_path,from_tf=bool('.ckpt' in args.model_name_or_path),config=config,cache_dir=args.cache_dir if args.cache_dir else None) else:model = model_class(config)model=Model(model,config,tokenizer,args)if args.local_rank == 0:torch.distributed.barrier() # End of barrier to make sure only the first process in distributed training download model & vocablogger.info("Training/evaluation parameters %s", args)# Trainingif args.do_train:if args.local_rank not in [-1, 0]:torch.distributed.barrier() # Barrier to make sure only the first process in distributed training process the dataset, and the others will use the cachetrain_dataset = TextDataset(tokenizer, args,args.train_data_file)if args.local_rank == 0:torch.distributed.barrier()train(args, train_dataset, model, tokenizer)# Evaluationresults = {}if args.do_eval and args.local_rank in [-1, 0]:checkpoint_prefix = 'checkpoint-best-acc/model.bin'output_dir = os.path.join(args.output_dir, '{}'.format(checkpoint_prefix)) model.load_state_dict(torch.load(output_dir)) model.to(args.device)result=evaluate(args, model, tokenizer)logger.info("***** Eval results *****")for key in sorted(result.keys()):logger.info(" %s = %s", key, str(round(result[key],4)))if args.do_test and args.local_rank in [-1, 0]:checkpoint_prefix = 'checkpoint-best-acc/model.bin'output_dir = os.path.join(args.output_dir, '{}'.format(checkpoint_prefix)) model.load_state_dict(torch.load(output_dir)) model.to(args.device)test(args, model, tokenizer)return resultsif __name__ == "__main__":start_time = time.time()main()run.sh

python run.pq \--output_dir=./saved_models \--model_type=roberta \--tokenizer_name=microsoft/unixcoder-base \--model_name_or_path=microsoft/unixcoder-base \--do_train \--train_data_file=/data/code/codebert/dataset/dataset/d2a/d2a_train.json \--eval_data_file=/data/code/codebert/dataset/dataset/d2a/d2a_test.json \--epoch 20 \--block_size 400 \--train_batch_size 4 \--eval_batch_size 8 \--learning_rate 2e-6 \--max_grad_norm 1.0 \--evaluate_during_training \--seed 123213 2>&1 | tee ada.log

这篇关于微调codebert、unixcoder、grapghcodebert完成漏洞检测代码的文章就介绍到这儿,希望我们推荐的文章对编程师们有所帮助!