Week N4: Chinese Text Classification with PyTorch

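- 🍨 This post is a study log from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊 | tutoring and custom projects available

- 🚀 Source: K同学的学习圈子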

目录

一、准备工作

1.任务说明

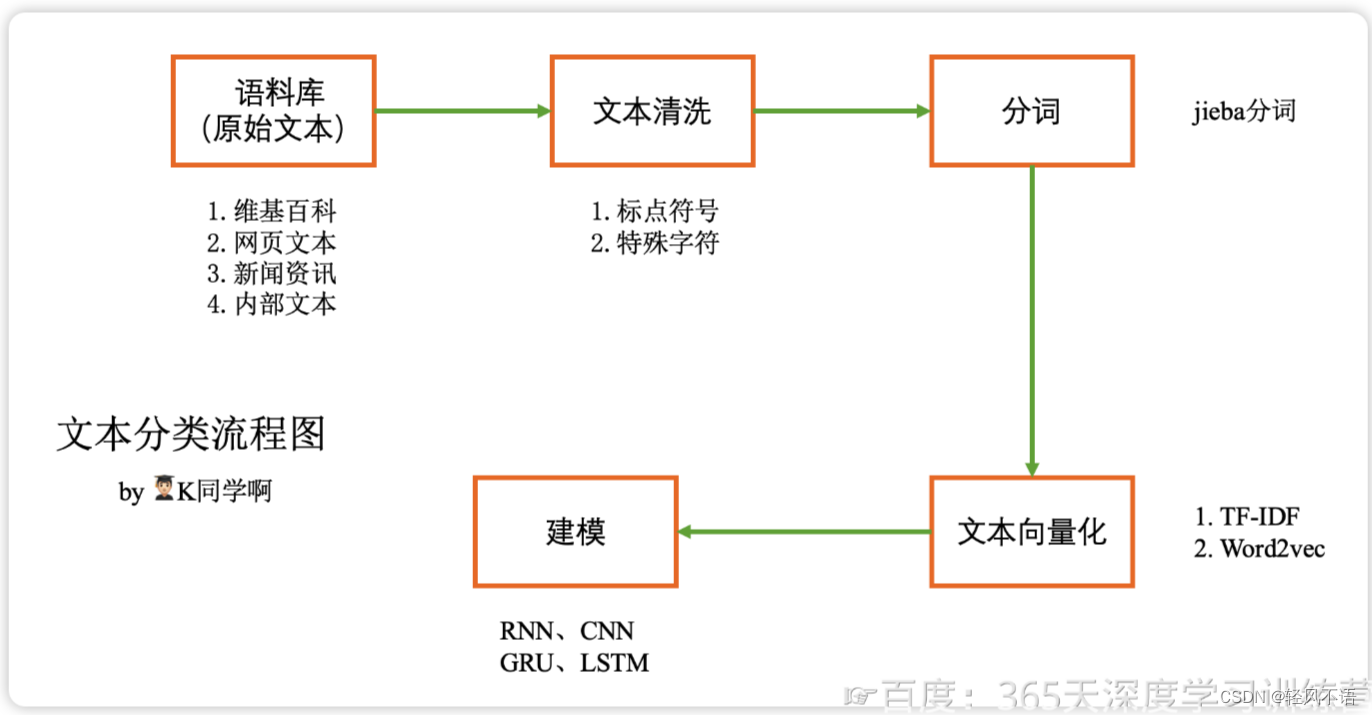

文本分类流程图:

2.加载数据

编辑 二、数据的预处理

1.构建词典

2.生成数据批次和迭代器

三、模型构建

四、训练模型

五、小结

一、准备工作

1.任务说明

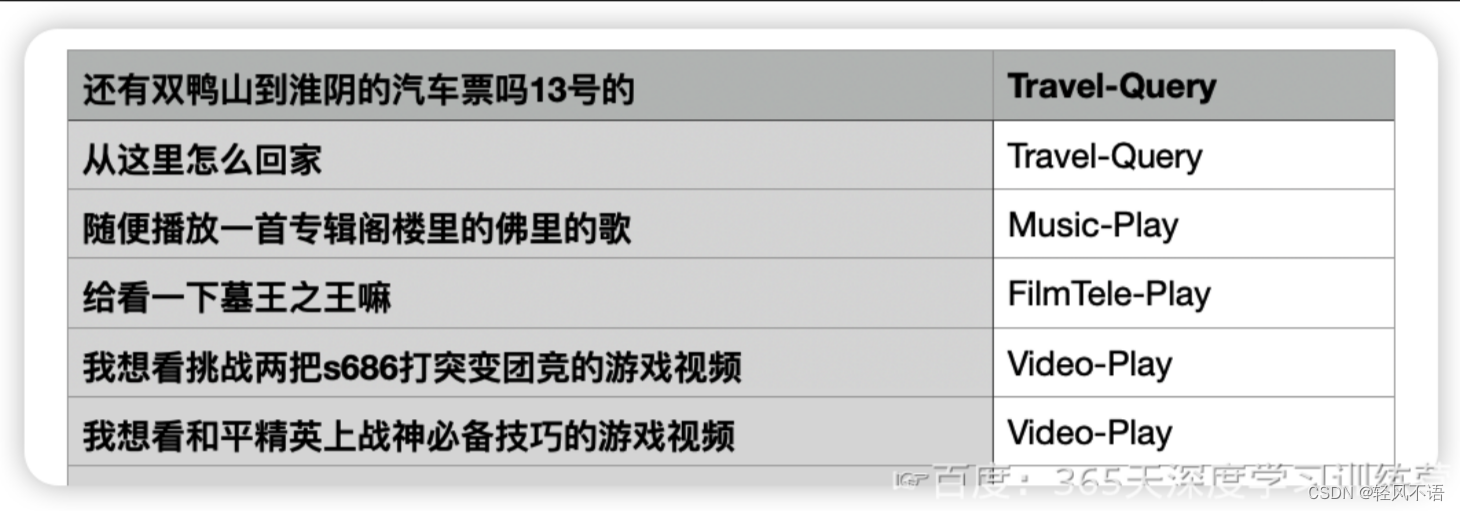

本次将使用PyTorch实现中文文本分类。主要代码与N1周基本一致,不同的是本次任务中使用了本地的中文数据,数据示例如下:

本周任务:

1.学习如何进行中文本文预处理

2.根据文本内容(第1列)预测文本标签(第2列)

进阶任务:

1.尝试根据第一周的内容独立实现,尽可能的不看本文的代码

2.构建更复杂的网络模型,将准确率提升至91%

文本分类流程图:

2.加载数据

import torch
import torch.nn as nn
import torchvision
from torchvision import transforms, datasets
import os, PIL, pathlib, warnings

warnings.filterwarnings("ignore")  # suppress warning messages

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(device)

import pandas as pd

# Load the custom Chinese dataset (tab-separated, no header row)
train_data = pd.read_csv('./train.csv', sep='\t', header=None)

# Build a data iterator that yields (text, label) pairs
def custom_data_iter(texts, labels):
    for x, y in zip(texts, labels):
        yield x, y

train_iter = custom_data_iter(train_data[0].values[:], train_data[1].values[:])

Output:
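One detail worth keeping in mind: the data iterator defined above is a Python generator, so it can be consumed only once. The sketch below shows the effect with two illustrative rows (the texts appear later in this post; pairing them with these labels is an assumption made for the example, and `pair_iter` is a hypothetical name). This is why the iterator is created a second time in Section IV, after the vocabulary has been built.

```python
def pair_iter(texts, labels):
    """Yield (text, label) pairs, mirroring the iterator defined above."""
    for x, y in zip(texts, labels):
        yield x, y

# Illustrative rows only; the real data comes from train.csv.
texts = ["还有双鸭山到淮阴的汽车票吗13号的", "我想看和平精英上战神必备技巧的游戏视频"]
labels = ["Travel-Query", "Video-Play"]

it = pair_iter(texts, labels)
first_pass = list(it)   # consumes the generator
second_pass = list(it)  # nothing left to yield

print(len(first_pass), len(second_pass))
```

Any step that needs the pairs again has to call the generator function once more or keep the pairs in a list.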

II. Data preprocessing

1. Building the vocabulary

#构建词典

from torchtext.data.utils import get_tokenizer

from torchtext.vocab import build_vocab_from_iterator

import jieba#中文分词方法

tokenizer = jieba.lcut

def yield_tokens(data_iter):for text,_ in data_iter:yield tokenizer(text)vocab = build_vocab_from_iterator(yield_tokens(train_iter),specials=["<unk>"])

vocab.set_default_index(vocab["<unk>"]) #设置默认索引,如果找不到单词,则会选择默认索引

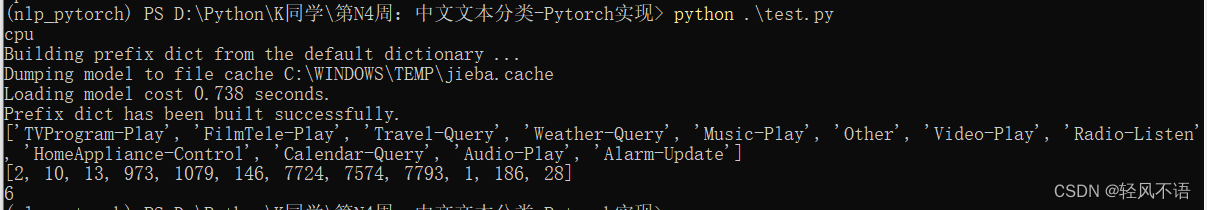

vocab(['我','想','看','和平','精英','上','战神','必备','技巧','的','游戏','视频'])

label_name = list(set(train_data[1].values[:]))

print(label_name)

text_pipeline = lambda x : vocab(tokenizer(x))

label_pipeline = lambda x : label_name.index(x)print(text_pipeline('我想看和平精英上战神必备技巧的游戏视频'))

print(label_pipeline('Video-Play'))

输出:
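To make the role of the `<unk>` default index concrete, here is a simplified, pure-Python stand-in for the vocabulary lookup. It is not torchtext's implementation: `build_vocab` and `lookup` are hypothetical helpers, and the toy sentences are assumed to be already segmented rather than passed through jieba.

```python
from collections import Counter

def build_vocab(token_lists, unk="<unk>"):
    """Map tokens to indices: <unk> first, then tokens by descending frequency."""
    counts = Counter(tok for toks in token_lists for tok in toks)
    # Sort by frequency, breaking ties by the token itself for a stable order.
    ordered = sorted(counts, key=lambda t: (-counts[t], t))
    return {tok: i for i, tok in enumerate([unk] + ordered)}

def lookup(stoi, tokens, unk="<unk>"):
    """Return indices, falling back to the <unk> index for unseen tokens."""
    return [stoi.get(tok, stoi[unk]) for tok in tokens]

# Pre-segmented toy sentences (segmentation is assumed, not produced by jieba).
corpus = [["我", "想", "看", "游戏", "视频"], ["我", "想", "听", "音乐"]]
stoi = build_vocab(corpus)

ids = lookup(stoi, ["我", "想", "看", "电影"])  # "电影" is not in the toy corpus
print(ids)
```

The last token was never seen while building the vocabulary, so it maps to the index of `<unk>` instead of raising an error. That is the behavior `set_default_index` enables in the real `vocab` object.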

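2. Generating data batches and iterators

# Generate data batches and an iterator
from torch.utils.data import DataLoader

def collate_batch(batch):
    label_list, text_list, offsets = [], [], [0]
    for (_text, _label) in batch:
        # label list
        label_list.append(label_pipeline(_label))
        # text list
        processed_text = torch.tensor(text_pipeline(_text), dtype=torch.int64)
        text_list.append(processed_text)
        # offsets: record the length of each sample
        offsets.append(processed_text.size(0))
    label_list = torch.tensor(label_list, dtype=torch.int64)
    text_list = torch.cat(text_list)
    offsets = torch.tensor(offsets[:-1]).cumsum(dim=0)  # cumulative sum along dim: the start position of each sample
    return text_list.to(device), label_list.to(device), offsets.to(device)

# Data loader
# train_iter is a one-shot generator that the vocabulary build has already consumed,
# so a list of (text, label) pairs is passed here instead.
dataloader = DataLoader(list(zip(train_data[0].values, train_data[1].values)),
                        batch_size=8,
                        shuffle=False,
                        collate_fn=collate_batch)

III. Building the model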
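The model in this section consumes exactly the flat token tensor and the offsets that `collate_batch` returns. As a minimal sketch (toy token ids and sizes chosen only for illustration), the snippet below concatenates two samples of different lengths, derives the offsets with the same recipe, and checks that `nn.EmbeddingBag` in its default `mean` mode matches averaging each sample's embeddings by hand.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Two toy samples of different lengths (token ids are made up).
samples = [torch.tensor([1, 2, 3]), torch.tensor([4, 5])]

# Same recipe as collate_batch: lengths -> start positions via cumsum.
lengths = [0] + [s.size(0) for s in samples]
offsets = torch.tensor(lengths[:-1]).cumsum(dim=0)  # start index of each sample
flat = torch.cat(samples)                           # all tokens in one 1-D tensor

bag = nn.EmbeddingBag(num_embeddings=10, embedding_dim=4)  # default mode is "mean"
pooled = bag(flat, offsets)                                # one row per sample

# Manual check: average the embedding rows of each sample.
manual = torch.stack([bag.weight[s].mean(dim=0) for s in samples])
print(offsets.tolist(), tuple(pooled.shape), torch.allclose(pooled, manual, atol=1e-6))
```

Because each sample is identified by its start offset, no padding tokens are needed even though the samples have different lengths.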

# Build the model
from torch import nn

class TextClassificationModel(nn.Module):

    def __init__(self, vocab_size, embed_dim, num_class):
        super(TextClassificationModel, self).__init__()
        self.embedding = nn.EmbeddingBag(vocab_size,    # vocabulary size
                                         embed_dim,     # embedding dimension
                                         sparse=False)
        self.fc = nn.Linear(embed_dim, num_class)
        self.init_weights()

    def init_weights(self):
        initrange = 0.5
        self.embedding.weight.data.uniform_(-initrange, initrange)
        self.fc.weight.data.uniform_(-initrange, initrange)
        self.fc.bias.data.zero_()

    def forward(self, text, offsets):
        embedded = self.embedding(text, offsets)
        return self.fc(embedded)

# Initialize the model
# Define the instance
num_class = len(label_name)
vocab_size = len(vocab)
em_size = 64
model = TextClassificationModel(vocab_size, em_size, num_class).to(device)

# Define the training and evaluation functions
import time

def train(dataloader):
    model.train()  # switch to training mode
    total_acc, train_loss, total_count = 0, 0, 0
    log_interval = 50
    start_time = time.time()

    for idx, (text, label, offsets) in enumerate(dataloader):
        predicted_label = model(text, offsets)
        optimizer.zero_grad()  # reset the gradients
        loss = criterion(predicted_label, label)  # gap between the network output and the true label
        loss.backward()  # backpropagation
        torch.nn.utils.clip_grad_norm_(model.parameters(), 0.1)  # gradient clipping
        optimizer.step()  # update the parameters

        # record accuracy and loss
        total_acc += (predicted_label.argmax(1) == label).sum().item()
        train_loss += loss.item()
        total_count += label.size(0)

        if idx % log_interval == 0 and idx > 0:
            elapsed = time.time() - start_time
            # epoch is the global loop variable set by the training loop
            print('|epoch{:d}|{:4d}/{:4d} batches|train_acc{:4.3f} train_loss{:4.5f}'.format(
                epoch, idx, len(dataloader), total_acc/total_count, train_loss/total_count))
            total_acc, train_loss, total_count = 0, 0, 0
            start_time = time.time()

def evaluate(dataloader):
    model.eval()  # switch to evaluation mode
    total_acc, train_loss, total_count = 0, 0, 0

    with torch.no_grad():
        for idx, (text, label, offsets) in enumerate(dataloader):
            predicted_label = model(text, offsets)
            loss = criterion(predicted_label, label)  # compute the loss
            # record evaluation statistics
            total_acc += (predicted_label.argmax(1) == label).sum().item()
            train_loss += loss.item()
            total_count += label.size(0)

    return total_acc/total_count, train_loss/total_count

IV. Training the model
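The `train` function above clips gradients to a total norm of 0.1 before every optimizer step. The sketch below illustrates what `clip_grad_norm_` does, using a toy linear layer and random input that are unrelated to the article's model: it returns the total gradient norm measured before clipping and rescales the gradients in place so that their combined norm does not exceed the limit.

```python
import torch
from torch import nn

torch.manual_seed(0)

layer = nn.Linear(4, 2)                       # toy layer, not the article's model
loss = layer(torch.randn(8, 4)).pow(2).sum()  # arbitrary scalar loss
loss.backward()

max_norm = 0.1  # same limit as in train()
norm_before = torch.nn.utils.clip_grad_norm_(layer.parameters(), max_norm)

# Recompute the total L2 norm over all parameter gradients after clipping.
norm_after = torch.sqrt(sum(p.grad.pow(2).sum() for p in layer.parameters()))

print(float(norm_before), float(norm_after))
```

With plain SGD and the initial `LR = 5` set in the code that follows, this bounds the length of each parameter update at roughly 5 × 0.1 = 0.5, which may help explain why such a large learning rate remains usable here.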

# Split the dataset and run the model
from torch.utils.data.dataset import random_split
from torchtext.data.functional import to_map_style_dataset

# Hyperparameters
EPOCHS = 10      # number of epochs
LR = 5           # learning rate
BATCH_SIZE = 64  # batch size for training

# Loss function, optimizer, and learning-rate scheduler
criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=LR)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, 1.0, gamma=0.1)
total_accu = None

# Build the dataset (the earlier generator was consumed while building the vocabulary, so create it again)
train_iter = custom_data_iter(train_data[0].values[:], train_data[1].values[:])
train_dataset = to_map_style_dataset(train_iter)

# 80/20 split; taking the second size by subtraction guarantees the two sizes sum to the dataset length
num_train = int(len(train_dataset) * 0.8)
split_train_, split_valid_ = random_split(train_dataset,
                                          [num_train, len(train_dataset) - num_train])

train_dataloader = DataLoader(split_train_, batch_size=BATCH_SIZE, shuffle=True, collate_fn=collate_batch)
valid_dataloader = DataLoader(split_valid_, batch_size=BATCH_SIZE, shuffle=True, collate_fn=collate_batch)

for epoch in range(1, EPOCHS + 1):
    epoch_start_time = time.time()
    train(train_dataloader)
    val_acc, val_loss = evaluate(valid_dataloader)

    # get the current learning rate
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    # lower the learning rate only when validation accuracy falls below the best value so far
    if total_accu is not None and total_accu > val_acc:
        scheduler.step()
    else:
        total_accu = val_acc
    print('-' * 69)
    print('| epoch {:d} | time:{:4.2f}s | valid_acc {:4.3f} valid_loss {:4.3f}'.format(
        epoch, time.time() - epoch_start_time, val_acc, val_loss))
    print('-' * 69)
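The loop above calls `scheduler.step()` only in epochs where validation accuracy falls below the best value seen so far, and with `StepLR` configured with a step size of 1 and `gamma = 0.1`, each such call multiplies the learning rate by 0.1. The pure-Python sketch below replays that rule without PyTorch. The accuracy sequence is made up and not taken from the run in this post, and `simulate_lr` is a hypothetical helper.

```python
def simulate_lr(val_accs, lr=5.0, gamma=0.1):
    """Replay the 'decay only when validation accuracy drops' rule."""
    best = None
    history = []
    for acc in val_accs:
        history.append(lr)          # learning rate used during this epoch
        if best is not None and best > acc:
            lr *= gamma             # corresponds to scheduler.step()
        else:
            best = acc              # new best (or a tie): keep the rate
    return history

# Hypothetical validation accuracies for five epochs.
accs = [0.80, 0.85, 0.84, 0.86, 0.86]
lrs = simulate_lr(accs)
print(lrs)
```

In this made-up sequence only the third epoch is worse than the best so far, so the rate drops once, starting with the fourth epoch. A tie with the best value does not trigger a decay.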

test_acc, test_loss = evaluate(valid_dataloader)
print('模型准确率为:{:5.4f}'.format(test_acc))  # prints the model accuracy

# Test on a given example
def predict(text, text_pipeline):
    with torch.no_grad():
        text = torch.tensor(text_pipeline(text))
        output = model(text, torch.tensor([0]))
        return output.argmax(1).item()

ex_text_str = "还有双鸭山到淮阴的汽车票吗13号的"

model = model.to("cpu")

print("该文本的类别是: %s" % label_name[predict(ex_text_str, text_pipeline)])  # prints the predicted category

Output:

|epoch1| 50/ 152 batches|train_acc0.431 train_loss0.03045

|epoch1| 100/ 152 batches|train_acc0.700 train_loss0.01936

|epoch1| 150/ 152 batches|train_acc0.768 train_loss0.01370

---------------------------------------------------------------------

| epoch 1 | time:1.58s | valid_acc 0.789 valid_loss 0.012

---------------------------------------------------------------------

|epoch2| 50/ 152 batches|train_acc0.818 train_loss0.01030

|epoch2| 100/ 152 batches|train_acc0.831 train_loss0.00932

|epoch2| 150/ 152 batches|train_acc0.850 train_loss0.00811

---------------------------------------------------------------------

| epoch 2 | time:1.47s | valid_acc 0.837 valid_loss 0.008

---------------------------------------------------------------------

|epoch3| 50/ 152 batches|train_acc0.870 train_loss0.00688

|epoch3| 100/ 152 batches|train_acc0.887 train_loss0.00658

|epoch3| 150/ 152 batches|train_acc0.893 train_loss0.00575

---------------------------------------------------------------------

| epoch 3 | time:1.46s | valid_acc 0.866 valid_loss 0.007

---------------------------------------------------------------------

|epoch4| 50/ 152 batches|train_acc0.906 train_loss0.00507

|epoch4| 100/ 152 batches|train_acc0.918 train_loss0.00468

|epoch4| 150/ 152 batches|train_acc0.915 train_loss0.00478

---------------------------------------------------------------------

| epoch 4 | time:1.47s | valid_acc 0.886 valid_loss 0.006

---------------------------------------------------------------------

|epoch5| 50/ 152 batches|train_acc0.938 train_loss0.00378

|epoch5| 100/ 152 batches|train_acc0.935 train_loss0.00379

|epoch5| 150/ 152 batches|train_acc0.932 train_loss0.00376

---------------------------------------------------------------------

| epoch 5 | time:1.51s | valid_acc 0.890 valid_loss 0.006

---------------------------------------------------------------------

|epoch6| 50/ 152 batches|train_acc0.951 train_loss0.00310

|epoch6| 100/ 152 batches|train_acc0.952 train_loss0.00287

|epoch6| 150/ 152 batches|train_acc0.950 train_loss0.00289

---------------------------------------------------------------------

| epoch 6 | time:1.50s | valid_acc 0.894 valid_loss 0.006

---------------------------------------------------------------------

|epoch7| 50/ 152 batches|train_acc0.963 train_loss0.00233

|epoch7| 100/ 152 batches|train_acc0.963 train_loss0.00244

|epoch7| 150/ 152 batches|train_acc0.965 train_loss0.00222

---------------------------------------------------------------------

| epoch 7 | time:1.49s | valid_acc 0.898 valid_loss 0.005

---------------------------------------------------------------------

|epoch8| 50/ 152 batches|train_acc0.975 train_loss0.00183

|epoch8| 100/ 152 batches|train_acc0.976 train_loss0.00176

|epoch8| 150/ 152 batches|train_acc0.971 train_loss0.00188

---------------------------------------------------------------------

| epoch 8 | time:1.67s | valid_acc 0.900 valid_loss 0.005

---------------------------------------------------------------------

|epoch9| 50/ 152 batches|train_acc0.982 train_loss0.00145

|epoch9| 100/ 152 batches|train_acc0.982 train_loss0.00139

|epoch9| 150/ 152 batches|train_acc0.980 train_loss0.00141

---------------------------------------------------------------------

| epoch 9 | time:2.05s | valid_acc 0.901 valid_loss 0.006

---------------------------------------------------------------------

|epoch10| 50/ 152 batches|train_acc0.990 train_loss0.00108

|epoch10| 100/ 152 batches|train_acc0.984 train_loss0.00119

|epoch10| 150/ 152 batches|train_acc0.986 train_loss0.00105

---------------------------------------------------------------------

| epoch 10 | time:1.98s | valid_acc 0.900 valid_loss 0.005

---------------------------------------------------------------------

模型准确率为:0.8996

该文本的类别是: Travel-Query
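A note on reading the log above: `CrossEntropyLoss` averages over the batch by default, so `loss.item()` is already a per-sample mean. `train` and `evaluate` nevertheless add up these batch means and divide by the number of samples. The reported loss is therefore roughly the average loss divided by the batch size, which is why the values look so small. A sketch with made-up batch losses:

```python
# Hypothetical per-batch mean losses, each from a batch of 64 samples.
batch_size = 64
batch_mean_losses = [1.2, 1.0, 0.8]

summed = sum(batch_mean_losses)
total_count = batch_size * len(batch_mean_losses)

logged_loss = summed / total_count           # what the code in this post reports
mean_loss = summed / len(batch_mean_losses)  # average per-sample loss

print(logged_loss, mean_loss, mean_loss / batch_size)
```

Under this reading, a logged `valid_loss` of about 0.005 would correspond to an average cross-entropy of roughly 0.005 × 64 ≈ 0.3. The figure is approximate because the last batch is usually smaller than 64.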

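V. Summary

- Data loading: define a generator function that iterates over texts and labels in pairs, in preparation for the later data processing and loading steps.

- Tokenization and vocabulary:

  - Use jieba for Chinese word segmentation; jieba.lcut splits Chinese text into a list of words.

  - Use torchtext's build_vocab_from_iterator to build the vocabulary from the segmented text, and set the default index to <unk>, which stands for unknown words. This matters for handling words that were not seen when the vocabulary was built.

- Data pipelines: create two processing pipelines, one for text and one for labels.

  - text_pipeline: converts text into indices in the vocabulary.

  - label_pipeline: converts a label into an index.

- Model construction: define a text classification model, TextClassificationModel, consisting of an embedding layer nn.EmbeddingBag and a fully connected layer nn.Linear. nn.EmbeddingBag performs well on variable-length sequences because it does not require explicit padding.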
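One caveat about `label_pipeline`: `label_name` is built with `list(set(...))`, and Python does not guarantee the same iteration order for a set of strings across interpreter runs, so the label-to-index mapping can differ from run to run. If a reproducible mapping is needed, for example when saving and reloading a model, sorting the unique labels is one option. The sketch below uses illustrative label strings; only `Video-Play` and `Travel-Query` appear in this post, and `Music-Play` is made up.

```python
# Illustrative labels; in the article they come from column 2 of train.csv.
labels = ["Video-Play", "Travel-Query", "Video-Play", "Music-Play", "Travel-Query"]

# Sorting the unique labels makes the index assignment deterministic.
label_name = sorted(set(labels))
label_to_index = {name: i for i, name in enumerate(label_name)}

def label_pipeline(label):
    """Map a label string to its integer index."""
    return label_to_index[label]

print(label_name, label_pipeline("Video-Play"))
```

A dictionary lookup also avoids the linear scan that `list.index` performs on every call, although with a handful of classes the difference is negligible.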
