This article shows how to use a hidden layer to solve a nonlinear problem.

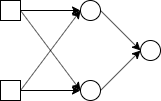

Using a hidden layer to solve a nonlinear problem:

Input layer: Hidden layer: Output layer:
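To see why one hidden layer is enough for a nonlinear problem such as XOR, here is a minimal NumPy sketch of the same input, hidden (ReLU) and output (tanh) structure. The weights `W1`, `b1`, `W2`, `b2` are hand-picked for illustration and are not the values the training script learns. The 0.5 threshold is likewise an assumption.

```python
import numpy as np

def relu(z):
    # Element-wise ReLU activation
    return np.maximum(z, 0.0)

# The four XOR inputs
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=np.float32)

# Hand-picked (not trained) parameters, chosen only for illustration
W1 = np.array([[1.0, 1.0], [1.0, 1.0]])  # input -> hidden
b1 = np.array([0.0, -1.0])
W2 = np.array([[1.0], [-2.0]])           # hidden -> output
b2 = np.array([0.0])

hidden = relu(X @ W1 + b1)          # hidden layer output, shape (4, 2)
output = np.tanh(hidden @ W2 + b2)  # network output, shape (4, 1)

# Threshold at 0.5 (an assumption) to turn outputs into class labels
labels = (output > 0.5).astype(int).ravel()
print(hidden)
print(labels)
```

The hidden layer maps the two inputs `[0, 1]` and `[1, 0]` to the same point, so a single output unit can then separate the classes. No single linear layer can do that on the raw inputs.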

    import numpy as np
    import tensorflow as tf  # TensorFlow 1.x API (placeholders and sessions)

    learning_rate = 0.0001
    n_input = 2   # number of input features
    n_label = 1   # number of output units
    n_hidden = 2  # number of hidden units

    x = tf.placeholder(tf.float32, [None, n_input])
    y = tf.placeholder(tf.float32, [None, n_label])

    weights = {
        'h1': tf.Variable(tf.truncated_normal([n_input, n_hidden], stddev=0.1)),
        'h2': tf.Variable(tf.truncated_normal([n_hidden, n_label], stddev=0.1)),
    }
    biases = {
        'h1': tf.Variable(tf.zeros([n_hidden])),
        'h2': tf.Variable(tf.zeros([n_label])),
    }

    # Hidden layer with ReLU activation, output layer with tanh activation
    layer_1 = tf.nn.relu(tf.add(tf.matmul(x, weights['h1']), biases['h1']))
    y_pred = tf.nn.tanh(tf.add(tf.matmul(layer_1, weights['h2']), biases['h2']))

    # Mean squared error loss, minimized with Adam
    loss = tf.reduce_mean((y_pred - y) ** 2)
    train_step = tf.train.AdamOptimizer(learning_rate).minimize(loss)

    # XOR inputs and labels (labels cast to float32 to match the placeholder)
    X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]]).astype('float32')
    Y = np.array([[0], [1], [1], [0]]).astype('float32')

    # Train the model iteratively
    sess = tf.InteractiveSession()
    sess.run(tf.global_variables_initializer())
    for i in range(1000):
        sess.run(train_step, feed_dict={x: X, y: Y})

    # Compute the predictions
    print(sess.run(y_pred, feed_dict={x: X}))
    # Inspect the hidden layer output
    print(sess.run(layer_1, feed_dict={x: X}))

Result:

    [[ 9.9999961e-05]
     [-1.2277756e-03]
     [-1.2540328e-02]
     [-1.3875227e-02]]
    [[0.         0.        ]
     [0.01804843 0.        ]
     [0.17182861 0.        ]
     [0.18997703 0.        ]]
    [[ 0.0002    ]
     [-0.00111128]
     [-0.01240848]
     [-0.01373422]]

......
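To read the printed predictions, they can be compared with the XOR labels. The sketch below copies the first printed prediction array from the result and the labels from the script. The 0.5 decision threshold is an assumption, since the original does not define one.

```python
import numpy as np

# First prediction array from the printed result
y_pred = np.array([[9.9999961e-05],
                   [-1.2277756e-03],
                   [-1.2540328e-02],
                   [-1.3875227e-02]])
# XOR labels used in the training script
Y = np.array([[0.0], [1.0], [1.0], [0.0]])

# Same loss as the script: mean squared error
mse = float(np.mean((y_pred - Y) ** 2))

# Threshold at 0.5 (an assumption) to obtain class labels
pred_labels = (y_pred > 0.5).astype(int)
accuracy = float(np.mean(pred_labels == Y))

print(mse)
print(pred_labels.ravel())
print(accuracy)
```

All four predictions are close to 0, so only the two inputs labeled 0 come out right. This suggests the run shown had not yet fit XOR after 1000 steps at a learning rate of 0.0001. More iterations or a larger learning rate may help, but that is not verified here.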
