This article covers the Ceph health warning "1 pool(s) have non-power-of-two pg_num" and how it was resolved.

Running ceph -s showed that the cluster status was not OK. The details were as follows:

[ceph-admin@ceph-node1 cluster]$ ceph -w

cluster:

id: a2e7e83b-8319-4d28-957f-27c8d7b62fa8

health: HEALTH_WARN

1 pool(s) have non-power-of-two pg_num
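The warning concerns the value of pg_num itself: Ceph raises it when a pool's pg_num is not a power of two. As a minimal sketch, the check can be reproduced locally with shell arithmetic. The helper name is_power_of_two is hypothetical and is not part of the ceph CLI.

```shell
#!/bin/sh
# is_power_of_two N
# Hypothetical helper (not a ceph command): succeeds when N is a power of two.
is_power_of_two() {
    n=$1
    # Zero and negative values are never powers of two.
    [ "$n" -gt 0 ] || return 1
    # A power of two has exactly one bit set, so n & (n - 1) is 0.
    [ $(( n & (n - 1) )) -eq 0 ]
}

# Try a few candidate pg_num values.
for candidate in 64 100 128; do
    if is_power_of_two "$candidate"; then
        echo "$candidate: power of two"
    else
        echo "$candidate: not a power of two"
    fi
done
```

A value that fails this check, such as 100, is the kind of pg_num that triggers the warning.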

Because this is a newly configured cluster, it has only one pool:

$ sudo ceph osd lspools

1 rbd

Check the number of PGs in the rbd pool:

$ sudo ceph osd pool get rbd pg_num

pg_num: 64

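The pool has 64 PGs. With a 2-replica configuration and 8 OSDs, each OSD ends up with 64 × 2 / 8 = 16 PGs, which is below the minimum of 30 per OSD, so the cluster reports a warning. (64 is itself a power of two; the non-power-of-two warning appears when pg_num is set to a value that is not, such as 100.)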
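The per-OSD arithmetic can be written out as a short sketch. The function name pgs_per_osd is hypothetical, and the calculation assumes PG replicas are spread evenly across all OSDs.

```shell
#!/bin/sh
# pgs_per_osd PG_NUM REPLICAS OSDS
# Hypothetical helper: average number of PG replicas hosted by each OSD,
# assuming an even distribution (integer division).
pgs_per_osd() {
    echo $(( $1 * $2 / $3 ))
}

pgs_per_osd 64 2 8     # prints 16
pgs_per_osd 128 2 8    # prints 32
```

With 64 PGs the result is 16, below the threshold of 30; with 128 PGs it rises to 32.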

Solution: change the PG count of the default rbd pool:

$ sudo ceph osd pool set rbd pg_num 128

set pool 0 pg_num to 128
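The choice of 128 fits the 30-PGs-per-OSD minimum mentioned earlier: it is the smallest power of two that reaches that threshold with 8 OSDs and 2 replicas. The sketch below shows the calculation. The function min_pow2_pg_num is a hypothetical helper, and it only considers this one threshold, not the other factors that go into sizing a production pool.

```shell
#!/bin/sh
# min_pow2_pg_num OSDS REPLICAS MIN_PER_OSD
# Hypothetical helper: smallest power-of-two pg_num for which
# pg_num * REPLICAS / OSDS is at least MIN_PER_OSD.
min_pow2_pg_num() {
    osds=$1
    replicas=$2
    min_per_osd=$3
    pg=1
    # Compare pg * replicas against min_per_osd * osds to avoid
    # rounding errors from integer division.
    while [ $(( pg * replicas )) -lt $(( min_per_osd * osds )) ]; do
        pg=$(( pg * 2 ))
    done
    echo "$pg"
}

min_pow2_pg_num 8 2 30    # prints 128
```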

$ sudo ceph -s

cluster 257faba1-f259-4164-a0f9-1726bd70b05a

health HEALTH_WARN

64 pgs stuck inactive

64 pgs stuck unclean

pool rbd pg_num 128 > pgp_num 64

monmap e1: 1 mons at {bdc217=192.168.13.217:6789/0}

election epoch 2, quorum 0 bdc217

osdmap e52: 8 osds: 8 up, 8 in

flags sortbitwise

pgmap v121: 128 pgs, 1 pools, 0 bytes data, 0 objects

715 MB used, 27550 GB / 29025 GB avail

64 active+clean

64 creating
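The line "pool rbd pg_num 128 > pgp_num 64" in the output above means the two settings no longer match. As a rough sketch, the same comparison can be made on the lines printed by ceph osd pool get, which have the form "pg_num: 64". The helper names value_of and pg_pgp_mismatch are hypothetical.

```shell
#!/bin/sh
# value_of "pg_num: 128"
# Hypothetical helper: strip everything up to and including ": ".
value_of() {
    echo "${1##*: }"
}

# pg_pgp_mismatch PG_LINE PGP_LINE
# Hypothetical helper: succeeds when the two values differ.
pg_pgp_mismatch() {
    [ "$(value_of "$1")" -ne "$(value_of "$2")" ]
}

# On a live cluster the two lines would come from:
#   ceph osd pool get rbd pg_num
#   ceph osd pool get rbd pgp_num
if pg_pgp_mismatch "pg_num: 128" "pgp_num: 64"; then
    echo "pg_num and pgp_num differ"
fi
```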

The status output shows that pgp_num must be changed as well. By default pg_num and pgp_num have the same value, 64 in this case, so both are now set to 128:

$ sudo ceph osd pool set rbd pgp_num 128

set pool 0 pgp_num to 128
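Since the two values are meant to stay equal, the two commands can be wrapped in one function so that neither is forgotten. This is only a sketch: set_pg_and_pgp and the CEPH override variable are hypothetical names introduced here, and the default command is "sudo ceph" as used in this article.

```shell
#!/bin/sh
# set_pg_and_pgp POOL COUNT
# Hypothetical wrapper: sets pg_num, then pgp_num, to the same value.
# CEPH is an assumed override for the command prefix (default: sudo ceph),
# which makes it possible to dry-run with CEPH=echo.
set_pg_and_pgp() {
    pool=$1
    count=$2
    # pgp_num is only changed if the pg_num change succeeded.
    ${CEPH:-sudo ceph} osd pool set "$pool" pg_num "$count" &&
    ${CEPH:-sudo ceph} osd pool set "$pool" pgp_num "$count"
}

# Example (requires a running cluster and admin access):
#   set_pg_and_pgp rbd 128
# Dry run that only prints the arguments:
#   CEPH=echo; set_pg_and_pgp rbd 128
```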

Finally, check the cluster status again. It now reports OK and the warning is resolved:

[ceph-admin@ceph-node1 cluster]$ ceph -s

cluster:

id: a2e7e83b-8319-4d28-957f-27c8d7b62fa8

health: HEALTH_OK

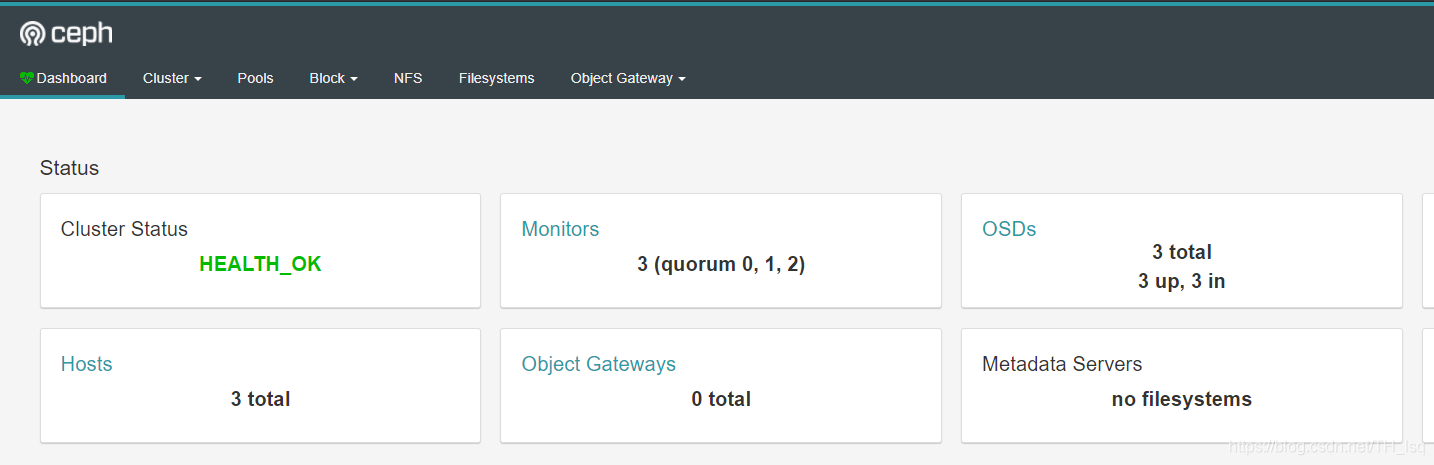
