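This article walks through a computer vision project, Dogs vs. Cats, and is meant as a practical reference for developers working on similar image classification problems.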

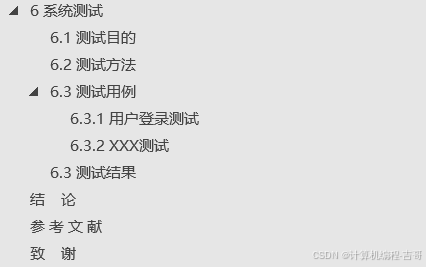

Contents

- I. Dogs vs. Cats
  - 1.1 Introduction
  - 1.2 Dataset
- II. Code
  - dataset.py
  - train.py
  - predict.py
- III. Program output
  - train.py
  - predict.py

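I. Dogs vs. Cats

1.1 Introduction

This is the first post in a computer vision series. It shows how to classify images of cats and dogs with TensorFlow. The project has three parts: dataset.py reads the images and preprocesses them, train.py trains a binary classifier, and predict.py runs the trained model on a test image. GitHub: https://github.com/jx1100370217/dog-cat-master

1.2 Dataset

The dataset consists of 1000 cat and dog pictures collected from the web, 500 of each.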
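As a quick sanity check on these numbers, the sketch below works out how the 1000 images divide into training and validation sets, assuming the 20% validation fraction and batch size of 32 that train.py uses later in this post. The helper name `split_counts` is made up for illustration and is not part of the project.

```python
def split_counts(num_images, validation_fraction, batch_size):
    """Return (training images, validation images, iterations per epoch)."""
    # Same rounding as read_train_sets: truncate the fractional count
    num_valid = int(validation_fraction * num_images)
    num_train = num_images - num_valid
    # Same rounding as train.py: a partial final batch is not counted
    iters_per_epoch = int(num_train / batch_size)
    return num_train, num_valid, iters_per_epoch


print(split_counts(1000, 0.2, 32))  # (800, 200, 25)
```

With these settings, one epoch corresponds to 25 training iterations.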

II. Code:

dataset.py

#!/usr/bin/env python3
# -*- coding: utf-8 -*-
# author: JX
# date: 20181101

import cv2
import os
import glob
from sklearn.utils import shuffle
import numpy as np


def load_train(train_path, image_size, classes):
    images = []
    labels = []
    img_names = []
    cls = []

    print('Going to read training images')
    for fields in classes:
        index = classes.index(fields)
        print('Now going to read {} files (Index: {})'.format(fields, index))
        # '*g' matches any file name ending in "g", e.g. .jpg, .jpeg, .png
        path = os.path.join(train_path, fields, '*g')
        files = glob.glob(path)
        for f1 in files:
            image = cv2.imread(f1)
            if image is None:
                # cv2.imread returns None for files it cannot decode
                continue
            image = cv2.resize(image, (image_size, image_size),
                               interpolation=cv2.INTER_LINEAR)
            # Scale pixel values from [0, 255] to [0, 1]
            image = image.astype(np.float32)
            image = np.multiply(image, 1.0 / 255.0)
            images.append(image)
            # One-hot label: 1.0 at the position of this class
            label = np.zeros(len(classes))
            label[index] = 1.0
            labels.append(label)
            flbase = os.path.basename(f1)
            img_names.append(flbase)
            cls.append(fields)
    images = np.array(images)
    labels = np.array(labels)
    img_names = np.array(img_names)
    cls = np.array(cls)

    return images, labels, img_names, cls


class DataSet(object):

    def __init__(self, images, labels, img_names, cls):
        self._num_examples = images.shape[0]
        self._images = images
        self._labels = labels
        self._img_names = img_names
        self._cls = cls
        self._epochs_done = 0
        self._index_in_epoch = 0

    @property
    def images(self):
        return self._images

    @property
    def labels(self):
        return self._labels

    @property
    def img_names(self):
        return self._img_names

    @property
    def cls(self):
        return self._cls

    @property
    def num_examples(self):
        return self._num_examples

    @property
    def epochs_done(self):
        return self._epochs_done

    def next_batch(self, batch_size):
        """Return the next batch, wrapping to the start once an epoch ends."""
        start = self._index_in_epoch
        self._index_in_epoch += batch_size

        if self._index_in_epoch > self._num_examples:
            # Not enough examples left: start a new epoch from index 0
            self._epochs_done += 1
            start = 0
            self._index_in_epoch = batch_size
            assert batch_size <= self._num_examples
        end = self._index_in_epoch

        return (self._images[start:end], self._labels[start:end],
                self._img_names[start:end], self._cls[start:end])


def read_train_sets(train_path, image_size, classes, validation_size):
    class DataSets(object):
        pass

    data_sets = DataSets()

    images, labels, img_names, cls = load_train(train_path, image_size, classes)
    images, labels, img_names, cls = shuffle(images, labels, img_names, cls)

    # A float validation_size is read as a fraction of the whole dataset
    if isinstance(validation_size, float):
        validation_size = int(validation_size * images.shape[0])

    validation_images = images[:validation_size]
    validation_labels = labels[:validation_size]
    validation_img_names = img_names[:validation_size]
    validation_cls = cls[:validation_size]

    train_images = images[validation_size:]
    train_labels = labels[validation_size:]
    train_img_names = img_names[validation_size:]
    train_cls = cls[validation_size:]

    data_sets.train = DataSet(train_images, train_labels, train_img_names, train_cls)
    data_sets.valid = DataSet(validation_images, validation_labels,
                              validation_img_names, validation_cls)

    return data_sets
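To make the file discovery and labelling in load_train easier to follow, here is a minimal standard-library sketch. It assumes the directory layout implied by the code above (one subfolder per class under the training path) and uses made-up, empty files; `one_hot` and `list_images` are hypothetical helpers, not functions from the project.

```python
import glob
import os
import tempfile


def one_hot(index, num_classes):
    """Return a list with 1.0 at `index` and 0.0 everywhere else."""
    label = [0.0] * num_classes
    label[index] = 1.0
    return label


def list_images(train_path, class_name):
    """Apply the same '*g' pattern as load_train and return sorted file names."""
    pattern = os.path.join(train_path, class_name, '*g')
    return sorted(os.path.basename(p) for p in glob.glob(pattern))


classes = ['dogs', 'cats']
found = {}

with tempfile.TemporaryDirectory() as train_path:
    # Hypothetical file names, created empty just to exercise the pattern
    layout = {
        'dogs': ['dog.1.jpg', 'dog.2.png', 'notes.txt'],
        'cats': ['cat.1.jpeg'],
    }
    for class_name, names in layout.items():
        os.makedirs(os.path.join(train_path, class_name))
        for name in names:
            open(os.path.join(train_path, class_name, name), 'w').close()

    for class_name in classes:
        found[class_name] = list_images(train_path, class_name)
        index = classes.index(class_name)
        print(class_name, one_hot(index, len(classes)), found[class_name])
```

The pattern picks up .jpg, .jpeg and .png files alike, while notes.txt is ignored because its name does not end in "g".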

train.py

#!/usr/bin/env python3
# -*- coding: utf-8 -*-
# author: JX
# date: 20181101

import dataset
import os
import tensorflow as tf
import time
from datetime import timedelta
import math
import random
import numpy as np
from tensorflow import set_random_seed
from numpy.random import seed

# Fix the NumPy and TensorFlow seeds so that runs are repeatable
seed(10)
set_random_seed(20)

batch_size = 32

classes = ['dogs', 'cats']
num_classes = len(classes)

validation_size = 0.2  # fraction of the images held out for validation
img_size = 64
num_channels = 3
train_path = 'training_data'

data = dataset.read_train_sets(train_path, img_size, classes,
                               validation_size=validation_size)

print("Complete reading input data. Will now print a snippet of it")
print("Number of files in Training-set:\t\t{}".format(len(data.train.labels)))
print("Number of files in Validation-set:\t\t{}".format(len(data.valid.labels)))

session = tf.Session()
x = tf.placeholder(tf.float32, shape=[None, img_size, img_size, num_channels], name='x')

y_true = tf.placeholder(tf.float32, shape=[None, num_classes], name='y_true')
y_true_cls = tf.argmax(y_true, axis=1)

# Network hyperparameters
filter_size_conv1 = 3
num_filters_conv1 = 32

filter_size_conv2 = 3
num_filters_conv2 = 32

filter_size_conv3 = 3
num_filters_conv3 = 64

fc_layer_size = 1024


def create_weights(shape):
    return tf.Variable(tf.truncated_normal(shape, stddev=0.05))


def create_biases(size):
    return tf.Variable(tf.constant(0.05, shape=[size]))


def create_convolutional_layer(input, num_input_channels, conv_filter_size, num_filters):
    weights = create_weights(shape=[conv_filter_size, conv_filter_size,
                                    num_input_channels, num_filters])
    biases = create_biases(num_filters)

    # Convolution (stride 1, 'SAME' padding) -> bias -> ReLU -> 2x2 max-pooling
    layer = tf.nn.conv2d(input=input, filter=weights, strides=[1, 1, 1, 1], padding='SAME')
    layer += biases
    layer = tf.nn.relu(layer)
    layer = tf.nn.max_pool(value=layer, ksize=[1, 2, 2, 1],
                           strides=[1, 2, 2, 1], padding='SAME')
    return layer


def create_flatten_layer(layer):
    layer_shape = layer.get_shape()
    num_features = layer_shape[1:4].num_elements()
    layer = tf.reshape(layer, [-1, num_features])
    return layer


def create_fc_layer(input, num_inputs, num_outputs, use_relu=True):
    weights = create_weights(shape=[num_inputs, num_outputs])
    biases = create_biases(num_outputs)

    layer = tf.matmul(input, weights) + biases
    # Note: dropout is hard-coded into the graph, so it is also active
    # when the model is evaluated on validation data or used in predict.py
    layer = tf.nn.dropout(layer, keep_prob=0.7)

    if use_relu:
        layer = tf.nn.relu(layer)
    return layer


layer_conv1 = create_convolutional_layer(input=x,
                                         num_input_channels=num_channels,
                                         conv_filter_size=filter_size_conv1,
                                         num_filters=num_filters_conv1)
layer_conv2 = create_convolutional_layer(input=layer_conv1,
                                         num_input_channels=num_filters_conv1,
                                         conv_filter_size=filter_size_conv2,
                                         num_filters=num_filters_conv2)
layer_conv3 = create_convolutional_layer(input=layer_conv2,
                                         num_input_channels=num_filters_conv2,
                                         conv_filter_size=filter_size_conv3,
                                         num_filters=num_filters_conv3)

layer_flat = create_flatten_layer(layer_conv3)

layer_fc1 = create_fc_layer(input=layer_flat,
                            num_inputs=layer_flat.get_shape()[1:4].num_elements(),
                            num_outputs=fc_layer_size,
                            use_relu=True)
layer_fc2 = create_fc_layer(input=layer_fc1,
                            num_inputs=fc_layer_size,
                            num_outputs=num_classes,
                            use_relu=False)

y_pred = tf.nn.softmax(layer_fc2, name='y_pred')
y_pred_cls = tf.argmax(y_pred, axis=1)

cross_entropy = tf.nn.softmax_cross_entropy_with_logits(logits=layer_fc2, labels=y_true)
cost = tf.reduce_mean(cross_entropy)
optimizer = tf.train.AdamOptimizer(learning_rate=1e-4).minimize(cost)
correct_prediction = tf.equal(y_pred_cls, y_true_cls)
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

# Initialize after the optimizer is built so that Adam's variables are included
session.run(tf.global_variables_initializer())


def show_progress(epoch, feed_dict_train, feed_dict_validate, val_loss, i):
    acc = session.run(accuracy, feed_dict=feed_dict_train)
    val_acc = session.run(accuracy, feed_dict=feed_dict_validate)
    msg = ("Training Epoch {0}---iterations: {1}---Training Accuracy:{2:>6.1%},"
           "Validation Accuracy:{3:>6.1%},Validation Loss:{4:.3f}")
    print(msg.format(epoch + 1, i, acc, val_acc, val_loss))


total_iterations = 0

saver = tf.train.Saver()
# saver.save expects the checkpoint directory to exist already
os.makedirs('./dogs-cats-model', exist_ok=True)


def train(num_iteration):
    global total_iterations

    for i in range(total_iterations, total_iterations + num_iteration):
        x_batch, y_true_batch, _, cls_batch = data.train.next_batch(batch_size)
        x_valid_batch, y_valid_batch, _, valid_cls_batch = data.valid.next_batch(batch_size)

        feed_dict_tr = {x: x_batch, y_true: y_true_batch}
        feed_dict_val = {x: x_valid_batch, y_true: y_valid_batch}

        session.run(optimizer, feed_dict=feed_dict_tr)

        # Once per epoch: report progress and save a checkpoint
        if i % int(data.train.num_examples / batch_size) == 0:
            val_loss = session.run(cost, feed_dict=feed_dict_val)
            epoch = int(i / int(data.train.num_examples / batch_size))

            show_progress(epoch, feed_dict_tr, feed_dict_val, val_loss, i)
            saver.save(session, './dogs-cats-model/dog-cat.ckpt', global_step=i)

    total_iterations += num_iteration


train(num_iteration=10000)
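Two numbers in train.py are easy to miss: the size of the flattened feature vector fed to the first fully connected layer, and the step number of the last checkpoint. The sketch below reproduces both with plain arithmetic. It assumes the settings shown above (64x64 input, three conv layers each followed by 2x2 'SAME' max-pooling, batch size 32, 10000 iterations) and 800 training images, which is what a 0.2 validation split of 1000 images would give. The function names are illustrative only.

```python
import math


def same_pool_output(size, stride=2):
    """Spatial size after max-pooling with 'SAME' padding: ceil(size / stride)."""
    return math.ceil(size / stride)


def flattened_features(img_size, conv_filters):
    """Return (final spatial size, flattened feature count) for the conv stack."""
    size = img_size
    for _ in conv_filters:
        # The stride-1 'SAME' convolution keeps the size; the pooling halves it
        size = same_pool_output(size)
    return size, size * size * conv_filters[-1]


def last_checkpoint_step(num_train, batch_size, num_iterations):
    """Largest iteration index below num_iterations that starts an epoch."""
    iters_per_epoch = int(num_train / batch_size)
    return ((num_iterations - 1) // iters_per_epoch) * iters_per_epoch


print(flattened_features(64, [32, 32, 64]))  # (8, 4096)
print(last_checkpoint_step(800, 32, 10000))  # 9975
```

Under these assumptions the first fully connected layer receives 4096 inputs, and the last checkpoint is written at step 9975, which is consistent with the checkpoint name that predict.py loads.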

predict.py

#!/usr/bin/env python3
# -*- coding: utf-8 -*-
# author: JX
# date: 20181101

import tensorflow as tf
import numpy as np
import os
import glob
import cv2
import sys

image_size = 64
num_channels = 3
images = []

# Read the test image and preprocess it the same way as the training data
path = 'cat.1.jpg'
image = cv2.imread(path)
image = cv2.resize(image, (image_size, image_size), interpolation=cv2.INTER_LINEAR)
images.append(image)
images = np.array(images, dtype=np.uint8)
images = images.astype('float32')
images = np.multiply(images, 1.0 / 255.0)
x_batch = images.reshape(1, image_size, image_size, num_channels)

# Restore the graph structure and the trained weights from the checkpoint
sess = tf.Session()
saver = tf.train.import_meta_graph('./dogs-cats-model/dog-cat.ckpt-9975.meta')
saver.restore(sess, './dogs-cats-model/dog-cat.ckpt-9975')
graph = tf.get_default_graph()

# Look up the tensors by the names given to them in train.py
y_pred = graph.get_tensor_by_name("y_pred:0")

x = graph.get_tensor_by_name("x:0")
y_true = graph.get_tensor_by_name("y_true:0")
# Placeholder labels; y_pred does not depend on them
y_test_images = np.zeros((1, 2))

feed_dict_testing = {x: x_batch, y_true: y_test_images}
result = sess.run(y_pred, feed_dict=feed_dict_testing)

# Same order as `classes` in train.py: index 0 is dog, index 1 is cat
res_label = ['dog', 'cat']
print(res_label[result.argmax()])
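The last two lines of predict.py turn the network output into a label. The following standard-library sketch shows that step in isolation: softmax turns the two raw scores into probabilities, and the index of the largest one selects the label. The scores are made up for illustration, and `softmax` and `predict_label` are hypothetical helpers rather than TensorFlow calls.

```python
import math


def softmax(logits):
    """Convert raw scores to probabilities that sum to 1."""
    top = max(logits)
    # Subtracting the maximum keeps exp() numerically stable
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_label(logits, labels):
    """Return the label with the highest probability, plus that probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


# Label order must match `classes` in train.py: index 0 is dog, index 1 is cat
res_label = ['dog', 'cat']
label, prob = predict_label([0.3, 2.1], res_label)  # made-up scores
print(label, round(prob, 3))
```

Because the mapping relies purely on position, changing the order of `classes` in train.py without updating `res_label` would silently swap the predicted labels.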

III. Program output:

train.py

predict.py
